The initial request from the real estate agent was predictable. She was losing leads. Specifically, web leads from her site and third-party aggregators were arriving between 10 PM and 4 AM. By the time she reviewed them at 7 AM, the prospect had already engaged with three other agents who had automated response systems. Her manual follow-up process had a time-to-contact of over eight hours for any overnight inquiry. The competition’s was under five minutes.

This delay was lethal. Internal data from brokerages shows that a lead contacted within the first five minutes is over 20 times more likely to convert than one contacted after 30 minutes. Her “contact us” form was a digital graveyard for commissions she never earned. We calculated she was forfeiting at least two potential transactions per quarter, a significant revenue leak directly attributable to response latency.

The Failure of Off-the-Shelf Chatbots

Her first attempt involved a generic, script-based chatbot from a popular SaaS platform. The bot was good at one thing: asking for a name and email. It operated on a rigid decision tree. If a user asked a question outside its pre-programmed script, like “Does the property on Elm Street have a fenced yard for a large dog?”, the bot would default to its only programmed function: “I can’t answer that, but what is your email address?”

This approach fails to build trust. It’s a glorified, interactive contact form that provides zero value to the prospect. The user gets frustrated and leaves. The agent gets a low-intent lead with no context. We ripped it out within a week. The goal was never to just capture a contact; it was to qualify the lead and initiate a business process, specifically booking a showing.

Architecting a Qualification Engine, Not a Greeting Bot

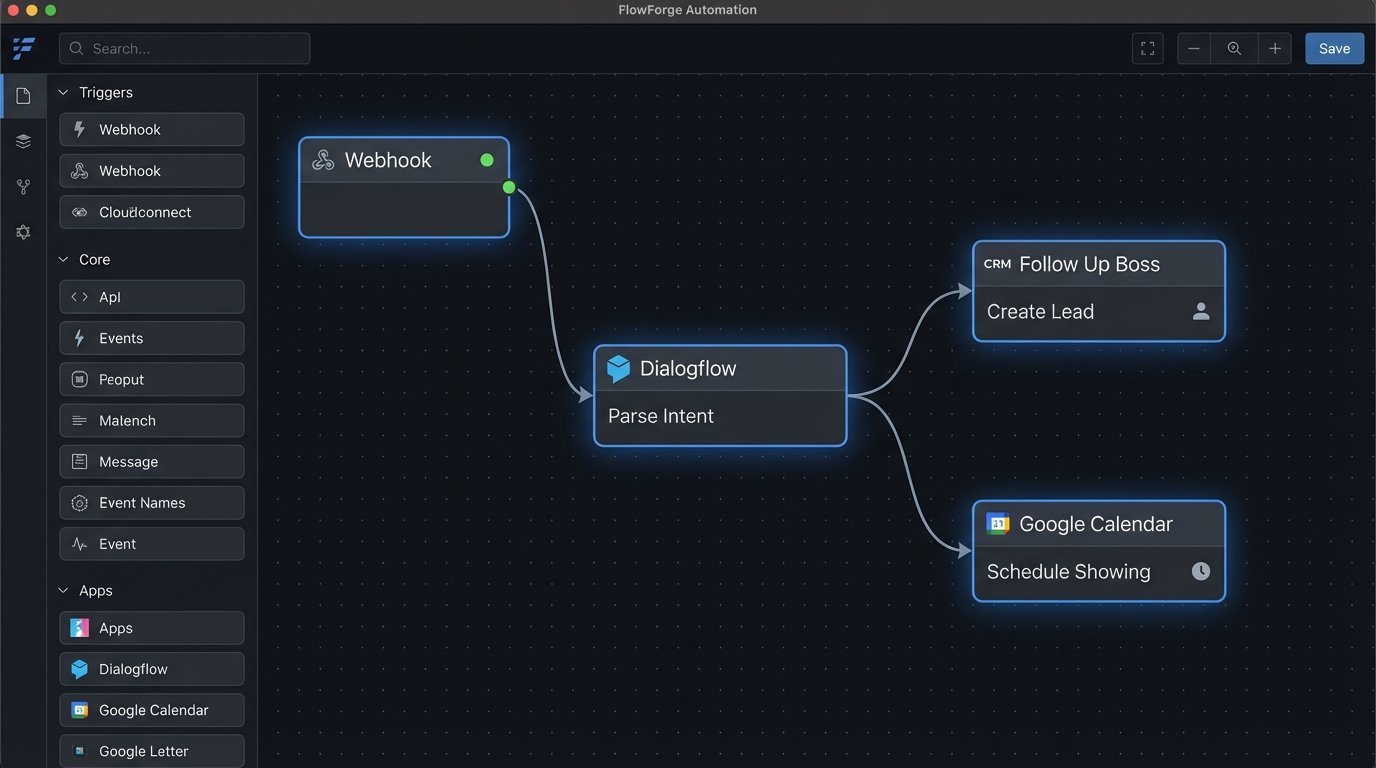

A true virtual assistant needs to function as a junior agent. It must understand context, manage state, and integrate with external systems. Our architecture was built on four pillars: Natural Language Processing (NLP), state management, a robust integration layer, and a logical fallback protocol. Anything less is just a toy.

We selected Google’s Dialogflow for the NLP layer. Its strength is intent mapping. We didn’t care about a friendly greeting. We cared about stripping the user’s unstructured text down to a specific, machine-readable intent. We defined a strict set of intents critical to the business process.

- Find_Property: Triggered by phrases like “I’m looking for a house” or “Show me condos under 500k”. This intent initiates a slot-filling sequence to collect criteria: `property_type`, `bed_count`, `bath_count`, `budget_max`, `location_preference`.

- Schedule_Showing: Triggered by “Can I see this property?” or “Are you free on Tuesday?”. This intent requires an `address` and `datetime` entity.

- Ask_Agent_Question: Handles specific queries about the agent’s experience, commission, or process.

- Human_Handoff: A critical escape hatch triggered by phrases indicating frustration or a complex query the bot is not trained for.
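The slot-filling sequence for `Find_Property` boils down to checking which criteria are still missing and asking for the next one. The following is a minimal sketch under stated assumptions, not the production code: the helper names `missing_slots` and `next_prompt` and the prompt wording are hypothetical, and Dialogflow can also prompt for required parameters on its own.

```python
from typing import Dict, List, Optional

# Slot names taken from the Find_Property intent described above.
REQUIRED_SLOTS = ["property_type", "bed_count", "bath_count",
                  "budget_max", "location_preference"]

# Hypothetical follow-up prompts; the wording is illustrative only.
PROMPTS: Dict[str, str] = {
    "property_type": "What type of property are you looking for?",
    "bed_count": "How many bedrooms do you need?",
    "bath_count": "How many bathrooms do you need?",
    "budget_max": "What is your maximum budget?",
    "location_preference": "Which area would you like to live in?",
}


def missing_slots(parameters: dict) -> List[str]:
    """Return required slots that are absent or empty, in asking order."""
    return [s for s in REQUIRED_SLOTS if parameters.get(s) in (None, "", [])]


def next_prompt(parameters: dict) -> Optional[str]:
    """Return the next question to ask, or None once every slot is filled."""
    missing = missing_slots(parameters)
    return PROMPTS[missing[0]] if missing else None
```

Once `next_prompt` returns `None`, the lead has every criterion attached and is ready to be passed downstream.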

The integration layer was the most complex component. This is where we had to bridge the NLP front-end to the agent’s actual business tools. We built a lightweight service using Python and Flask, deployed as a cloud function, to act as the central webhook fulfillment endpoint for Dialogflow. This service became the brain, executing logic based on the identified intent.
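A rough sketch of how such a fulfillment service might route requests by intent is shown here. It is kept framework-free so it runs on its own; in a Flask app, a route would pass `request.get_json()` to `fulfill` and return the result as JSON. The field names `queryResult`, `intent.displayName`, and `fulfillmentText` follow the Dialogflow ES webhook format; the handler functions and their reply text are placeholders, not the real business logic.

```python
from typing import Callable, Dict


# Placeholder handlers: each receives the Dialogflow queryResult dict
# and returns the reply text. Real handlers would call the CRM,
# calendar, and MLS integrations.
def handle_find_property(query_result: dict) -> str:
    return "Let's narrow down what you are looking for."


def handle_schedule_showing(query_result: dict) -> str:
    return "Let me check the calendar for that property."


def handle_agent_question(query_result: dict) -> str:
    return "Here is what I can tell you about the agent."


def handle_human_handoff(query_result: dict) -> str:
    return "I'm flagging this for the agent so she can follow up personally."


HANDLERS: Dict[str, Callable[[dict], str]] = {
    "Find_Property": handle_find_property,
    "Schedule_Showing": handle_schedule_showing,
    "Ask_Agent_Question": handle_agent_question,
    "Human_Handoff": handle_human_handoff,
}


def fulfill(request_json: dict) -> dict:
    """Route a webhook request to the handler for its matched intent."""
    query_result = request_json.get("queryResult", {})
    intent_name = query_result.get("intent", {}).get("displayName", "")
    # Unknown intents fall through to the handoff handler rather than guessing.
    handler = HANDLERS.get(intent_name, handle_human_handoff)
    return {"fulfillmentText": handler(query_result)}
```

Defaulting unknown intents to the handoff handler is a design choice made for this sketch; it keeps the bot from improvising when the routing table has no match.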

Connecting these different APIs was the main source of project friction. We were forcing a high-pressure data stream from the property database API through the narrow pipe of the chatbot’s conversational context. State management became critical to prevent data loss between turns in the conversation.
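To make the state problem concrete, here is a minimal sketch of a per-session store that merges the slots collected on each turn so earlier answers are not overwritten by empty values. The `SessionStore` class is hypothetical, and the in-memory dictionary is an assumption for illustration only: a cloud function does not guarantee that memory survives between invocations, so a real deployment would need an external store or Dialogflow's own contexts.

```python
from typing import Dict


class SessionStore:
    """Accumulates slot values per conversation (illustrative, in-memory)."""

    def __init__(self) -> None:
        self._sessions: Dict[str, dict] = {}

    def update(self, session_id: str, parameters: dict) -> dict:
        """Merge non-empty parameters from this turn into the session state."""
        state = self._sessions.setdefault(session_id, {})
        for key, value in parameters.items():
            # Skip empty values so a later turn cannot erase an earlier answer.
            if value not in (None, "", []):
                state[key] = value
        return dict(state)

    def get(self, session_id: str) -> dict:
        """Return a copy of everything collected so far for this session."""
        return dict(self._sessions.get(session_id, {}))
```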

The Integration Grind: APIs and Data Mapping

First, we integrated with the agent’s CRM, Follow Up Boss. When the `Find_Property` intent collected all required slots, our Python service would format the data and fire it at the CRM’s API endpoint. This created a new lead with all the qualification criteria pre-filled. The agent would wake up to a fully formed lead, not just a name and an email.

The JSON payload sent to the CRM was structured for immediate actionability. We included not just the lead’s criteria but also a direct link to the full chat transcript for context.

    {
      "person": {
        "firstName": "John",
        "lastName": "Doe",
        "emails": [{"value": "j.doe@example.com"}],
        "phones": [{"value": "555-123-4567"}],
        "source": "Website Chatbot"
      },
      "note": "New lead qualified via VA.\n\nCriteria:\n- Type: Single Family Home\n- Beds: 3+\n- Baths: 2+\n- Budget: $650,000\n- Location: Northwood District\n\nFull Transcript: [URL to transcript log]"
    }
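A payload of this shape could be assembled from the collected slots with a small helper like the one below. This is a sketch only: `build_crm_payload` and its argument names are hypothetical, it mirrors the structure shown above rather than any documented CRM schema, and the actual HTTP call to the CRM is left out.

```python
import json
from typing import Dict


def build_crm_payload(first_name: str, last_name: str, email: str, phone: str,
                      criteria: Dict[str, str], transcript_url: str) -> dict:
    """Build a lead payload with the qualification criteria folded into the note."""
    note_lines = ["New lead qualified via VA.", "", "Criteria:"]
    note_lines += [f"- {label}: {value}" for label, value in criteria.items()]
    note_lines += ["", f"Full Transcript: {transcript_url}"]
    return {
        "person": {
            "firstName": first_name,
            "lastName": last_name,
            "emails": [{"value": email}],
            "phones": [{"value": phone}],
            "source": "Website Chatbot",
        },
        "note": "\n".join(note_lines),
    }


if __name__ == "__main__":
    payload = build_crm_payload(
        "John", "Doe", "j.doe@example.com", "555-123-4567",
        {"Type": "Single Family Home", "Beds": "3+", "Budget": "$650,000"},
        "https://example.com/transcripts/abc",  # placeholder URL
    )
    print(json.dumps(payload, indent=2))
```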

Next was the calendar. For the `Schedule_Showing` intent, we used the Google Calendar API. This required setting up a Google service account with domain-wide delegation, authenticated via OAuth 2.0, so the service could access the agent’s calendar without requiring her to log in repeatedly. Our service would fetch her availability in real time, cross-reference it with the user’s request, and propose available slots. Once confirmed, it would create the event on her calendar, inviting the prospect and including the property address and notes.
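The availability check itself is simple interval arithmetic once the busy periods are known. The sketch below assumes the busy intervals have already been fetched (for example from the Calendar API's free/busy query) and converted to `datetime` pairs; the function name `free_slots` and the 30-minute showing length are assumptions for illustration.

```python
from datetime import datetime, timedelta
from typing import List, Tuple

Interval = Tuple[datetime, datetime]


def free_slots(busy: List[Interval], window_start: datetime, window_end: datetime,
               duration: timedelta = timedelta(minutes=30)) -> List[datetime]:
    """Return start times of back-to-back slots in the window that avoid every busy interval."""
    slots = []
    cursor = window_start
    while cursor + duration <= window_end:
        slot_end = cursor + duration
        # Two intervals overlap when each one starts before the other ends.
        overlaps = any(cursor < b_end and b_start < slot_end
                       for b_start, b_end in busy)
        if not overlaps:
            slots.append(cursor)
        cursor += duration
    return slots
```

For a 9:00 to 11:00 window with one existing appointment from 9:30 to 10:15, this yields the 9:00 and 10:30 slots, which the bot can then offer to the prospect.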

The final, and most expensive, integration was with the MLS data feed via a third-party IDX provider. This API access allowed the bot to answer specific property questions like “Does 123 Main St have central air?”. The bot could perform a live lookup, parse the property data, and provide an immediate, accurate answer. This capability alone set it miles apart from any generic chatbot, as it provided instant value and demonstrated competence.
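Answering a feature question from listing data might look like the sketch below. Everything about the data shape is an assumption: IDX providers differ in their field names, so `cooling`, `fencing`, and `garage_spaces` are hypothetical, as is the phrase-matching approach. The one behavior worth noting is that an unknown value returns `None` so the caller can hand off instead of guessing.

```python
from typing import Dict, Optional, Tuple

# Maps a phrase in the user's question to a (field, label) pair.
# Field names are hypothetical; a real IDX feed will use its own schema.
FEATURE_FIELDS: Dict[str, Tuple[str, str]] = {
    "central air": ("cooling", "Cooling"),
    "fenced yard": ("fencing", "Fencing"),
    "garage": ("garage_spaces", "Garage spaces"),
}


def answer_feature_question(listing: dict, question: str) -> Optional[str]:
    """Answer from listing data, or return None when the data does not say."""
    text = question.lower()
    for phrase, (field, label) in FEATURE_FIELDS.items():
        if phrase in text:
            value = listing.get(field)
            if value is None:
                return None  # Unknown: let the caller hand off rather than guess.
            return (f"According to the listing for {listing['address']}, "
                    f"{label} is listed as: {value}.")
    return None
```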

Deployment and The Human Fallback Protocol

We never perform a “big bang” launch. For the first two weeks, the bot ran in a logging-only mode. It processed incoming chats and logged its intended responses without actually sending them to the user. We reviewed these logs daily to refine the intents and entity recognition based on real-world user queries. This training period was critical to achieving the accuracy needed for a live deployment.
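A logging-only mode can be as small as a flag around the send step. The sketch below is illustrative rather than the code we shipped: `deliver`, the `shadow_mode` flag, and the injected `send` callable are all hypothetical names.

```python
import logging
from typing import Callable

logger = logging.getLogger("va.shadow")


def deliver(reply: str, shadow_mode: bool, send: Callable[[str], None]) -> bool:
    """Log the intended reply; only send it to the user when shadow mode is off.

    Returns True if the reply was actually sent.
    """
    logger.info("intended reply: %s", reply)
    if shadow_mode:
        return False
    send(reply)
    return True
```

Because the reply is logged in both modes, the same log stream can be reviewed before and after go-live.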

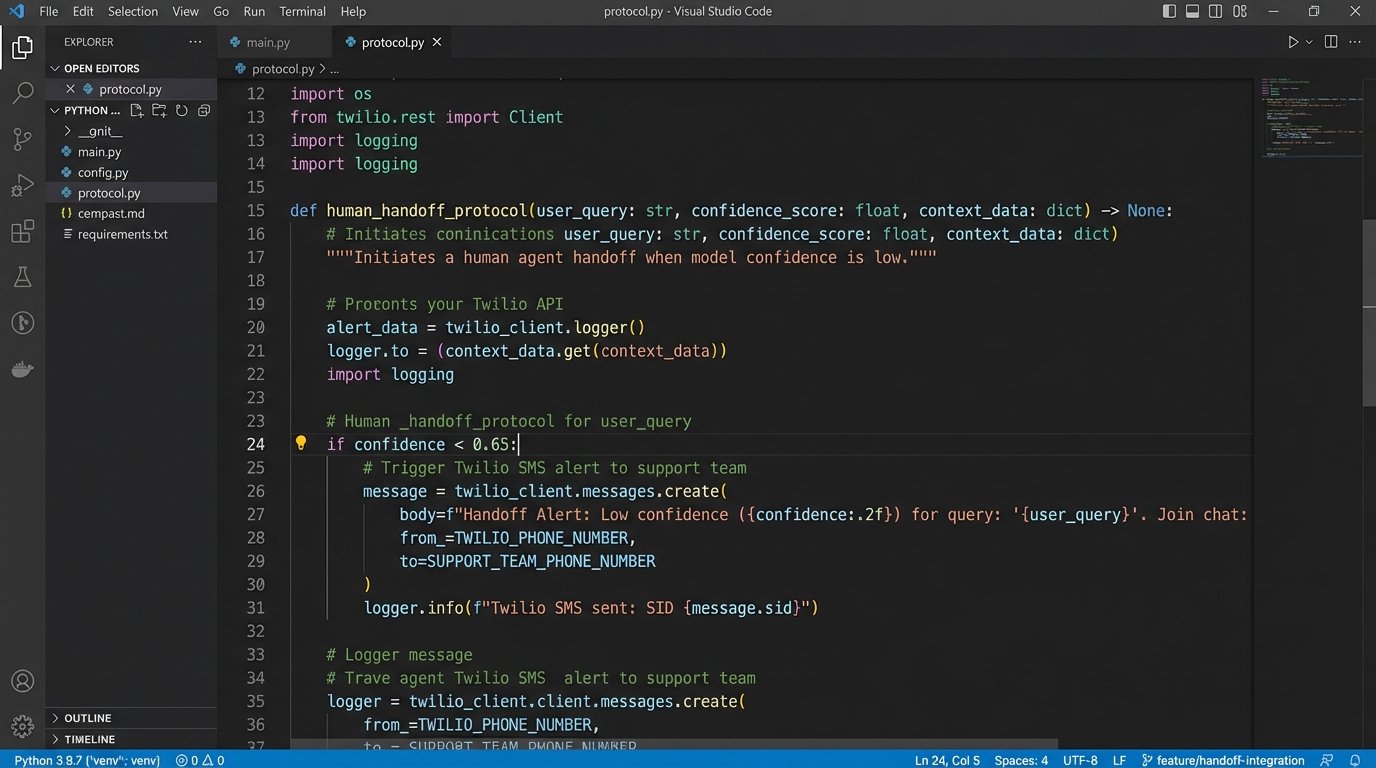

The system’s intelligence isn’t just in what it can answer, but in how it handles failure. We programmed a confidence score threshold. If Dialogflow returned an intent with a confidence score below 0.65, or if the user typed “speak to a human,” the bot wouldn’t invent an answer. It would execute the `Human_Handoff` protocol.
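The handoff decision reduces to one predicate. This is a minimal sketch, assuming the webhook receives Dialogflow's `queryResult` object, which carries `intentDetectionConfidence` and `queryText`; the function name and the single-phrase list are illustrative.

```python
CONFIDENCE_THRESHOLD = 0.65
# Illustrative; a real list would include more variations.
HANDOFF_PHRASES = ("speak to a human",)


def should_hand_off(query_result: dict) -> bool:
    """True when confidence is below the threshold or the user asks for a person."""
    # A missing score is treated as zero confidence, which forces a handoff.
    confidence = query_result.get("intentDetectionConfidence", 0.0)
    text = query_result.get("queryText", "").lower()
    asked_for_human = any(phrase in text for phrase in HANDOFF_PHRASES)
    return confidence < CONFIDENCE_THRESHOLD or asked_for_human
```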

This protocol immediately sent a high-priority SMS to the agent’s phone via the Twilio API. The message contained the user’s name, their query, and a link to the live chat. This allowed the agent, if she happened to be awake, to seamlessly take over the conversation. If she was asleep, it ensured the hottest leads were flagged at the top of her list in the morning. This safety net prevented lead leakage when the automation reached its limits.
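The alert text can be composed separately from the sending step, which keeps it easy to test. In this sketch the function name, the message wording, and the 100-character cap on the quoted query are assumptions; the resulting string would be passed as the `body` argument to Twilio's message-creation call, which is not shown here.

```python
def build_handoff_sms(name: str, query: str, chat_url: str,
                      max_query_chars: int = 100) -> str:
    """Compose a short alert with the prospect's name, their query, and the chat link."""
    if len(query) <= max_query_chars:
        snippet = query
    else:
        # Truncate long queries so the link is never pushed out of view.
        snippet = query[:max_query_chars - 3] + "..."
    return f'HANDOFF: {name} asked: "{snippet}" Live chat: {chat_url}'
```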

Measuring the Results: From Data Leak to Revenue Engine

The impact was tracked with hard metrics. We didn’t care about “engagement” or other soft KPIs. We cared about qualified leads, appointments booked, and closed deals.

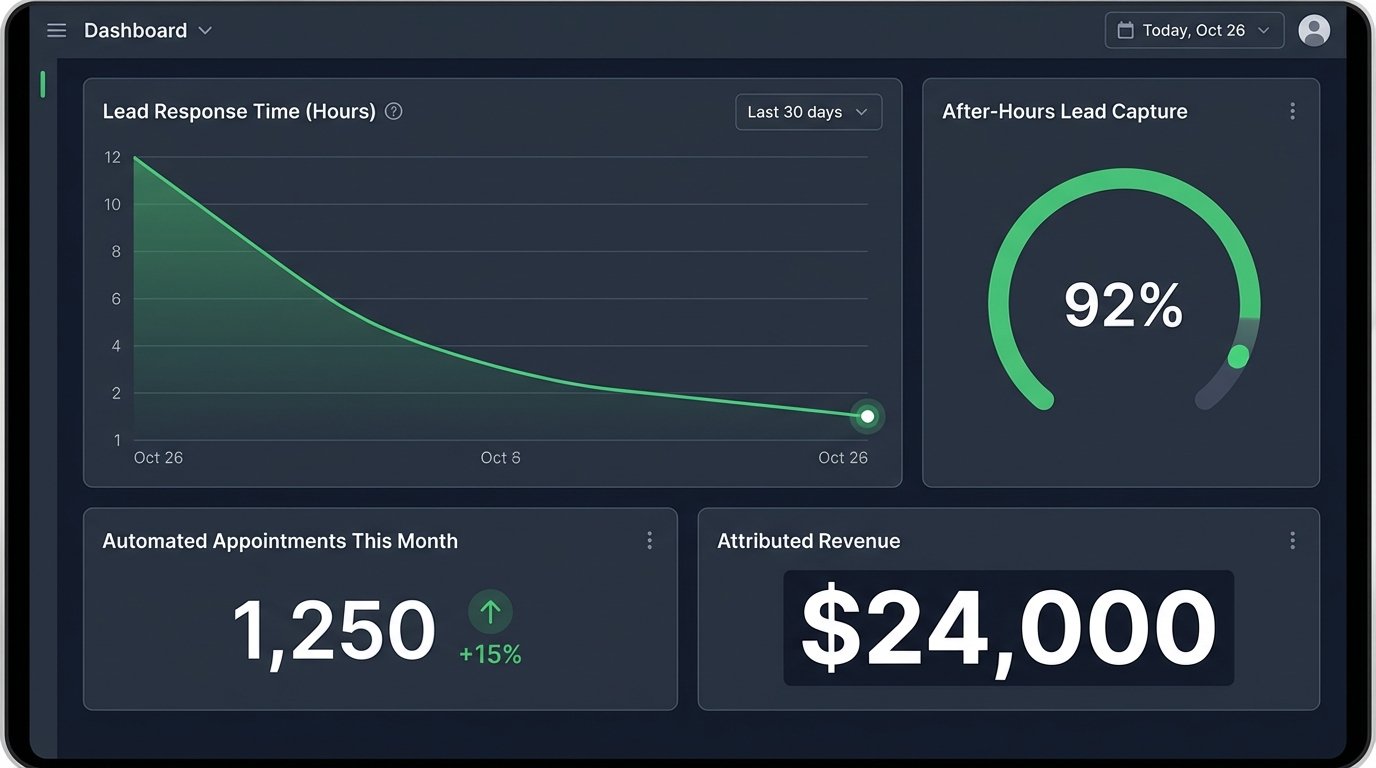

Within the first 60 days, the results were clear.

- After-Hours Lead Capture: The system successfully captured and qualified 92% of all inquiries that came in between 10 PM and 7 AM. The remaining 8% were either spam or triggered the human handoff. Before the deployment, the capture rate for this window was near zero.

- Lead Response Time: The average time-to-first-contact for an overnight lead dropped from 8.5 hours to 4 seconds. The entire qualification process was typically completed in under three minutes.

- Automated Appointments: The virtual assistant independently booked an average of six property showings per week directly into the agent’s calendar. These were high-intent prospects who had their initial questions answered and were ready for the next step.

The Financial Impact

The most important metric is revenue. In the first quarter of operation, the agent closed two transactions that originated entirely through the after-hours virtual assistant. With an average commission of $12,000 per transaction, that’s $24,000 in new revenue directly attributable to the system.

The total cost for development, hosting, and API subscriptions was approximately $7,500. The investment paid for itself in less than two months. More importantly, it established a scalable system that can handle many concurrent inquiries without leaving any of them waiting for a human to wake up.
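For readers who want the arithmetic spelled out, the first-quarter return works out as follows. This is a simple worked example using only the figures quoted above; it treats the approximate $7,500 as exact and ignores any ongoing costs beyond it.

```python
def roi(revenue: float, cost: float) -> float:
    """Return on investment as a fraction: net gain divided by cost."""
    return (revenue - cost) / cost


transactions = 2
avg_commission = 12_000   # dollars per transaction, from the figures above
total_cost = 7_500        # development, hosting, and API subscriptions

revenue = transactions * avg_commission   # 24,000
net_gain = revenue - total_cost           # 16,500
first_quarter_roi = roi(revenue, total_cost)  # 2.2, i.e. 220%
```

Put differently, a single $12,000 commission already covers the $7,500 cost, with $4,500 to spare.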

This project was not about deploying a chatbot. It was about architecting a business process automation engine. It required a deep understanding of the agent’s sales funnel and the technical grit to connect disparate systems that were never designed to speak to each other. The result is a machine that doesn’t just answer questions, but actively generates revenue while the human expert sleeps.

The system still requires maintenance. We review low-confidence conversations once a month to train new intents. API keys must be rotated and dependencies updated. It is not a passive appliance. It is an active piece of infrastructure that needs supervision. But for this agent, it permanently solved the financial drain of overnight lead decay.