Stop Fearing the Model. Start Directing It.

The conversation around AI in customer support is broken. It fixates on agent replacement, a narrative driven by people who have never closed a Tier 3 ticket at 2 AM. The real threat isn’t a large language model taking your job. The threat is the agent in the next pod who uses that model to handle three times the workload with half the errors.

Fear is a useless response to a tool. Your job isn’t to compete with the AI. It’s to chain it to your workflow and make it do the grunt work you hate. We’re not talking about sentient robots. We’re talking about sophisticated pattern-matchers that can strip, sort, and summarize data faster than any human. Ignoring this is a critical career error.

The Grind Is the Target, Not the Human

Let’s be blunt about the daily reality for a support agent. It’s a swamp of repetition: password resets, order status inquiries, and “Have you tried turning it off and on again?” These tasks are cognitive dead weight. They require minimal critical thinking but burn maximum time, dragging down your Average Handle Time (AHT) and First Call Resolution (FCR) metrics.

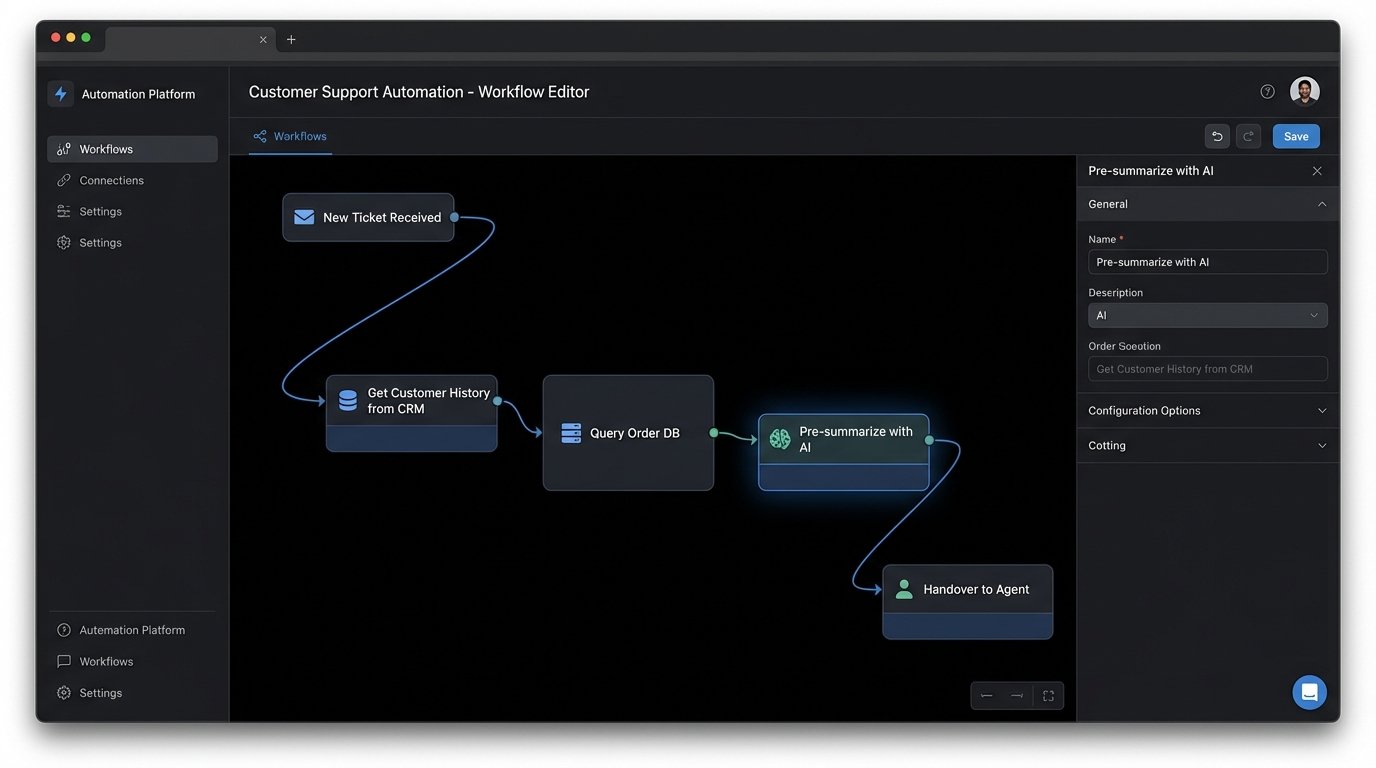

The typical workflow involves juggling three or four different systems. You have the CRM for customer history, a separate knowledge base for SOPs, the ticketing platform itself, and probably a direct messaging app for escalations. Each context switch is a point of friction and a potential source of error. AI is built to gut this exact inefficiency.

It doesn’t need to answer the customer directly. Its primary function should be to serve you, the agent. It acts as an intelligent pre-processor. Before you even read a ticket, the AI should be forced to perform a series of automated checks. It can identify the user by their email, query the database for their last five orders, and ping a status API to check for known outages related to their account type.

This is not replacement. This is augmentation. The machine does the data fetching, leaving you to do the actual thinking.

AI as a Data Aggregation Engine

A support ticket is never just about the initial question. It’s about the entire customer history. The most time-consuming part of a complex issue is digging through previous interactions, trying to build a coherent timeline from a mess of old tickets and chat logs. This is where you inject an LLM to do the heavy lifting.

Summarization is its most practical, immediate application. You can pipe a customer’s interaction history into a model and command it to produce a chronological summary with key events. This can turn 20 minutes of reading into well under a minute of scanning. You are not asking the AI for a solution. You are commanding it to organize the raw data so you can find the solution faster.

Consider this basic Python script using a generic API endpoint. It’s a crude example of how you can take a disorganized blob of text, like a chat transcript, and force a model to distill it into something useful. We’re just sending a payload with a clear instruction set.

    import requests


    def summarize_ticket_history(api_key, transcript_text):
        """
        Sends a long transcript to a language model for summarization.

        This is a conceptual example. Do not use in production without proper error handling.
        """
        # Placeholder endpoint: substitute your provider's real URL.
        api_url = "https://api.some_llm_provider.com/v1/completions"
        headers = {
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        }
        # The prompt text is flush left so no stray indentation reaches the model.
        prompt = f"""
    Analyze the following customer support transcript.
    Provide a bulleted list summarizing the key events in chronological order.
    Extract the customer's primary unresolved issue.
    Identify any mentioned product SKUs or order numbers.

    Transcript:
    ---
    {transcript_text}
    ---
    """
        data = {
            "model": "text-davinci-003",  # Or whatever model you have access to
            "prompt": prompt,
            "max_tokens": 250,
            "temperature": 0.2,  # We want factual, not creative, summaries
        }
        # json= serializes the payload; timeout keeps a hung request from blocking forever.
        response = requests.post(api_url, headers=headers, json=data, timeout=30)

        # Basic check, a real implementation needs more robust logic
        if response.status_code == 200:
            return response.json()['choices'][0]['text'].strip()
        else:
            return f"Error: Received status code {response.status_code}"


    # Example Usage:
    # long_transcript = "..."  # This would be fetched from your CRM/ticketing system
    # summary = summarize_ticket_history("YOUR_API_KEY", long_transcript)
    # print(summary)

The code itself is simple. The important part is the prompt design. You are instructing the machine with precision. You are not asking it to “help.” You are telling it to “extract,” “identify,” and “summarize.” This is the fundamental shift in mindset. You are the operator.

This whole process is about forcing a firehose of unstructured data through the eye of a needle. The AI model is the needle, and your prompt engineering skill determines the quality of what comes out the other side.
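One way to tighten the commands further is to demand machine-readable output and refuse anything else. The sketch below assumes a hypothetical three-key JSON schema (`timeline`, `unresolved_issue`, `order_numbers`) and a hard-coded sample reply standing in for a real model response. Nothing here is tied to a specific provider.

```python
import json

# Hypothetical schema: the keys we insist the model returns.
REQUIRED_KEYS = {"timeline", "unresolved_issue", "order_numbers"}


def build_extraction_prompt(transcript_text: str) -> str:
    """Build a prompt that asks for JSON only, with an explicit key list."""
    return (
        "Extract facts from the customer support transcript below.\n"
        "Respond with a single JSON object and nothing else, using exactly these keys:\n"
        '  "timeline": list of strings, key events in chronological order\n'
        '  "unresolved_issue": string, the primary open problem\n'
        '  "order_numbers": list of strings, every SKU or order number mentioned\n'
        "Transcript:\n---\n"
        f"{transcript_text}\n---\n"
    )


def parse_extraction(raw_reply: str) -> dict:
    """Reject any reply that is not a JSON object with the required keys."""
    try:
        parsed = json.loads(raw_reply)
    except json.JSONDecodeError as exc:
        raise ValueError(f"Model reply is not valid JSON: {exc}") from exc
    if not isinstance(parsed, dict):
        raise ValueError("Model reply must be a JSON object.")
    missing = REQUIRED_KEYS - parsed.keys()
    if missing:
        raise ValueError(f"Model reply is missing keys: {sorted(missing)}")
    return parsed


# Stand-in for a model response; a real one would come back from your API call.
sample_reply = (
    '{"timeline": ["Customer reported a failed payment", "Agent issued a retry link"], '
    '"unresolved_issue": "Payment still failing", '
    '"order_numbers": ["ORD-4471"]}'
)
extraction = parse_extraction(sample_reply)
print(extraction["unresolved_issue"])
```

If the model wanders off into chatty prose, `parse_extraction` raises instead of passing garbage downstream. That is the operator mindset in code form.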

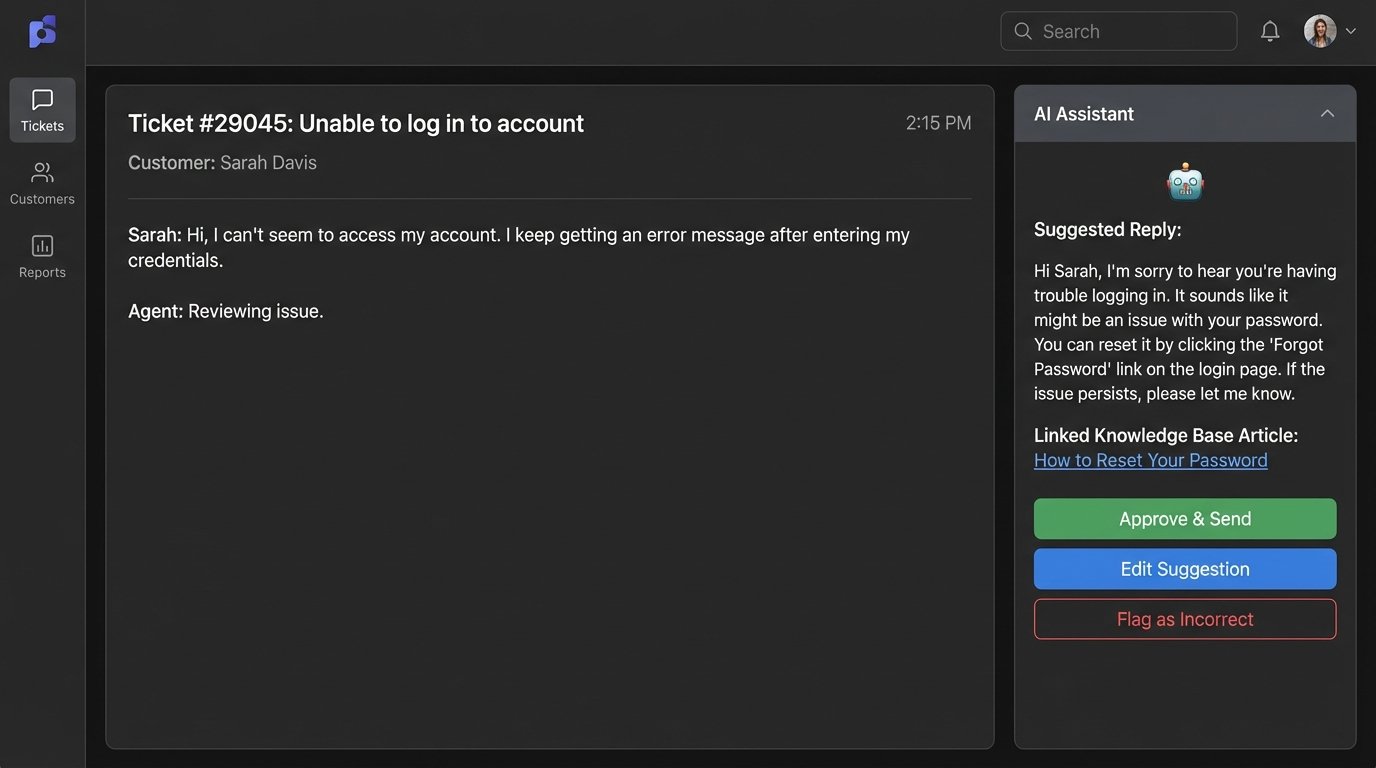

From Agent to AI Auditor

The next logical step is to invert the relationship. Instead of just consuming AI-generated summaries, the senior agent’s role evolves into validating and correcting the AI’s output. The system suggests a reply or a diagnosis, and the human provides the final logic-check. This creates a powerful feedback loop.

When an AI suggests an incorrect knowledge base article, the agent doesn’t just ignore it. They flag it. They link the correct article. This action can be captured as labeled feedback. Fed back into fine-tuning or article ranking, it gradually encodes the agent’s expertise into the system, making its suggestions more accurate. The agent is no longer just closing tickets. They are an active participant in building a more efficient automation system.
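Capturing that flag can be as simple as appending one JSON line per correction. This is a bare-bones sketch: the field names and the `record_correction` helper are assumptions for illustration, and an in-memory buffer stands in for whatever log file or queue your team actually uses.

```python
import io
import json
from datetime import datetime, timezone


def record_correction(stream, ticket_id, suggested_article, correct_article, agent_id):
    """Append one correction as a JSON line to any writable text stream."""
    record = {
        "ticket_id": ticket_id,
        "suggested_article": suggested_article,  # what the AI proposed
        "correct_article": correct_article,      # what the agent linked instead
        "agent_id": agent_id,
        "flagged_at": datetime.now(timezone.utc).isoformat(),
    }
    stream.write(json.dumps(record) + "\n")
    return record


# In-memory buffer for the demo; in practice this would be a file opened in append mode.
buffer = io.StringIO()
logged = record_correction(
    buffer,
    ticket_id="T-88213",
    suggested_article="KB-0107",
    correct_article="KB-0412",
    agent_id="agent-42",
)
print(buffer.getvalue())
```

One line per correction keeps the log trivially appendable and easy to load later as a labeled dataset.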

This moves the agent up the value chain. Your worth is no longer measured solely by how many tickets you can close per hour. It’s measured by your ability to improve the system that helps everyone else close tickets faster. You become a subject-matter expert who governs the machine, correcting its course and refining its performance. Your institutional knowledge becomes the asset that trains the AI.

This is how you secure your position. You become the human firewall for bad AI outputs.

The Unspoken Costs and Technical Debt

Integrating these systems is not a weekend project. It’s a minefield of technical and financial hurdles. The API calls for powerful models are a constant drain on the budget. Every summary, every suggested reply, every sentiment analysis has a metered cost. Without strict controls and monitoring, you can burn through thousands of dollars before you see any return.
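A crude spend guard shows what "strict controls" can look like. Every number below is an assumption: the price per 1,000 tokens is made up, and the four-characters-per-token estimate is only a rough rule of thumb for English text. Check your provider's actual pricing and tokenizer before trusting any of it.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a call would push spend past the daily cap."""


class SpendTracker:
    """Tracks estimated API spend against a daily budget (all figures hypothetical)."""

    def __init__(self, daily_budget_usd, price_per_1k_tokens_usd, chars_per_token=4):
        self.daily_budget_usd = daily_budget_usd
        self.price_per_1k_tokens_usd = price_per_1k_tokens_usd
        self.chars_per_token = chars_per_token  # rough heuristic, not a real tokenizer
        self.spent_usd = 0.0

    def estimate_cost(self, prompt_text, max_output_tokens):
        """Estimate worst-case cost: prompt tokens plus the full output allowance."""
        prompt_tokens = len(prompt_text) / self.chars_per_token
        total_tokens = prompt_tokens + max_output_tokens
        return total_tokens / 1000 * self.price_per_1k_tokens_usd

    def charge(self, prompt_text, max_output_tokens):
        """Record the estimated cost, or refuse the call if it would break the budget."""
        cost = self.estimate_cost(prompt_text, max_output_tokens)
        if self.spent_usd + cost > self.daily_budget_usd:
            raise BudgetExceeded(
                f"Call would cost ~${cost:.4f}; only "
                f"${self.daily_budget_usd - self.spent_usd:.4f} left today."
            )
        self.spent_usd += cost
        return cost


tracker = SpendTracker(daily_budget_usd=0.06, price_per_1k_tokens_usd=0.02)
transcript = "x" * 4000  # stands in for a 4,000-character chat log
first_cost = tracker.charge(transcript, max_output_tokens=250)
print(f"Estimated cost of one summary: ${first_cost:.4f}")
```

Call `charge` before every request. When it raises, the summary waits until tomorrow or someone approves more budget.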

Data security is another massive problem. You cannot send personally identifiable information or sensitive customer data to a public third-party API without thinking through the consequences. This forces a choice between expensive, privacy-focused enterprise plans or the even more complex path of deploying open-source models on your own infrastructure. Both options are wallet-drainers and require specialized engineering talent.
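At a bare minimum, you can scrub the obvious identifiers before a transcript leaves your network. The sketch below is deliberately naive: two regular expressions for email addresses and phone-like digit runs, both written for this example. Real PII detection needs far more than this, so treat it as a first filter, not a compliance control.

```python
import re

# Illustrative patterns only; they will miss plenty of real-world formats.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholder tags."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text


sample = "Reach me at ana@example.com or 555-867-5309 about order ORD-4471."
print(redact(sample))
```

Short identifiers like the order number survive, because the phone pattern needs at least nine digits and separators in a row. That matters: the model still needs the order number to build a useful summary.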

Then there’s the integration itself. You have to build a bridge between the AI and your existing systems. Most companies run on a patchwork of legacy software, CRMs with poorly documented APIs, and homegrown tools. Getting a modern AI to talk to a 10-year-old database is a brutal engineering challenge. It requires custom middleware, robust error handling, and a deep understanding of systems that the original developers have long since forgotten.
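The "robust error handling" part usually starts with retries. Below is a small sketch of retry with exponential backoff, written for this article rather than taken from any library. The `flaky_lookup` function is a made-up stand-in for a legacy system that fails twice before answering, and the `sleep` parameter is injectable so the demo does not actually wait.

```python
import time


def call_with_retries(operation, max_attempts=4, base_delay=0.5, sleep=time.sleep):
    """Call operation(), retrying on connection problems with doubling delays."""
    last_error = None
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except (ConnectionError, TimeoutError) as exc:
            last_error = exc
            if attempt == max_attempts:
                break
            # Delays grow as base_delay * 1, 2, 4, ...
            sleep(base_delay * 2 ** (attempt - 1))
    raise RuntimeError(f"Gave up after {max_attempts} attempts") from last_error


# Hypothetical legacy backend that fails twice, then responds.
attempts = {"count": 0}


def flaky_lookup():
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise ConnectionError("legacy database refused the connection")
    return {"customer_id": "C-1001"}


recorded_delays = []  # capture the delays instead of really sleeping
result = call_with_retries(flaky_lookup, sleep=recorded_delays.append)
print(result, recorded_delays)
```

Only connection-style errors are retried. A malformed query or a permissions failure should surface immediately, because retrying it just wastes time.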

The project looks simple on a slide deck. The reality is a slow, painful process of mapping data fields and debugging API authentication failures.
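Field mapping, at least, is easy to picture. Here is a toy version, assuming an invented legacy schema (`CUST_EMAIL_ADDR`, `CUST_NM`, `LAST_ORD_DT`) that has to be translated into the names the rest of your pipeline expects. Your real column names will be different, and probably worse.

```python
# Hypothetical mapping from legacy column names to normalized field names.
FIELD_MAP = {
    "CUST_EMAIL_ADDR": "email",
    "CUST_NM": "name",
    "LAST_ORD_DT": "last_order_date",
}


def normalize_record(legacy_row, field_map=FIELD_MAP):
    """Rename known fields, trim stray whitespace, and report anything unmapped."""
    normalized = {}
    unmapped = []
    for key, value in legacy_row.items():
        target = field_map.get(key)
        if target is None:
            unmapped.append(key)  # surface it instead of silently dropping it
            continue
        normalized[target] = value.strip() if isinstance(value, str) else value
    return normalized, sorted(unmapped)


legacy_row = {
    "CUST_EMAIL_ADDR": " ana@example.com ",
    "CUST_NM": "Ana Ruiz",
    "LAST_ORD_DT": "2023-11-02",
    "LEGACY_FLAG_7": "Y",
}
record, unknown_fields = normalize_record(legacy_row)
print(record, unknown_fields)
```

Returning the unmapped keys is the useful part. Every mystery column is a question for whoever still remembers how the old system works.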

Adapt or Become Redundant

The fear of AI is misplaced. It’s a distraction from the real issue. The nature of skilled technical work is changing. It’s shifting away from manual, repetitive tasks and toward system management and strategic oversight. The agent who refuses to adapt will be out-competed, not by an AI, but by a peer who learned how to leverage it.

The goal is not to work against the machine. The goal is to offload 80% of the cognitive clutter onto the AI so you can focus your entire expertise on the 20% of problems that actually require a human brain. Stop looking at AI as a competitor. Start looking at it as a powerful, stupid, and endlessly patient intern that you can command to do all the boring work for you.