The Transaction Coordinator’s Hell: A Case Study in Forced Sanity

Data entry errors in a real estate transaction are not trivial. A single misplaced digit in a parcel number or a wrong closing date can derail a six-figure deal, triggering legal headaches and costing the agency its commission. The team we walked into was bleeding from a thousand paper cuts. Their process for managing a deal from contract to close was a chain of emails, spreadsheets updated by three different people, and frantic text messages.

The core problem was a complete lack of a single source of truth. The agent, the transaction coordinator (TC), and the broker were all working from their own local versions of reality. This resulted in a measured 18% error rate on critical document fields, defined as a mismatch between the initial contract and the final closing statement. The TC was spending nearly half her day just chasing down correct information and fixing mistakes made by others.

Deconstructing the Failure Points

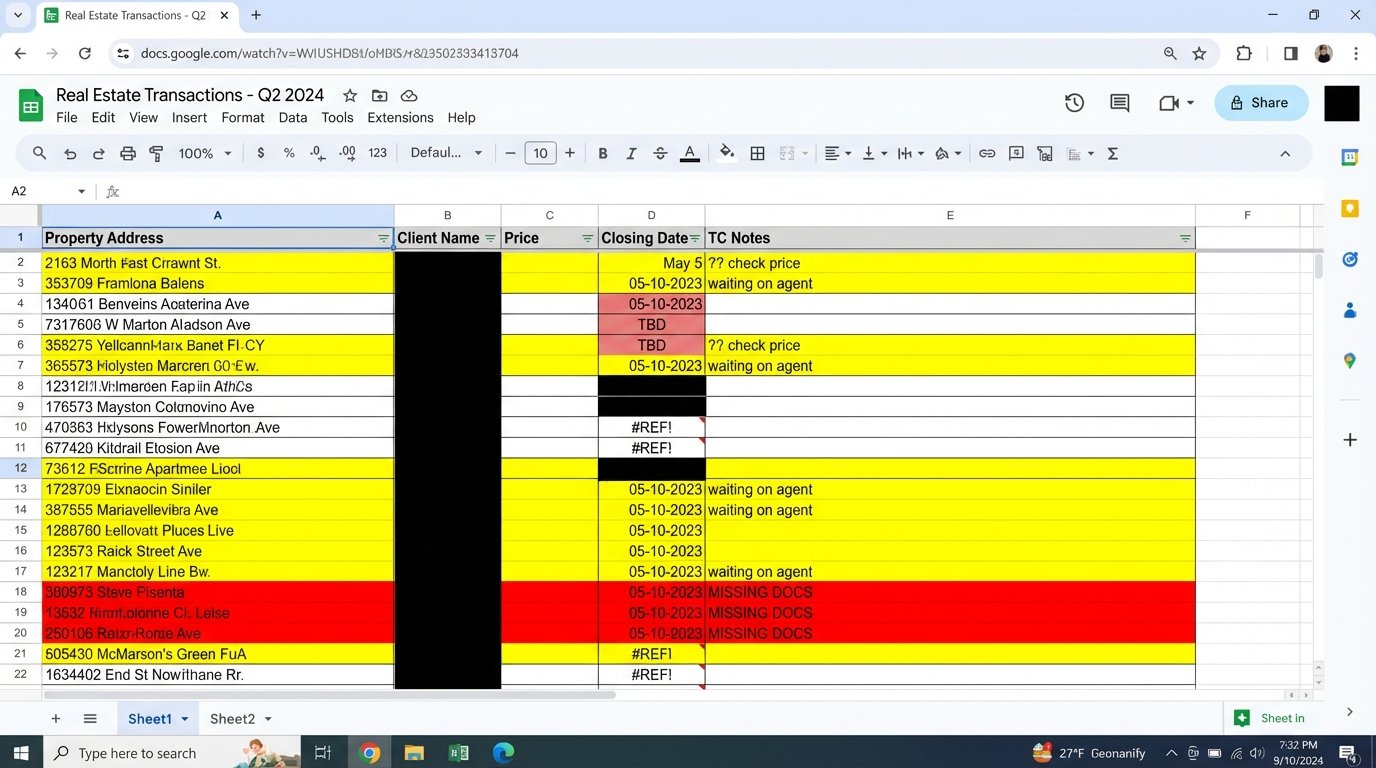

Before building anything, we mapped the existing information flow. It was a mess. An agent would email a PDF of the signed purchase agreement to the TC. The TC would then manually transcribe key data points like names, addresses, prices, and dates into a master Google Sheet. This sheet was the first point of failure. It had no input validation, inconsistent formatting, and was prone to copy-paste errors.

From there, the TC would create a calendar event for the inspection deadline. She would then draft an intro email to the client, the lender, and the title company, often copying information from the chaotic spreadsheet. Each manual data transfer was another opportunity to introduce an error. The process was slow, brittle, and entirely dependent on one person’s ability to spot inconsistencies under pressure.

The second major failure point was communication. Critical updates were lost in long email threads. An agent might text the TC about an addendum, but that information wouldn’t make it into the official file until hours later, if at all. There was no central hub for transaction status, forcing constant, repetitive check-in calls and emails that wasted everyone’s time.

Architecture of the Fix: A Rigid Data Pipeline

We rejected the idea of simply creating a “better spreadsheet.” The goal was to eliminate manual data entry wherever possible and force structured data from the very beginning. The old process was like trying to build an engine with parts being tossed into the room at random. We needed to build a conveyor belt.

The solution was a three-part system built on a stack that prized reliability over bells and whistles.

- Intake: We replaced the email intake with a dedicated form built in Jotform. The form used conditional logic to ensure all required fields were present based on the transaction type. A residential sale required different fields than a land deal. This forced agents to provide complete and correctly formatted data upfront. No more “I’ll send it later” emails.

- Processing & Storage: The Jotform submission triggered a workflow in Make (formerly Integromat). This was the system’s central nervous system. Make’s primary job was to parse the form data and create a new, structured record in an Airtable base. Airtable acted as our database, providing a rigid structure the Google Sheet lacked.

- Execution & Notification: Once the new record was created in Airtable, a cascade of automated actions fired. The Make scenario created a dedicated folder in Google Drive using a standardized naming convention (e.g., `YYYY-MM-DD_PropertyName_ClientName`), created calendar events for all critical deadlines, and sent a templated notification to a dedicated Slack channel for that transaction.

This architecture ensured that from the moment an agent submitted the deal, the core data was locked in and propagated across all necessary platforms without a human touching it.

Injecting Logic Checks for Data Integrity

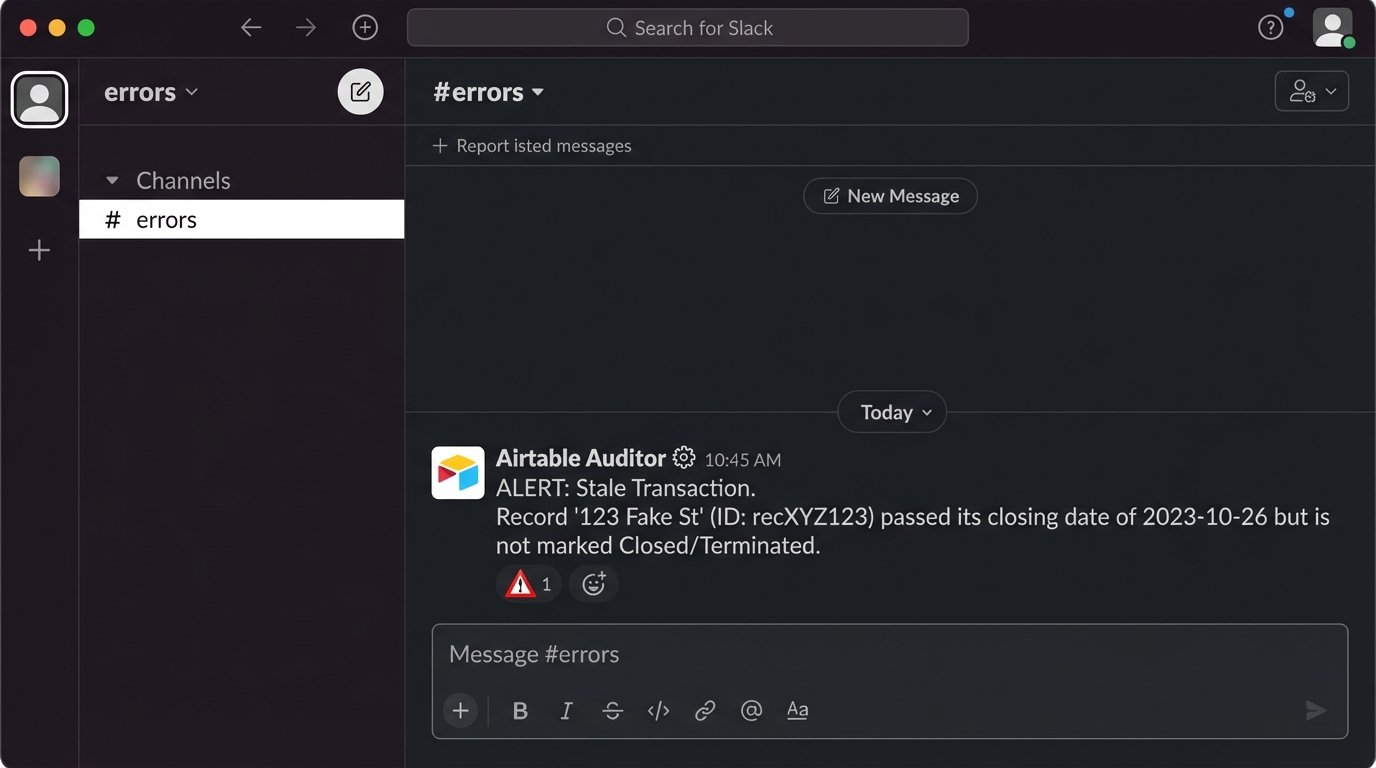

Automation without validation is just a faster way to make mistakes. A key part of the Make workflow was a router that performed sanity checks on the incoming data. For example, it checked if the `closing_date` was logically after the `acceptance_date`. If a check failed, the automation would halt and post an error message to a specific `#errors` channel in Slack, tagging the TC for manual review.

This prevented garbage data from polluting the entire system. Instead of discovering an impossible date two weeks into the transaction, the system flagged it within seconds of submission.

We also built a simple Python script, run as a nightly cron job via a small AWS Lambda function, to audit the Airtable base for orphaned records or deals that had passed their closing date without being marked as “Closed” or “Terminated.” It wasn’t fancy, but it was a necessary backstop.

import os

import requests

from airtable import Airtable

from datetime import date, timedelta

# Basic configuration from environment variables

AIRTABLE_API_KEY = os.environ.get('AIRTABLE_API_KEY')

AIRTABLE_BASE_ID = os.environ.get('AIRTABLE_BASE_ID')

AIRTABLE_TABLE_NAME = 'Transactions'

SLACK_WEBHOOK_URL = os.environ.get('SLACK_WEBHOOK_URL')

def check_stale_transactions():

airtable = Airtable(AIRTABLE_BASE_ID, AIRTABLE_TABLE_NAME, api_key=AIRTABLE_API_KEY)

today = date.today()

records = airtable.get_all(formula="AND({Status} != 'Closed', {Status} != 'Terminated')")

for record in records:

fields = record.get('fields', {})

closing_date_str = fields.get('Closing Date')

record_id = record.get('id')

property_name = fields.get('Property Name', 'Unknown Property')

if closing_date_str:

closing_date = date.fromisoformat(closing_date_str)

if closing_date < today - timedelta(days=2):

message = f"ALERT: Stale Transaction. Record '{property_name}' (ID: {record_id}) passed its closing date of {closing_date_str} but is not marked Closed/Terminated."

payload = {'text': message}

requests.post(SLACK_WEBHOOK_URL, json=payload)

if __name__ == "__main__":

check_stale_transactions()

This script is a simple guardrail. It cross-references the record's status against its closing date and fires a Slack alert if a deal is left in limbo. It's a low-cost way to prevent deals from falling through the cracks.

The Measured Results: KPIs and ROI

The impact was immediate and quantifiable. We tracked metrics for three months post-implementation and compared them to the previous six months of manual data.

Error Rate Reduction: The critical document field error rate, our primary KPI, dropped from 18% to less than 1%. The few errors that remained were from incorrect data being entered into the initial form, not from transcription mistakes during the workflow.

Time Savings: The average time spent by the TC on administrative setup for a new transaction collapsed from 45 minutes to approximately 5 minutes. This time was now spent reviewing the auto-generated file structure and confirming the first Slack notification, not on mind-numbing data entry. This recaptured nearly 15 hours of the TC’s time per week, allowing her to manage a higher volume of transactions or focus on more complex client-facing issues.

Increased Deal Velocity: By automating deadline reminders and centralizing communication in Slack, the average time from contract to close was reduced by 2.5 days. This was a direct result of eliminating communication lag for things like inspection scheduling and appraisal follow-ups.

The Unavoidable Trade-Offs

This system introduced its own set of constraints. The solution is rigid. Agents initially complained about the structured form, preferring the "flexibility" of a quick email. We had to force adoption by making it the only accepted method for submitting a new deal. There was no other way.

The system is also dependent on three different SaaS platforms. An outage at Make, Airtable, or Jotform could bring the entire process to a halt. This is the calculated risk of building on third-party APIs. We mitigated this with clear manual override procedures, but the dependency remains a known weak point.

Finally, the solution wasn't free. The monthly subscription costs for the platforms and the initial development and training time represented a significant upfront investment. It was a wallet-drainer in the short term, but the ROI from preventing even one major deal from collapsing due to clerical error paid for the system multiple times over within the first year.