The Platform is a Lie

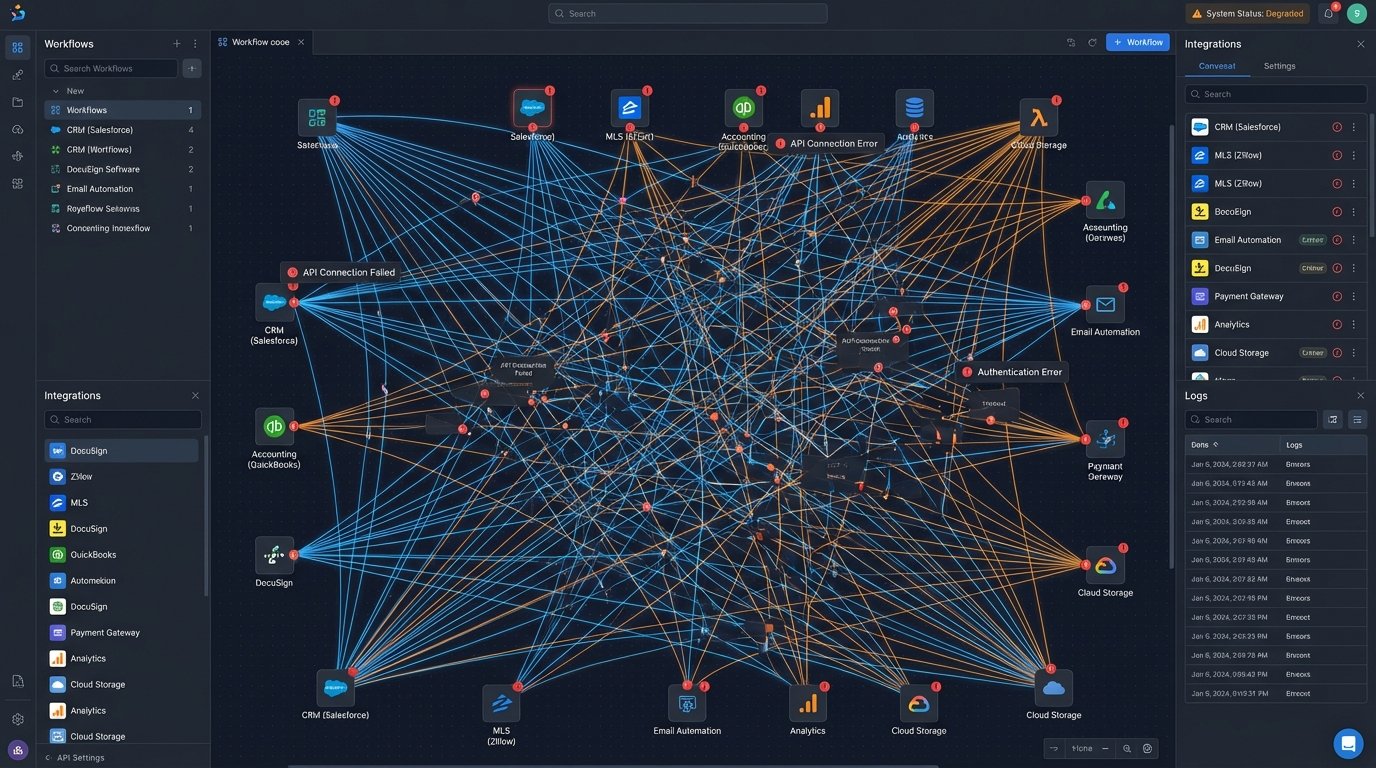

Every virtual brokerage sells the dream of a single pane of glass. They market a unified platform, but what they deliver is a patchwork of third-party services held together with brittle API calls and Zapier workflows. The core architecture is a Frankenstein’s monster of a legacy CRM, a transaction management system bought in an acquisition, and a document service with API rate limits that nobody checked. The result is data drift, latency, and a support team that spends its days force-syncing records manually.

This isn’t a platform. It’s a collection of isolated data islands connected by leaky, poorly-maintained bridges. An agent updates a client’s email in the CRM. That change should propagate to the transaction system, the marketing automation tool, and the e-signature service. Instead, it triggers a cascade of failures because each point-to-point integration has its own failure mode, its own authentication token to refresh, and its own special interpretation of a “contact” object. The whole thing is a time bomb.

Data Integrity is the First Casualty

The fundamental flaw in the current model is the assumption that direct, synchronous API calls can maintain state across a dozen different systems. A single transaction involves the MLS, a CRM, a document storage provider, a compliance engine, and a commission calculation tool. Each system treats itself as the source of truth, so the same record can hold different values in different places. We spend countless engineering cycles building reconciliation scripts to fix data that should have been correct from the start.

Consider the commission calculation process. The logic depends on data from the transaction management system, agent profiles from the CRM, and potentially tiered bonus structures stored in a separate database. A synchronous call to fetch this data is slow and prone to failure. If one service is down, the entire calculation fails. This is not a scalable or resilient architecture. It is a house of cards built on hope and HTTP 200 responses.
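A rough way to see why the synchronous chain is fragile is to multiply availabilities. The sketch below assumes the three dependencies fail independently and that each is up 99.5% of the time; both the figure and the function name are illustrative assumptions, not measurements from any real brokerage.

```python
def chain_availability(per_service: float, services: int) -> float:
    """Availability of a synchronous chain in which every call must succeed."""
    return per_service ** services


# Three dependencies for a commission calculation: the transaction system,
# the CRM, and the bonus-structure database.
# 0.995 is an assumed per-service availability, not a measured value.
combined = chain_availability(0.995, 3)
print(f"{combined:.4f}")  # 0.9851
```

Under those assumptions the calculation is available only about 98.5% of the time, which is worse than any single dependency, and every additional synchronous call lowers the figure further.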

The problem is that we are treating a distributed workflow as a simple, linear process. We verify the data flow from A to B, then from B to C. But we ignore the fact that system D might need data from A, forcing us to build another brittle connection. This approach creates a spiderweb of dependencies that makes any change, any update, a high-risk deployment. We are shipping our org chart, with all its communication breakdowns, directly into our production code.

This entire mess is an expensive way to pretend we have a modern tech stack. The real cost isn’t just the subscription fees for all these disparate services. It’s the payroll for the engineers building glue code and the support staff cleaning up the inevitable data messes. It’s the opportunity cost of not being able to launch new features because the underlying architecture is too fragile to touch.

A Shift from API Calls to Business Events

The alternative is to gut the point-to-point integration model and replace it with an event-driven architecture. Stop thinking about systems “calling” each other. Start thinking about systems publishing facts. When a document is signed, the e-signature system doesn’t need to know which three other applications care about that fact. It just needs to broadcast a single, immutable event: DocumentSigned. This event is a statement of record, a fact that has occurred in the business.

This event gets published to a central message bus, a system like Apache Kafka or Amazon Kinesis. From there, any other service in the brokerage’s ecosystem can subscribe to that stream of events. The compliance engine consumes the DocumentSigned event and updates its checklist. The transaction management system consumes it and moves the file to the next stage. The agent notification service consumes it and sends an alert. Each service reacts independently.

Current systems force data from one API into the next, like pushing a firehose through the eye of a needle. An event bus acts like a nervous system, broadcasting signals that organs react to on their own terms. This decouples the services at runtime. If the notification service is down, the compliance update still happens. The document signature event is durable: it waits in the bus, for as long as the broker’s retention settings allow, until the subscriber comes back online to process it. This provides a level of resilience that is very hard to achieve in a tightly coupled, API-driven world.
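The decoupling can be sketched with a toy in-memory bus. This is not how Kafka or Kinesis are implemented; `InMemoryBus`, its methods, and the `documentId` field are hypothetical names used only to show the idea of an append-only log with one read offset per subscriber.

```python
from collections import defaultdict
from typing import Callable


class InMemoryBus:
    """Toy append-only log with one read offset per subscriber (not a real broker)."""

    def __init__(self) -> None:
        self.log: list[dict] = []
        self.handlers: dict[str, Callable[[dict], None]] = {}
        self.offsets: dict[str, int] = defaultdict(int)

    def subscribe(self, name: str, handler: Callable[[dict], None]) -> None:
        self.handlers[name] = handler

    def publish(self, event: dict) -> None:
        # The publisher only appends; it knows nothing about subscribers.
        self.log.append(event)

    def deliver(self, name: str) -> None:
        """Feed a subscriber every event past its offset; stop at the first failure."""
        handler = self.handlers[name]
        while self.offsets[name] < len(self.log):
            try:
                handler(self.log[self.offsets[name]])
            except Exception:
                return  # offset not advanced, so the event is retried later
            self.offsets[name] += 1


compliance_seen: list[str] = []
notified: list[str] = []
status = {"notifier_up": False}  # simulates an outage of the notification service


def compliance(event: dict) -> None:
    compliance_seen.append(event["eventType"])


def notifier(event: dict) -> None:
    if not status["notifier_up"]:
        raise ConnectionError("notification service is down")
    notified.append(event["eventType"])


bus = InMemoryBus()
bus.subscribe("compliance", compliance)
bus.subscribe("notifier", notifier)
bus.publish({"eventType": "DocumentSigned", "documentId": "DOC-1"})

bus.deliver("compliance")      # succeeds regardless of the notifier outage
bus.deliver("notifier")        # fails; the event stays pending for this subscriber
status["notifier_up"] = True
bus.deliver("notifier")        # picks up the pending event after recovery
```

The compliance handler runs while the notifier is down, and the notifier catches up later from its own offset. A real broker adds partitioning, persistence, and retention limits that this sketch leaves out.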

The Technical Backbone of an Event-Driven Brokerage

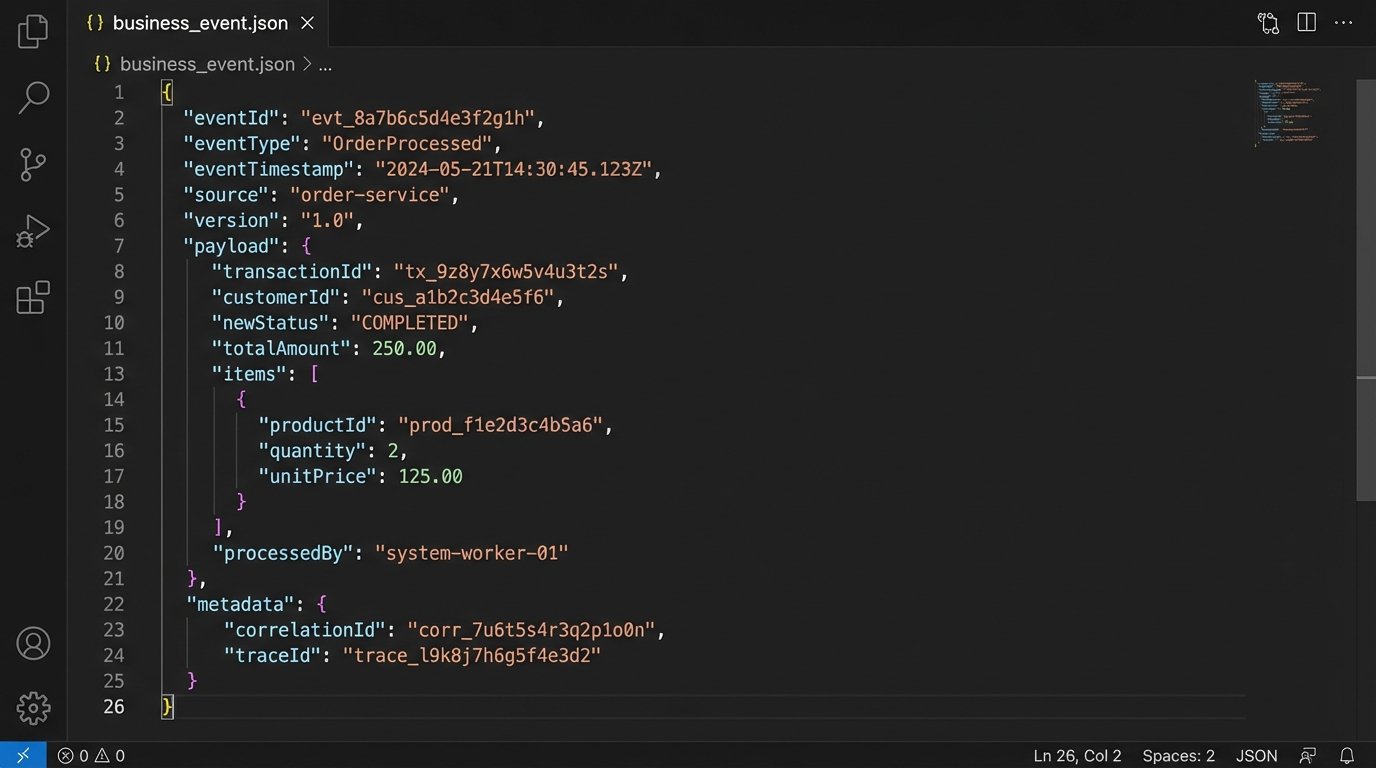

Implementing this requires more than just picking a message broker. It requires a disciplined approach to data contracts. Each event must have a strictly enforced schema. Without it, you are just trading API chaos for messaging chaos. We define these schemas in a format such as Apache Avro or Protocol Buffers and store them in a central schema registry. This forces every service to agree on what a Transaction.Created event actually looks like.

A typical event payload is not a massive data dump. It contains the essential facts of what happened, along with identifiers to allow other services to fetch more details if needed. It is a signal, not a state transfer.

    {
      "eventId": "f47ac10b-58cc-4372-a567-0e02b2c3d479",
      "eventType": "Transaction.StatusUpdated",
      "eventTimestamp": "2023-10-27T10:00:00Z",
      "eventSource": "TransactionManagementSystem-v2",
      "payload": {
        "transactionId": "TXN-98765",
        "priorStatus": "PENDING_INSPECTION",
        "newStatus": "PENDING_APPRAISAL",
        "updatedBy": "agent-jane-doe",
        "property": {
          "mlsId": "12345678",
          "address": "123 Main St, Anytown, USA"
        }
      }
    }
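To make the idea of an enforced contract concrete, here is a minimal standard-library sketch that checks an event like the one above before it is accepted. It is a stand-in for what an Avro or Protobuf schema plus a registry would do, not a replacement for them; the field lists and the `validate` function are assumptions made for illustration.

```python
from datetime import datetime
from uuid import UUID

# Hypothetical contract for Transaction.StatusUpdated: field name -> expected type.
ENVELOPE_FIELDS = {"eventId": str, "eventType": str, "eventTimestamp": str,
                   "eventSource": str, "payload": dict}
PAYLOAD_FIELDS = {"transactionId": str, "priorStatus": str,
                  "newStatus": str, "updatedBy": str}


def _check(section: str, fields: dict, data: dict, errors: list) -> None:
    for name, expected in fields.items():
        if name not in data:
            errors.append(f"{section}.{name}: missing")
        elif not isinstance(data[name], expected):
            errors.append(f"{section}.{name}: expected {expected.__name__}")


def validate(event: dict) -> list:
    """Return a list of contract violations; an empty list means the event conforms."""
    errors: list = []
    _check("envelope", ENVELOPE_FIELDS, event, errors)
    payload = event.get("payload")
    if isinstance(payload, dict):
        _check("payload", PAYLOAD_FIELDS, payload, errors)
    if not errors:
        try:
            UUID(event["eventId"])
            # Replace the trailing "Z" so older Python versions can parse it.
            datetime.fromisoformat(event["eventTimestamp"].replace("Z", "+00:00"))
        except ValueError as exc:
            errors.append(f"envelope: {exc}")
    return errors


event = {
    "eventId": "f47ac10b-58cc-4372-a567-0e02b2c3d479",
    "eventType": "Transaction.StatusUpdated",
    "eventTimestamp": "2023-10-27T10:00:00Z",
    "eventSource": "TransactionManagementSystem-v2",
    "payload": {
        "transactionId": "TXN-98765",
        "priorStatus": "PENDING_INSPECTION",
        "newStatus": "PENDING_APPRAISAL",
        "updatedBy": "agent-jane-doe",
    },
}
print(validate(event))  # []
```

Extra payload fields, such as the nested property object, pass through untouched in this sketch. Whether unknown fields should be tolerated or rejected is a compatibility decision the real schema tooling has to make explicitly.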

Another non-negotiable component is idempotency in the consumer services. A service must be able to process the same event multiple times without repeating its side effects. Network glitches happen. Message brokers can redeliver messages. If your commission calculation service receives the Transaction.Closed event twice and pays out the commission twice, you have built a very expensive failure. Consumers must check whether they have already processed a given event ID before taking action.
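A minimal sketch of that check, assuming an in-memory set of processed event IDs. The `CommissionConsumer` class and the `agentId` and `commission` payload fields are hypothetical; a production consumer would keep the processed IDs in a durable store and record them in the same transaction as the payout.

```python
class CommissionConsumer:
    """Pays a commission at most once per event ID (in-memory sketch)."""

    def __init__(self) -> None:
        self.processed_ids: set = set()   # would be a durable store in production
        self.payouts: list = []

    def handle(self, event: dict) -> bool:
        """Return True if the event caused a payout, False if it was a duplicate."""
        event_id = event["eventId"]
        if event_id in self.processed_ids:
            return False  # redelivery: skip the side effect
        payload = event["payload"]
        self.payouts.append((payload["agentId"], payload["commission"]))
        self.processed_ids.add(event_id)
        return True


consumer = CommissionConsumer()
closed = {
    "eventId": "f47ac10b-58cc-4372-a567-0e02b2c3d479",
    "eventType": "Transaction.Closed",
    "payload": {"agentId": "agent-jane-doe", "commission": 7500.0},
}

first = consumer.handle(closed)    # True: commission paid
second = consumer.handle(closed)   # False: same event ID, nothing happens
```

Deduplicating on the event ID only works if the producer assigns the ID once and reuses it on every retry, which is one more reason to make `eventId` a required field in the contract.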

The trade-off here is upfront complexity. Setting up a Kafka cluster and a schema registry is a heavier lift than writing a Python script to call a REST endpoint. Debugging becomes a different beast. You are no longer tracing a single request through a call stack. You are tracing an event through a distributed system. This requires proper tooling for distributed tracing and log aggregation. It is a serious engineering investment, not a weekend project.

Workflow Composition Over Brute-Force Integration

The payoff for this investment is a shift from simple integration to true workflow composition. With a central stream of business events, building new functionality no longer requires modifying existing, fragile systems. Instead, you build new, independent services that listen for the events they care about and perform a specific function.

Imagine the brokerage wants to create a new, real-time dashboard for agent performance. In the old model, this would require the dashboard to poll the CRM, the transaction system, and maybe even the accounting software. It would be slow, inefficient, and place a heavy load on those source systems. In an event-driven model, you build a new “PerformanceAnalytics” service. This service subscribes to events like Showing.Scheduled, Offer.Submitted, and Transaction.Closed. It processes these events as they happen, calculates KPIs, and pushes the results to a dedicated database that powers the dashboard. The dashboard is fast, real-time, and does not impact the performance of any other system.
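A sketch of what such a service might look like at its core. The class shape, the top-level `agentId` field, and the close-rate KPI are assumptions chosen for illustration; the three event names are the ones mentioned above.

```python
from collections import Counter, defaultdict


class PerformanceAnalytics:
    """Keeps per-agent event counts and derives a simple KPI from them."""

    TRACKED = {"Showing.Scheduled", "Offer.Submitted", "Transaction.Closed"}

    def __init__(self) -> None:
        self.counts = defaultdict(Counter)  # agent ID -> event type -> count

    def handle(self, event: dict) -> None:
        # Events this service does not care about are simply ignored.
        if event["eventType"] in self.TRACKED:
            self.counts[event["agentId"]][event["eventType"]] += 1

    def close_rate(self, agent_id: str):
        """Closed transactions per submitted offer; None when there are no offers."""
        counts = self.counts[agent_id]
        offers = counts["Offer.Submitted"]
        return counts["Transaction.Closed"] / offers if offers else None


analytics = PerformanceAnalytics()
stream = [
    {"eventType": "Showing.Scheduled", "agentId": "agent-jane-doe"},
    {"eventType": "Showing.Scheduled", "agentId": "agent-jane-doe"},
    {"eventType": "Offer.Submitted", "agentId": "agent-jane-doe"},
    {"eventType": "Offer.Submitted", "agentId": "agent-jane-doe"},
    {"eventType": "Document.Uploaded", "agentId": "agent-jane-doe"},  # not tracked
    {"eventType": "Transaction.Closed", "agentId": "agent-jane-doe"},
]
for event in stream:
    analytics.handle(event)

print(analytics.close_rate("agent-jane-doe"))  # 0.5
```

The service never queries the CRM or the transaction system. Everything it knows comes from the stream, so adding it puts no extra load on the source systems.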

Redefining Team Collaboration

This architecture directly impacts how teams work. The transaction coordinator no longer needs to manually check the CRM to see if the agent has uploaded the signed purchase agreement. The system does it for them. A Document.Uploaded event, tagged with the transaction ID, can automatically trigger a task in the TC’s work queue. The single source of truth becomes the event stream itself, not a person’s memory or a manually updated spreadsheet.

Compliance becomes proactive instead of reactive. A compliance service can listen for all relevant events in a transaction’s lifecycle. If an InspectionDeadline.Approaching event is published and there is no corresponding InspectionReport.Received event within 48 hours, the system can automatically flag the transaction and escalate it. This is automated risk management, driven by the actual flow of work, not by a nightly batch job that reads from a stale database.
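The 48-hour rule can be sketched as a pure function over the events seen so far. The flat event shape, with `transactionId` and a timezone-aware `timestamp`, and the `overdue_transactions` name are assumptions. A real service would evaluate this continuously against the stream instead of over a list.

```python
from datetime import datetime, timedelta, timezone

GRACE = timedelta(hours=48)


def overdue_transactions(events: list, now: datetime) -> list:
    """Transaction IDs whose deadline warning is at least 48 hours old with no report."""
    approaching: dict = {}
    received: set = set()
    for event in events:
        txn = event["transactionId"]
        if event["eventType"] == "InspectionDeadline.Approaching":
            approaching[txn] = event["timestamp"]
        elif event["eventType"] == "InspectionReport.Received":
            received.add(txn)
    return sorted(
        txn for txn, warned_at in approaching.items()
        if txn not in received and now - warned_at >= GRACE
    )


t0 = datetime(2023, 10, 27, 10, 0, tzinfo=timezone.utc)
events = [
    {"eventType": "InspectionDeadline.Approaching", "transactionId": "TXN-1", "timestamp": t0},
    {"eventType": "InspectionDeadline.Approaching", "transactionId": "TXN-2", "timestamp": t0},
    {"eventType": "InspectionReport.Received", "transactionId": "TXN-1",
     "timestamp": t0 + timedelta(hours=5)},
]

flagged = overdue_transactions(events, now=t0 + timedelta(hours=49))
print(flagged)  # ['TXN-2']
```

TXN-1 is cleared by its report, while TXN-2 is flagged once the grace period has elapsed with nothing received.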

The entire operational model of the brokerage can be reconfigured by adding new event subscribers. If a new state regulation requires an additional disclosure form, you don’t hack the core transaction management code. You build a small, isolated “StateCompliance-TX” service. It listens for Transaction.Created events where the property state is ‘Texas’ and injects the required document task into the workflow. This is faster to build, safer to deploy, and easier to maintain.
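The core of such a subscriber can be very small. In this sketch the `property.state` field, the task name, and the function name are hypothetical; only the event type and the Texas filter come from the scenario described above.

```python
from typing import Optional


def texas_disclosure_task(event: dict) -> Optional[dict]:
    """Return a document task for new Texas transactions, or None for anything else."""
    if event.get("eventType") != "Transaction.Created":
        return None
    payload = event.get("payload", {})
    # `property.state` is an assumed field; the real contract may name it differently.
    if payload.get("property", {}).get("state") != "Texas":
        return None
    return {
        "transactionId": payload["transactionId"],
        "task": "ADD_STATE_DISCLOSURE_FORM",  # hypothetical task identifier
    }


texas_event = {
    "eventType": "Transaction.Created",
    "payload": {"transactionId": "TXN-98765", "property": {"state": "Texas"}},
}
other_event = {
    "eventType": "Transaction.Created",
    "payload": {"transactionId": "TXN-11111", "property": {"state": "Ohio"}},
}

print(texas_disclosure_task(texas_event))
print(texas_disclosure_task(other_event))  # None
```

Because the function only reads events and emits a task, removing the service later is as low-risk as adding it: nothing in the transaction management code ever referenced it.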

This is the actual future of virtual brokerages. Not prettier dashboards slapped on top of the same old fragmented databases. The real innovation will come from tearing down the point-to-point spaghetti code and building a resilient, composable architecture around a central nervous system of business events. Brokerages that make this architectural investment will be able to adapt and innovate, while those that continue to bolt on more services to their already-strained “platforms” will eventually collapse under the weight of their own technical debt.