Most automation in real estate is a glorified spreadsheet macro. It pulls a list from the MLS, dumps it into a CRM, and sends a canned email. Agents get a firehose of low-intent leads, developers get to check a box for “automation,” and nobody addresses the actual friction in a transaction. The core problem is that this approach treats data as a static asset, something to be collected and stored. This is fundamentally wrong.

The data that matters in a real estate transaction has a half-life measured in hours, not weeks. A property status change, a price reduction, an offer submission: these are events, not just data points. True automation value comes from building systems that react to these events in near real time, chaining processes together across what are normally disconnected platforms. Forget scraping. We should be building state machines that mirror the lifecycle of a transaction.
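To make the state-machine idea concrete, here is a minimal sketch. The status names and the allowed transitions are assumptions for illustration; every MLS has its own vocabulary, and a real lifecycle would have more states.

```python
# Hypothetical listing statuses and allowed transitions; real MLS vocabularies vary.
TRANSITIONS = {
    "Active": {"Pending", "Withdrawn"},
    "Pending": {"Active", "Closed"},
    "Withdrawn": {"Active"},
    "Closed": set(),
}


class ListingLifecycle:
    """Tracks one listing and refuses transitions the lifecycle does not allow."""

    def __init__(self, status: str = "Active"):
        self.status = status
        self.history = [status]

    def apply(self, new_status: str) -> None:
        """Advance the state, or raise if the incoming event makes no sense."""
        if new_status not in TRANSITIONS[self.status]:
            raise ValueError(f"illegal transition: {self.status} -> {new_status}")
        self.status = new_status
        self.history.append(new_status)
```

An event that arrives out of order, such as a 'Closed' listing going back to 'Active', raises instead of silently overwriting a field. That rejection is the point of modeling the lifecycle explicitly.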

Moving Beyond the Poll-and-Pray Model

The standard architecture for pulling property data is built on polling. A script runs on a cron job every hour, or if you’re feeling ambitious, every 15 minutes, hammering the MLS provider’s API for updates. This approach is inefficient, brittle, and always late. You are perpetually reacting to old news. The network overhead is significant, and you run a constant risk of hitting rate limits, which can get your key suspended just when the market is hottest.

This is a brute-force solution to a problem that requires precision. It’s like trying to listen for a whisper by turning on every radio in the house at full volume. You’ll mostly get static and miss the critical signal. The entire poll-and-pray model is a relic.

Embracing Event-Driven Workflows

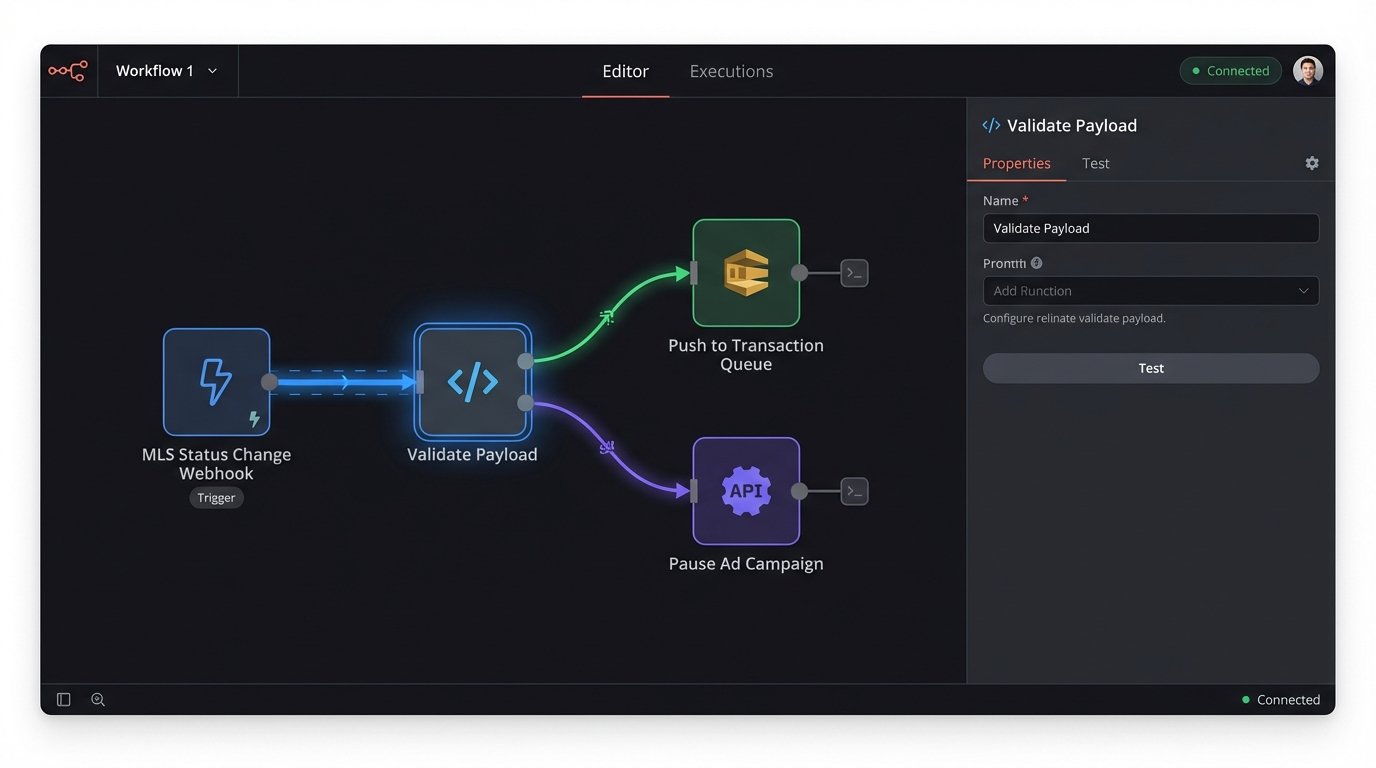

The correct model is event-driven, using webhooks where they exist and creating them where they don’t. When a listing status changes from ‘Active’ to ‘Pending’ in the MLS, a webhook should fire. That single HTTP POST is the trigger. It’s a signal that a specific, material event has occurred. Our job is to build the nervous system that receives that signal and initiates a cascade of logical actions rather than just updating a database field.

A simple listener, maybe a Cloudflare Worker or AWS Lambda function, can act as the central router. Its only job is to catch the incoming webhook, validate its payload and origin, and then trigger the appropriate downstream processes. This is lean, scalable, and cheap to run. You’re paying for compute only when an actual event happens, not for constantly asking “anything new yet?”

The listener logic does not need to be complex. Its primary function is to be a reliable entry point and dispatcher. It authenticates the request, checks for the required fields, and then pushes the validated event payload onto a message queue like SQS or RabbitMQ. This decouples the intake process from the execution logic, which prevents a single slow endpoint from bringing down the entire chain.
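A rough sketch of that listener in Python, under stated assumptions: the sender signs the raw body with HMAC-SHA256 using a shared secret, the payload field names are invented for illustration, and an in-process `queue.Queue` stands in for SQS or RabbitMQ so the example runs locally. A real MLS vendor will define its own signature scheme and payload.

```python
import hashlib
import hmac
import json
import queue

# Hypothetical shared secret and required fields; a real vendor defines its own.
WEBHOOK_SECRET = b"replace-me"
REQUIRED_FIELDS = {"listing_id", "old_status", "new_status", "changed_at"}

# Stand-in for SQS or RabbitMQ so the sketch runs without external services.
event_queue = queue.Queue()


def sign(body: bytes, secret: bytes = WEBHOOK_SECRET) -> str:
    """Hex HMAC-SHA256 of the raw request body."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def handle_webhook(body: bytes, signature: str):
    """Authenticate, validate, enqueue. Returns (HTTP status, message)."""
    # Constant-time comparison avoids leaking signature bytes through timing.
    if not hmac.compare_digest(sign(body).encode(), signature.encode()):
        return 401, "bad signature"
    try:
        payload = json.loads(body)
    except ValueError:  # covers malformed JSON and undecodable bytes
        return 400, "invalid JSON"
    if not isinstance(payload, dict):
        return 400, "expected a JSON object"
    missing = REQUIRED_FIELDS - set(payload)
    if missing:
        return 422, f"missing fields: {sorted(missing)}"
    # Hand off immediately; the slow work happens in a separate consumer.
    event_queue.put(payload)
    return 202, "queued"
```

Returning 202 as soon as the event is queued keeps the endpoint fast regardless of how long the downstream work takes.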

A downstream service then picks up the message and starts the real work. The property status change could trigger several parallel actions. It might call the ad platform’s API to pause the marketing campaigns for that listing. It could update the internal CRM to move the property to a different stage in the pipeline. It could even generate a preliminary document package and stage it for the transaction coordinator. This is process automation, not just data synchronization.
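One way to sketch that fan-out is a small dispatcher that maps a status transition to a list of actions and runs them in parallel. The three action functions here are placeholders that only return strings; real versions would call the ad platform, the CRM, and a document service, and the event field names are assumptions.

```python
from concurrent.futures import ThreadPoolExecutor


# Hypothetical actions; real ones would call external APIs.
def pause_ad_campaigns(event: dict) -> str:
    return f"ads paused for {event['listing_id']}"


def advance_crm_stage(event: dict) -> str:
    return f"CRM stage -> {event['new_status']} for {event['listing_id']}"


def stage_document_package(event: dict) -> str:
    return f"documents staged for {event['listing_id']}"


# Map (old_status, new_status) transitions to the actions they trigger.
HANDLERS = {
    ("Active", "Pending"): [pause_ad_campaigns, advance_crm_stage, stage_document_package],
}


def process_event(event: dict) -> dict:
    """Run every action registered for this transition, isolating failures."""
    actions = HANDLERS.get((event["old_status"], event["new_status"]), [])
    results = {}
    if not actions:
        return results
    with ThreadPoolExecutor(max_workers=len(actions)) as pool:
        futures = {action.__name__: pool.submit(action, event) for action in actions}
    # Leaving the with-block waits for all submitted actions to finish.
    for name, future in futures.items():
        try:
            results[name] = {"ok": True, "detail": future.result()}
        except Exception as exc:  # one failed action must not hide the others
            results[name] = {"ok": False, "detail": str(exc)}
    return results
```

Collecting a per-action result rather than raising on the first failure means a CRM outage does not stop the ad campaigns from being paused.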

The Data Fusion Imperative

Relying solely on MLS data is a critical failure. The MLS is the source of truth for marketing data, but it is not the source of truth for the property itself. Title information, permit history, tax assessments, and lien records live in entirely different systems, usually buried in municipal or county government databases with APIs that look like they were designed in 1998. The real architectural challenge is to fuse these disparate data sources into a single, coherent view of an asset.

This means writing connectors to APIs that are often poorly documented and have unpredictable performance. You will build robust error handling and retry logic because these endpoints will fail. You will write normalization routines to reconcile addresses, parcel numbers, and owner names that are formatted differently across every single system. It’s gritty, unglamorous work that is absolutely essential to building a reliable automation platform.
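Two of those building blocks are small enough to sketch here: a parcel-number normalizer and a retry wrapper with exponential backoff. Both are illustrations under assumptions. Parcel number formats differ by county, so stripping every separator may be too aggressive for some jurisdictions, and the exception type a real client raises on failure will depend on the HTTP library in use.

```python
import re
import time


def normalize_apn(raw: str) -> str:
    """Strip punctuation and whitespace so '123-45-678' and '123 45 678' match."""
    return re.sub(r"[^0-9A-Za-z]", "", raw).upper()


def call_with_retry(fetch, attempts: int = 4, base_delay: float = 0.5, sleep=time.sleep):
    """Call fetch() until it succeeds, doubling the wait after each failure."""
    for attempt in range(attempts):
        try:
            return fetch()
        except ConnectionError:
            if attempt == attempts - 1:
                raise  # out of retries; let the caller log and alert
            sleep(base_delay * 2 ** attempt)
```

The `sleep` parameter is injectable so the backoff schedule can be tested without actually waiting.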

This process is like assembling a high-resolution satellite image from hundreds of smaller photos taken by different cameras at different times. You have to account for distortion, different color balances, and missing pieces, stitching them all together to create a single, accurate picture. Get the alignment wrong, and the entire image is worthless.

Example: Automated Title Red Flagging

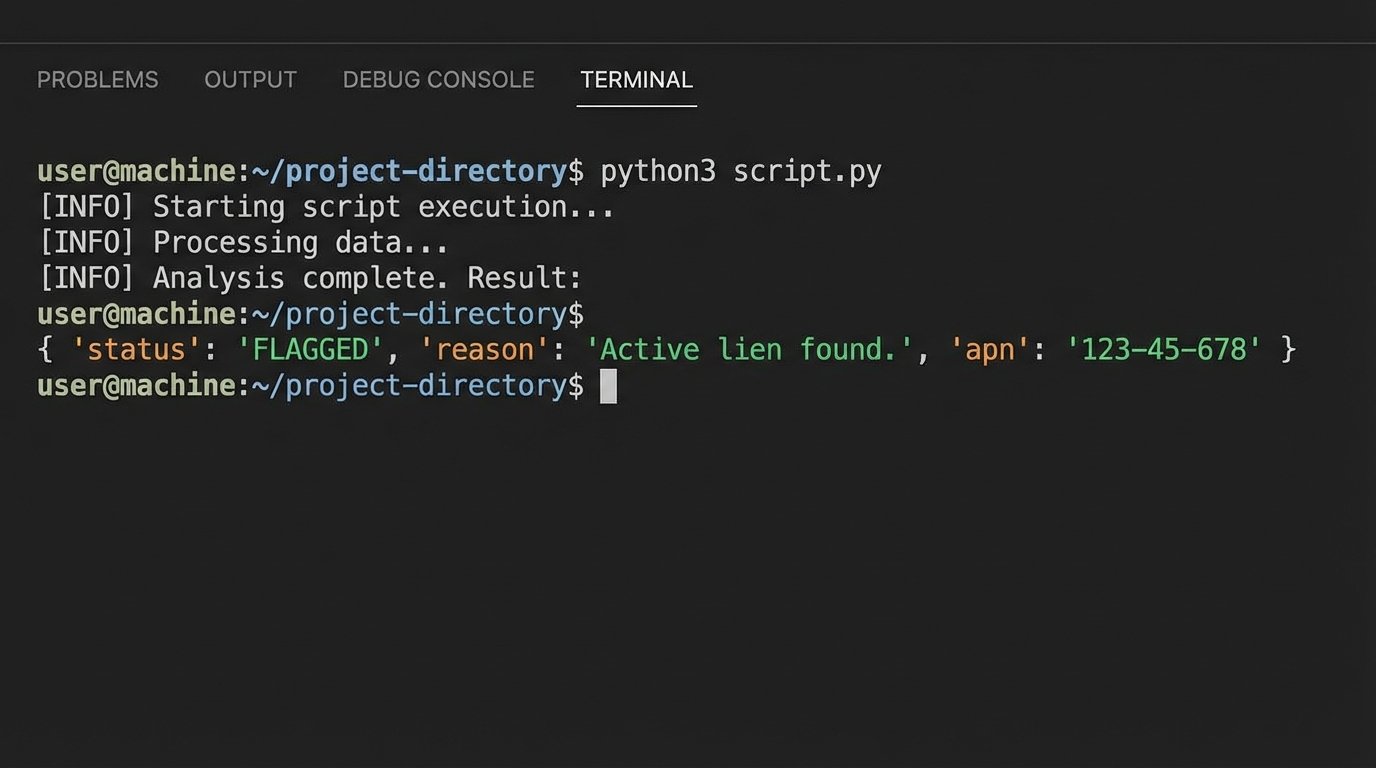

Consider a practical application. A new listing is ingested via an MLS webhook. The system immediately takes the property’s legal description or assessor’s parcel number (APN) and fires off a request to the county recorder’s API. The automation isn’t looking for the full title report. It’s looking for red flags: recent mechanic’s liens, pending litigation notices (lis pendens), or ownership transfers that don’t match the seller’s name on the listing agreement.

Here’s a conceptual Python snippet of what that logic-check might look like using a hypothetical county API client. It’s not about the specific code, but the sequence of operations.

    class APIConnectionError(Exception):
        """Placeholder for the error the hypothetical county client raises."""


    class TitleChecker:
        def __init__(self, county_api_client):
            self.api = county_api_client

        def check_for_liens(self, apn):
            """Queries for recent mechanic's liens against a parcel number."""
            try:
                records = self.api.get_recent_documents(apn=apn, doc_type='LIEN')
                if records and any(rec['status'] == 'ACTIVE' for rec in records):
                    return {'status': 'FLAGGED', 'reason': 'Active lien found.'}
                return {'status': 'CLEAR', 'reason': 'No active liens found.'}
            except APIConnectionError as e:
                # Log the error and handle the connection failure
                return {'status': 'ERROR', 'reason': str(e)}


    # Usage
    # new_listing_apn = '123-45-678'
    # title_check_result = TitleChecker(county_client).check_for_liens(new_listing_apn)
    # if title_check_result['status'] == 'FLAGGED':
    #     alert_compliance_team(new_listing_apn, title_check_result['reason'])

This script doesn’t replace a title company. It provides an early warning system. Finding a lien one hour after listing is a problem that can be solved. Finding it two days before closing is a catastrophe that kills the deal. This automation compresses the feedback loop from weeks to seconds.

The Hard Truths: Cost, Complexity, and Data Integrity

Building this kind of infrastructure is not a weekend project. Access to premium data sources is a serious wallet-drainer. Some county APIs charge per call, and the costs add up quickly. The engineering investment is also substantial. You are not just connecting systems. You are building a resilient, fault-tolerant platform that can handle the messy reality of third-party data.

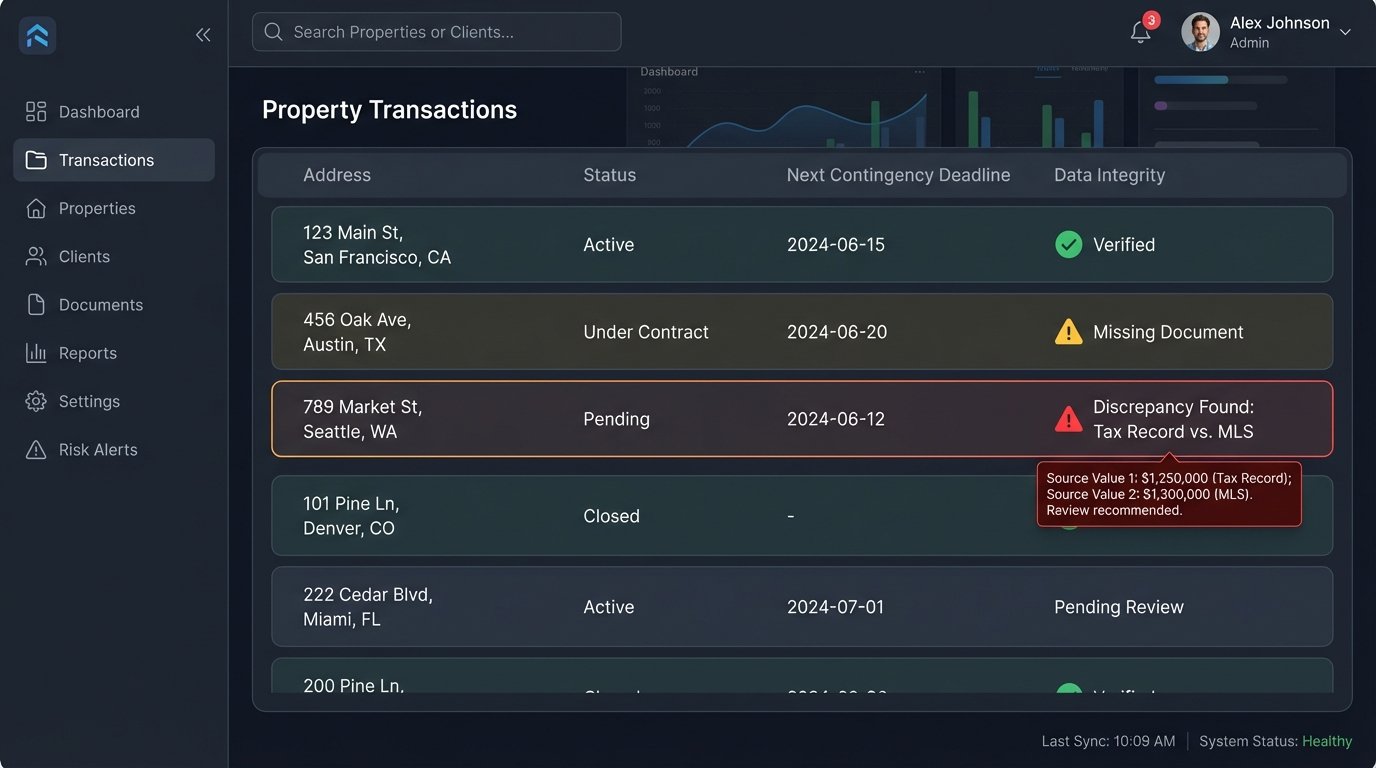

Data integrity will become your obsession. You will have to make architectural decisions about the source of truth. What happens when the MLS says the property has three bathrooms but the last appraisal record from your data provider says it has two? Which source do you trust? You have to build business logic that can weigh the reliability of different data sources and flag discrepancies for human review. Without this, your automation will confidently make bad decisions at scale.
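A minimal sketch of that weighing logic follows. The source names and trust weights are invented for illustration; in practice they would be tuned per field and per provider, and a simple highest-weight rule is only one of several reasonable policies.

```python
# Hypothetical trust weights; real values would be tuned per field and source.
SOURCE_WEIGHTS = {"mls": 0.6, "appraisal": 0.8, "county_assessor": 0.9}


def reconcile(field: str, observations: dict) -> dict:
    """Pick the value from the most trusted source and flag any disagreement."""
    if not observations:
        return {"field": field, "value": None, "needs_review": True, "conflicts": {}}
    best_source = max(observations, key=lambda s: SOURCE_WEIGHTS.get(s, 0.0))
    value = observations[best_source]
    # Anything that disagrees with the chosen value goes to a human.
    conflicts = {s: v for s, v in observations.items() if v != value}
    return {
        "field": field,
        "value": value,
        "source": best_source,
        "needs_review": bool(conflicts),
        "conflicts": conflicts,
    }
```

For the bathroom example, `reconcile("bathrooms", {"mls": 3, "appraisal": 2})` picks the appraisal value under these weights and sets `needs_review` so the discrepancy is not silently resolved.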

Maintenance is another brutal reality. An unannounced API change from a municipal data provider can break a critical workflow. You need comprehensive monitoring and alerting to detect these failures immediately. This isn’t a “set it and forget it” system. It’s a living product that requires constant attention and adaptation.
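One cheap form of that monitoring is a shape check on every response, so a renamed or retyped field raises an alert instead of quietly corrupting downstream data. The expected fields below are assumptions about a hypothetical county documents endpoint, not a real schema.

```python
# Hypothetical expected shape of one record from a county documents endpoint.
EXPECTED_FIELDS = {"doc_id": str, "doc_type": str, "status": str, "recorded_on": str}


def detect_drift(record: dict) -> list:
    """Return readable problems; an empty list means the shape still matches."""
    problems = []
    for name, expected_type in EXPECTED_FIELDS.items():
        if name not in record:
            problems.append(f"missing field: {name}")
        elif not isinstance(record[name], expected_type):
            problems.append(
                f"{name}: expected {expected_type.__name__}, got {type(record[name]).__name__}"
            )
    # New fields are not fatal, but they often signal a version change.
    for name in sorted(record.keys() - EXPECTED_FIELDS.keys()):
        problems.append(f"unexpected field: {name}")
    return problems
```

Feeding a non-empty result into the alerting channel turns a silent breakage into a page within one request.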

The Real Return is in Risk Mitigation

Management often wants to measure the ROI of automation in terms of saved man-hours. That’s the wrong metric. Shaving 20 minutes off an agent’s daily workload is a rounding error. The real return comes from de-risking the transaction process itself.

Every manual data entry point, every checklist item that relies on human memory, every handoff between departments is a potential point of failure. A missed deadline for an inspection contingency can void a contract. An incorrect wire instruction can lead to wire fraud. These are not minor inconveniences. They are five-, six-, or seven-figure mistakes.

API-driven automation, when architected correctly, provides process enforcement. It can create an immutable audit trail for every critical step in a transaction. It ensures that contingency deadlines are automatically calendared and reminders are sent without human intervention. It can cross-reference data from multiple sources to validate information before it’s passed to the next stage. This systematic reduction of unforced errors is the hidden value.
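Two of those pieces can be sketched briefly: computing a contingency deadline and keeping a tamper-evident log. The hash chain below is a swapped-in technique, not something the approach above prescribes. It makes edits detectable rather than impossible, and the event fields are invented for illustration. The deadline helper counts calendar days, which is an assumption; contracts may specify business days.

```python
import hashlib
import json
from datetime import date, timedelta


def contingency_deadline(accepted_on: date, days: int) -> date:
    """Calendar-day deadline; real contracts may count business days instead."""
    return accepted_on + timedelta(days=days)


class AuditLog:
    """Append-only log where each entry's hash covers the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(prev_hash: str, event: dict) -> str:
        body = json.dumps(event, sort_keys=True)
        return hashlib.sha256((prev_hash + body).encode()).hexdigest()

    def append(self, event: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else self.GENESIS
        entry_hash = self._digest(prev_hash, event)
        self.entries.append({"event": event, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; any edited or reordered entry breaks it."""
        prev_hash = self.GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash or entry["hash"] != self._digest(prev_hash, entry["event"]):
                return False
            prev_hash = entry["hash"]
        return True
```

Because each hash depends on everything before it, changing one historical entry invalidates every later one, which is what lets an auditor trust the sequence of steps.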

The goal is to build a system that makes it harder for people to make mistakes. By automating the flow of information and the validation of data between milestones, you are not replacing the real estate professional. You are augmenting them with a system that handles the procedural complexity, freeing them to focus on the negotiation, strategy, and client service that machines cannot replicate.