The Revolution Will Be API-Driven, Not Televised

The core of every real estate operation is a dataset held together with digital duct tape and manual data entry. We are told the industry is modernizing because agents have iPads and clients can take 3D tours. That’s a lie. The foundation is rotten with fragmented data silos, inconsistent APIs, and workflows that still depend on scanning PDFs. The tech revolution real estate needs won’t come from a prettier CRM. It will come from gutting the backend.

Every major brokerage fights the same battle. They pull data from dozens of Multiple Listing Services, each a private kingdom with its own bizarre schema and access method. The Real Estate Standards Organization (RESO) Web API is supposed to fix this, but adoption is slow and implementations are inconsistent. One MLS calls a field `MLSNUM`, another calls it `ListingId`, and a third might use `sysid`. This isn’t a simple mapping problem. It’s a constant, resource-draining war against data entropy.
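
To make the naming problem concrete, here is a minimal sketch of the first and easiest layer: renaming each feed’s identifier field to one canonical name. Only the three raw field names come from the paragraph above. The source keys (`mls_a`, `mls_b`, `mls_c`) and the canonical name `listing_id` are hypothetical. The tables are keyed per source because the same raw name can mean different things in different feeds.

```python
# Hypothetical per-source alias tables. Keying by source matters: the same
# raw field name can mean different things in different MLS feeds.
SOURCE_ALIASES = {
    "mls_a": {"MLSNUM": "listing_id"},
    "mls_b": {"ListingId": "listing_id"},
    "mls_c": {"sysid": "listing_id"},
}


def canonicalize_keys(source: str, raw_record: dict) -> tuple[dict, dict]:
    """Rename a source's known fields to canonical names.

    Returns (canonical_fields, unmapped_fields) so unknown fields are
    surfaced for review instead of being silently dropped.
    """
    aliases = SOURCE_ALIASES[source]
    canonical, unmapped = {}, {}
    for key, value in raw_record.items():
        if key in aliases:
            canonical[aliases[key]] = value
        else:
            unmapped[key] = value
    return canonical, unmapped
```

Returning the unmapped fields instead of discarding them lets you notice when a feed starts sending something new.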

The MLS Aggregation Nightmare Is Real

Forget slick UIs. The real work is forcing these disparate feeds into a single, coherent data model. We write custom ETL scripts for each MLS feed, logic-checking every field. Is `GAR` the code for a two-car garage or a two-story garage? The documentation is often a five-year-old PDF, assuming it exists at all. The entire system is fragile. When an MLS board decides to change a field name without notice, your production application breaks at 2 AM.
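
One cheap defense against the 2 AM breakage is to compare each incoming batch against the field names you expect before anything gets written. The sketch below assumes a hypothetical per-feed contract in `EXPECTED_FIELDS`. The field names in it are illustrative.

```python
# Hypothetical expected-field contract for one feed, built from whatever
# documentation (or observed data) the MLS provides.
EXPECTED_FIELDS = frozenset({"MLSNUM", "ListPrice", "GarageSpaces", "StandardStatus"})


def detect_schema_drift(batch: list[dict], expected: frozenset = EXPECTED_FIELDS) -> dict:
    """Compare the field names seen in a batch against the expected contract."""
    seen = set()
    for record in batch:
        seen.update(record.keys())
    return {
        # Expected but absent: likely renamed or dropped upstream.
        "missing": sorted(expected - seen),
        # Never seen before: possibly the new name of a renamed field.
        "unexpected": sorted(seen - expected),
    }
```

A non-empty `missing` list is the signal to halt the load and alert someone, rather than quietly writing nulls into production. Reading `missing` next to `unexpected` often points straight at the rename.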

Building a unified property view requires brute force. You create a canonical schema for your internal database and then write a translation layer for every single data source you consume. This layer becomes a monstrous piece of legacy code the second it’s written, requiring constant maintenance as each MLS provider makes arbitrary changes to their system.

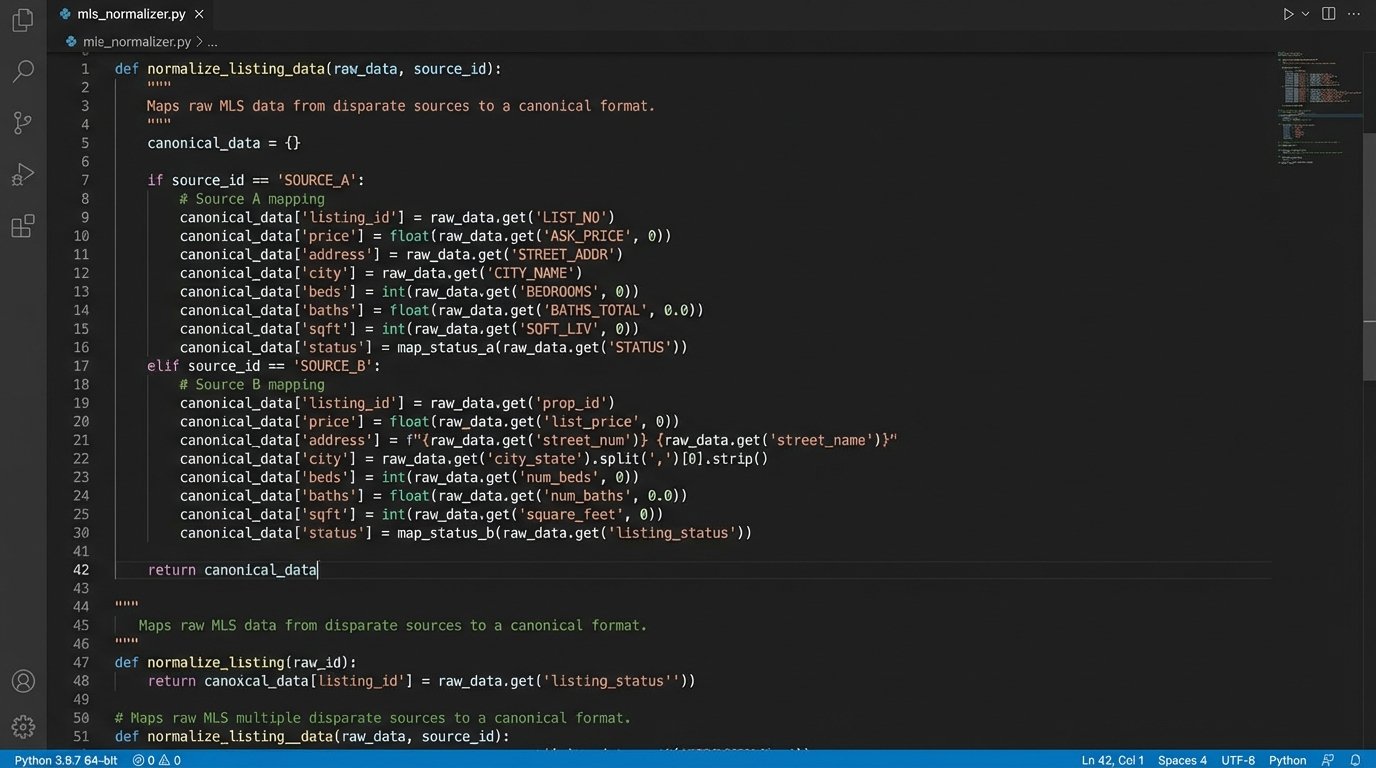

Here is a painfully simplified look at what the mapping logic might involve for just two fields from two different sources. Imagine this scaled across 200 fields and 50 data sources.

```python
# THIS IS A CONCEPTUAL EXAMPLE. DO NOT RUN IN PRODUCTION.

def normalize_listing_data(source_a_listing=None, source_b_listing=None):
    """Map a listing from Source A or Source B into one canonical dict."""
    canonical_listing = {}

    # Example for Listing Price
    if source_a_listing:
        # Source A sends a dollar amount, possibly as a decimal string.
        price_raw = source_a_listing.get('ListPrice', '0')
        canonical_listing['price'] = int(float(price_raw))
    elif source_b_listing:
        # Source B provides price in cents. Classic.
        price_raw = source_b_listing.get('CurrentPrice', '0')
        # Integer division keeps the price an int, matching Source A.
        canonical_listing['price'] = int(price_raw) // 100

    # Example for Garage Spaces
    if source_a_listing:
        # Source A uses a direct integer (or an empty value).
        spaces = source_a_listing.get('GarageSpaces', 0)
        canonical_listing['garage_spaces'] = int(spaces) if spaces else 0
    elif source_b_listing:
        # Source B uses a text code; anything unrecognized, like 'No', maps to 0.
        garage_code = source_b_listing.get('GAR', 'No')
        mapping = {'1Car': 1, '2Car': 2, '3+Car': 3}
        canonical_listing['garage_spaces'] = mapping.get(garage_code, 0)

    return canonical_listing
```

This code is a liability. Every line is a potential point of failure dependent on an external system you don’t control.
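
One way to contain that liability is to give each source its own isolated translator. With that structure, a renamed field in one feed breaks one function instead of a shared `if`/`elif` chain. The sketch below reuses the raw field names from the example above, but several parts of it are hypothetical: the `CanonicalListing` dataclass, the source names `mls_a` and `mls_b`, the decorator-based registry, and the pairing of `MLSNUM` and `ListingId` with each source.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class CanonicalListing:
    """Hypothetical internal schema; a real one would carry hundreds of fields."""
    listing_id: str
    price: int          # whole dollars
    garage_spaces: int


# Registry of translators, one per data source (source names are hypothetical).
TRANSLATORS: dict[str, Callable[[dict], CanonicalListing]] = {}


def translator(source: str):
    """Decorator that registers a translation function for one source."""
    def register(fn):
        TRANSLATORS[source] = fn
        return fn
    return register


@translator("mls_a")
def from_mls_a(raw: dict) -> CanonicalListing:
    # A missing required key raises KeyError: fail loudly, not silently.
    return CanonicalListing(
        listing_id=str(raw["MLSNUM"]),
        price=int(float(raw["ListPrice"])),
        garage_spaces=int(raw.get("GarageSpaces") or 0),
    )


@translator("mls_b")
def from_mls_b(raw: dict) -> CanonicalListing:
    garage_codes = {"1Car": 1, "2Car": 2, "3+Car": 3}
    return CanonicalListing(
        listing_id=str(raw["ListingId"]),
        price=int(raw["CurrentPrice"]) // 100,  # this source reports cents
        garage_spaces=garage_codes.get(raw.get("GAR"), 0),
    )


def translate(source: str, raw: dict) -> CanonicalListing:
    # A KeyError here means a feed arrived with no registered translator.
    return TRANSLATORS[source](raw)
```

When an MLS changes its schema, only the matching `from_...` function and its tests need to change.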

Beyond Listings: The Transactional Black Hole

Property data is only the first circle of hell. The actual transaction process is where efficiency goes to die. An accepted offer kicks off a chaotic sequence of emails, phone calls, and document uploads involving agents, transaction coordinators, lenders, title officers, and inspectors. Each party operates from their own software, and the primary integration point is a human staring at an inbox.

This is not a technology problem. This is an architecture problem. We have a dozen disconnected applications that should be communicating via webhooks and APIs. Instead, we pay people to be human middleware, copying information from a PDF attached to an email and pasting it into a web form. This process is slow, expensive, and riddled with errors. Trying to orchestrate this is like conducting a symphony by having runners carry handwritten notes between the brass and string sections.

A signed purchase agreement should trigger an event. That event should be consumed by the title company’s system to automatically open an order. It should be consumed by the lender’s system to kick off underwriting. It should create a transaction record in the brokerage’s back-office system and schedule critical deadline reminders. This is not science fiction. It’s a standard event-driven architecture that other industries mastered a decade ago.
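
Here is a minimal in-process sketch of that fan-out. Several things in it are assumptions: the event name `purchase_agreement.signed`, the payload fields, and the three handlers are all hypothetical. A plain dictionary of subscribers also stands in for a real message broker or the cross-company webhooks this would need in practice. What the sketch does show is that the publisher knows nothing about who consumes the event.

```python
from collections import defaultdict
from datetime import date, timedelta

# Minimal in-process event bus: a stand-in for a real broker or webhooks.
_subscribers = defaultdict(list)


def subscribe(event_type):
    """Decorator that registers a handler for one event type."""
    def register(handler):
        _subscribers[event_type].append(handler)
        return handler
    return register


def publish(event_type, payload):
    # Every subscriber receives the same event; none knows about the others.
    return [handler(payload) for handler in _subscribers[event_type]]


@subscribe("purchase_agreement.signed")
def open_title_order(event):
    return ("title", f"order opened for {event['property_address']}")


@subscribe("purchase_agreement.signed")
def start_underwriting(event):
    return ("lender", f"underwriting started for loan of {event['loan_amount']}")


@subscribe("purchase_agreement.signed")
def create_transaction_record(event):
    # Hypothetical contract terms: deadlines expressed as day offsets
    # from the acceptance date, turned into calendar dates for reminders.
    accepted = event["accepted_on"]
    deadlines = {name: accepted + timedelta(days=days)
                 for name, days in event["deadline_offsets"].items()}
    return ("back_office", deadlines)
```

Adding a fourth consumer, such as an inspector-scheduling integration, means registering one more handler. The publisher does not change.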

Stop Buying Software, Start Building Platforms

The market is flooded with “solutions” that are nothing more than chrome applied to a rusted-out chassis. They provide a better user interface for the agent but do nothing to fix the broken, manual workflows underneath. A slick mobile app for tracking a deal is useless if a transaction coordinator still has to manually read the contract to figure out the financing deadline.

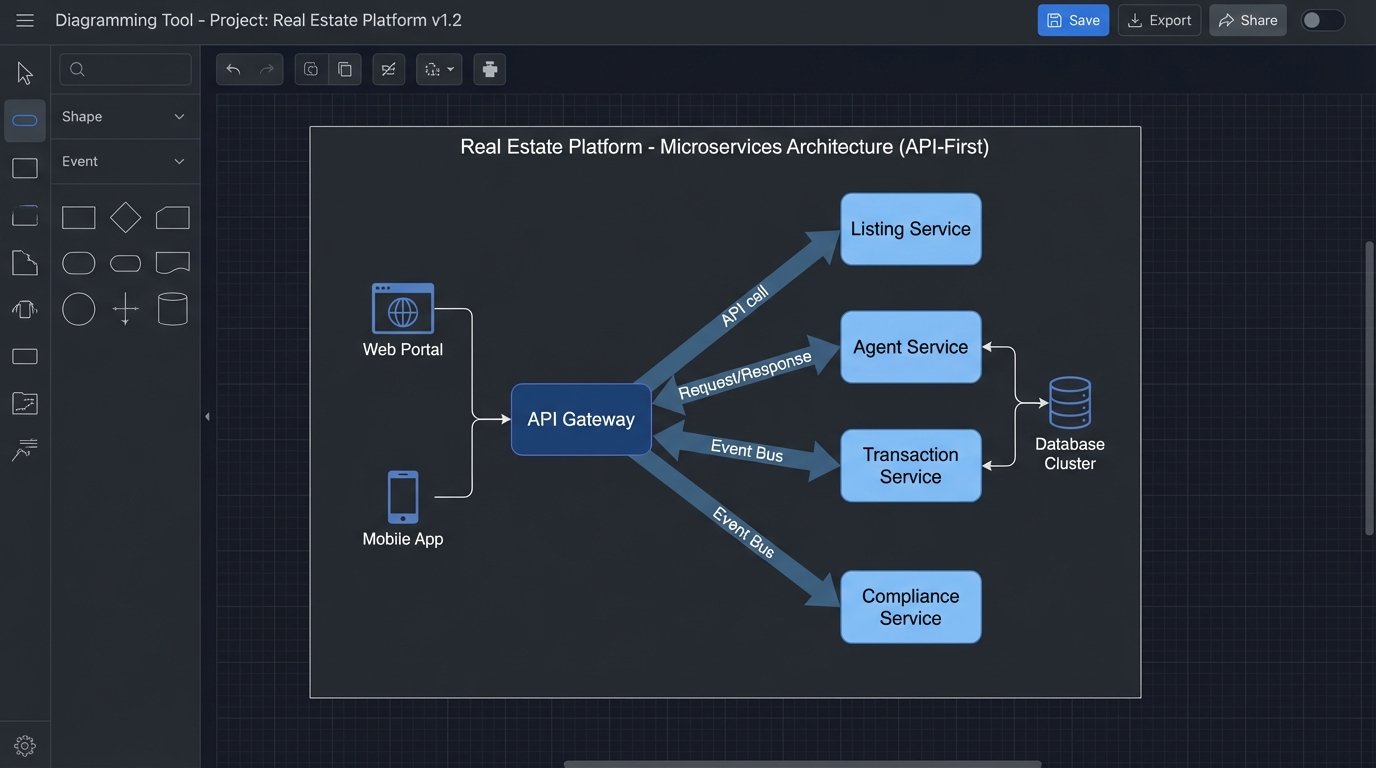

The only way forward is to stop thinking about buying monolithic software and start thinking about building a cohesive, API-first platform. This means identifying every core business function and exposing it as an internal, well-documented service.

- Listing Service: A single internal endpoint to create, read, update, and delete property listings, abstracting away the MLS aggregation nightmare.

- Agent Service: Manages agent data, licensing, commission splits, and onboarding workflows.

- Transaction Service: The core engine for managing deals from offer to close. It doesn’t need a UI. It needs to be a state machine driven by API calls.

- Document Service: A repository for contracts and disclosures with APIs for upload, versioning, and e-signature integration.

- Compliance Service: An automated engine that consumes documents and transaction data, then runs them against a ruleset to flag missing signatures or incomplete fields.
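
Here is a sketch of what “a state machine driven by API calls” might look like at the core of the Transaction Service. The states are illustrative. Only `ComplianceApproved-Initial` and `ComplianceRejected` appear elsewhere in this article, and the API route mentioned in the docstring is hypothetical.

```python
# Illustrative states and the transitions allowed out of each one.
ALLOWED_TRANSITIONS = {
    "OfferAccepted": {"ComplianceApproved-Initial", "ComplianceRejected", "Cancelled"},
    "ComplianceRejected": {"ComplianceApproved-Initial", "Cancelled"},
    "ComplianceApproved-Initial": {"InspectionPeriod", "Cancelled"},
    "InspectionPeriod": {"PendingFinancing", "Cancelled"},
    "PendingFinancing": {"Closed", "Cancelled"},
    "Closed": set(),
    "Cancelled": set(),
}


class InvalidTransition(Exception):
    pass


class Transaction:
    def __init__(self, transaction_id: str, state: str = "OfferAccepted"):
        self.transaction_id = transaction_id
        self.state = state
        self.history = [state]  # append-only record of every state entered

    def transition(self, new_state: str) -> None:
        """What a hypothetical POST /transactions/{id}/transitions would call."""
        if new_state not in ALLOWED_TRANSITIONS[self.state]:
            raise InvalidTransition(f"{self.state} -> {new_state} is not allowed")
        self.state = new_state
        self.history.append(new_state)
```

The UI, the compliance engine, and third-party integrations all go through `transition`. An illegal jump, such as closing a deal that never cleared inspection, is therefore rejected in one place.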

Breaking the monolith into these services forces clean separation of concerns. It allows a small team to own the Agent Service while another focuses entirely on the Compliance Service. You can upgrade, replace, or scale each component independently. This is not about building everything from scratch. It’s about owning the central nervous system and then plugging in best-in-class third-party tools where they make sense, like DocuSign for e-signatures or Plaid for earnest money verification.

The Data Is The Asset, Not The Application

This service-oriented approach generates a clean, structured stream of event data. Every status change, every document upload, every price adjustment becomes an immutable event that can be piped into a data warehouse like BigQuery or Snowflake. This is where the real value is created.
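
Here is a sketch of such an event. It assumes a hypothetical envelope (`event_type`, `aggregate_id`, `payload`) serialized as newline-delimited JSON, a format both BigQuery and Snowflake can load. Note that `frozen=True` only prevents reassigning attributes after creation. Real immutability comes from writing events to append-only storage.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from uuid import uuid4


@dataclass(frozen=True)
class DomainEvent:
    """Hypothetical event envelope; field names are illustrative."""
    event_type: str    # e.g. "transaction.status_changed"
    aggregate_id: str  # the transaction, listing, or document it concerns
    payload: dict
    event_id: str = field(default_factory=lambda: str(uuid4()))
    occurred_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_json_line(event: DomainEvent) -> str:
    # One JSON object per line (newline-delimited JSON) for warehouse loading.
    return json.dumps(asdict(event), sort_keys=True)
```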

You stop asking “What is the status of this deal?” and start asking “What are the common attributes of deals that fall out of escrow during the inspection phase?” You stop looking at vanity metrics like website traffic and start building lead-scoring models based on a user’s property viewing history correlated with their saved searches and agent interactions. This is how you build a competitive moat. The data, when structured and accessible, becomes the most valuable asset in the company.
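
Once deals are flattened out of the event stream, the inspection-phase question becomes a simple aggregation. The sketch below assumes hypothetical deal records with a `cancelled_in_state` field and an attribute such as `year_built_bucket`. In a warehouse this would be a `GROUP BY`; plain Python keeps the example self-contained.

```python
from collections import Counter


def fallout_rate_by(deals: list[dict], attribute: str) -> dict:
    """Share of deals with each attribute value that cancelled during inspection.

    Each deal is a hypothetical flattened record built from the event stream,
    e.g. {"year_built_bucket": "pre-1950", "cancelled_in_state": "InspectionPeriod"}.
    """
    totals = Counter(d[attribute] for d in deals)
    fallouts = Counter(
        d[attribute] for d in deals
        if d.get("cancelled_in_state") == "InspectionPeriod"
    )
    # Counter returns 0 for values with no fallouts, giving a 0.0 rate.
    return {value: fallouts[value] / totals[value] for value in totals}
```

Comparing rates rather than raw counts matters here. An attribute that is common among failed deals may simply be common among all deals.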

A Concrete Example: Automating Compliance Review

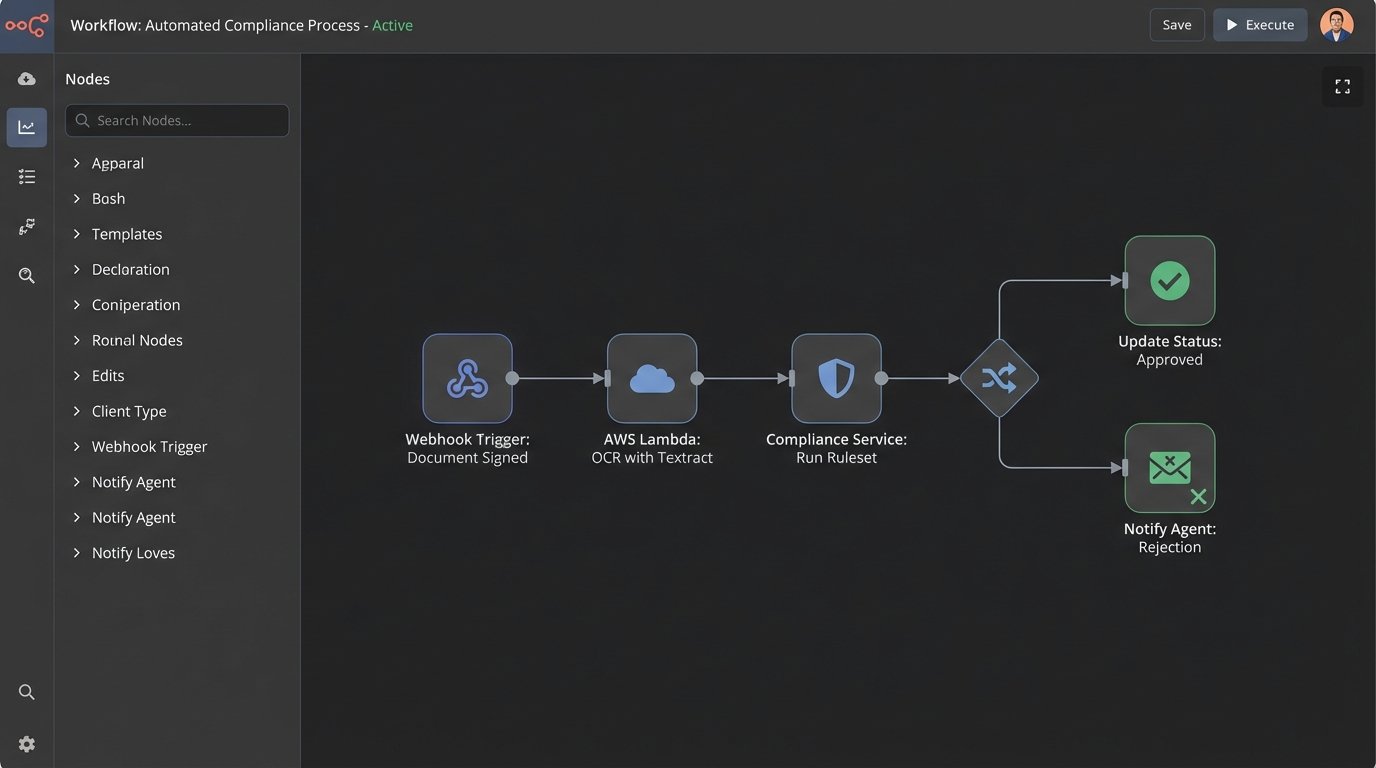

Let’s make this tangible. A core function that drains resources is the compliance review, where a broker or office manager checks every contract for errors. We can automate 80% of this.

The workflow looks like this:

- An agent uploads a signed purchase agreement via an internal portal. This hits the Document Service.

- The Document Service triggers a webhook that passes the document ID to a serverless function (e.g., AWS Lambda).

- The function pulls the PDF and uses an OCR service like Amazon Textract to rip the text and form data into a structured JSON object.

- This JSON is passed to the Compliance Service. It runs a series of logic checks: Are all the required signatures present? Does the offer price match the price in the Transaction Service? Is the closing date a valid date and not on a weekend?

- The results are pushed back to the Transaction Service. If it passes, the transaction state is updated to `ComplianceApproved-Initial`. If it fails, the state becomes `ComplianceRejected` and a notification with the specific error is sent back to the agent.
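
Once the OCR output is structured, the logic checks in the Compliance Service are the easy part. The sketch below assumes hypothetical keys in the extracted JSON (`signatures`, `offer_price`, and `closing_date` as an ISO date string) and in the transaction record (`required_signers`, `offer_price`). It omits the Lambda and Textract plumbing and does not account for holidays.

```python
from datetime import date


def run_compliance_checks(extracted: dict, transaction: dict) -> dict:
    """Run the checks listed above against OCR output and the transaction record."""
    errors = []

    # 1. Every required signature must be present.
    missing = [role for role in transaction["required_signers"]
               if role not in extracted.get("signatures", [])]
    if missing:
        errors.append(f"missing signatures: {', '.join(missing)}")

    # 2. The offer price on the contract must match the Transaction Service.
    if extracted.get("offer_price") != transaction["offer_price"]:
        errors.append("offer price does not match transaction record")

    # 3. The closing date must parse as an ISO date and fall on a weekday.
    try:
        closing = date.fromisoformat(extracted.get("closing_date", ""))
    except (TypeError, ValueError):
        errors.append("closing date is missing or invalid")
    else:
        if closing.weekday() >= 5:  # 5 = Saturday, 6 = Sunday
            errors.append(f"closing date {closing} falls on a weekend")

    state = "ComplianceRejected" if errors else "ComplianceApproved-Initial"
    return {"state": state, "errors": errors}
```

The function collects every error instead of stopping at the first one. That lets the agent fix everything in a single round trip.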

No human touches the routine case. The agent gets immediate feedback, and the compliance officer only has to review the exceptions. This frees up their time for genuine problem-solving instead of rote checklist work. This isn’t a fantasy. The tools to build this exist right now.

This Is a Wallet-Drainer, But the Alternative Is Irrelevance

Building this architecture is not cheap. It requires hiring engineers who think in terms of systems, not just features. It requires a significant investment in cloud infrastructure. It involves a painful process of decoupling from legacy vendors who hold your data hostage in proprietary systems. The temptation is to just buy the next all-in-one CRM that promises to solve all your problems.

That approach is a dead end. It optimizes for short-term comfort while accumulating long-term technical debt. The real estate companies that will dominate the next decade are the ones that recognize their business is not just selling houses. It’s operating a technology platform that makes selling houses more efficient. They will be the ones who bite the bullet, tear down their fractured systems, and rebuild around a clean, API-driven core. The rest will be wondering why their operating costs are so high and their top agents are leaving for a competitor with better tools.

The revolution is here. It’s just happening in the data center, not the open house.