Scraping the Bottom of the Uncanny Valley

The core premise of most AI cold calling platforms is deception. The technical goal is to build an agent that passes a vocal Turing test for the first 30 seconds, long enough to hook a prospect before they detect the artifice. This entire approach is built on a foundation of bad faith and, more importantly, brittle technology that ignores the realities of human communication.

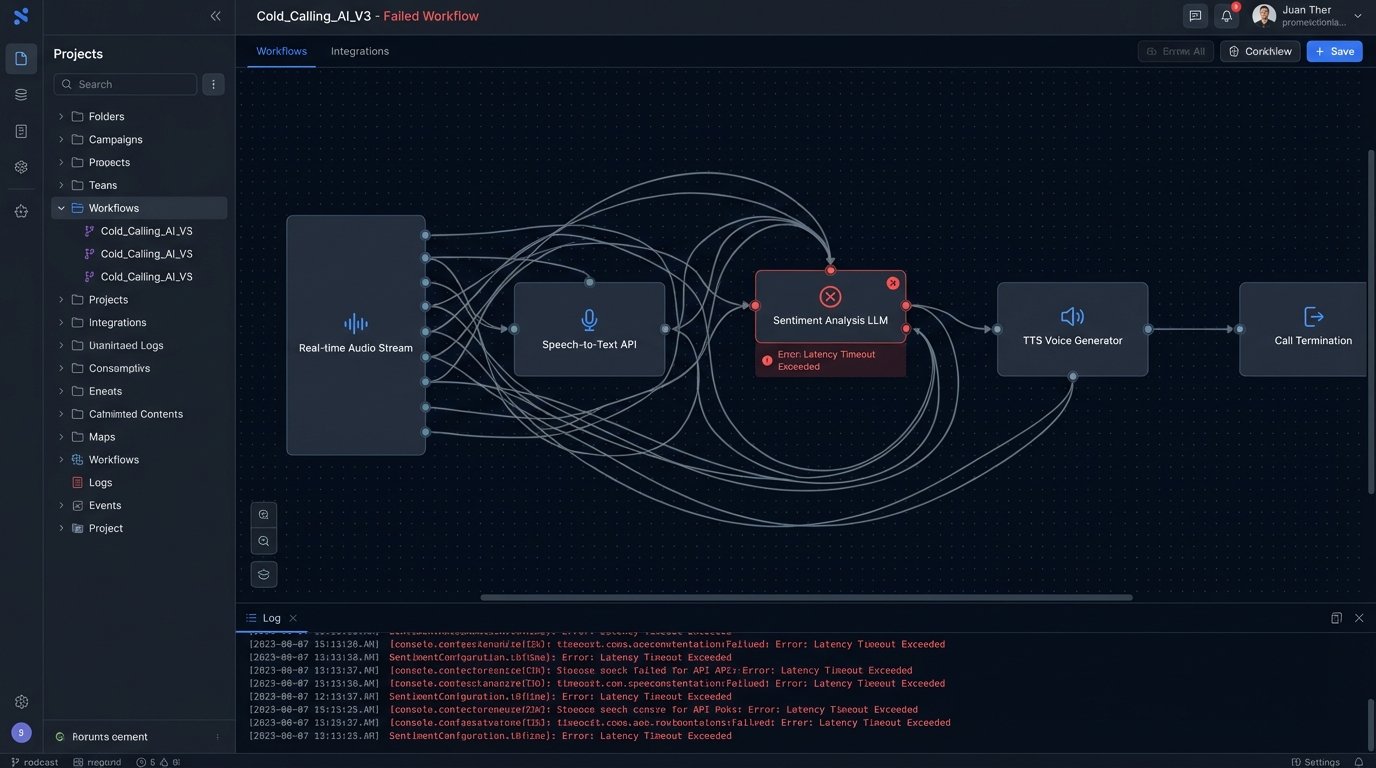

These systems rely on a daisy chain of speech-to-text, natural language understanding, and text-to-speech services. Every step in this chain introduces latency. Trying to achieve real-time sentiment analysis and response generation with a cloud-based LLM is like trying to have a conversation through a satellite phone with a 3-second delay. The gaps kill the flow and immediately signal to the listener that something is wrong.
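A rough way to see why the chain hurts is to add up a per-turn latency budget. The sketch below is illustrative only: the stage names, the millisecond figures, and the 500 ms comfort threshold are all assumed placeholders, not measurements from any vendor or platform.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Stage:
    name: str
    latency_ms: int  # assumed placeholder value, not a measurement


# Hypothetical stages for a single conversational turn.
PIPELINE = [
    Stage("network_in", 80),
    Stage("speech_to_text", 300),
    Stage("language_model", 700),
    Stage("text_to_speech", 250),
    Stage("network_out", 80),
]


def turn_latency_ms(stages: List[Stage]) -> int:
    """Stages run one after another, so their latencies add up."""
    return sum(stage.latency_ms for stage in stages)


def exceeds_budget(stages: List[Stage], budget_ms: int) -> bool:
    """True when the summed latency is larger than the allowed gap."""
    return turn_latency_ms(stages) > budget_ms


if __name__ == "__main__":
    total = turn_latency_ms(PIPELINE)
    # 500 ms is an assumed threshold for a gap that still feels natural.
    print(f"turn latency: {total} ms, over budget: {exceeds_budget(PIPELINE, 500)}")
```

Because the stages are sequential, shaving one of them rarely rescues the turn; every link in the chain has to be fast at once.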

The Latency Fallacy and Synthetic Voices

Vendors will show you demos in a controlled environment with near-zero network latency and a clean audio stream. Production is another story. A call recipient on a cell phone, driving through a tunnel, introduces packet loss and audio artifacts that completely derail the speech-to-text engine. The AI misinterprets a phrase, fires the wrong conversational branch, and the entire call collapses into a nonsensical loop.

The voice itself is the second point of failure. We’ve moved past the robotic monotone of early synthesizers, but the current generation of “human-like” voices still fails on prosody. They can’t replicate the subtle, non-verbal shifts in pitch, tone, and pacing that convey sincerity or empathy. The AI voice is stuck trying to thread a needle with a rope. It has the general shape of human speech but lacks the fine-grained texture of authentic communication, making the entire attempt feel clumsy and wrong.

You end up with a vocal performance that is just good enough to be unsettling.

Disclosure Is Not a Weakness, It’s an API Contract

The industry’s fear of disclosure is a failure of imagination. Instead of hiding the AI, the technically superior and ethically sound approach is to declare it upfront. This isn’t about legal compliance. It’s about setting a clear protocol for the interaction. An upfront disclosure recalibrates the user’s expectations and changes the nature of the conversation from a botched social interaction to an efficient data exchange.

Consider the initial payload of a call. The deceptive model attempts to hide the agent’s identity. The transparent model declares it explicitly.

A bad implementation looks something like this, where the agent’s nature is buried or omitted:

    {
      "call_id": "c7a8f9b0-1234-5678-9abc-def012345678",
      "target_number": "+15558675309",
      "script_id": "Q4_PROMO_SCRIPT_V2",
      "agent_config": {
        "name": "Sarah",
        "voice_model": "en-US-Wavenet-F",
        "persona": "friendly_but_professional"
      }
    }

This structure is designed to masquerade as a human-to-human call log. A technically honest structure forces disclosure into the protocol itself. It frames the agent as a service, not a person.

    {
      "call_id": "c7a8f9b0-1234-5678-9abc-def012345678",
      "target_number": "+15558675309",
      "script_id": "Q4_PROMO_SCRIPT_V2",
      "agent_config": {
        "agent_type": "automated_voice_assistant",
        "voice_model": "en-US-Neural2-C",
        "disclosure_required": true,
        "disclosure_string": "Hi, you're speaking with an automated assistant from [Company Name]. Is now an acceptable time to talk for one minute?"
      }
    }

By enforcing the `disclosure_required` flag in the call logic, we bake the ethical constraint directly into the system architecture. The call cannot proceed until the disclosure has been delivered. This isn’t a legal footnote. It’s a system requirement that builds a more predictable and trustworthy interaction from the first packet.
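One way such a gate could be enforced is sketched below. This is a minimal illustration, not any platform's actual API: `CallSession`, `DisclosureError`, `disclose`, and `say` are hypothetical names, and the only fields taken from the payload above are `disclosure_required` and `disclosure_string`. The sketch also assumes a fail-closed default, treating a missing flag as `true`.

```python
class DisclosureError(RuntimeError):
    """Raised when the agent tries to speak before disclosing itself."""


# Trimmed copy of the transparent payload, for illustration only.
PAYLOAD = {
    "call_id": "c7a8f9b0-1234-5678-9abc-def012345678",
    "agent_config": {
        "agent_type": "automated_voice_assistant",
        "disclosure_required": True,
        "disclosure_string": (
            "Hi, you're speaking with an automated assistant from "
            "[Company Name]. Is now an acceptable time to talk for one minute?"
        ),
    },
}


class CallSession:
    """Hypothetical session wrapper that gates all speech on disclosure."""

    def __init__(self, payload: dict) -> None:
        self.config = payload["agent_config"]
        self.disclosed = False
        self.spoken = []  # stands in for the text-to-speech output queue

    def disclose(self) -> None:
        text = self.config.get("disclosure_string", "").strip()
        if not text:
            raise DisclosureError("disclosure_string is missing or empty")
        self.spoken.append(text)
        self.disclosed = True

    def say(self, text: str) -> None:
        # Assumption: a missing flag is treated as True (fail closed).
        required = self.config.get("disclosure_required", True)
        if required and not self.disclosed:
            raise DisclosureError("disclosure must be spoken before any script line")
        self.spoken.append(text)


if __name__ == "__main__":
    session = CallSession(PAYLOAD)
    session.disclose()
    session.say("I'm calling to confirm your appointment.")
    print(session.spoken)
```

Putting the check inside `say` rather than in the script means a new script cannot skip the disclosure by accident.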

Building a Transparent AI Calling Architecture

A system built on transparency looks different from one built on deception. It prioritizes data integrity and directness over conversational trickery. The goal shifts from mimicking a human to providing a fast, accurate, and predictable automated experience. This requires a fundamental change in how we structure the data pipeline and the agent’s logic.

Data Integrity Over Conversational Flow

The first casualty of the deceptive model is data quality. When an AI agent tries and fails to have a human-like conversation, the transcript is a mess of false starts, corrections, and misinterpreted phrases. The data extracted is often unreliable. A transparent agent, by contrast, can be designed to ask direct, unambiguous questions optimized for machine parsing.

The agent should be programmed to say, “To confirm, is your shipping address still 123 Main Street?” This is a closed question that expects a simple yes-or-no response. A deceptive agent might be programmed to ask, “So where should we send that out to?” This open-ended question invites ambiguity and increases the probability of the speech-to-text engine making an error. The same discipline applies to what goes in. Feeding an AI raw, un-scrubbed CRM data is like pouring gravel into a car’s gas tank. The engine will choke on the inconsistencies and eventually seize.

The system should be built to strip nuance, not to simulate it.
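The payoff of a closed question is that the parser on the other end can be very small. The sketch below is one assumed way to do it: the word lists and the `parse_yes_no` name are illustrative, and a real deployment would need a vocabulary tuned to its callers. Anything that is not clearly a yes or a no is returned as `None` so the caller can re-ask instead of guessing.

```python
import re
from typing import Optional

# Assumed, deliberately small vocabularies. "not" sits on the NO side so that
# phrases like "not correct" come back as ambiguous rather than as a yes.
YES_WORDS = {"yes", "yeah", "yep", "correct", "right"}
NO_WORDS = {"no", "nope", "not", "incorrect", "wrong"}


def parse_yes_no(transcript: str) -> Optional[bool]:
    """Map a transcript to True (yes), False (no), or None (ambiguous)."""
    words = set(re.findall(r"[a-z']+", transcript.lower()))
    has_yes = bool(words & YES_WORDS)
    has_no = bool(words & NO_WORDS)
    if has_yes == has_no:
        # Neither signal, or both at once: do not guess.
        return None
    return has_yes


if __name__ == "__main__":
    for reply in ["Yes, that's right.", "Nope.", "Um, what?", "Yes... no, wait."]:
        print(repr(reply), "->", parse_yes_no(reply))
```

The parser is intentionally conservative: a mixed reply costs one extra question, while a wrong guess corrupts the record.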

Error Handling as a Core Feature

Deceptive agents handle errors poorly. When they fail to understand, they often default to a generic “Could you repeat that?” which only increases the user’s frustration. A transparent agent can use a more direct error-handling protocol. It can state its limitations clearly: “I am an automated system and did not understand your request. Can we try again with a ‘yes’ or ‘no’ answer?”

This approach bypasses the uncanny valley entirely. It confirms the user’s suspicion that they are talking to a machine and gives them a clear path to proceed. The objective is not to win a social game. It is to complete a task. By explicitly stating its limitations, the AI becomes a more effective tool, not a failed imitation of a person.
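A direct error-handling protocol of this kind might look like the following sketch. The `speak` and `listen` callables are hypothetical stand-ins for the text-to-speech and speech-to-text layers, the retry limit of three is an assumed value, and returning `None` so the caller can hand off or end the call is a design assumption rather than something the protocol dictates.

```python
from typing import Callable, Optional

FALLBACK_PROMPT = (
    "I am an automated system and did not understand your request. "
    "Can we try again with a 'yes' or 'no' answer?"
)


def ask_closed_question(
    question: str,
    speak: Callable[[str], None],
    listen: Callable[[], str],
    max_attempts: int = 3,  # assumed limit; tune per use case
) -> Optional[bool]:
    """Ask a yes/no question, restating the agent's limits on each miss."""
    speak(question)
    for attempt in range(max_attempts):
        answer = listen().strip().lower()
        if answer in ("yes", "no"):
            return answer == "yes"
        # Only re-prompt if another attempt is still available.
        if attempt < max_attempts - 1:
            speak(FALLBACK_PROMPT)
    # Out of attempts: the caller decides whether to hand off or hang up.
    return None


if __name__ == "__main__":
    replies = iter(["sorry, what was that?", "yes"])
    result = ask_closed_question(
        "To confirm, is your shipping address still 123 Main Street?",
        speak=print,
        listen=lambda: next(replies),
    )
    print("confirmed:", result)
```

Bounding the retries matters as much as the wording: an agent that admits its limits but loops forever is still wasting the caller's time.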

The Long-Term Cost of Consumer Distrust

Every call from a deceptive AI agent erodes consumer trust in automated systems. It trains people to be hostile and suspicious toward any unsolicited call, legitimate or not. This creates a vicious cycle. As trust declines, contact rates plummet, and companies feel pressured to deploy even more aggressive and deceptive tactics to hit their numbers. The entire channel gets poisoned.

This isn’t a hypothetical problem. We’ve already seen this exact pattern with email spam and robocalls. A communication channel becomes saturated with low-quality, bad-faith interactions until it is rendered almost useless for legitimate business. The short-term gains from a few successful AI-driven sales calls are dwarfed by the long-term destruction of the voice channel’s viability.

From Deception to Delegation

The only sustainable path forward is to reposition AI voice agents as tools of delegation, not deception. A customer should feel they are delegating a task to an efficient machine, not being tricked by one. This means the AI should be used for high-volume, low-complexity tasks like appointment confirmations, status updates, or simple data verification.

These are tasks where efficiency is valued above personality. No one wants to have a deep, meaningful conversation with their airline’s automated system about a flight delay. They want the new flight information injected directly into their calendar with a minimum of fuss. The value proposition of the AI is speed and accuracy, not a hollow attempt at artificial empathy.
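In code, delegation can be as plain as an allowlist: the automated agent only takes the task types it is suited for, and everything else goes to a person. The sketch below assumes hypothetical task identifiers and a hypothetical `route` function; the three allowed types mirror the examples named above.

```python
from enum import Enum


class Handler(Enum):
    AUTOMATED = "automated"
    HUMAN = "human"


# Assumed task identifiers, mirroring the high-volume, low-complexity examples.
AUTOMATABLE_TASKS = frozenset(
    {"appointment_confirmation", "status_update", "data_verification"}
)


def route(task_type: str) -> Handler:
    """Send allowlisted tasks to the agent and everything else to a human."""
    if task_type in AUTOMATABLE_TASKS:
        return Handler.AUTOMATED
    return Handler.HUMAN


if __name__ == "__main__":
    for task in ("status_update", "contract_negotiation"):
        print(task, "->", route(task).value)
```

Defaulting unknown task types to a human keeps the agent inside the narrow lane where speed and accuracy are what the customer actually wants.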

We must engineer these systems to be explicit, honest, and task-oriented. The goal is to build a tool that people will choose to interact with because it respects their time and intelligence, not a system that tricks them into a conversation they never agreed to have. Anything else is just a sophisticated way to burn your own reputation.