Most legal research platforms are just glorified search engines bolted onto a static library. They run on keyword matching and Boolean logic that hasn’t fundamentally changed in twenty years. The result is a haystack of irrelevant cases an associate has to burn hours sifting through. True case law analysis requires tools that don’t just find documents but interpret them, identifying patterns and arguments at a machine level. The platforms that do this well are few, and none of them are perfect.

Evaluating these tools means looking past the marketing demos. We need to dissect their NLP models, test their API endpoints for reliability, and calculate the true cost of integration with existing document management systems. A platform that gives you brilliant results but walls them off in a proprietary garden is a net loss. This is a breakdown of the top platforms that attempt to solve the analysis problem, warts and all.

1. CoCounsel (Casetext/Thomson Reuters)

CoCounsel burst onto the scene by wrapping a GPT-4 model in a legal-specific workflow. It’s not just a search tool. It’s an engine for performing legal tasks: summarizing documents, extracting contract data, and building timelines from a pile of discovery files. Its core strength is moving beyond simple precedent retrieval to argument generation and synthesis. It can take a prompt like “Find cases supporting the argument that a software license agreement is a service, not a good, under the UCC” and produce a draft memo with citations.

This is a significant departure from keyword-based systems. It forces a different mental model on the legal user, moving them from search operator to task delegator.

Core Functionality

The platform’s analysis engine, CARA A.I., was its original claim to fame. You upload a brief or complaint, and it guts the document, identifies the legal arguments and citations, and then finds other relevant authorities the drafter missed. CoCounsel extends this with skills-based modules. Instead of a single search bar, you select a task like “Review Documents” or “Search a Database.” This structured approach prevents the kind of vague, unhelpful queries that plague general-purpose LLMs.

Under the hood, it’s leveraging a fine-tuned large language model against Casetext’s database. The outputs are impressive, but they require careful fact-checking. The AI hallucinates less than a public model, but it still happens. The system is designed as a force multiplier for an experienced attorney, not a replacement for one.

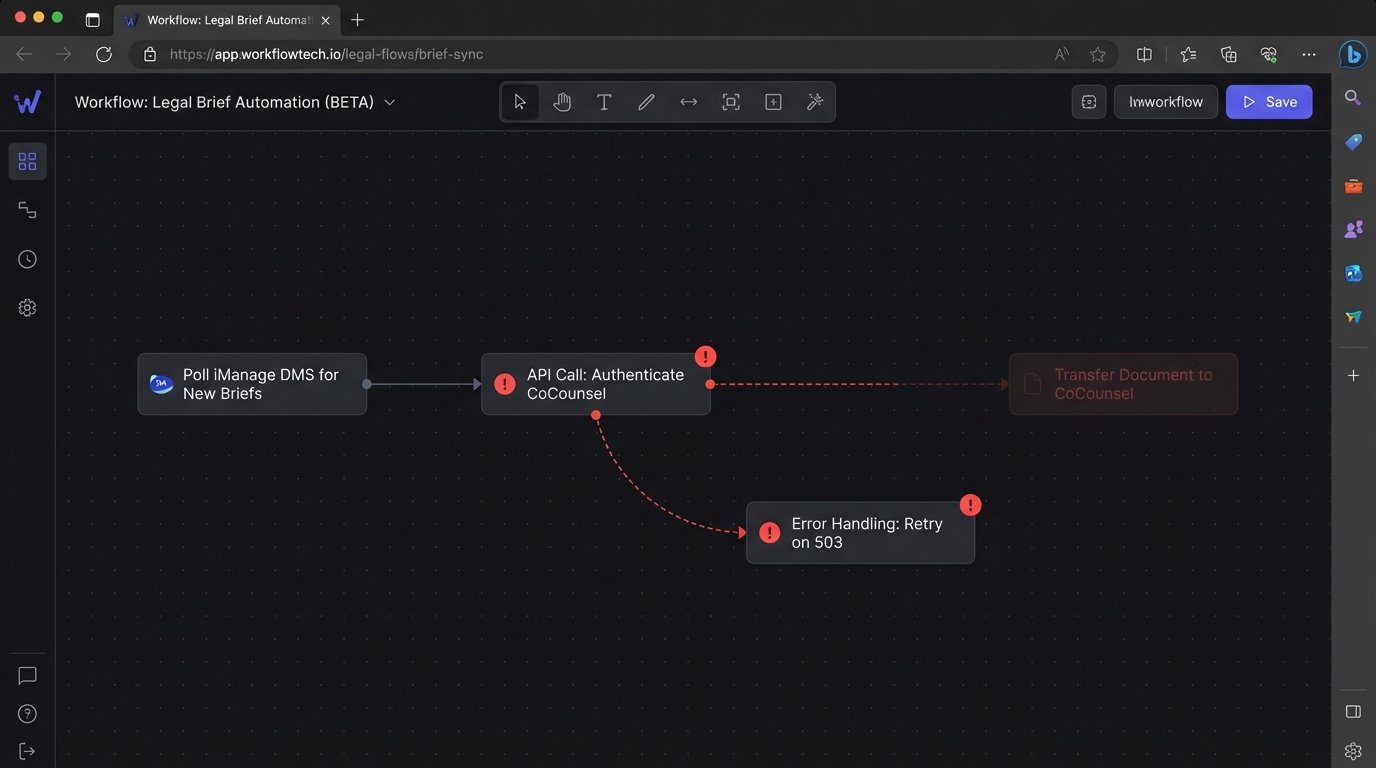

Integration Headaches

Getting CoCounsel to talk to your firm’s existing infrastructure is the main challenge. While it has a public API, it’s clearly designed for point-to-point data pulls, not deep workflow integration. Trying to automate the process of feeding it documents from a NetDocuments or iManage DMS requires a custom-built connector. This isn’t a simple Zapier job. You’re building a service that has to handle authentication, manage file transfers, and poll for results. It feels like shoving a firehose of data through a garden hose connection. The process is sluggish and brittle.
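The polling half of that connector can be sketched in a few lines. This is a minimal sketch, not CoCounsel's actual API: `fetch_status` is a hypothetical callable you would wrap around whatever status endpoint your connector talks to, and the `state`, `result`, and `error` field names are assumptions.

```python
import time
from typing import Callable, Optional


def poll_until_done(
    fetch_status: Callable[[], dict],
    max_attempts: int = 8,
    base_delay: float = 1.0,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Optional[dict]:
    """Poll a job until it finishes, doubling the wait after each attempt.

    fetch_status is a hypothetical callable returning a dict such as
    {"state": "running"} or {"state": "done", "result": ...}. The field
    names are assumptions, not a documented response format.
    """
    delay = base_delay
    for _ in range(max_attempts):
        status = fetch_status()
        if status.get("state") == "done":
            return status
        if status.get("state") == "failed":
            raise RuntimeError(f"job failed: {status.get('error', 'unknown')}")
        sleep(delay)
        delay = min(delay * 2, max_delay)  # exponential backoff with a cap
    return None  # gave up; the caller decides whether to retry or alert
```

Passing `sleep` in as a parameter keeps the loop testable without real waiting. Capping the delay stops a slow job from pushing the next check minutes into the future.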

The system wants to be your primary interface, and it resists being just another backend process your own applications call. This creates data silos and forces users to toggle between their case management system and the CoCounsel UI.

The Verdict

CoCounsel is powerful for firms willing to adapt their workflows to its task-based model. It excels at accelerating the first-draft and initial research phases. But it’s a wallet-drainer, and the price tag is justified only if you force high adoption rates. Treat it as a closed ecosystem, because attempts to integrate it deeply will lead to custom development dead ends.

2. Lexis+ AI

LexisNexis took a more conservative route. Instead of building a new platform from the ground up, they injected generative AI features directly into the familiar Lexis+ interface. This was a smart move for user adoption. There is no new system to learn. The AI functions appear as additional options within the existing research workflow. Users can summarize a case, generate a draft argument, or ask conversational questions about a legal topic without leaving the page.

The strategy is to augment the traditional research process, not replace it.

Core Functionality

The system offers conversational search, document summarization, and draft generation. Its key differentiator is the direct link to Lexis’s own massive, proprietary repository of primary and secondary sources. When the AI generates an answer, it provides direct citations with links back to the source documents. This is critical. It grounds the AI’s output in verifiable data, mitigating the risk of hallucinated citations. It also leverages Shepard’s for citation analysis, allowing a user to ask, “Is this case still good law?” and get a direct answer.
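Citation grounding is also something you can spot-check on your own side. The sketch below is an illustration only, not how Lexis does it: the regex covers a handful of reporter formats, and the `verified` set stands in for whatever trusted citation list you maintain.

```python
import re

# Simplified pattern for reporter citations such as "550 U.S. 544" or
# "123 F.3d 456". Real citation formats are far more varied than this.
CITATION_RE = re.compile(
    r"\b\d{1,4}\s+(?:U\.S\.|F\.(?:2d|3d|4th)|Cal\.(?:2d|3d|4th|5th)?)\s+\d{1,5}\b"
)


def split_citations(text: str, verified: set[str]) -> tuple[list[str], list[str]]:
    """Return (grounded, unverified) citations found in generated text."""
    found = CITATION_RE.findall(text)
    grounded = [c for c in found if c in verified]
    unverified = [c for c in found if c not in verified]
    return grounded, unverified
```

Anything in the second list goes to a human before it goes into a filing.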

The technology feels less like a raw LLM and more like a carefully controlled response-generation system. The answers are less creative than what you might get from CoCounsel but are generally more reliable and directly tied to citable authority. It’s a closed-loop system, which is both a strength and a weakness.

Here’s a simplified look at what a request to its internal API might resemble, abstracting away the authentication layers. The focus is on structured input to constrain the model’s output and force citation grounding.

    {
      "request_id": "a1b2c3d4-e5f6-7890-ghij-klmnopqrstuv",
      "user_session": "xyz789-session-abc123",
      "query_type": "conversational_draft",
      "prompt_text": "Draft an argument section for a motion to dismiss, asserting that the plaintiff's claim is barred by the statute of limitations under California Code of Civil Procedure § 335.1.",
      "jurisdiction": "CA",
      "constraints": {
        "force_citation": true,
        "cite_source": ["primary_law", "shepardized_positive"],
        "output_format": "memo_argument_section",
        "max_length": 500
      }
    }

This structured approach is what keeps the output tied to legal reality.
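If you were assembling a payload like that yourself, validate it before it leaves your code. The sketch below mirrors the illustrative field names from the sample above. None of them is a documented Lexis schema, and the `DraftRequest` class is hypothetical.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class DraftRequest:
    """Hypothetical request builder mirroring the illustrative payload."""

    prompt_text: str
    jurisdiction: str
    cite_source: list[str] = field(default_factory=lambda: ["primary_law"])
    max_length: int = 500

    def to_payload(self) -> dict:
        """Build the request body, rejecting inputs that would unconstrain the model."""
        if not self.prompt_text.strip():
            raise ValueError("prompt_text must not be empty")
        if not self.cite_source:
            raise ValueError("at least one citation source is required")
        if self.max_length <= 0:
            raise ValueError("max_length must be positive")
        return {
            "request_id": str(uuid.uuid4()),
            "query_type": "conversational_draft",
            "prompt_text": self.prompt_text,
            "jurisdiction": self.jurisdiction,
            "constraints": {
                "force_citation": True,  # always on: never send an ungrounded request
                "cite_source": list(self.cite_source),
                "output_format": "memo_argument_section",
                "max_length": self.max_length,
            },
        }
```

Hard-coding `force_citation` rather than exposing it as a field is deliberate. The constraint that matters most should not be one a caller can forget.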

Integration Headaches

Lexis+ AI offers virtually zero public-facing integration. It is a walled garden by design. There is no API for you to call to programmatically ask it to summarize a case or generate a document. All interaction must happen through their web UI. This makes it impossible to build custom automation that leverages its intelligence. You cannot, for example, build a workflow that automatically takes new complaints filed in a case, sends them to Lexis+ AI for a summary, and then injects that summary into your case management system.

This lack of connectivity is a massive friction point for any firm serious about building an efficient, integrated technology stack. You are buying access to a tool, not a capability.

The Verdict

Lexis+ AI is for firms heavily invested in the LexisNexis ecosystem who want a safe, predictable entry into generative AI. It’s reliable for what it does, but its closed nature makes it a strategic dead end for automation architects. It improves the efficiency of a researcher, but it doesn’t unlock new possibilities for system-level automation.

3. Westlaw Precision

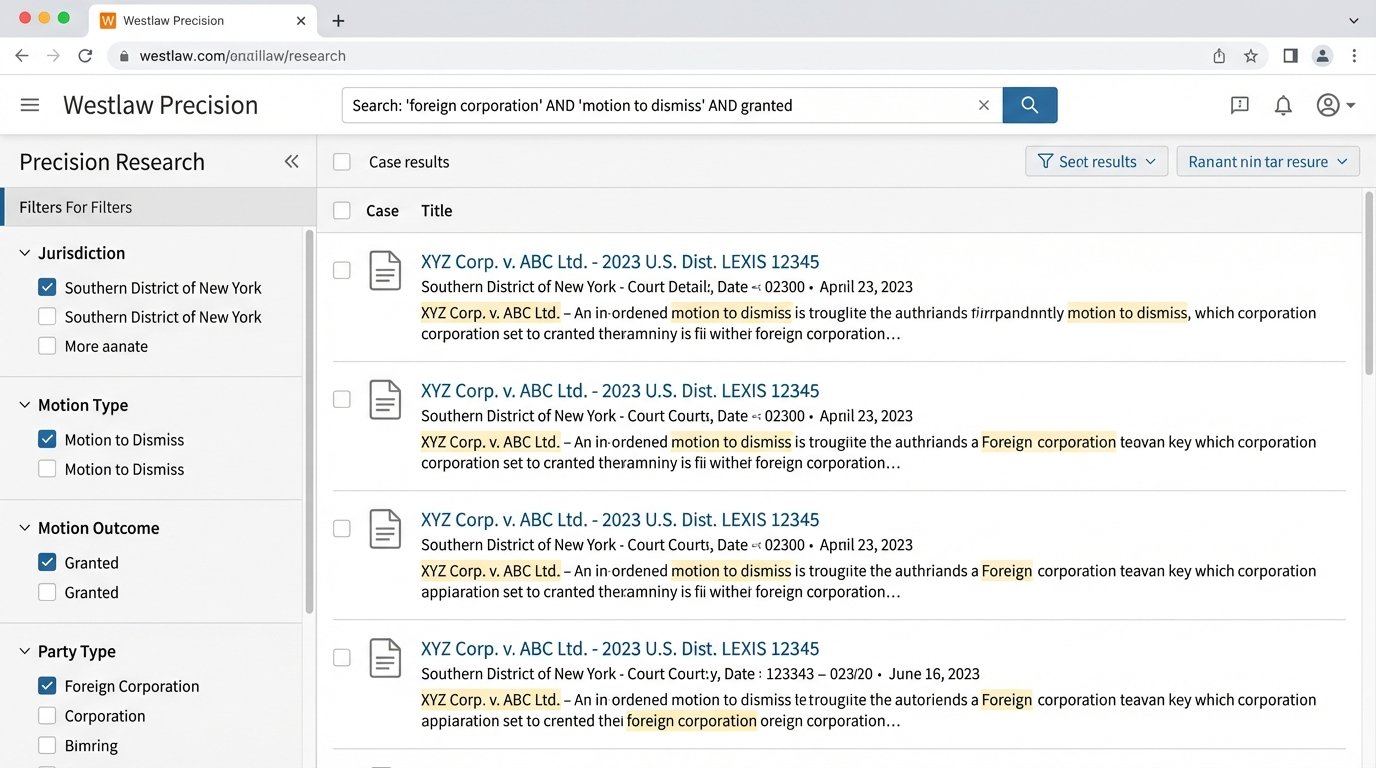

Thomson Reuters has been layering AI onto Westlaw for years, starting with features like KeyCite Overruling Risk, which flags cases that may no longer be good law because they rely on a prior decision that has been overruled or otherwise invalidated. Westlaw Precision is the latest evolution, focusing on highly specific, targeted search capabilities. Instead of broad, conversational queries, Precision allows users to filter by motion type, outcome, cause of action, and party type with a frightening level of granularity.

It’s less about generating text and more about surgically precise filtering of the entire universe of case law.

Core Functionality

The platform’s strength is its structured data. Thomson Reuters has invested millions of man-hours in having human attorneys tag and categorize cases across dozens of attributes. The AI in Precision leverages this structured data to deliver hyper-relevant results. You can search for “motions to dismiss for lack of personal jurisdiction that were granted in the Southern District of New York in the last 24 months involving a foreign corporation.” A keyword search could never accomplish this with any reliability.
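A toy version shows why tagged data beats keywords for that kind of query. This is a sketch under assumptions: the `TaggedRuling` fields and tag values are invented for illustration and do not reflect Westlaw's actual taxonomy.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class TaggedRuling:
    """One ruling with hypothetical editorial tags attached."""

    citation: str
    court: str
    motion_type: str
    outcome: str
    decided: date
    party_types: frozenset[str]


def filter_rulings(rulings, *, court, motion_type, outcome, decided_on_or_after, party_type):
    """Keep rulings whose editorial tags match every requested field."""
    return [
        r for r in rulings
        if r.court == court
        and r.motion_type == motion_type
        and r.outcome == outcome
        and r.decided >= decided_on_or_after
        and party_type in r.party_types
    ]
```

Every condition is an exact match on a field someone assigned deliberately. That is why the query is reliable, and why it only works on a corpus that has already been tagged.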

It also introduced a “find similar cases” feature that goes beyond simple topic matching. It analyzes the factual pattern and legal reasoning of a source case to find others that are conceptually analogous, even if they don’t share the same keywords. This is a vector-search-based approach that uncovers connections a human might miss. It is trying to map the DNA of a legal argument, which is a much harder problem than just matching words on a page.
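The general mechanics of vector search fit in a few lines. This sketch shows the textbook technique, cosine similarity over embeddings, and makes no claim about how Westlaw implements the feature. The vectors are assumed to come from some embedding model that is not shown here.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def most_similar(
    query: list[float], corpus: dict[str, list[float]], top_k: int = 3
) -> list[tuple[str, float]]:
    """Rank every document in the corpus by similarity to the query vector."""
    scored = [(name, cosine_similarity(query, vec)) for name, vec in corpus.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:top_k]
```

Two cases with no keywords in common can still land next to each other if their embeddings point the same way. That is the whole trick. A production system would swap the linear scan for an approximate nearest-neighbor index.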

Integration Headaches

Similar to Lexis, Westlaw is a fortress. Its APIs are notoriously difficult to work with, expensive, and limited in scope. They are designed to allow external systems to pull data from Westlaw, not to allow them to execute commands within it. You can build a tool that retrieves a document if you have its citation, but you can’t programmatically execute a complex Precision search and get the results back in a structured format.

The data you can extract is often wrapped in proprietary XML schemas that require significant parsing and transformation before they can be used in another application. You end up spending more time writing data-massaging scripts than building valuable automation.
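A typical data-massaging script looks something like the sketch below. The XML layout and element names (`doc`, `meta`, `cite`, `para`) are invented for illustration and are not the actual Westlaw schema. Only the standard-library `xml.etree.ElementTree` calls are real.

```python
import xml.etree.ElementTree as ET

# Invented sample document; the real schemas are larger and vendor-specific.
SAMPLE = (
    "<doc><meta><cite>Example v. Sample</cite><court>S.D.N.Y.</court></meta>"
    "<body><para>First paragraph.</para><para> Second paragraph. </para></body></doc>"
)


def flatten_document(xml_text: str) -> dict:
    """Reduce a vendor XML document to the plain dict other systems expect."""
    root = ET.fromstring(xml_text)
    return {
        "citation": root.findtext("meta/cite", default=""),
        "court": root.findtext("meta/court", default=""),
        "paragraphs": [p.text.strip() for p in root.iter("para") if p.text],
    }
```

Multiply that by every document type and every schema revision, and the maintenance cost becomes clear.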

The Verdict

Westlaw Precision is the best tool on the market for the pure task of finding the perfect on-point case. It’s a researcher’s scalpel. Firms that handle complex, high-stakes litigation will find the cost justifiable. But from an automation perspective, it’s a black box. You can’t build on top of it, so its value is confined to the manual work of an attorney sitting in front of its interface.

4. vLex (Vincent AI)

vLex, which merged with Fastcase in 2023, is positioning itself as the global alternative to the big two. Its major asset is a massive international law library. Its AI assistant, Vincent, functions similarly to Casetext’s CARA. You upload a document, and it analyzes it to find related authorities. The key difference is the breadth of the underlying dataset. Vincent can pull authorities from dozens of countries, which is a significant advantage for firms with international practices.

It competes not by having a marginally better NLP model, but by having a dataset the others can’t easily replicate.

Core Functionality

Vincent AI builds a “knowledge graph” from a source document, identifying key legal concepts and their relationships. It then searches the vLex database for documents that match this conceptual map. This is a more sophisticated approach than simple keyword extraction. It allows the system to find relevant documents that use different terminology to discuss the same legal issue. The platform also offers a feature called “Precedent Map,” which visually graphs the citation network of a case, making it easier to see how an argument has evolved over time.
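The data structure behind a citation-network view is simple. The sketch below is a generic illustration, not vLex's implementation: it takes hypothetical `(citing, cited)` pairs and walks outward from one case to find everything that relies on it, directly or indirectly.

```python
from collections import defaultdict, deque


def build_cited_by(citations: list[tuple[str, str]]) -> dict[str, set[str]]:
    """Invert (citing, cited) pairs into a cited -> {citing cases} index."""
    cited_by = defaultdict(set)
    for citing, cited in citations:
        cited_by[cited].add(citing)
    return cited_by


def downstream(case: str, cited_by: dict[str, set[str]]) -> dict[str, int]:
    """Breadth-first walk: every case that relies on `case`, with its hop count."""
    depth: dict[str, int] = {}
    queue = deque([(case, 0)])
    while queue:
        current, hops = queue.popleft()
        for citing in sorted(cited_by.get(current, ())):
            if citing not in depth and citing != case:
                depth[citing] = hops + 1
                queue.append((citing, hops + 1))
    return depth
```

The hop count is what a visual map encodes as distance: one hop is a direct citation, two hops is a case citing a case that cites yours.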

The user interface is cleaner and faster than the legacy platforms, reflecting its more modern tech stack. The focus is on speed and data visualization.

Integration Headaches

vLex offers a more modern and accessible API than Lexis or Westlaw. It’s REST-based and returns clean JSON, which is a relief. However, the API licenses are expensive, and the rate limits on the lower tiers are restrictive. You can build connectors to your internal systems, but you have to be careful about the number of calls you make per minute. A high-volume process, like analyzing every new document that enters the DMS, could easily hit the throttle and bring your workflow to a halt.
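Staying under a per-minute cap is easier to enforce on the client side than to recover from after the fact. The sketch below is a generic sliding-window limiter. The limit values are placeholders, not vLex's published quotas.

```python
import time
from collections import deque
from typing import Callable


class SlidingWindowLimiter:
    """Allow at most max_calls within any rolling window of `period` seconds."""

    def __init__(self, max_calls: int, period: float,
                 clock: Callable[[], float] = time.monotonic):
        self.max_calls = max_calls
        self.period = period
        self.clock = clock
        self._calls = deque()  # timestamps of recent calls, oldest first

    def wait_time(self) -> float:
        """Seconds to wait before the next call is allowed (0.0 if allowed now)."""
        now = self.clock()
        # Drop timestamps that have aged out of the window.
        while self._calls and now - self._calls[0] >= self.period:
            self._calls.popleft()
        if len(self._calls) < self.max_calls:
            return 0.0
        return self.period - (now - self._calls[0])

    def record(self) -> None:
        """Note that a call was just made."""
        self._calls.append(self.clock())
```

A worker would call `wait_time()`, sleep for that long if it is non-zero, make the request, then call `record()`. For a DMS ingest job, put this in front of a queue so bursts get smoothed out instead of dropped.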

The API documentation is also a step behind the product’s features. We’ve found undocumented endpoints and parameters that change without notice. It’s more open, but that openness comes with instability.
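When fields can change without notice, the connector should fail loudly on what it depends on and ignore everything else. This is a minimal sketch. The field names (`id`, `title`, `jurisdiction`) are hypothetical and stand in for whatever your integration actually reads.

```python
from typing import Any


class SchemaDrift(Exception):
    """Raised when a response no longer has the fields the connector relies on."""


REQUIRED_FIELDS = {"id": str, "title": str}  # hypothetical field names


def parse_result(raw: dict[str, Any]) -> dict[str, Any]:
    """Validate required fields, default optional ones, and ignore unknown extras."""
    parsed: dict[str, Any] = {}
    for name, expected_type in REQUIRED_FIELDS.items():
        value = raw.get(name)
        if not isinstance(value, expected_type):
            raise SchemaDrift(f"field {name!r} missing or not {expected_type.__name__}")
        parsed[name] = value
    # Optional field: tolerate its absence rather than crash the pipeline.
    parsed["jurisdiction"] = raw.get("jurisdiction", "unknown")
    return parsed
```

A dedicated exception type lets monitoring tell "the vendor changed something" apart from ordinary network failures.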

The Verdict

For firms with a significant international or cross-border practice, vLex is a serious contender. Its global dataset is a true differentiator. From an automation standpoint, its API is more promising than the incumbents, but it’s a “buyer beware” situation. You’ll need to build robust error handling and be prepared for unexpected changes.

5. Alexsei

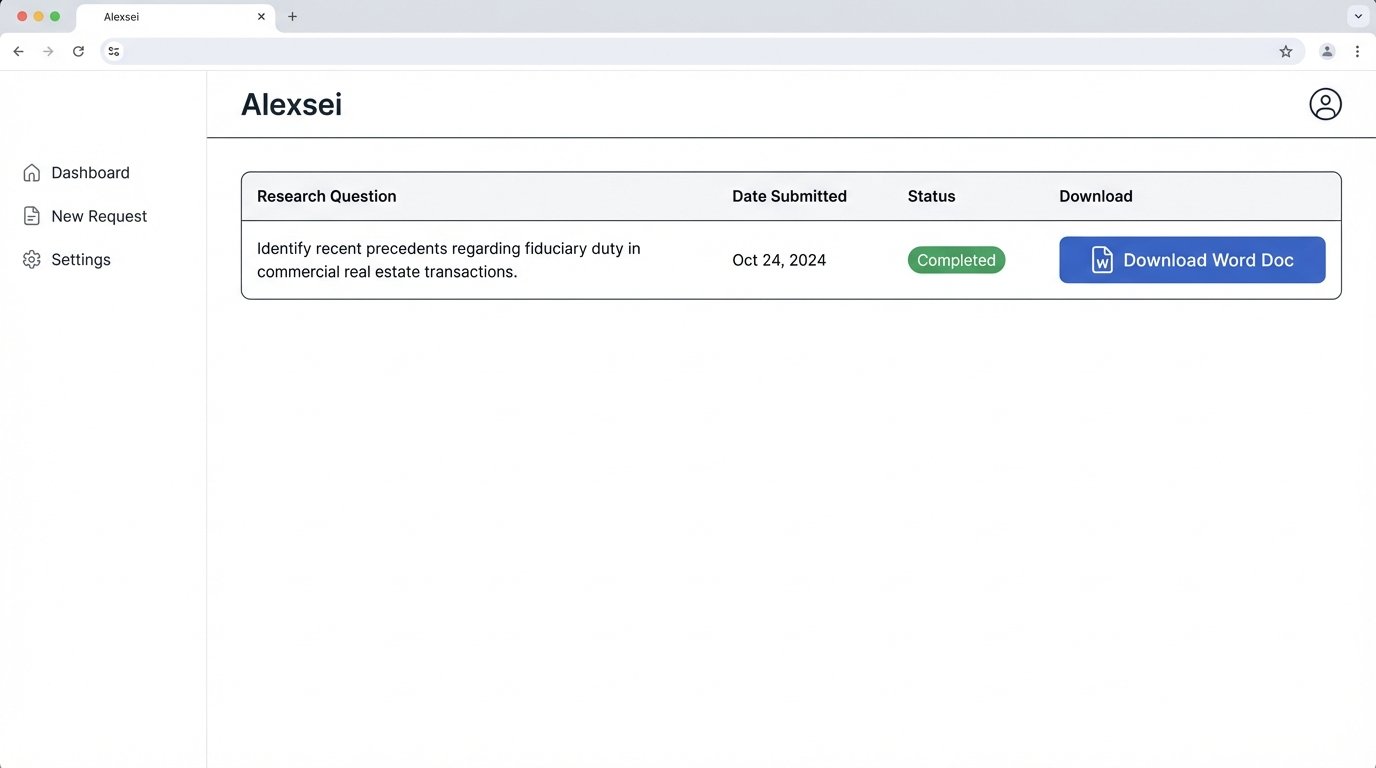

Alexsei is a different beast entirely. It’s not a search platform you use. It’s a service that produces a finished product: a legal research memo. You submit a question in plain English, and within a few hours, the system delivers a memo answering your question, complete with citations and analysis. It’s a closed service designed to replace a specific, time-consuming task performed by junior associates.

The goal is not to help you research faster, but to eliminate the need for you to do the research at all.

Core Functionality

The platform uses a combination of AI and human lawyers. An AI system performs the initial broad search and analysis, identifying potentially relevant cases and statutes. Then, a team of staff attorneys reviews, refines, and synthesizes the AI’s output into a coherent memo. This human-in-the-loop model is their solution to the AI hallucination and quality control problem. You’re not getting a raw AI dump; you’re getting a curated, human-validated work product.

This makes the output far more reliable than a pure generative AI tool. The memos are well-structured and directly address the question asked. It is an outsourced service masquerading as a tech platform.

Getting this to work requires a fundamental restructuring of how a firm thinks about delegation. It’s not a tool for an associate, but a replacement for one. Trying to jam this service into a traditional workflow is like trying to fit a square peg in a round hole by hitting it with a sledgehammer. It breaks the process.

Integration Headaches

There is no integration. The service is accessed through a web portal where you submit your question. The deliverable is a Word document or PDF. There is no API to submit questions, no way to get the results back as structured data, and no method for connecting it to a case management system. The workflow is entirely manual: download the memo, save it to the correct folder in your DMS, and update the case file.

This total lack of connectivity makes it an automation island. It might save associate hours on a specific task, but it adds manual administrative work and creates another data silo.

The Verdict

Alexsei is an intriguing model for small firms or solo practitioners who lack the staff to handle extensive research tasks. It provides a high-quality work product for a fixed price. However, for larger firms with established workflows and a focus on building an integrated tech stack, Alexsei is a non-starter. Its manual, disconnected nature runs counter to every principle of legal operations automation.