Most automated real estate funnels are brittle contraptions held together with API keys and optimism. They function until a lead source changes a field name without notice or a CRM hits its daily execution limit. The goal is not to build a perfect, linear path. The goal is to build a resilient system that anticipates failure, because failure is the default state of interconnected platforms.

These are not suggestions. They are the minimum requirements for an automation architecture that will let you sleep through the night.

Ingest and Sterilize Data First, Ask Questions Later

Your funnel starts with data ingestion, the most common point of fracture. You will be pulling from multiple MLS feeds, Zillow, Redfin, and maybe a dozen lead-gen form providers. Each source has its own schema, its own definition of “null,” and its own creative interpretation of address formatting. Do not pipe this raw data directly into your CRM or marketing platform.

The only sane approach is to force all incoming data through a staging layer. This could be a temporary database table or even a serverless function that exists only to normalize the payload. Its job is to strip junk characters, standardize address fields using a service like Google’s Geocoding API, and sanity-check for implausible values like a 1-bedroom, 9,000-square-foot house. This intermediate step decouples your core logic from the chaos of third-party sources.

Trying to normalize data from three different MLS feeds is like trying to sync three clocks that are all drifting at different rates. You’re not just setting the time, you’re constantly recalculating the drift.

Assume every API will eventually send you garbage. Build a wall against it.

Example: Raw vs. Cleaned Payload

A lead form might send a webhook with a payload that looks like a mess. Your normalization layer’s job is to fix it before it contaminates your system.

Incoming Payload (The Problem):

    {
      "first_name": " jon ",
      "lastName": "Doe",
      "phone": "(555) 867-5309",
      "email_address": "jon.doe@example.com",
      "property_interest": {
        "address": "123 main st, anytown",
        "zip": "90210"
      },
      "source": null
    }

Normalized Payload (The Solution):

    {
      "firstName": "Jon",
      "lastName": "Doe",
      "phone": "5558675309",
      "email": "jon.doe@example.com",
      "address": "123 Main St, Anytown, CA 90210",
      "source": "Unknown",
      "ingestionTimestamp": "2023-10-27T10:00:00Z"
    }

The normalized version has a single key-naming convention, consistent casing, a digits-only phone number for easier lookups, a full address string, a default value for the missing source, and an ingestion timestamp. This is non-negotiable work.
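A minimal sketch of such a normalization function, assuming the field names from the example payload. The `KEY_ALIASES` map and `normalize_lead` are hypothetical names, and the address step only concatenates the parts it was given; producing the full "Anytown, CA" form would still need a geocoding service.

```python
import re
from datetime import datetime, timezone
from typing import Optional

# Hypothetical alias map: source-specific keys -> one canonical key.
KEY_ALIASES = {
    "first_name": "firstName", "firstName": "firstName",
    "last_name": "lastName", "lastName": "lastName",
    "email_address": "email", "email": "email",
    "phone": "phone", "source": "source",
}


def normalize_lead(raw: dict, now: Optional[datetime] = None) -> dict:
    """Map a raw webhook payload onto one canonical lead schema."""
    lead = {}
    for key, value in raw.items():
        canonical = KEY_ALIASES.get(key)
        if canonical and isinstance(value, str):
            lead[canonical] = value.strip()

    # Naive casing rules; names like "McDonald" need smarter handling.
    for name_key in ("firstName", "lastName"):
        if name_key in lead:
            lead[name_key] = lead[name_key].title()
    if "email" in lead:
        lead["email"] = lead["email"].lower()
    if "phone" in lead:
        lead["phone"] = re.sub(r"\D", "", lead["phone"])  # digits only

    # Assumption: a geocoder would replace this simple concatenation.
    prop = raw.get("property_interest") or {}
    parts = [str(p).strip() for p in (prop.get("address"), prop.get("zip")) if p]
    if parts:
        lead["address"] = " ".join(parts)

    # Null, empty, or missing source all collapse to one default.
    lead["source"] = lead.get("source") or "Unknown"
    now = now or datetime.now(timezone.utc)
    lead["ingestionTimestamp"] = now.strftime("%Y-%m-%dT%H:%M:%SZ")
    return lead
```

Passing `now` explicitly keeps the function deterministic, which makes it easy to test against captured payloads from each source.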

Your Lead Scoring Model Is Probably Wrong

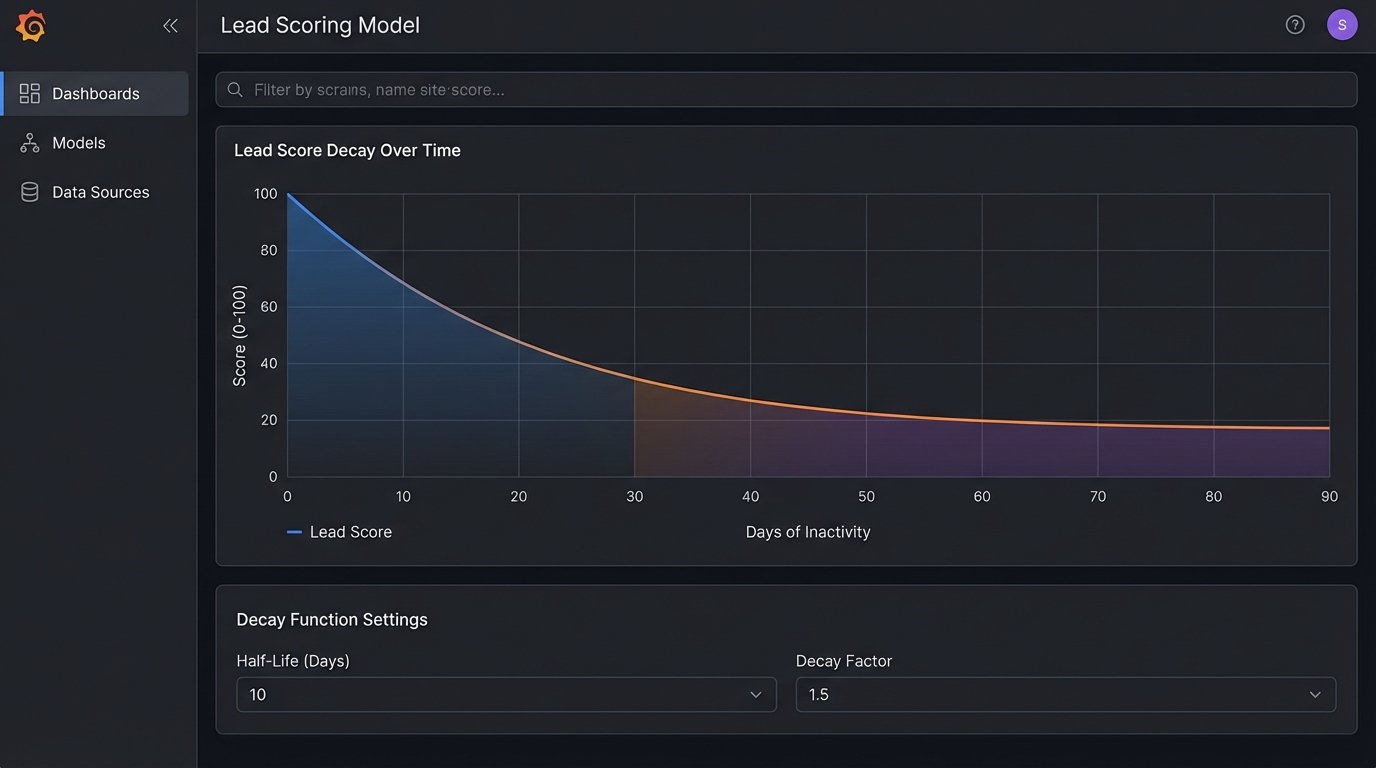

A static lead scoring model is useless. Awarding 10 points for a page view and 20 for a form submission ignores the most critical variable: time. A lead who visited your pricing page three times in the last hour is far more valuable than a lead who downloaded a whitepaper six months ago. Your scoring logic must incorporate a decay function.

Implement a system where a lead’s score diminishes over time. For example, a lead’s score might decrease by 1% every 24 hours of inactivity. This requires more compute resources, as you need a scheduled job to periodically re-evaluate scores across your database. The alternative is a sales team wasting cycles on leads that went cold weeks ago. This is a clear case where increased operational cost directly prevents wasted payroll.
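The 1%-per-day example can be sketched as a compounding decay. This is one possible reading of the rule, not the only one: `DAILY_DECAY` and `decayed_score` are illustrative names, and the sketch only counts full 24-hour periods of inactivity.

```python
from datetime import datetime, timedelta, timezone

DAILY_DECAY = 0.01  # 1% per full 24 hours of inactivity (the example rate)


def decayed_score(base_score: float, last_activity: datetime, now: datetime) -> float:
    """Return the score after compounding daily decay since the last activity."""
    # Whole days idle; clamp to zero in case of clock skew.
    idle_days = max((now - last_activity) // timedelta(days=1), 0)
    return base_score * (1 - DAILY_DECAY) ** idle_days
```

Because the score is derived from the base score and the last-activity timestamp, the scheduled job can recompute it at any time without accumulating rounding drift.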

Stop treating lead scores as a high-water mark. They are a volatile stock price reflecting current intent.

Build a State Machine, Not a Sequence

Most marketing automation platforms encourage you to build linear workflows: “If lead does X, then do Y, then wait 3 days, then do Z.” This model shatters the moment a lead behaves unpredictably. What happens if a lead in the “Long-Term Nurture” sequence suddenly requests a showing? Your linear workflow has no mechanism to gracefully move them backward or jump them to a different track.

Instead, structure your funnel as a state machine. A lead can exist in one of several defined states: `New`, `AttemptingContact`, `Contacted`, `Nurturing`, `Showing`, `UnderContract`, `Closed`, `Lost`. Every action a lead takes does not trigger a linear step. It triggers a potential state transition. A form submission might transition a lead from `Nurturing` to `AttemptingContact`. This architecture correctly models the messy, non-linear reality of a sales cycle.
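A minimal sketch of that idea as a transition table. The states come from the list above; the event names (`form_submitted`, `showing_requested`, and so on) and the specific transitions are assumptions for illustration, not a prescribed model.

```python
from enum import Enum


class LeadState(Enum):
    NEW = "New"
    ATTEMPTING_CONTACT = "AttemptingContact"
    CONTACTED = "Contacted"
    NURTURING = "Nurturing"
    SHOWING = "Showing"
    UNDER_CONTRACT = "UnderContract"
    CLOSED = "Closed"
    LOST = "Lost"


# (current state, event) -> next state. Event names are hypothetical.
TRANSITIONS = {
    (LeadState.NEW, "contact_attempted"): LeadState.ATTEMPTING_CONTACT,
    (LeadState.ATTEMPTING_CONTACT, "lead_replied"): LeadState.CONTACTED,
    (LeadState.CONTACTED, "went_quiet"): LeadState.NURTURING,
    (LeadState.CONTACTED, "showing_requested"): LeadState.SHOWING,
    (LeadState.NURTURING, "form_submitted"): LeadState.ATTEMPTING_CONTACT,
    (LeadState.NURTURING, "showing_requested"): LeadState.SHOWING,
    (LeadState.SHOWING, "offer_accepted"): LeadState.UNDER_CONTRACT,
    (LeadState.UNDER_CONTRACT, "deal_closed"): LeadState.CLOSED,
}

TERMINAL = {LeadState.CLOSED, LeadState.LOST}


def next_state(current: LeadState, event: str) -> LeadState:
    """Return the state after an event; unknown events leave the state unchanged."""
    if event == "marked_lost" and current not in TERMINAL:
        return LeadState.LOST
    return TRANSITIONS.get((current, event), current)
```

A lead in `Nurturing` who requests a showing jumps straight to `Showing` because the table says so, not because someone wired a special branch into a sequence.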

A linear sequence is a monorail. A state machine is a proper rail yard with switches and transfer tracks.

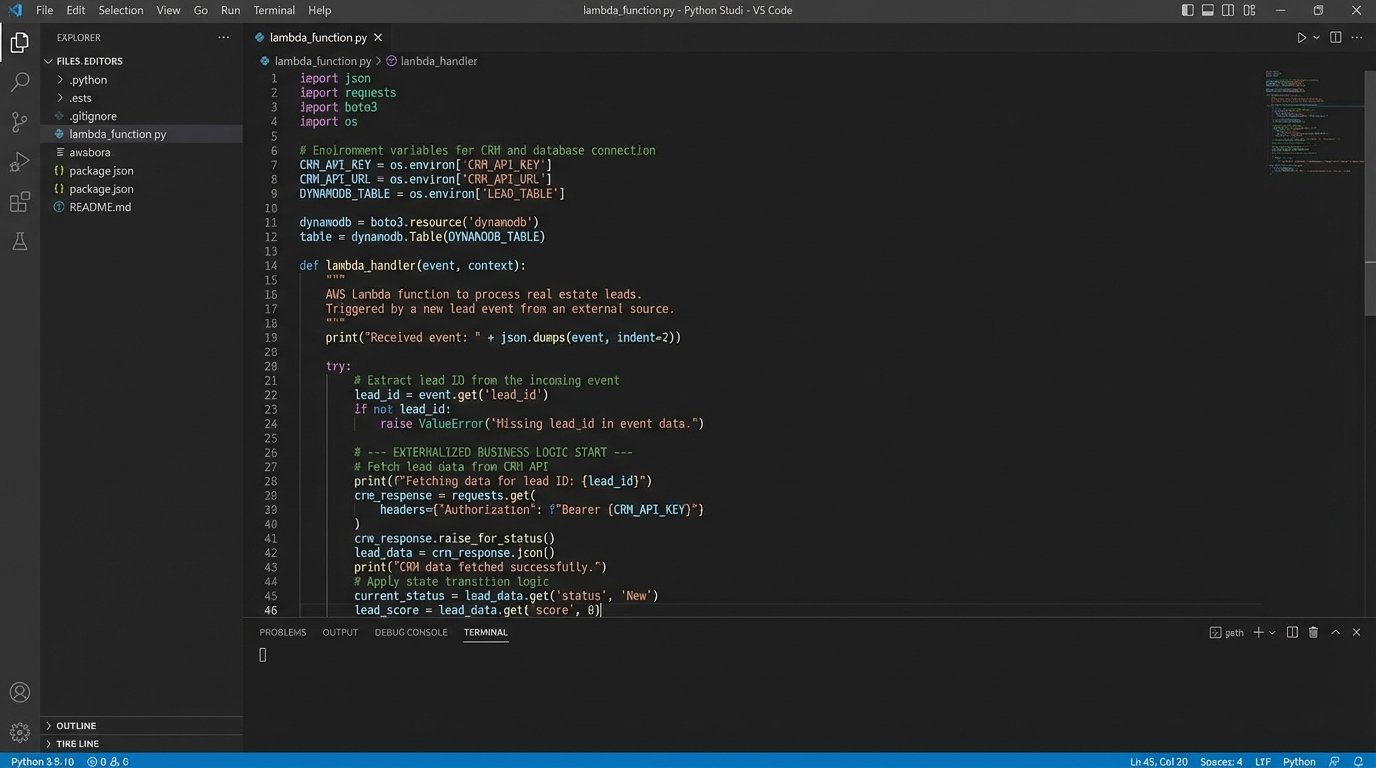

Externalize Core Logic from the CRM

This is where people get defensive. Your CRM’s built-in automation builder, whether it’s Salesforce Process Builder or HubSpot Workflows, is a trap. These tools are designed for simple tasks, but they are sluggish, have opaque execution limits, lack proper version control, and are a nightmare to debug when something goes wrong. They are a black box running on shared infrastructure you don’t control.

The superior architecture is to gut the automation logic from the CRM entirely. Use an external system like AWS Lambda or Google Cloud Functions to house your business rules. The CRM becomes a simple data store. Your Lambda function fetches data via API, executes your complex logic (lead scoring, state transitions, assignment rules), and then pushes the results back to the CRM via API. You get proper logging, version control via Git, and total control over the execution environment.
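The fetch, decide, push-back loop might look roughly like this. Everything here is a sketch under assumptions: `CrmClient` is a hypothetical interface rather than any vendor's SDK, `InMemoryCrm` is a stand-in for tests, and the threshold rule is a placeholder for real assignment logic.

```python
from typing import Protocol


class CrmClient(Protocol):
    """Hypothetical interface; a real one would wrap the CRM's REST API."""
    def get_lead(self, lead_id: str) -> dict: ...
    def update_lead(self, lead_id: str, fields: dict) -> None: ...


class InMemoryCrm:
    """Test double that satisfies CrmClient without any network calls."""
    def __init__(self, leads: dict):
        self.leads = leads

    def get_lead(self, lead_id: str) -> dict:
        return dict(self.leads[lead_id])

    def update_lead(self, lead_id: str, fields: dict) -> None:
        self.leads[lead_id].update(fields)


HOT_LEAD_THRESHOLD = 50  # placeholder value


def assign_queue(lead: dict) -> dict:
    """Pure business rule: no I/O, so it is trivial to unit test and version."""
    hot = lead.get("score", 0) >= HOT_LEAD_THRESHOLD
    return {"assignedQueue": "agent_queue" if hot else "nurture_queue"}


def process_lead(lead_id: str, crm: CrmClient) -> dict:
    """Fetch from the CRM, apply the rule, push the result back."""
    changes = assign_queue(crm.get_lead(lead_id))
    crm.update_lead(lead_id, changes)
    return changes
```

A serverless handler would then be a thin wrapper that builds the real client and calls `process_lead`; the rule itself never touches the CRM directly.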

This adds a point of failure, but it’s a point of failure you can actually monitor and fix. Relying on a CRM’s internal automation is like building your house on rented land.

Every Endpoint Must Be Idempotent

What happens if your webhook fires twice because of a network retry? Does the lead get two welcome emails? Is a duplicate opportunity created? If the answer is yes, your system is broken. Every action that modifies data or communicates with a lead must be idempotent. This means that receiving the same request multiple times has the same effect as receiving it once.

The standard way to enforce this is to attach a unique event ID to every trigger. When your automation receives a request, its first job is to check a log or database to see if that event ID has already been processed. If it has, the system returns a `200 OK` status but does no further work. If not, it processes the request and records the event ID. Make the check and the record atomic, for example with a unique constraint on the event ID, so that two simultaneous deliveries cannot both pass the check.
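One way to sketch the event-ID check, using SQLite's primary-key constraint as the deduplication log. The table name and the `handle_event` helper are hypothetical, and the in-memory database stands in for whatever durable store you actually use.

```python
import sqlite3
from typing import Callable


def make_store() -> sqlite3.Connection:
    """Create the processed-event log; the primary key enforces uniqueness."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE processed_events (event_id TEXT PRIMARY KEY)")
    return conn


def handle_event(conn: sqlite3.Connection, event_id: str, payload: dict,
                 action: Callable[[dict], None]) -> bool:
    """Run `action` at most once per event_id.

    Returns True if the event was processed, False if it was a duplicate.
    The caller can answer 200 OK in both cases.
    """
    try:
        with conn:  # commits on success, rolls back if anything raises
            conn.execute(
                "INSERT INTO processed_events (event_id) VALUES (?)", (event_id,)
            )
            action(payload)
    except sqlite3.IntegrityError:
        return False  # event_id already recorded: do no further work
    return True
```

Claiming the ID inside the same transaction as the work means a failed action rolls the claim back, so a later retry is still processed. Side effects outside the database, such as an email already sent, cannot be rolled back this way and need their own deduplication.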

This is not an optional feature for “high-volume” funnels. It is a fundamental requirement for any professional system.

Plan for API Rate Limits and Transient Errors

Every third-party API you integrate with has a rate limit. You will hit it. The question is whether your system panics and drops data or handles it gracefully. When you get a `429 Too Many Requests` response, your code should not simply fail. It should wait for the interval given in the `Retry-After` header or, when that header is absent, fall back to exponential backoff, doubling the wait after each failed attempt before retrying the request.
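A rough sketch of that retry policy. It assumes a hypothetical `send` callable that returns `(status, headers, body)`, and it only handles the seconds form of `Retry-After`; the header may also carry an HTTP date, which this sketch ignores in favor of plain backoff.

```python
import time
from typing import Callable, Optional, Tuple


def backoff_delay(attempt: int, retry_after: Optional[str] = None,
                  base: float = 1.0, cap: float = 60.0) -> float:
    """Seconds to wait before the next try (attempt is zero-based)."""
    if retry_after is not None:
        try:
            return max(float(retry_after), 0.0)
        except ValueError:
            pass  # not a number of seconds; fall through to backoff
    return min(cap, base * 2 ** attempt)


def call_with_retries(send: Callable[[], Tuple[int, dict, object]],
                      max_attempts: int = 5,
                      sleep: Callable[[float], None] = time.sleep):
    """Call `send` until it stops returning 429 or attempts run out."""
    for attempt in range(max_attempts):
        status, headers, body = send()
        if status != 429:
            return status, body
        if attempt < max_attempts - 1:
            sleep(backoff_delay(attempt, headers.get("Retry-After")))
    return status, body  # still rate limited after the final attempt
```

Injecting `sleep` keeps the loop testable without real waiting.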

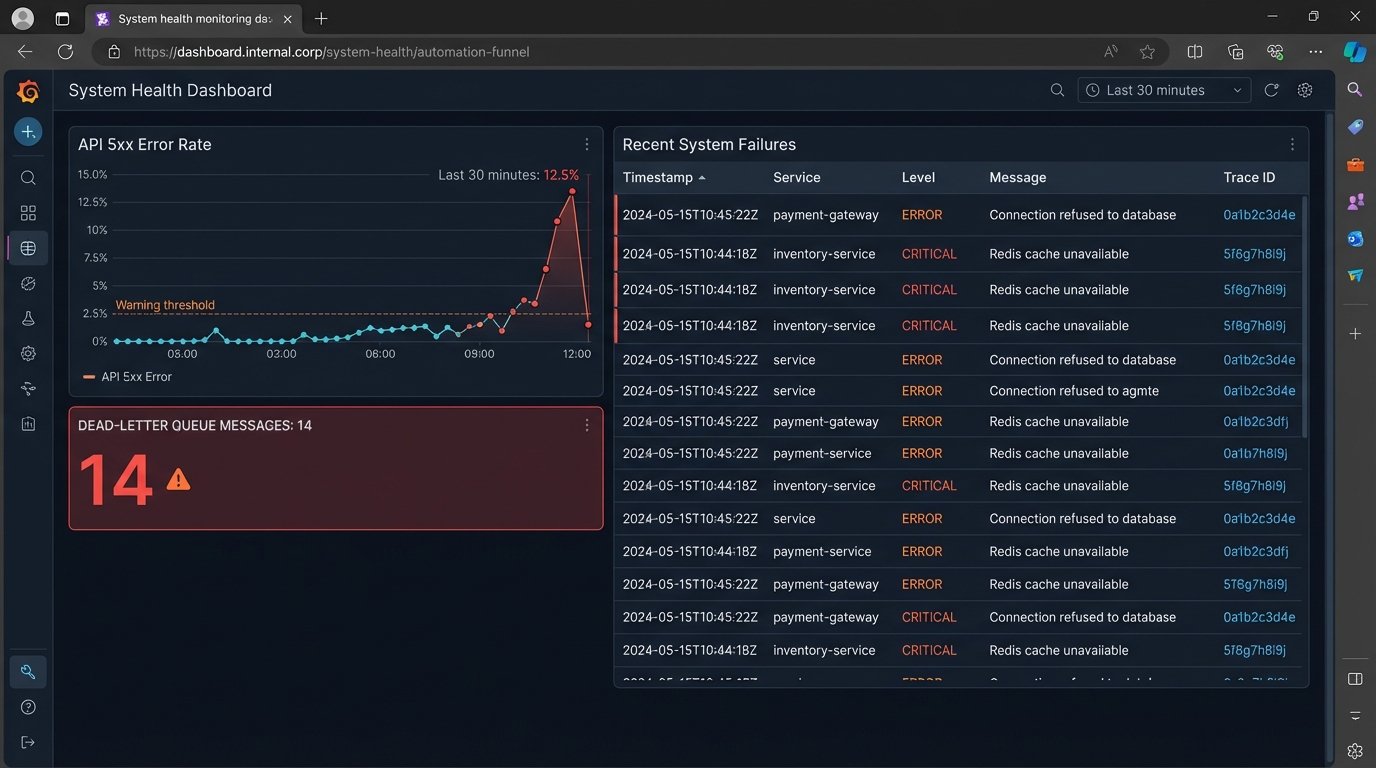

A funnel without deep monitoring is a black box. You’re shoving leads in one end and hoping money comes out the other, with no visibility into the grinding, breaking gears inside.

For persistent failures, you need a dead-letter queue (DLQ). If a webhook payload fails to process after a set number of retries (e.g., 5 attempts over 10 minutes), the entire payload and its metadata are shunted to a DLQ, like an SQS queue or a dedicated database table. This prevents data loss and creates a specific location for an engineer to investigate the malformed data or endpoint failure without halting the entire pipeline.
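The retry-then-park flow can be sketched like this. A plain Python list stands in for the SQS queue or database table, `DeadLetter` and `process_with_dlq` are hypothetical names, and the waits between attempts are left out to keep the sketch short.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class DeadLetter:
    payload: dict   # the full original payload, untouched
    error: str      # last error seen, kept for the investigating engineer
    attempts: int   # how many times processing was tried


def process_with_dlq(payload: dict, handler: Callable[[dict], None],
                     dlq: List[DeadLetter], max_attempts: int = 5) -> bool:
    """Try `handler` up to max_attempts times, then park the payload in the DLQ.

    Returns True on success, False if the payload was dead-lettered.
    """
    last_error = ""
    for _ in range(max_attempts):
        try:
            handler(payload)
            return True
        except Exception as exc:  # broad on purpose: any failure counts
            last_error = repr(exc)
    dlq.append(DeadLetter(payload=payload, error=last_error, attempts=max_attempts))
    return False
```

The pipeline keeps moving after a `False` return; the bad payload waits in the queue instead of blocking everything behind it.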

A webhook without a retry queue is like throwing a message in a bottle into the ocean. It might get there. It probably will not. And you will never know.

Aggressive, Granular Monitoring is Non-Negotiable

The “set it and forget it” mindset is a recipe for disaster. You need a dashboard, and that dashboard needs to track more than just lead volume. You need to monitor the health of the machine itself. Track API error rates by endpoint, focusing on spikes in 4xx and 5xx responses. Monitor the average execution time of your serverless functions. A sudden increase could signal a problem with a downstream API.

Set up specific alerts for failure conditions. An alert should fire if the number of messages in your dead-letter queue exceeds zero. An alert should fire if your primary ingestion function does not run successfully at least once per hour. You are looking for negative signals, the absence of good data, which is often the first sign of a silent failure.
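Those two alert conditions reduce to a small check that a scheduled job could run. This is a sketch under assumptions: `evaluate_alerts` is a hypothetical helper, and where the DLQ depth and last-success timestamp come from depends on your queue and logging setup.

```python
from datetime import datetime, timedelta, timezone
from typing import List, Optional


def evaluate_alerts(dlq_depth: int,
                    last_ingestion_success: Optional[datetime],
                    now: datetime,
                    max_gap: timedelta = timedelta(hours=1)) -> List[str]:
    """Return a message for every failure condition that currently holds."""
    alerts = []
    if dlq_depth > 0:
        alerts.append(f"dead-letter queue holds {dlq_depth} message(s), expected 0")
    # The negative signal: no recorded success inside the allowed window.
    if last_ingestion_success is None or now - last_ingestion_success > max_gap:
        alerts.append(f"no successful ingestion run within {max_gap}")
    return alerts
```

An empty list means both checks passed; anything else gets routed to whatever pages a human.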

Your monitoring system is the nervous system of your funnel. Without it, you are flying blind.