Most change management guides are written by people who have never had to revert a production database at 2 AM. They talk about buy-in and communication as abstract concepts. For us, change management is a technical discipline. It is the process of architecting user adoption to prevent system misuse, minimize support tickets, and ensure the automation actually survives its first contact with the legal department.

Failure to instrument change management correctly results in systems that are functionally perfect but operationally useless. The platform works, but nobody uses it correctly, leading to data corruption, bypassed workflows, and an eventual, quiet decommissioning. This is not about making people feel good. It is about reducing operational friction and failure points.

Deconstructing Sponsorship into Technical Prerequisites

Executive sponsorship is not a motivational email from a managing partner. It is a functional requirement for resource allocation and political shielding. Without it, your project will be starved of API access, stonewalled by IT security, and eventually deprioritized for the next shiny object. The sponsor’s role is to sign off on the risk inherent in altering a core legal process, not to cheerlead.

Your first step is to establish a project triad. This requires a technical lead (you), an operational lead (a senior paralegal or practice manager who understands the manual workflow), and the executive sponsor. The operational lead is your source for ground truth on exceptions and edge cases. The sponsor is your escalation path for clearing roadblocks. Without this structure, you are just building in a vacuum.

Get a signed charter. This document should explicitly define the project scope, the success metrics (e.g., reduce document generation time from 45 minutes to 3 minutes), and the key dependencies. Most importantly, it must list the systems you need access to, like the document management system API, the billing platform’s database, and the e-signature service. This charter is your political leverage when another department claims they are too busy to generate the API key you need.

This is not bureaucracy. It is a contract that prevents scope creep and resource denial later.

Communication as a Protocol, Not a Newsletter

Forget mass emails and intranet posts. Communication for an automation project is about versioning, dependency mapping, and structured feedback loops. Your audience is not a passive recipient. They are a distributed testing and validation network. Treating them as such requires specific channels and protocols.

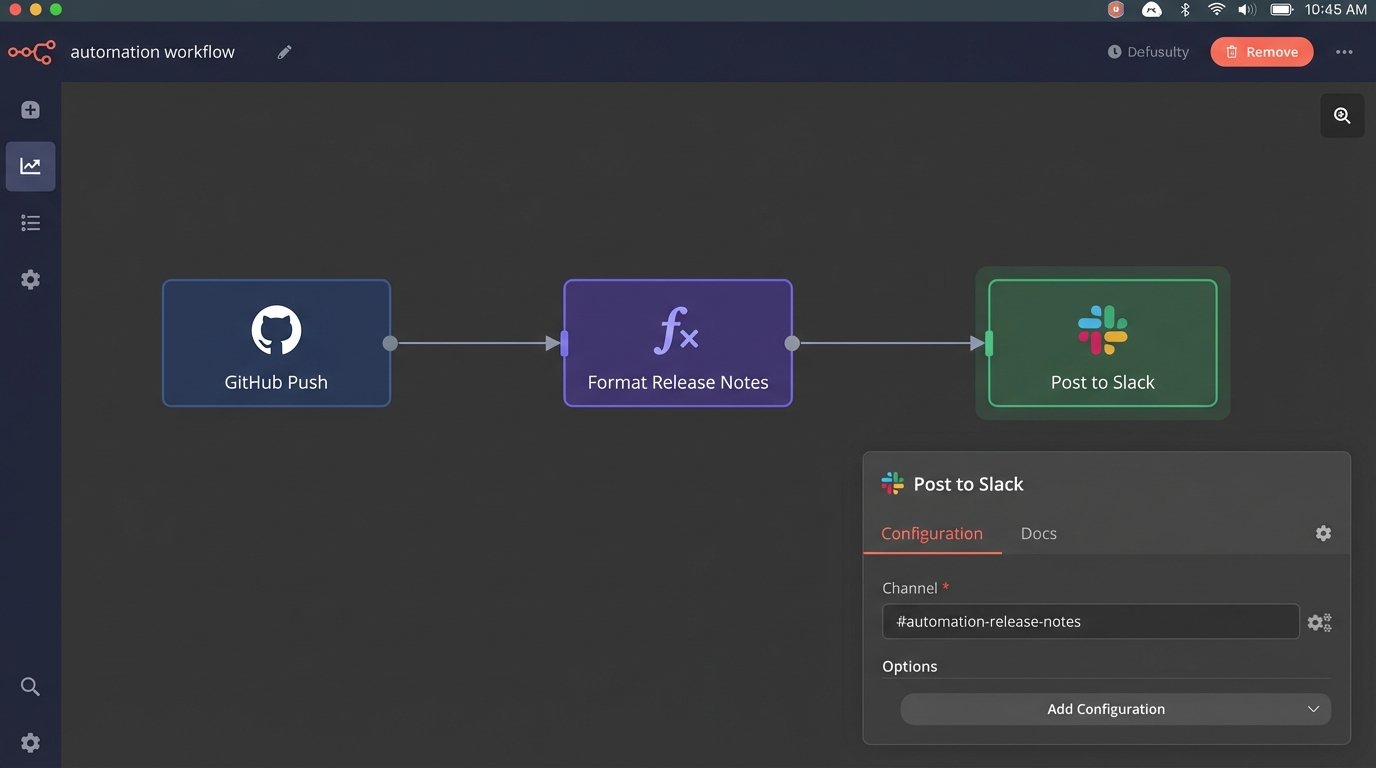

Establish two distinct communication channels immediately. The first is a broadcast-only channel, like a locked Teams or Slack channel named `#automation-release-notes`. This is for your team to post structured updates on deployments, schema changes, or planned downtime. The second is an interactive channel, `#automation-feedback`, where users can report issues and ask questions. Segregating these prevents critical release information from being buried under user chatter.

Every communication about a system change must follow a consistent format. Include the version number, a brief description of the change, the direct impact on user workflow, and the deployment time. This disciplined approach treats internal communication with the same seriousness as external API documentation. It forces clarity and creates a searchable history of the project’s evolution.

Your deployment pipeline should trigger these notifications automatically. A simple webhook can post a message to your release channel upon a successful production deploy. This is not complex to implement, but it is a critical piece of infrastructure for building trust and predictability. You are conditioning users to expect and understand change, not to be surprised by it.

Manual updates are forgotten updates.
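As a rough illustration, a deploy hook along these lines could format and post the release note. This is a minimal sketch, not a definitive implementation: `ReleaseNote`, `format_release_note`, `build_webhook_request`, and `post_release_note` are hypothetical names, the webhook URL is whatever your chat tool issues, and the `{"text": ...}` payload is the shape Slack incoming webhooks accept, so other tools may need a different body.

```python
import json
import urllib.request
from dataclasses import dataclass


@dataclass
class ReleaseNote:
    version: str          # e.g. "1.4.0"
    description: str      # brief description of the change
    workflow_impact: str  # direct impact on user workflow
    deployed_at: str      # deployment time, ISO 8601


def format_release_note(note: ReleaseNote) -> str:
    """Render the four required fields in a fixed, searchable layout."""
    return (
        f"Release {note.version}\n"
        f"Change: {note.description}\n"
        f"Workflow impact: {note.workflow_impact}\n"
        f"Deployed: {note.deployed_at}"
    )


def build_webhook_request(webhook_url: str, note: ReleaseNote) -> urllib.request.Request:
    """Build the HTTP POST. The {"text": ...} body is an assumption about the receiver."""
    body = json.dumps({"text": format_release_note(note)}).encode("utf-8")
    return urllib.request.Request(
        webhook_url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def post_release_note(webhook_url: str, note: ReleaseNote, timeout: float = 10.0) -> int:
    """Send the note and return the HTTP status code."""
    request = build_webhook_request(webhook_url, note)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status
```

Calling `post_release_note` as the last step of a successful production deploy keeps the release channel in sync without anyone remembering to write the update. Separating the formatting from the sending also lets you check the message layout without touching the network.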

User Acceptance Testing as a Code Review for Process

User Acceptance Testing (UAT) is the most critical phase of change management. It is not a formality or a box-checking exercise. It is the final integration test where your logic is stress-tested against the most unpredictable variable: human behavior. You are not testing if the code works. You are testing if the workflow is viable.

Select your UAT group with precision. Do not pick the most enthusiastic people. Pick the most skeptical and detail-oriented ones, including the senior paralegal who has been doing the same process manually for 15 years. Their job is to break your system. They will find the edge cases you never imagined, like a client name with special characters that breaks your string sanitization or a billing code that does not fit your database schema.

Structure UAT with explicit test scripts. Give testers a set of tasks to perform, with expected outcomes. For example, “Generate a non-disclosure agreement for a counterparty in Germany. Verify that the governing law clause is correctly populated.” Vague instructions like “try out the new system” yield useless feedback. The goal is to systematically validate every logical branch of the automation against real-world scenarios.
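One way to keep test scripts explicit is to store them as structured data instead of prose in an email. The sketch below is an assumption about how that might look: `UatCase`, `record_result`, and `summarize` are hypothetical names, the case IDs are made up, and the second case is an illustrative edge case, not one taken from a real script.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class UatCase:
    case_id: str
    task: str                      # what the tester is asked to do
    expected: str                  # the outcome they should verify
    actual: Optional[str] = None   # filled in by the tester
    passed: Optional[bool] = None  # None means the case has not been run


def record_result(case: UatCase, actual: str, passed: bool) -> None:
    """Store what the tester observed and whether it matched the expectation."""
    case.actual = actual
    case.passed = passed


def summarize(cases: list[UatCase]) -> dict[str, list[str]]:
    """Group case IDs by status so failures and untested branches are visible."""
    summary: dict[str, list[str]] = {"passed": [], "failed": [], "not_run": []}
    for case in cases:
        if case.passed is None:
            summary["not_run"].append(case.case_id)
        elif case.passed:
            summary["passed"].append(case.case_id)
        else:
            summary["failed"].append(case.case_id)
    return summary


# Hypothetical script: one case per logical branch of the automation.
cases = [
    UatCase(
        "NDA-DE-01",
        "Generate a non-disclosure agreement for a counterparty in Germany.",
        "The governing law clause is correctly populated.",
    ),
    UatCase(
        "NDA-SC-02",
        "Generate an NDA for a client whose name contains special characters.",
        "The client name appears unaltered in the generated document.",
    ),
]
```

The `not_run` bucket matters as much as `failed`: a branch nobody exercised during UAT is a branch that will be exercised for the first time in production.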

Running a proper UAT is like debugging a live system. You need to log everything. Track testers’ clicks, capture the error messages they hit, and document their feedback in a ticketing system like Jira or Azure DevOps. Every piece of feedback is a potential bug or a required design change. Ignoring it is choosing to deal with the problem in production, which is always more expensive.

Embedding SMEs to Prevent Logic Gaps

The biggest failures in legal automation occur when an engineer attempts to codify a process they do not fully understand. You cannot automate a legal workflow by reading a high-level process map. You must embed a Subject Matter Expert (SME) from the legal team directly into the development cycle.

This SME is not a stakeholder you interview once. They are a team member who participates in sprint planning and demos. Their function is to provide the nuanced business logic that never makes it into formal documentation. They know that a certain clause is required for clients in one industry but is a deal-breaker in another. They know which approval chain is the standard and which one is the undocumented exception for a specific high-value client. Trying to build the system without this continuous input is like shoving a firehose through the eye of a needle. You will force a massive amount of complexity through a narrow, poorly defined model, and the result is a high-pressure mess.

Building logic based on assumptions is just a structured way to be wrong.

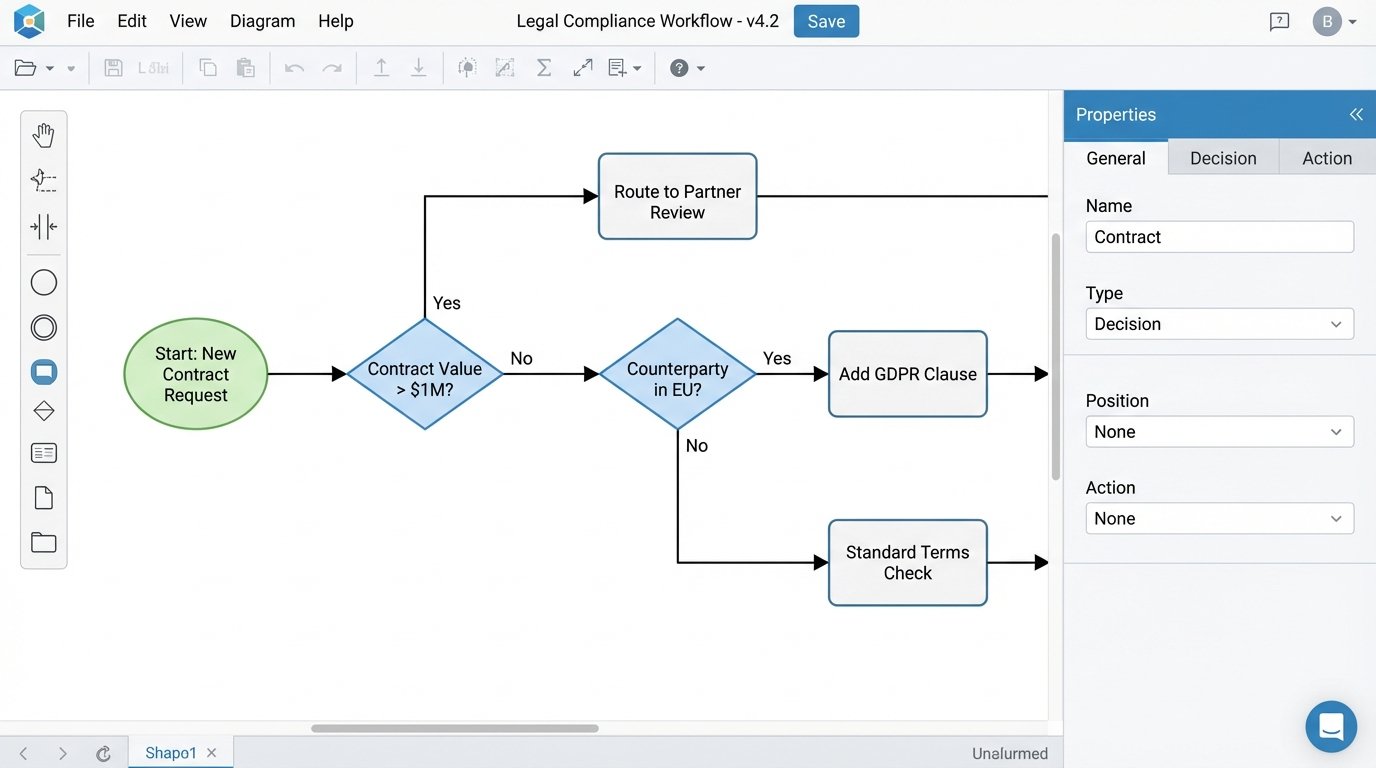

The SME’s primary role is to help you model exceptions. The “happy path” of a process is easy to automate. The value of the system is in how it handles the 20% of cases that are not standard. Your code must account for these variations. The SME is the only person who can define the conditions for these logic gates. This requires mapping out their decision-making process into a state machine or a decision tree before writing a single line of code.
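A decision tree of this kind can be captured as an ordered list of rules before any workflow code exists. The following is a minimal sketch under stated assumptions: the `Matter` fields, the rule names, the client ID, the industries, the 50,000 cutoff, and the approval chain labels are all hypothetical placeholders for what the SME would actually supply.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Matter:
    client_id: str
    industry: str
    contract_value: int


@dataclass(frozen=True)
class Rule:
    name: str
    applies: Callable[[Matter], bool]  # the condition for this logic gate
    approval_chain: str


# Hypothetical rules, ordered from most specific to most general.
RULES = [
    Rule("named-client exception", lambda m: m.client_id == "CLIENT-0042", "partner_direct"),
    Rule("regulated industry", lambda m: m.industry in {"healthcare", "banking"}, "compliance_then_counsel"),
    Rule("standard", lambda m: m.contract_value < 50_000, "paralegal_team"),
]

# Anything the rules do not cover goes to a human instead of a guess.
FALLBACK_CHAIN = "manual_triage"


def decide(matter: Matter) -> tuple[str, str]:
    """Return (rule name, approval chain) for the first rule that matches."""
    for rule in RULES:
        if rule.applies(matter):
            return rule.name, rule.approval_chain
    return "no rule matched", FALLBACK_CHAIN
```

Returning the rule name alongside the result is a deliberate choice: it lets the SME review why a matter was routed the way it was, and any matter that lands in the fallback is a gap in the model to take back to them.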

Configuration Files: The First Line of Defense Against Hardcoded Failure

Change management continues long after deployment. A system that requires a developer to change a line of code every time a new user is added or an approval threshold is updated is a failed system. It creates a bottleneck and is brittle. You must externalize all operational variables into configuration files.

Instead of hardcoding a list of approvers for a contract, you put it in a JSON or YAML file that a non-technical administrator can edit. This decouples the operational logic from the application code. It means the head of the litigation department can adjust their team’s review thresholds without submitting a ticket and waiting for a full development cycle.

A simple configuration file might look like this:

    ---
    # Contract Approval Workflow Configuration
    # Last Updated: 2023-10-27 by j.doe
    # This file controls routing and value thresholds. Do not edit without clearance.
    workflows:
      - name: "Standard NDA"
        value_threshold: 0
        requires_legal_review: false
        approval_group: "paralegal_team"
      - name: "Master Service Agreement"
        value_threshold: 50000
        requires_legal_review: true
        approval_group: "senior_counsel"
      - name: "High-Value Contracts"
        value_threshold: 1000000
        requires_legal_review: true
        approval_group: "partner_review_board"
    user_groups:
      paralegal_team:
        - "user1@firm.com"
        - "user5@firm.com"
      senior_counsel:
        - "user2@firm.com"
      partner_review_board:
        - "user9@firm.com"
        - "user11@firm.com"

Your application simply reads this configuration on startup. This approach transforms a code change request into a simple text file edit. It empowers the legal operations team to manage their own workflows, which is the entire point of automation.
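Because non-developers edit this file, it is worth validating it at startup before trusting it. The sketch below assumes the file has already been parsed into a dictionary (for YAML, something like PyYAML's `yaml.safe_load` would do that); `validate_config` and `approvers_for` are hypothetical helpers, and the inline `CONFIG` simply mirrors the example file.

```python
REQUIRED_KEYS = ("name", "value_threshold", "requires_legal_review", "approval_group")


def validate_config(config: dict) -> list[str]:
    """Return human-readable problems. An empty list means the file is usable."""
    problems = []
    groups = config.get("user_groups") or {}
    for index, workflow in enumerate(config.get("workflows") or []):
        label = workflow.get("name", f"workflows[{index}]")
        for key in REQUIRED_KEYS:
            if key not in workflow:
                problems.append(f"{label}: missing '{key}'")
        group = workflow.get("approval_group")
        # An approval group that is undefined or has no members would stall routing.
        if group is not None and not groups.get(group):
            problems.append(f"{label}: approval group '{group}' is undefined or empty")
    return problems


def approvers_for(config: dict, workflow_name: str) -> list[str]:
    """Look up the approver addresses for a named workflow."""
    for workflow in config["workflows"]:
        if workflow["name"] == workflow_name:
            return list(config["user_groups"][workflow["approval_group"]])
    raise KeyError(f"unknown workflow: {workflow_name}")


# The parsed form of the example file above.
CONFIG = {
    "workflows": [
        {"name": "Standard NDA", "value_threshold": 0,
         "requires_legal_review": False, "approval_group": "paralegal_team"},
        {"name": "Master Service Agreement", "value_threshold": 50000,
         "requires_legal_review": True, "approval_group": "senior_counsel"},
        {"name": "High-Value Contracts", "value_threshold": 1000000,
         "requires_legal_review": True, "approval_group": "partner_review_board"},
    ],
    "user_groups": {
        "paralegal_team": ["user1@firm.com", "user5@firm.com"],
        "senior_counsel": ["user2@firm.com"],
        "partner_review_board": ["user9@firm.com", "user11@firm.com"],
    },
}
```

Refusing to start when `validate_config` returns problems turns a typo in a group name into an immediate, readable error instead of a contract that silently routes to nobody.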

Architecting for Graceful Degradation and Auditing

Your new automation does not exist in a vacuum. It depends on other systems: the DMS, the CRM, the billing API. These dependencies will fail. Change management means planning for these failures. The system must be designed to degrade gracefully, not to crash.

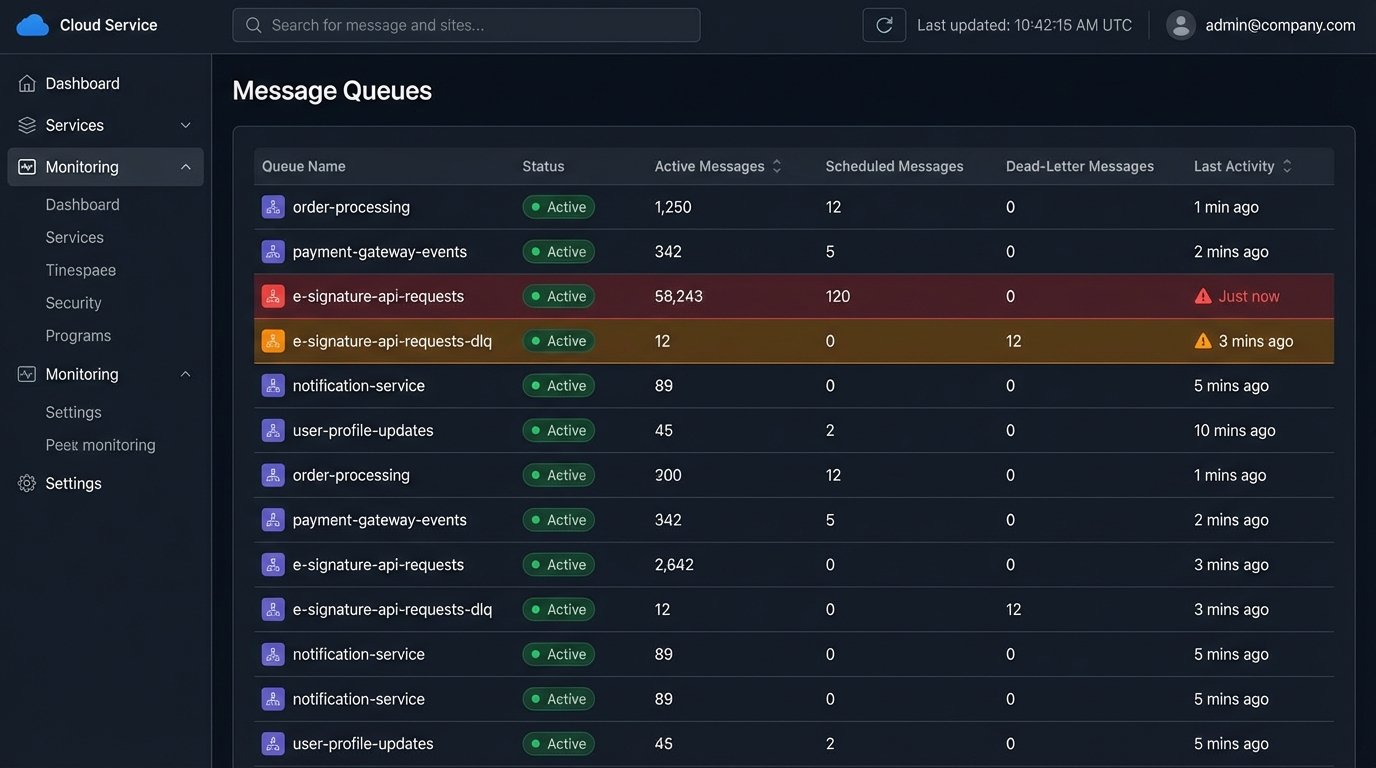

If the e-signature API is down, does the entire contract generation process halt? A well-architected system would instead queue the document, tag it as “pending signature,” and notify an operator. It maintains the integrity of the overall process while isolating the point of failure. This requires building explicit error handling and fallback logic for every external API call.

Build queues and dead-letter channels for every critical integration point. When an API call fails after a set number of retries, the payload is shunted to a separate queue for manual inspection. This prevents data loss and gives you a clear worklist of failed transactions to investigate. Without this, failed jobs can simply disappear, silently breaking the process.
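The retry-then-dead-letter pattern can be sketched in a few lines. This is an illustration under assumptions, not a production design: `DeadLetterQueue` and `call_with_retries` are hypothetical names, the queue here lives in memory (a real one would need durable storage), and the retry count and backoff delays are arbitrary defaults.

```python
import time
from collections import deque
from typing import Callable


class DeadLetterQueue:
    """In-memory stand-in for a durable dead-letter queue."""

    def __init__(self) -> None:
        self.items: deque = deque()

    def put(self, payload: dict, error: str, attempts: int) -> None:
        self.items.append({"payload": payload, "error": error, "attempts": attempts})


def call_with_retries(
    send: Callable[[dict], None],
    payload: dict,
    dead_letters: DeadLetterQueue,
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Try `send` up to max_attempts times, then park the payload for manual review."""
    last_error = ""
    for attempt in range(1, max_attempts + 1):
        try:
            send(payload)
            return True
        except Exception as exc:  # a real client would catch narrower error types
            last_error = repr(exc)
            if attempt < max_attempts:
                # Exponential backoff: base_delay, then 2x, 4x, ...
                sleep(base_delay * 2 ** (attempt - 1))
    dead_letters.put(payload, last_error, max_attempts)
    return False
```

The payload is kept together with the last error and the attempt count, so whoever works the dead-letter list can see what failed and why without digging through logs.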

Every action taken by the automation must be logged to an immutable audit trail. When a contract is approved, log which user triggered the action, which version of the approval logic was executed, and a timestamp. This is not just for debugging. It is a core compliance and defensibility requirement. When a partner asks why a contract was sent out without their review, you need to be able to provide a definitive, system-of-record answer, not a guess.
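One common way to approximate an immutable trail in application code is a hash chain, where each entry includes the hash of the one before it. The sketch below is an assumption about how that might be done, with hypothetical names (`append_entry`, `verify_chain`) and an in-memory list standing in for storage. It makes tampering detectable; it does not prevent it, so true immutability would still depend on write-once storage or a similar control.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Optional

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(entry: dict) -> str:
    """Hash a canonical JSON form so key order cannot change the result."""
    canonical = json.dumps(entry, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def append_entry(
    log: list,
    user: str,
    action: str,
    logic_version: str,
    timestamp: Optional[str] = None,
) -> dict:
    """Append an entry that records who acted, what ran, and when."""
    entry = {
        "user": user,
        "action": action,
        "logic_version": logic_version,
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    entry["hash"] = _digest(entry)  # computed before the hash key is added
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Return False if any entry was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {key: value for key, value in entry.items() if key != "hash"}
        if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True
```

Recording `logic_version` with every entry is what lets you answer the partner's question precisely: not only who approved the contract and when, but which version of the approval rules was in force at that moment.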

These practices are not optional. They are the difference between a scalable, trusted automation platform and a tool that creates more problems than it solves. The goal is to build a system that manages change on its own, with minimal human intervention. That is the only way it provides lasting value.