10 Emerging Real Estate Automation Trends in 2025

Most real estate tech is a patchwork of brittle APIs and glorified spreadsheets. The industry runs on manual data entry and email chains that belong in a museum. The goal for 2025 is not to add more dashboards. The goal is to gut the underlying processes and replace them with systems that don’t require a human to babysit them at 2 AM.

1. Hyper-Personalized Lead Scoring Engines

Standard lead scoring is a joke. It’s a fixed ruleset that treats a CEO looking for a penthouse the same as a student browsing rentals, just with a different point value. The shift is toward ML models that ingest behavioral data, social signals, and public records to generate a dynamic probability score. We’re talking about tracking clickstream data on your site, scraping public LinkedIn profiles, and cross-referencing property tax records to build a feature set for a gradient boosting model.

The technical benefit is a surgically precise focus for sales teams. Instead of chasing a list of 100 “hot” leads, they target the 5 leads with a 95% probability of transacting within 30 days. This lets you bypass the entire top-of-funnel noise and directly engage high-intent prospects. It also means you can automate the nurturing of low-probability leads instead of wasting a salesperson’s time.
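The routing step itself is trivial once a model hands you a probability per lead. Here’s a minimal sketch, assuming the scores already exist. The `ScoredLead` class, the `route_leads` function, the 0.90 threshold, and the sample scores are all hypothetical and only there to illustrate the split between the sales queue and automated nurturing.

```python
from dataclasses import dataclass


@dataclass
class ScoredLead:
    lead_id: str
    p_transact_30d: float  # assumed model output: probability of transacting within 30 days


def route_leads(leads, sales_threshold=0.90, max_sales_leads=5):
    """Split scored leads into a short sales queue and an automated nurture queue."""
    ranked = sorted(leads, key=lambda lead: lead.p_transact_30d, reverse=True)
    sales = [lead for lead in ranked if lead.p_transact_30d >= sales_threshold]
    sales = sales[:max_sales_leads]
    sales_ids = {lead.lead_id for lead in sales}
    # Everything that did not make the sales cut goes to automated nurturing.
    nurture = [lead for lead in ranked if lead.lead_id not in sales_ids]
    return sales, nurture


leads = [
    ScoredLead("a", 0.97),
    ScoredLead("b", 0.12),
    ScoredLead("c", 0.91),
    ScoredLead("d", 0.55),
]
sales_queue, nurture_queue = route_leads(leads)
print([lead.lead_id for lead in sales_queue])    # ['a', 'c']
print([lead.lead_id for lead in nurture_queue])  # ['d', 'b']
```

The hard part is everything upstream of this function: the features, the training data, and keeping the scores calibrated.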

The catch is data decay and feature engineering hell. The signals that predict a sale today are useless in six months. You are forced to constantly retrain the model and hunt for new data sources. This isn’t a one-time build. It’s a full-time data science commitment that becomes a permanent operational expense.

2. Autonomous Transaction Coordination via State Machines

Transaction coordinators are the human routers of the real estate world. They push documents, send reminders, and track deadlines. This entire workflow can be modeled as a finite state machine. Each stage of the transaction, from “Offer Accepted” to “Financing Approved” to “Closed,” is a state. The transitions are triggered by webhooks from various platforms: the e-signature service, the lender’s portal, the title company’s API.
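A stripped-down sketch of that state machine might look like the following. The three states come straight from the stages named above; the event names (`lender.financing_approved`, `title.closing_recorded`) and the `Transaction` class are invented for illustration, and a real workflow would have far more states and side effects.

```python
from enum import Enum


class State(Enum):
    OFFER_ACCEPTED = "Offer Accepted"
    FINANCING_APPROVED = "Financing Approved"
    CLOSED = "Closed"


# (current state, event) -> next state. Event names are hypothetical.
TRANSITIONS = {
    (State.OFFER_ACCEPTED, "lender.financing_approved"): State.FINANCING_APPROVED,
    (State.FINANCING_APPROVED, "title.closing_recorded"): State.CLOSED,
}


class Transaction:
    def __init__(self, transaction_id):
        self.transaction_id = transaction_id
        self.state = State.OFFER_ACCEPTED
        self.history = [self.state]

    def handle_event(self, event_name):
        """Apply a webhook event; reject anything not valid for the current state."""
        next_state = TRANSITIONS.get((self.state, event_name))
        if next_state is None:
            raise ValueError(
                f"{event_name!r} is not valid in state {self.state.value!r}"
            )
        self.state = next_state
        self.history.append(next_state)
        return next_state


txn = Transaction("txn-001")
txn.handle_event("lender.financing_approved")
txn.handle_event("title.closing_recorded")
print(txn.state.value)  # Closed
```

Keeping the transitions in a plain lookup table means an out-of-order webhook fails loudly instead of silently corrupting the deal’s status.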

Building this strips out the single greatest point of failure: human memory. An automated system doesn’t forget to send the earnest money reminder or follow up on the inspection report. It validates document submissions and flags missing signatures instantly, not hours later. This can cut days off the close and sharply reduces the risk of deadline-related contract failures.

The problem is the lack of API standards. Every title company, lender, and municipality has its own proprietary, often poorly documented, API. Your state machine ends up being a grotesque collection of custom adapters and fragile parsers. One unannounced endpoint change from a vendor can shatter the entire closing process for dozens of transactions.
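One way to contain the damage is to put every vendor behind its own adapter that emits a single canonical event shape, and to fail loudly when a payload stops matching. This is a sketch under assumptions: the vendor names, payload shapes, and canonical event names below are all made up, since no real vendor API is specified here.

```python
def adapt_title_vendor_a(payload):
    # Assumed shape: {"file_no": "T-100", "evt": "CLOSE_RECORDED"}
    events = {"CLOSE_RECORDED": "title.closing_recorded"}
    return {
        "transaction_id": payload["file_no"],
        "event": events[payload["evt"]],
    }


def adapt_lender_vendor_b(payload):
    # Assumed shape: {"loanRef": "T-100", "status": {"code": "APPROVED"}}
    events = {"APPROVED": "lender.financing_approved"}
    return {
        "transaction_id": payload["loanRef"],
        "event": events[payload["status"]["code"]],
    }


ADAPTERS = {
    "title_vendor_a": adapt_title_vendor_a,
    "lender_vendor_b": adapt_lender_vendor_b,
}


def normalize(source, payload):
    """Translate a vendor payload into a canonical event, failing loudly on drift."""
    adapter = ADAPTERS.get(source)
    if adapter is None:
        raise ValueError(f"No adapter registered for {source!r}")
    try:
        return adapter(payload)
    except (KeyError, TypeError) as exc:
        # A missing or renamed field usually means the vendor changed its schema.
        raise ValueError(
            f"Payload from {source!r} no longer matches its adapter: {exc!r}"
        ) from exc


event = normalize(
    "lender_vendor_b", {"loanRef": "T-100", "status": {"code": "APPROVED"}}
)
print(event)  # {'transaction_id': 'T-100', 'event': 'lender.financing_approved'}
```

This doesn’t stop vendors from breaking you. It just turns a silent failure into an alert on one adapter instead of a mystery across every open transaction.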

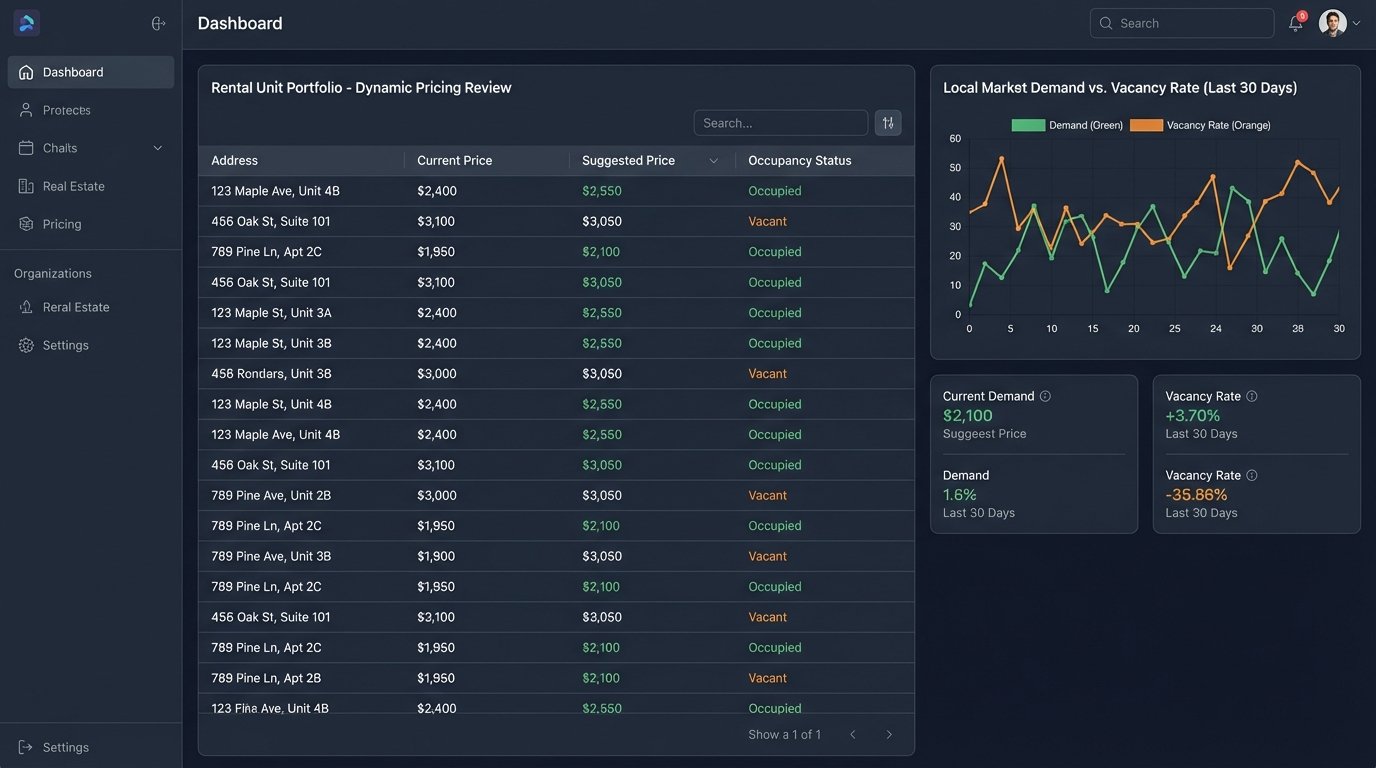

3. Dynamic Pricing Engines for Large-Scale Rentals

Managing rental pricing across a portfolio of thousands of units with a spreadsheet is financial malpractice. The new standard is automated, dynamic pricing engines that adjust rates daily or even hourly. These systems continuously scrape competitor pricing, analyze local demand signals from sources like Airbnb, and factor in internal data like unit vacancy rates and lead flow velocity. It’s time-series forecasting applied to property management.
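To make the mechanics concrete, here is a deliberately crude, rule-based stand-in for the forecasting model: nudge the rate toward the competitor median, adjust for vacancy, and cap the daily move. The function name `suggest_rate` and every constant in it (the halfway blend, the 5% target vacancy, the 2% daily cap) are illustrative assumptions, not tuned values.

```python
from statistics import median


def suggest_rate(current_rate, competitor_rates, vacancy_rate,
                 target_vacancy=0.05, max_daily_change=0.02):
    """Suggest a new rate from competitor prices and portfolio vacancy."""
    market = median(competitor_rates)
    # Move halfway toward the market median.
    proposed = current_rate + 0.5 * (market - current_rate)
    # Vacancy above target pushes the price down; below target pushes it up.
    proposed *= 1 - (vacancy_rate - target_vacancy)
    # Cap the change so prices move in small steps.
    floor = current_rate * (1 - max_daily_change)
    ceiling = current_rate * (1 + max_daily_change)
    return round(min(max(proposed, floor), ceiling), 2)


# Tight market, low vacancy: the suggestion hits the +2% cap.
print(suggest_rate(2000, [2100, 2150, 2050], vacancy_rate=0.03))
# Soft market, high vacancy: the suggestion hits the -2% floor.
print(suggest_rate(2000, [1900, 1950, 1850], vacancy_rate=0.12))
```

A production engine would replace the middle of this function with a demand forecast, but the guardrails around it (caps, floors, rounding) tend to survive.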

The immediate result is yield optimization. You capture maximum revenue during peak season and reduce vacancy during lulls by making small, data-driven price adjustments. A 2-point increase in occupancy across a 1,000-unit portfolio means 20 more units paying rent every month, which is not a small number. These systems react to market shifts faster than any human team possibly could.

This is a constant war against anti-bot measures. Your scraping infrastructure will be blocked, challenged with CAPTCHAs, and fed bad data. You need a sophisticated proxy network and parsers that can handle constant changes to target websites. Expect to spend a significant budget just on keeping the data pipelines from getting shut down.

4. Predictive Maintenance Scheduling from IoT Sensors

Preventative maintenance is based on a calendar. Predictive maintenance is based on reality. By embedding low-cost IoT sensors in critical infrastructure like HVAC units, water heaters, and roofing, property managers can collect real-time operational data. We’re talking vibration analysis, temperature fluctuations, and pressure readings streamed to a central platform for anomaly detection.

The benefit is a massive reduction in emergency repair costs. Instead of a call at midnight about a flooded apartment from a burst water heater, the system flags an anomalous pressure drop a week earlier and automatically schedules a technician. This transforms a capital-intensive, reactive process into a predictable, proactive operational workflow. It also provides an auditable data trail for insurance claims.
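The simplest version of that anomaly check is a rolling z-score: compare each new reading against the trailing window and flag anything that deviates too far. The sketch below assumes evenly spaced pressure readings; the function name, window size, threshold, and sample data are all invented for illustration.

```python
from statistics import mean, stdev


def find_anomalies(readings, window=5, z_threshold=3.0):
    """Return indices of readings that deviate sharply from the trailing window."""
    flagged = []
    for i in range(window, len(readings)):
        baseline = readings[i - window:i]
        mu, sigma = mean(baseline), stdev(baseline)
        if sigma == 0:
            continue  # perfectly flat baseline: z-score is undefined, skip
        z = (readings[i] - mu) / sigma
        if abs(z) >= z_threshold:
            flagged.append(i)
    return flagged


# Hypothetical water heater pressure readings in psi; the drop starts at index 7.
pressure_psi = [50.1, 49.9, 50.0, 50.2, 49.8, 50.0, 50.1, 44.0, 43.5]
print(find_anomalies(pressure_psi))  # [7]
```

Note that only the first bad reading is flagged: once the drop enters the trailing window it inflates the baseline’s variance. That is fine for triggering a work order, but a real system would track the anomaly until someone resolves it.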

Hardware is the anchor dragging this down. The sensors are cheap, but deploying and maintaining thousands of them across a distributed portfolio of properties is an expensive logistical nightmare. Battery life, network connectivity in basements, and physical damage are constant issues. The software is the easy part. The physical layer is the wallet-drainer.

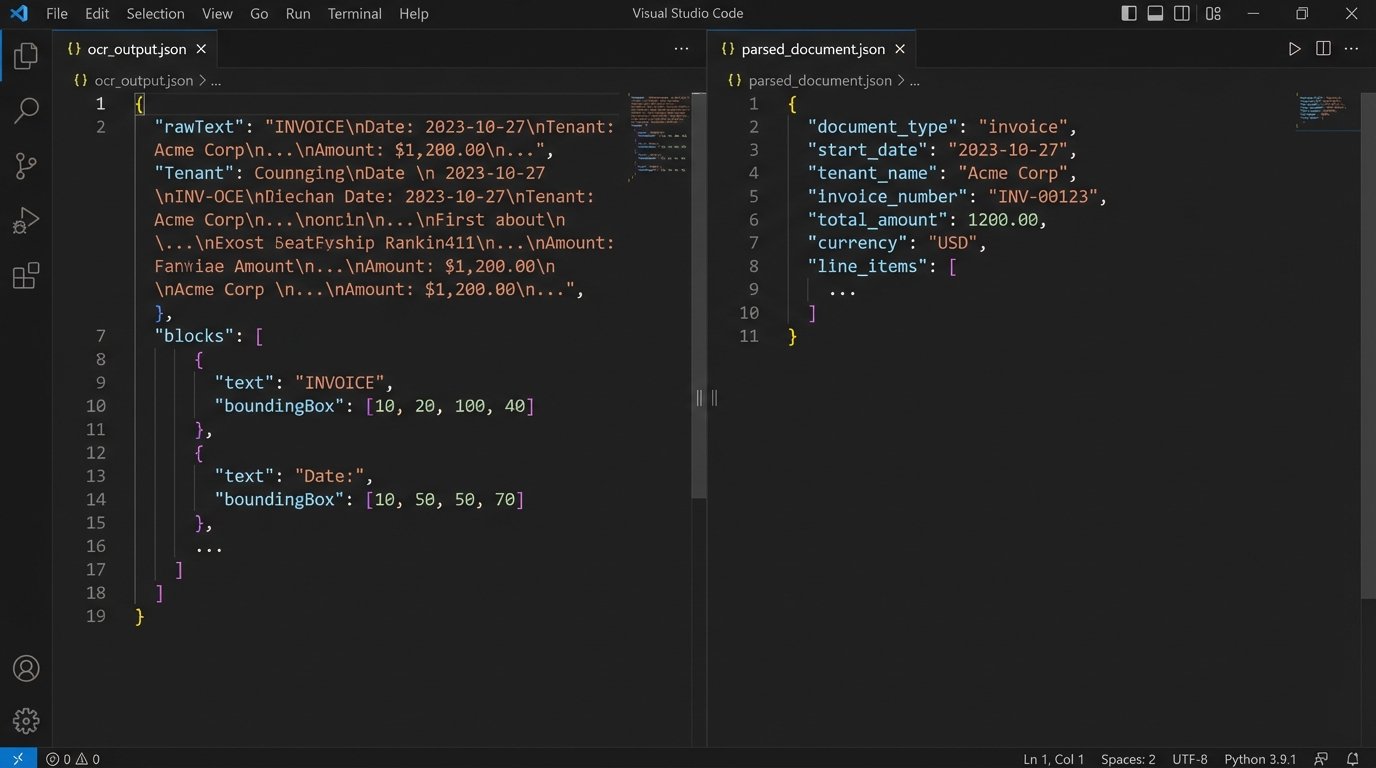

5. AI-Driven Document Abstraction and Validation

Real estate runs on PDFs: purchase agreements, leases, inspection reports, title commitments. Manually extracting key data points like names, dates, and financial figures is slow and error-prone. The trend is to use a combination of Optical Character Recognition (OCR) and Natural Language Processing (NLP) models to automatically parse these documents and structure the data into a usable format like JSON.

This is about speed and data integrity. An automated system can process a 50-page lease agreement in seconds, extracting every critical date and clause. It then injects this data directly into the property management or transaction system, eliminating typos. You can build workflows that automatically flag non-standard clauses or missing addenda, which is impossible at scale with manual review.

The accuracy is never 100%. A poorly scanned document or an unusual legal phrase can confuse the model. You cannot fully remove the human. The only safe architecture is a human-in-the-loop system where the AI makes the first pass and a paralegal or coordinator validates the extracted data. Anyone promising a fully autonomous “lights-out” document processing system is selling snake oil.

Here’s a simplified Python example of how you might force structure onto the chaotic output from a generic OCR service for a lease document.

```python
import json

# Hypothetical raw JSON output from an OCR/NLP service
raw_ocr_output = {
    "document_id": "lease_doc_8374",
    "confidence_score": 0.89,
    "blocks": [
        {"type": "text", "content": "Lease Start Date: January 1, 2025"},
        {"type": "text", "content": "Tenant: John Doe"},
        {"type": "table", "content": "Monthly Rent: $2,500.00"},
        {"type": "text", "content": "Security Deposit: $2,500.00"},
        {"type": "text", "content": "Term: 12 months"}
    ]
}


def parse_lease_data(ocr_json):
    """
    Strips key-value pairs from a messy OCR output.
    This is intentionally simple. A real-world parser needs
    regex, date normalization, and fuzzy matching.
    """
    structured_data = {}
    key_mapping = {
        "lease start date": "start_date",
        "tenant": "tenant_name",
        "monthly rent": "rent_monthly",
        "security deposit": "deposit_amount"
    }

    for block in ocr_json.get("blocks", []):
        # Lowercasing makes key matching case-insensitive,
        # but it also lowercases the extracted values.
        content = block.get("content", "").lower()
        if ":" in content:
            key, value = content.split(":", 1)
            clean_key = key.strip()
            if clean_key in key_mapping:
                field_name = key_mapping[clean_key]
                structured_data[field_name] = value.strip()

    return structured_data


parsed_data = parse_lease_data(raw_ocr_output)
print(json.dumps(parsed_data, indent=2))

# Expected Output:
# {
#   "start_date": "january 1, 2025",
#   "tenant_name": "john doe",
#   "rent_monthly": "$2,500.00",
#   "deposit_amount": "$2,500.00"
# }
```
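The next step is turning those strings into typed values and deciding when a human has to look. The sketch below is self-contained and assumes input shaped like the parser output above. The `normalize_lease_fields` function and the 0.95 review threshold are hypothetical; pick a cutoff based on your own measured error rates.

```python
import re
from datetime import datetime
from decimal import Decimal, InvalidOperation

REVIEW_THRESHOLD = 0.95  # assumed cutoff, not a recommendation


def normalize_lease_fields(parsed, confidence):
    """Convert string fields to typed values and decide if a human must review."""
    result = dict(parsed)
    issues = []

    # Date normalization: "january 1, 2025" -> "2025-01-01"
    try:
        raw_date = parsed["start_date"].title()
        result["start_date"] = datetime.strptime(raw_date, "%B %d, %Y").date().isoformat()
    except (KeyError, ValueError):
        issues.append("start_date")

    # Currency normalization: "$2,500.00" -> Decimal("2500.00")
    for field in ("rent_monthly", "deposit_amount"):
        digits = re.sub(r"[^\d.]", "", parsed.get(field, ""))
        try:
            result[field] = Decimal(digits)
        except InvalidOperation:
            issues.append(field)

    needs_review = bool(issues) or confidence < REVIEW_THRESHOLD
    return result, issues, needs_review


sample = {
    "start_date": "january 1, 2025",
    "tenant_name": "john doe",
    "rent_monthly": "$2,500.00",
    "deposit_amount": "$2,500.00",
}
clean, issues, needs_review = normalize_lease_fields(sample, confidence=0.89)
print(clean["start_date"], clean["rent_monthly"], needs_review)
# 2025-01-01 2500.00 True
```

Every field parsed cleanly here, yet the document still goes to review because the OCR confidence of 0.89 sits below the threshold. That is the human-in-the-loop rule expressed as one boolean.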

6. Generative Floor Plan Creation from 3D Scans

Creating accurate floor plans is a manual, time-consuming process. The new approach uses 3D scanning technology, often just a modern smartphone’s LiDAR sensor, to capture a point cloud of a property’s interior. A generative model, such as a generative adversarial network (GAN) trained on scan data, then interprets this point cloud and automatically generates clean, dimensionally accurate 2D floor plans.

The operational benefit is immense. An agent can scan a 5-bedroom house in 30 minutes and have a marketing-ready floor plan generated automatically within the hour. This bypasses the need for specialized drafters and third-party services, radically compressing the time it takes to get a new listing live. The consistency and accuracy of the output also exceed manual measurement.

This is computationally expensive. Training these generative models requires a massive dataset and significant GPU resources. Running the inference to generate a single floor plan isn’t trivial either. This is not something you run on a local machine. It’s a cloud-based, pay-per-use service that can become a serious cost center if you have high listing volume.

7. Composable Architectures Replacing Monolithic CRMs

The all-in-one real estate CRM is a dinosaur. It does ten things poorly instead of one thing well. The smart move is toward a composable, event-driven architecture. This involves using a lean, headless CRM as the core data store and bridging it with best-in-class microservices for specific functions: a dedicated email marketing platform, a separate showing scheduler, a specialized transaction manager. Everything is connected through a message queue like RabbitMQ or Kafka.

This approach gives you flexibility and power. You can swap out a tool that isn’t performing without having to rip out your entire tech stack. If a better lead routing engine comes along, you just remap the API calls and events. It’s the opposite of being locked into a single vendor’s sluggish, feature-bloated ecosystem. Bolting together a dozen different engine parts and expecting a race car sounds insane, but with a solid event bus, it’s more resilient than a monolithic black box.
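The swap-a-component claim is easier to see in code. Below is a toy in-memory event bus standing in for RabbitMQ or Kafka; it is not how either broker’s client API works, and the `EventBus` class, topic name, and router functions are all invented for illustration.

```python
from collections import defaultdict


class EventBus:
    """Toy in-memory pub/sub; a stand-in for a real message broker."""

    def __init__(self):
        self._subscribers = defaultdict(list)

    def subscribe(self, topic, handler):
        self._subscribers[topic].append(handler)

    def unsubscribe(self, topic, handler):
        self._subscribers[topic].remove(handler)

    def publish(self, topic, event):
        # Copy the list so handlers can (un)subscribe during delivery.
        for handler in list(self._subscribers.get(topic, [])):
            handler(event)


bus = EventBus()
sent = []


def legacy_router(event):
    sent.append(("legacy_router", event["lead_id"]))


def new_router(event):
    sent.append(("new_router", event["lead_id"]))


bus.subscribe("lead.created", legacy_router)
bus.publish("lead.created", {"lead_id": "lead-1"})

# Swap the routing engine without touching the publisher.
bus.unsubscribe("lead.created", legacy_router)
bus.subscribe("lead.created", new_router)
bus.publish("lead.created", {"lead_id": "lead-2"})

print(sent)  # [('legacy_router', 'lead-1'), ('new_router', 'lead-2')]
```

The CRM publishing `lead.created` never learns that the router changed. That decoupling is the whole point, and it only holds as long as the event schema stays stable.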

The trade-off is complexity. You are now the system integrator. You are responsible for the uptime, security, and data consistency between all these disparate services. A single misconfigured webhook or a schema change in one service can create a cascade of failures. The “integration tax” is very real and paid with your engineering team’s time.

8. Automated Underwriting with Alternative Data Sources

Relying solely on a FICO score for mortgage or rental underwriting is an outdated model. The forward-thinking approach is to build underwriting models that ingest alternative data. This includes verified rental payment history, utility payment records, and even cash flow analysis from bank account data pulled through aggregators like Plaid. The system pulls this data via APIs and feeds it into a risk model.
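As a rough illustration of what “cash flow analysis” means as model input, the sketch below turns a list of bank transactions into a handful of features. It makes no real API calls; the `(month, amount)` transaction format, the `cash_flow_features` function, and the feature names are assumptions chosen for the example.

```python
def cash_flow_features(transactions, monthly_rent):
    """Summarize (month, amount) pairs; positive amounts are inflows."""
    if not transactions:
        raise ValueError("Need at least one transaction")

    by_month = {}
    for month, amount in transactions:
        inflow, outflow = by_month.get(month, (0.0, 0.0))
        if amount >= 0:
            inflow += amount
        else:
            outflow += -amount
        by_month[month] = (inflow, outflow)

    months = len(by_month)
    avg_inflow = sum(i for i, _ in by_month.values()) / months
    avg_net = sum(i - o for i, o in by_month.values()) / months
    return {
        "avg_monthly_inflow": avg_inflow,
        "avg_monthly_net": avg_net,
        # Undefined when there is no income at all.
        "rent_to_income": monthly_rent / avg_inflow if avg_inflow else None,
        "negative_months": sum(1 for i, o in by_month.values() if i < o),
    }


transactions = [
    ("2025-01", 5000), ("2025-01", -4200),
    ("2025-02", 5000), ("2025-02", -5300),
    ("2025-03", 5000), ("2025-03", -4000),
]
features = cash_flow_features(transactions, monthly_rent=1500)
print(features["avg_monthly_net"], features["negative_months"])  # 500.0 1
```

Features this simple are also easy to explain, which matters once a regulator or a rejected applicant asks why the model said no.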

The goal is to expand the pool of qualified applicants. Many creditworthy individuals are invisible to traditional credit bureaus. By analyzing actual cash flow and payment history, lenders and landlords can get a more accurate picture of risk and approve applicants who would otherwise be automatically rejected. This is a direct route to more closed deals.

Compliance and data privacy are a minefield here. You must navigate a complex web of regulations like the Fair Credit Reporting Act (FCRA). The data sources must be vetted, and the model’s decision-making process must be explainable to regulators. One wrong step can lead to crippling fines and lawsuits.

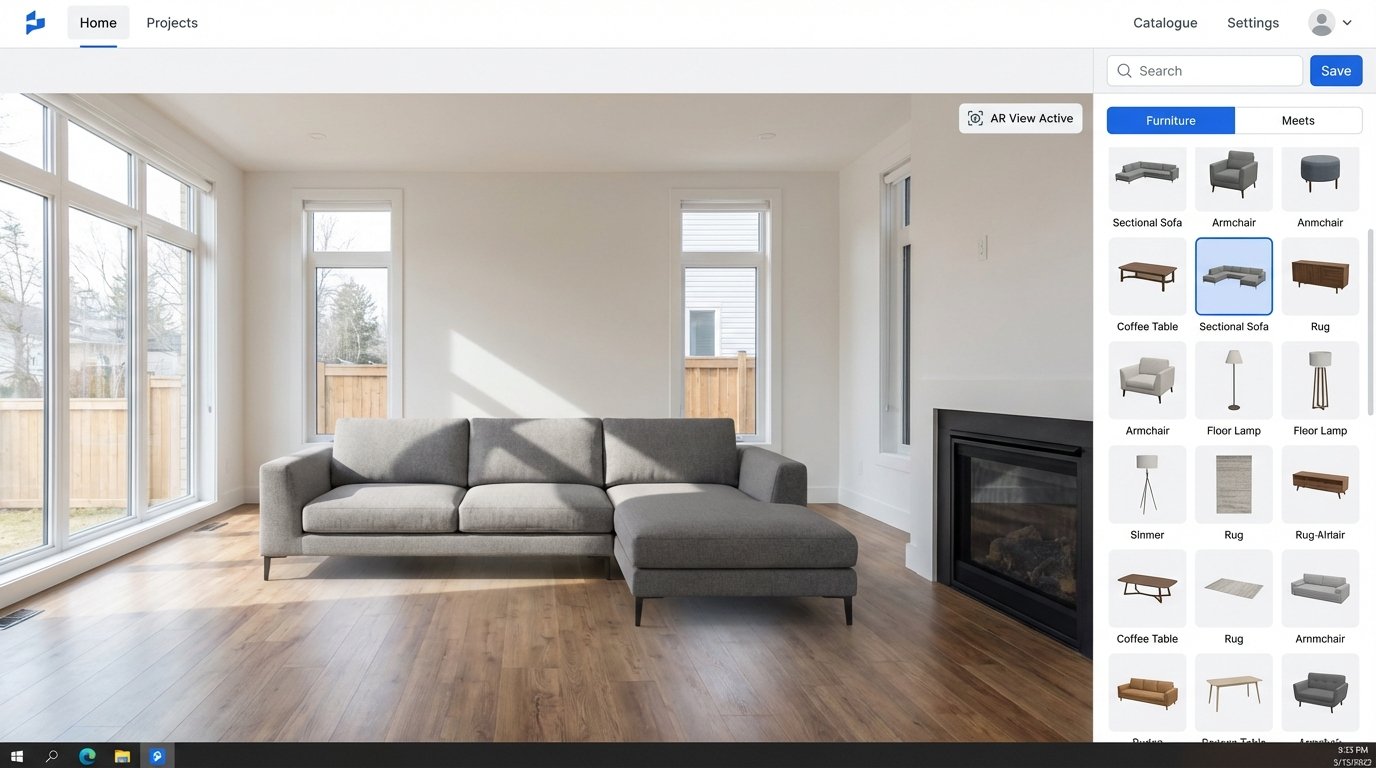

9. On-Demand Virtual Staging via AR APIs

Physically staging a home is expensive and slow. Virtual staging has been around for a while, but it was a manual Photoshop process. The trend is to automate this using Augmented Reality APIs. The system takes photos or a 3D scan of an empty room and allows a user to drag and drop 3D furniture models into the space in real time. The system handles the lighting, shadows, and perspective automatically.

This is about marketing velocity. You can create a dozen different design styles for a single listing and A/B test which one gets more engagement. You can furnish a 20-unit apartment building for a marketing campaign without buying a single piece of furniture. It transforms a major capital expense into a minor operational one.

Performance is the bottleneck. Rendering photorealistic 3D models with accurate lighting in real time over a web interface is demanding. It requires significant client-side processing power or a robust server-side rendering setup. The user experience on older devices or slow connections can be sluggish and clunky, defeating the entire purpose.

10. Smart Contracts for Title and Escrow Verification

The process of title verification and holding funds in escrow is a bastion of manual checks, paper documents, and centralized trust. The theoretical application of blockchain is to replace this with a self-executing smart contract on a distributed ledger. The contract holds the digital asset (the deed) and the funds, releasing them automatically when all pre-defined conditions, like inspection approval and funding confirmation, are met on-chain.
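The release logic is simple enough to simulate off-chain. The Python below is not smart-contract code and touches no ledger; it only models the “release when every condition is met” rule. The `EscrowContract` class and its condition names are hypothetical, taken from the two example conditions above.

```python
class EscrowContract:
    """Off-chain simulation of conditional release; not real on-chain code."""

    REQUIRED = ("inspection_approved", "funding_confirmed")

    def __init__(self, buyer, seller):
        self.buyer = buyer
        self.seller = seller
        self.conditions = {name: False for name in self.REQUIRED}
        self.released = False
        # Until release, the contract itself holds both assets.
        self.deed_holder = "escrow"
        self.funds_holder = "escrow"

    def confirm(self, condition):
        """Mark a condition as met and release if nothing is outstanding."""
        if condition not in self.conditions:
            raise KeyError(f"Unknown condition: {condition}")
        self.conditions[condition] = True
        if not self.released and all(self.conditions.values()):
            self.released = True
            self.deed_holder = self.buyer
            self.funds_holder = self.seller
        return self.released


escrow = EscrowContract(buyer="buyer-1", seller="seller-1")
print(escrow.confirm("inspection_approved"))  # False
print(escrow.confirm("funding_confirmed"))    # True
print(escrow.deed_holder, escrow.funds_holder)  # buyer-1 seller-1
```

Notice what the sketch takes for granted: someone trustworthy has to call `confirm`. Getting real-world facts like an inspection result onto a ledger is exactly the part the code cannot solve.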

The theoretical benefit is a frictionless, transparent transaction. It removes the need for multiple intermediary institutions, each taking a cut and adding delays. All parties have visibility into the transaction’s status on an immutable ledger, which should reduce fraud and disputes. The process is governed by code, not by people.

The reality is a disaster. Public blockchain adoption is non-existent in the legal and financial institutions that underpin real estate. There is no standard for digitizing property titles, and the legal framework to recognize a smart contract as a binding deed transfer is missing in almost every jurisdiction. For now, this remains a solution chasing a problem that the existing legacy systems, for all their flaws, already solve.