5 Ways Workflow Automation Improves Brokerage Coordination

Brokerage coordination fails when data handoffs are manual. An agent forgets to update the CRM, a compliance officer misses an email, and a multimillion-dollar deal stalls because someone is on vacation. The root cause is almost always a broken chain of information custody. Workflow automation does not fix people; it bypasses the points where people are forced to perform low-value, repetitive data transfers between disconnected systems.

The goal is to build digital connective tissue between sales, legal, compliance, and accounting. This is not about buying a single platform that claims to do everything. It is about writing specific, trigger-based scripts and configuring API integrations that force systems to talk to each other without a human acting as a translator. What follows are five tactical applications of this principle.

1. Ingesting and Normalizing Inbound Client Data

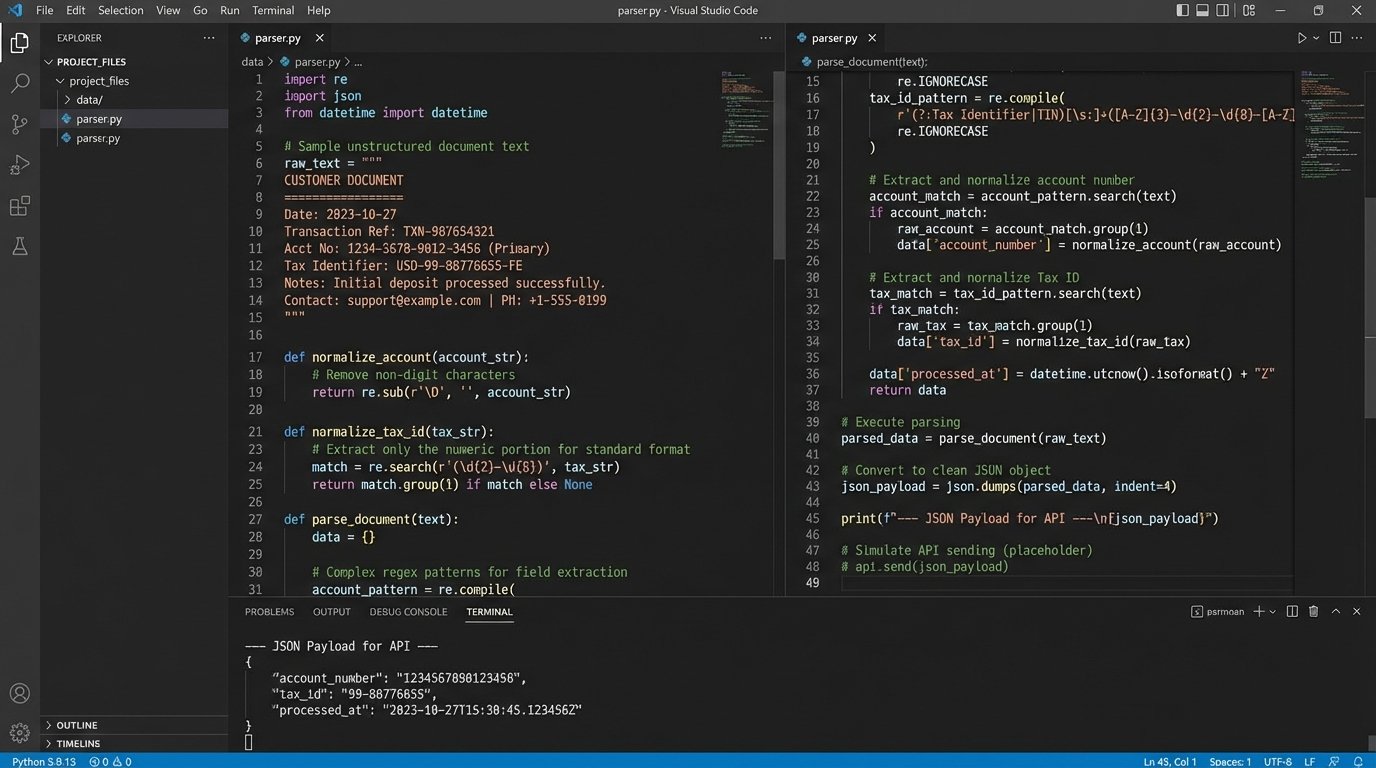

Client onboarding begins with a data dump. You receive PDFs, scanned documents, and spreadsheets filled with inconsistent formatting. Manually keying this information into a central system is a direct path to data corruption. Automation scripts can intercept these files from a dedicated email inbox or cloud storage folder, strip the relevant data, and inject it into the primary CRM or database with a consistent schema.

For PDF and image files, optical character recognition (OCR) libraries like Tesseract are the starting point. The script extracts the raw text, but the output is a mess. The real work involves applying regular expressions to find and isolate specific fields. We build patterns to locate account numbers, addresses, and tax IDs within the unstructured text block. This process is brittle. A small change in the source document’s layout will break the regex pattern, requiring a fix.
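As a minimal sketch of the pattern-matching step, assume the OCR pass has already produced a raw text string (for example through a Tesseract wrapper, which is not invoked here). The field names, label wording, and patterns below are hypothetical; each real document layout would need its own set.

```python
import re

# Hypothetical patterns; every document layout needs its own set.
FIELD_PATTERNS = {
    "account_number": re.compile(
        r"Account\s*(?:No\.?|Number)\s*[:#]?\s*(\d{6,12})", re.IGNORECASE
    ),
    "tax_id": re.compile(r"Tax\s*ID\s*[:#]?\s*(\d{2}-\d{7})", re.IGNORECASE),
    "zip_code": re.compile(r"\b(\d{5})(?:-\d{4})?\b"),
}


def extract_fields(ocr_text: str) -> dict:
    """Return the first match for each known field, or None when a pattern misses."""
    fields = {}
    for name, pattern in FIELD_PATTERNS.items():
        match = pattern.search(ocr_text)
        fields[name] = match.group(1) if match else None
    return fields


sample_text = """
ACME Brokerage Client Intake
Account Number: 00123456
Tax ID: 12-3456789
Address: 42 Harbor St, Portland, OR 97201
"""

extracted = extract_fields(sample_text)
# Fields that came back as None signal a layout change and should be routed to a human.
missing = [name for name, value in extracted.items() if value is None]
```

Returning `None` for a missed field, rather than raising, lets the pipeline log exactly which pattern broke when a source layout changes.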

Once the data is extracted, it must be normalized. This involves standardizing date formats, capitalizing names, and validating state abbreviations against a master list. The script then packages the cleaned data into a JSON object and POSTs it to the CRM’s API endpoint for creating a new client record. The entire chain is logged, so if a record is created with bad data, we can trace it back to the specific source file and the script version that processed it.
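The normalization rules can be sketched roughly as follows. The accepted date layouts, the shortened state list, and the record field names are all assumptions for illustration, and the final POST is left out so the example stays self-contained.

```python
import json
from datetime import datetime

# Assumed subset of a master list; a real list would cover every valid code.
VALID_STATES = {"CA", "NY", "OR", "TX", "WA"}
# Date layouts this sketch accepts, tried in order.
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y")


def normalize_date(raw: str) -> str:
    """Convert a date in any accepted layout to ISO 8601 (YYYY-MM-DD)."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"Unrecognized date format: {raw!r}")


def normalize_client(raw: dict) -> dict:
    """Apply the normalization rules and return a CRM-ready record."""
    state = raw["state"].strip().upper()
    if state not in VALID_STATES:
        raise ValueError(f"Unknown state abbreviation: {state!r}")
    return {
        # Collapse stray whitespace and capitalize each part of the name.
        "name": " ".join(part.capitalize() for part in raw["name"].split()),
        "date_of_birth": normalize_date(raw["date_of_birth"]),
        "state": state,
    }


record = normalize_client(
    {"name": "  jane   DOE ", "date_of_birth": "03/07/1985", "state": "or"}
)
payload = json.dumps(record)  # body for the POST to the CRM's create-client endpoint
```

Rejecting unknown states and dates outright, instead of guessing, is what keeps bad values out of the CRM. Note that simple capitalization mangles names such as "McDonald", so a production version would need a gentler rule.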

This is the difference between a clean data pipeline and a garbage fire. It requires significant upfront development to build parsers for each unique document type you handle.

The trade-off is clear. You either invest engineering hours into building and maintaining these parsers, or you accept the ongoing operational cost of manual data entry and the inevitable errors that come with it. The automation route forces data discipline at the point of entry. It is less forgiving than a human, but it is also more consistent.

2. Executing Automated Compliance Logic Checks

Compliance teams are the brakes on a brokerage. Their job is to prevent regulatory breaches, which often involves tedious manual checklist reviews for every new client or deal. We can offload the routine, rule-based portion of these checks to an automated rules engine. This system pulls newly created records and runs them through a battery of predefined logic checks before a human ever sees them.

The engine itself can be a simple script that runs on a schedule. It queries for records with a `compliance_status` of `pending` and executes a series of conditional statements. These checks can include:

- Geographic Restrictions: Does the client’s address fall within a sanctioned or restricted jurisdiction? This is a simple lookup against a maintained list.

- Risk Thresholds: Does the deal size or type exceed a predefined risk value? For example, any transaction over $1 million might get automatically flagged for senior review.

- Data Completeness: Are all mandatory fields populated? The script checks for null values in critical fields like Tax ID or Date of Birth before it can proceed.

- Internal Blacklist Cross-Reference: The system checks the new client’s name and associated entities against an internal database of previously flagged individuals or companies.

If a record passes all checks, its status is programmatically updated to `pre-approved`. If it fails any check, the status is changed to `review_required` and a notification is sent to the compliance team’s queue with the specific reason for the failure. This frees up the compliance officers to focus their attention only on the exceptions that require human judgment.

A basic rule might look like this in pseudo-code:

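```javascript
// The helpers isCountrySanctioned, updateDealStatus, and sendNotification
// are assumed to be defined elsewhere.
function runComplianceCheck(dealObject) {
  const flags = [];

  // Geographic restriction: lookup against the sanctioned-jurisdiction list.
  if (isCountrySanctioned(dealObject.client.country)) {
    flags.push("CLIENT_IN_SANCTIONED_JURISDICTION");
  }

  // Risk threshold: deals over $1 million are flagged for senior review.
  if (dealObject.value > 1000000) {
    flags.push("DEAL_VALUE_EXCEEDS_THRESHOLD");
  }

  // Data completeness: the tax ID is a mandatory field.
  if (!dealObject.client.taxId) {
    flags.push("MISSING_CLIENT_TAX_ID");
  }

  if (flags.length > 0) {
    // Any flag routes the deal to a human, with the reasons attached.
    updateDealStatus(dealObject.id, "review_required");
    sendNotification("compliance_queue", { dealId: dealObject.id, flags });
  } else {
    updateDealStatus(dealObject.id, "pre-approved");
  }
}
```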

This approach transforms compliance from a bottleneck into a validation layer. It also produces a complete audit trail: every automated decision is logged with a timestamp and the exact rule that was triggered.

3. Triggering Task Assignments and Escalations

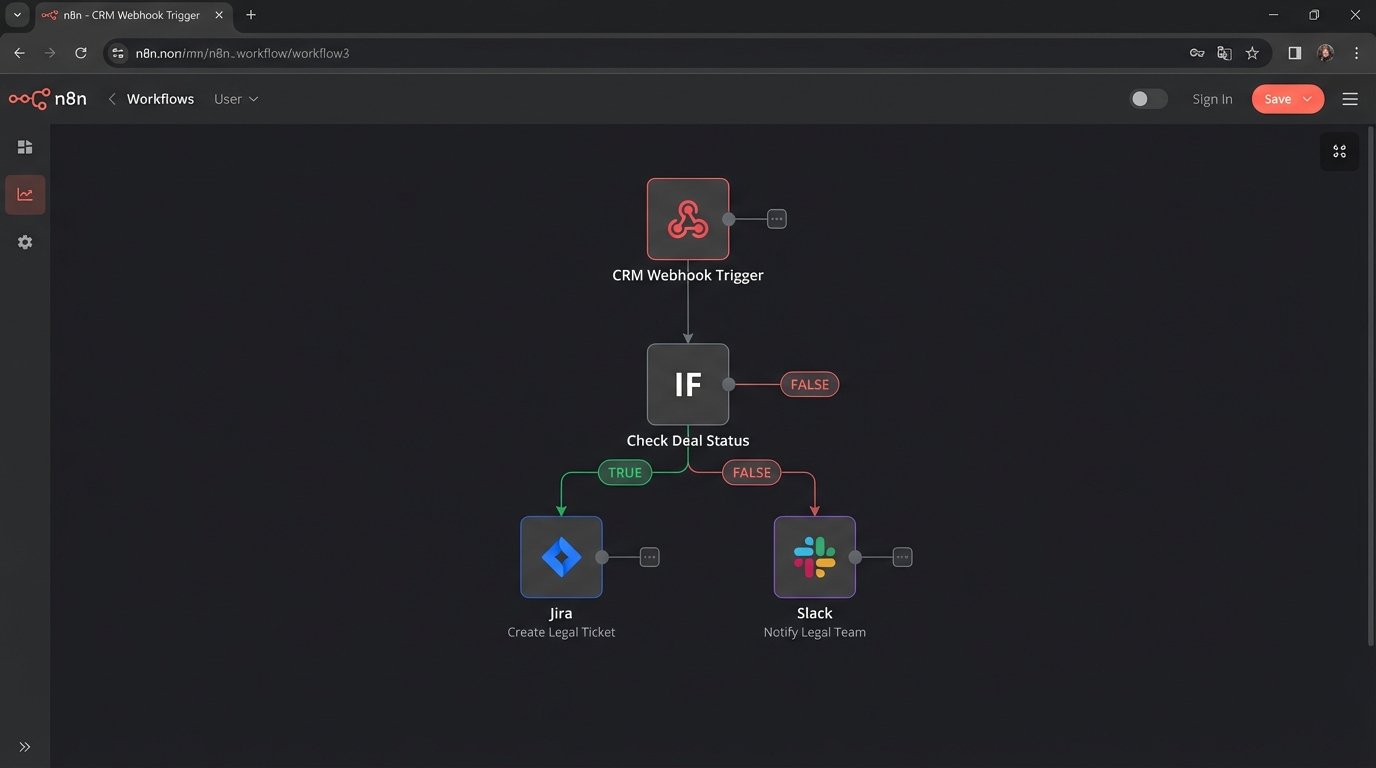

A deal moving from one stage to the next requires action from different teams. When a broker changes a deal’s status in the CRM from `Prospect` to `Contract Sent`, the legal team needs to be activated. Relying on the broker to send an email is unreliable. A workflow automation platform can watch for this specific status change and react immediately.

We configure a webhook in the CRM that fires whenever a deal record is updated. The webhook sends a payload of the deal data to a middleware endpoint, such as a serverless function on AWS Lambda or a flow in a tool like Zapier or Make. The middleware inspects the payload to identify the old status and the new status. If the condition (`status` changed to `Contract Sent`) is met, it triggers a sequence of API calls.

The first call might create a new task in the legal team’s project management system (e.g., Jira or Asana), automatically populating it with the client’s name, deal ID, and a link back to the CRM record. The second call could post a message to a dedicated Slack channel to notify the team. This event-driven architecture removes the lag and potential for human error in communication. Trying to consolidate this much data from so many sources can feel like shoving a firehose through a needle, but a middleware service is designed for exactly this kind of data routing.
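A rough sketch of the middleware logic follows. The payload shape (`deal_id`, `client_name`, `crm_url`, `old_status`, `new_status`) is hypothetical, since each CRM vendor structures its webhooks differently, and the two callables stand in for real project management and chat API clients.

```python
from typing import Callable


def handle_deal_update(
    payload: dict,
    create_task: Callable[[dict], None],
    post_message: Callable[[str], None],
) -> bool:
    """Fan out to downstream systems when a deal moves to 'Contract Sent'.

    Returns True when the actions fired, False when the update was ignored.
    """
    changed = payload.get("old_status") != payload.get("new_status")
    if not (changed and payload.get("new_status") == "Contract Sent"):
        return False

    # First call: open a task for the legal team with a link back to the CRM.
    create_task({
        "title": f"Review contract for {payload['client_name']}",
        "deal_id": payload["deal_id"],
        "link": payload["crm_url"],
    })
    # Second call: notify the team channel.
    post_message(f"Contract sent for deal {payload['deal_id']}: {payload['crm_url']}")
    return True


# Lists stand in for the task tracker and the chat channel.
tasks, messages = [], []
fired = handle_deal_update(
    {
        "deal_id": "D-1042",
        "client_name": "Acme Holdings",
        "crm_url": "https://crm.example.com/deals/D-1042",
        "old_status": "Prospect",
        "new_status": "Contract Sent",
    },
    create_task=tasks.append,
    post_message=messages.append,
)
```

Passing the downstream clients in as arguments keeps the routing rule testable without network access, which matters when the same function runs inside a serverless handler.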

The same logic applies to escalations. If that Jira ticket created for the legal team remains in the `To Do` status for more than 48 hours, a scheduled automation can detect its age. It can then trigger an escalation action, such as reassigning the ticket, adding a comment to tag a manager, or sending a high-priority email. This enforces Service Level Agreements (SLAs) without a human having to chase people down.
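The age check at the heart of that scheduled job might look like the sketch below. The ticket dictionaries are a hypothetical shape; in practice they would come from the project management tool's API, and the escalation action itself is left out.

```python
from datetime import datetime, timedelta, timezone

SLA = timedelta(hours=48)


def find_stale_tickets(tickets: list, now: datetime) -> list:
    """Return IDs of tickets still in 'To Do' that are older than the SLA."""
    return [
        t["id"]
        for t in tickets
        if t["status"] == "To Do" and now - t["created_at"] > SLA
    ]


now = datetime(2024, 1, 10, 12, 0, tzinfo=timezone.utc)
tickets = [
    {"id": "LEGAL-1", "status": "To Do", "created_at": now - timedelta(hours=72)},
    {"id": "LEGAL-2", "status": "To Do", "created_at": now - timedelta(hours=12)},
    {"id": "LEGAL-3", "status": "In Progress", "created_at": now - timedelta(hours=96)},
]
stale = find_stale_tickets(tickets, now)  # only LEGAL-1 qualifies
```

Taking `now` as a parameter, rather than reading the clock inside the function, makes the rule deterministic and easy to test.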

4. Generating and Distributing Standardized Reports

Generating periodic reports is pure mechanical work. Managers need weekly pipeline reports, and clients need monthly performance summaries. This task usually involves someone manually exporting data from three or four different systems, pasting it into a spreadsheet, and formatting it for presentation. It is a slow, error-prone process that is perfectly suited for automation.

A scheduled script, typically a cron job running on a server, is the core of this solution. The script executes at a set time, for example, every Monday at 5 AM. It makes a series of read-only API calls to pull the necessary data:

- CRM: Fetches all deals created in the last week, along with their current status and value.

- Accounting System: Pulls payment records and commission data related to closed deals.

- Market Data API: Gathers relevant market benchmarks or pricing information.

The script then aggregates and transforms this data in memory. It calculates totals, averages, and conversion rates. Using a library like `pandas` in Python is common for this kind of data manipulation. Once the final data structure is ready, the script uses a templating engine (like Jinja2) and a PDF generation library (like WeasyPrint) to populate a pre-designed report template. The output is a clean, consistently formatted PDF.
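To make the aggregation step concrete, here is a small sketch in plain Python, used in place of a dataframe library so the example has no dependencies. The deal rows and the definition of conversion rate (closed deals divided by all deals) are assumptions for illustration.

```python
from statistics import mean

# Hypothetical deal rows as they might come back from a CRM query.
deals = [
    {"id": "D-1", "status": "Closed", "value": 250_000},
    {"id": "D-2", "status": "Prospect", "value": 400_000},
    {"id": "D-3", "status": "Closed", "value": 150_000},
    {"id": "D-4", "status": "Contract Sent", "value": 200_000},
]


def summarize_pipeline(rows: list) -> dict:
    """Compute the headline figures for a weekly pipeline report."""
    closed = [r for r in rows if r["status"] == "Closed"]
    values = [r["value"] for r in rows]
    return {
        "deal_count": len(rows),
        "total_value": sum(values),
        "average_value": mean(values) if values else 0,
        # Assumed definition: share of this week's deals that reached Closed.
        "conversion_rate": len(closed) / len(rows) if rows else 0.0,
    }


summary = summarize_pipeline(deals)
```

The resulting dictionary is the kind of structure that would then be handed to the report template for rendering.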

The final step is distribution. The script connects to an email service provider’s API to send the generated PDF to a predefined list of recipients. The entire process, from data collection to email delivery, happens with zero human intervention. The value is not just the time saved, but the guaranteed consistency and timeliness of the reporting.

5. Synchronizing Deal Status Across Multiple Systems

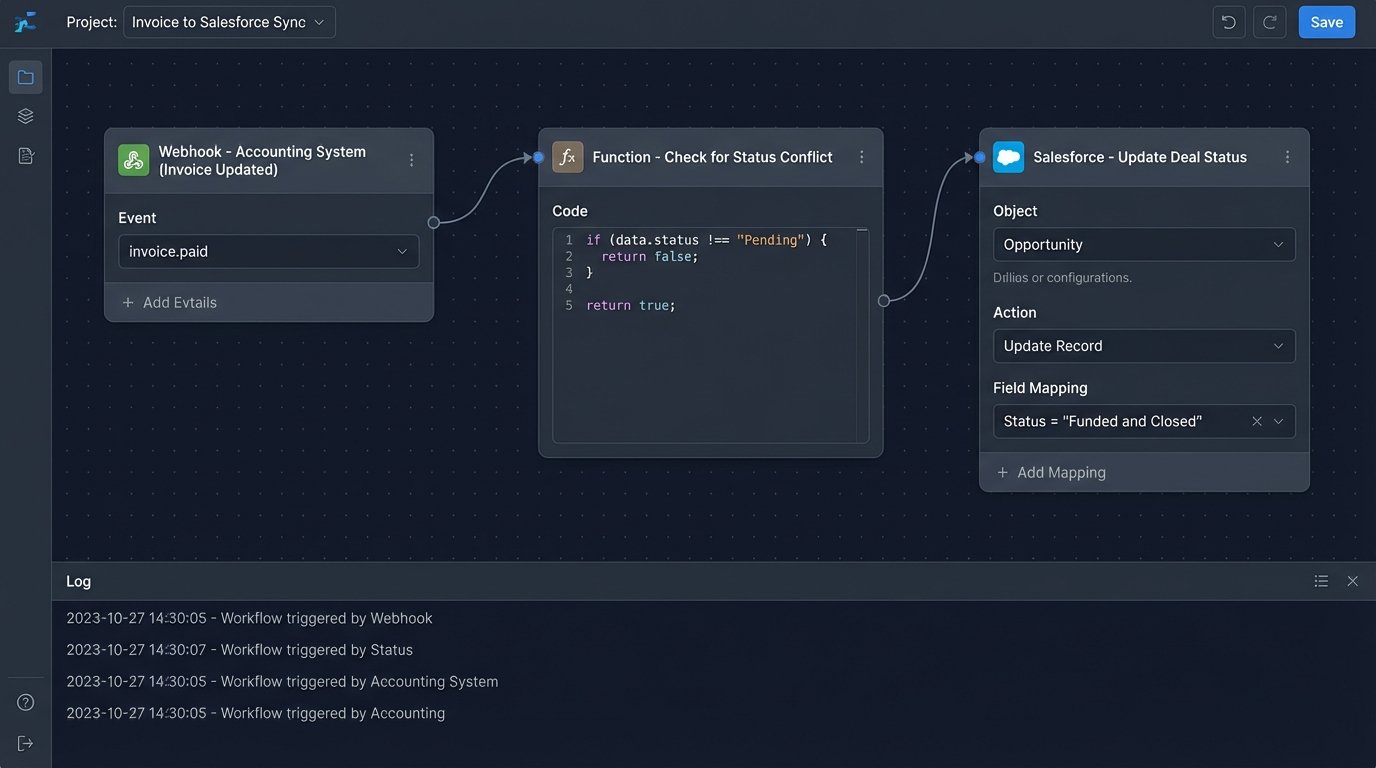

A single deal does not live in a single system. It exists as a record in the CRM, a folder in a document management system, a line item in the accounting software, and an entry in the compliance archive. When the deal’s status changes in one place, it needs to be reflected everywhere else. Manual updates are a recipe for data divergence, where the CRM says a deal is closed but accounting shows the invoice is still pending.

True synchronization requires building two-way data flows, often using webhooks as the primary triggers. For example, when an accountant marks an invoice as “Paid” in their system, that system should fire a webhook. A middleware service catches this webhook, extracts the deal ID, and makes an API call to the CRM to update the corresponding deal’s status to `Funded and Closed`.

This creates a closed-loop system where each department’s primary tool becomes a source of truth for its part of the process, and automation acts as the messenger that keeps all other systems informed. The main challenge here is preventing infinite loops. If the CRM update also triggers a webhook back to the accounting system, you could create a cycle of endless updates. The logic in the middleware must be intelligent enough to recognize and stop these loops, usually by checking if the data being pushed is actually different from the data already in the target system.
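The compare-before-write guard can be sketched as follows. `InMemoryCRM` is a stand-in for a real CRM API client, and the event shape is hypothetical; the write counter exists only to make a would-be loop visible.

```python
class InMemoryCRM:
    """Stand-in for a CRM API client; counts writes so loops are visible."""

    def __init__(self, deals: dict):
        self.deals = deals
        self.write_count = 0

    def get_status(self, deal_id: str) -> str:
        return self.deals[deal_id]

    def set_status(self, deal_id: str, status: str) -> None:
        self.deals[deal_id] = status
        self.write_count += 1  # a real CRM would fire its own webhook here


def on_invoice_paid(event: dict, crm: InMemoryCRM) -> bool:
    """Push 'Funded and Closed' to the CRM only if it is not already set."""
    target = "Funded and Closed"
    if crm.get_status(event["deal_id"]) == target:
        return False  # nothing changed, so no write and no follow-up webhook
    crm.set_status(event["deal_id"], target)
    return True


crm = InMemoryCRM({"D-1042": "Contract Sent"})
first = on_invoice_paid({"deal_id": "D-1042", "invoice_status": "Paid"}, crm)
second = on_invoice_paid({"deal_id": "D-1042", "invoice_status": "Paid"}, crm)
```

The second delivery of the same event performs no write, so an echo from the CRM back to accounting dies out after one round trip instead of cycling.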

Building this level of integration is complex. It requires a deep understanding of the APIs for every system involved and careful state management. The alternative is a single source of truth that is constantly being undermined by outdated information in satellite systems, leading to confusion and operational friction. This automation forces a single, unified view of the deal lifecycle, even when the data is physically stored in a dozen different places.