Stop Drowning in PDFs: A Reality Check on Closing Automation

Automating a real estate closing isn’t about slapping an e-signature API onto a pile of documents. The process is a minefield of inconsistent data formats, state-specific legal requirements, and APIs that were clearly an afterthought. The goal isn’t a “paperless” office. The goal is to build a system that doesn’t collapse when a county clerk’s office still uses a fax machine or a lender changes their XML schema without notice.

Most platforms fail because they treat the closing process as a linear checklist. It’s not. It’s a state machine with a dozen failure points. A successful automation architecture anticipates these failures and builds in logic to handle them, routing exceptions to a human operator only when a machine cannot make a legally sound decision. Forget the marketing hype. This is about building resilient, auditable systems that survive contact with the real world.

Ingestion and Normalization: The Garbage Collection Phase

Every closing starts with a flood of data from disparate sources. Lender instructions, title commitments, purchase agreements, and surveyor reports arrive as unstructured PDFs, barely-readable scans, and occasionally, a well-formed API response. The first step is to accept that most of this input is garbage. Your system’s primary job is to be a brutally efficient garbage collector and data normalizer.

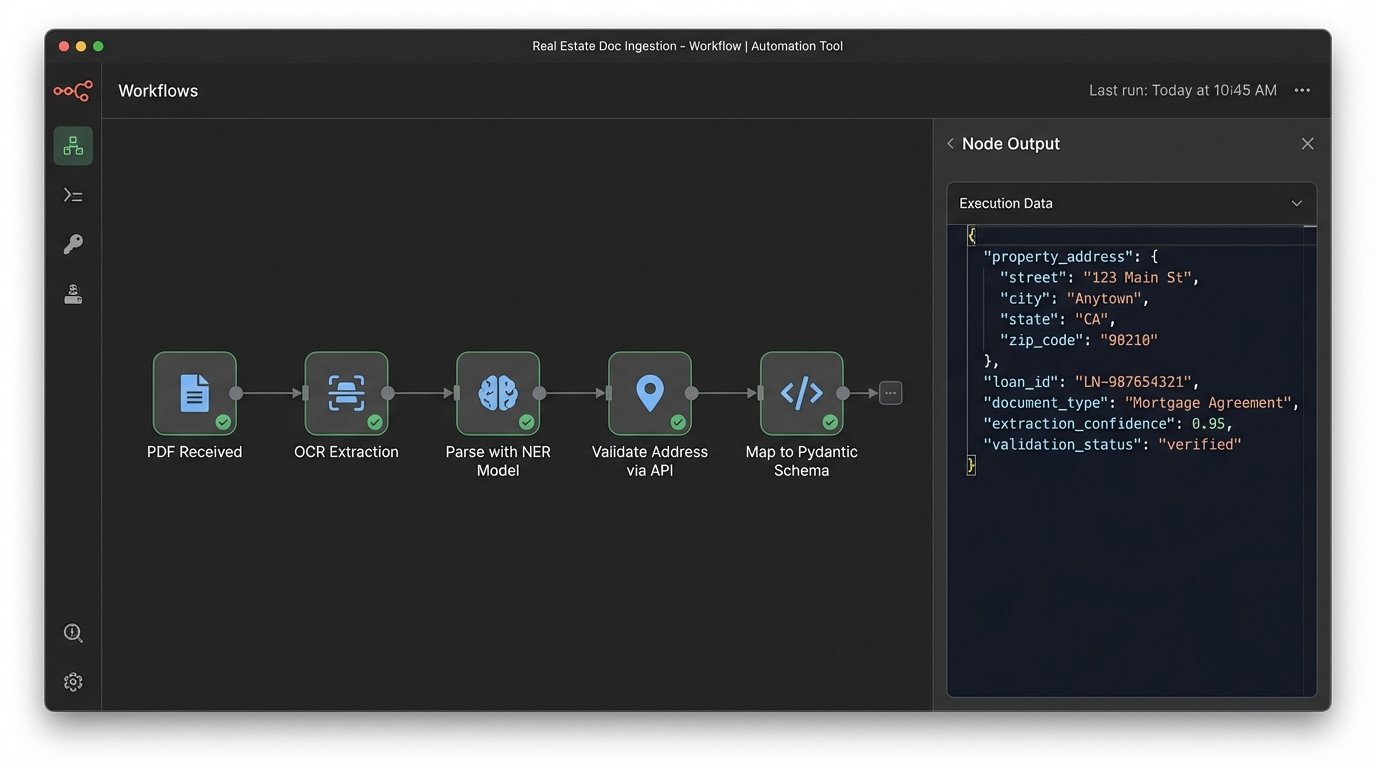

Optical Character Recognition (OCR) is the default tool here, but relying on it blindly is a rookie mistake. We use OCR only for a first pass that extracts raw text from documents. Then, we apply regex and named entity recognition (NER) models specifically trained on real estate documents to identify key values: property addresses, legal descriptions, buyer and seller names, and loan amounts. The goal is to convert a 50-page PDF into a structured JSON object. Every field must be validated against expected formats. An address isn’t just a string. It’s an object with street, city, state, and zip components that we can check against a service like the USPS API.

This entire ingestion pipeline is like trying to shove a firehose of unstructured data through the needle eye of a structured database schema. You have to build pressure-release valves, which in this case are exception queues for documents that fail parsing, so a human can manually fix the data without halting the entire system.
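A minimal sketch of that extract-or-escalate step, assuming OCR has already produced plain text. The two regex patterns, the `extract_fields` function, and the in-memory `exception_queue` are all hypothetical stand-ins; real documents need many more pattern variants, and a real queue would be durable.

```python
import re
from collections import deque
from decimal import Decimal
from typing import Optional

# Hypothetical patterns for illustration only.
LOAN_AMOUNT_RE = re.compile(r"Loan Amount:\s*\$?([\d,]+(?:\.\d{2})?)", re.IGNORECASE)
STATE_ZIP_RE = re.compile(r"\b([A-Z]{2})\s+(\d{5})(?:-\d{4})?\b")

# Stand-in for a durable exception queue that a human works through.
exception_queue = deque()


def extract_fields(doc_id: str, ocr_text: str) -> Optional[dict]:
    """Return structured fields, or None after queueing the document for review."""
    loan = LOAN_AMOUNT_RE.search(ocr_text)
    state_zip = STATE_ZIP_RE.search(ocr_text)
    missing = [
        name
        for name, match in (("loan_amount", loan), ("state_zip", state_zip))
        if match is None
    ]
    if missing:
        # The pressure-release valve: park the document, keep the pipeline moving.
        exception_queue.append({"doc_id": doc_id, "missing": missing})
        return None
    return {
        "loan_amount": Decimal(loan.group(1).replace(",", "")),
        "state_code": state_zip.group(1),
        "zip_code": state_zip.group(2),
    }
```

A document that parses returns a dictionary ready for schema validation; one that does not returns `None` and leaves a record in the queue naming the fields that could not be found.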

Data Schema Enforcement is Non-Negotiable

Once you’ve extracted the data, you have to force it into a canonical model. Every transaction, regardless of its origin, must conform to one rigid internal schema. If Lender A sends “LoanAmt” and Lender B sends “loan_amount_total,” your ingestion layer is responsible for mapping both to your internal `transaction.loan.total_amount`. This mapping logic is tedious to build but it’s the bedrock of the entire system. Without it, your document generation and reporting code becomes a tangled mess of conditional logic.
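The mapping layer can be as plain as a lookup table. The sketch below assumes a hypothetical `FIELD_ALIASES` table and `to_canonical` helper; the dotted paths are relative to the internal `transaction` object, and unknown fields are rejected rather than silently dropped.

```python
# Hypothetical alias table: lender-specific field name -> canonical dotted path.
FIELD_ALIASES = {
    "LoanAmt": "loan.total_amount",
    "loan_amount_total": "loan.total_amount",
}


def to_canonical(payload: dict) -> dict:
    """Map a lender payload onto the nested canonical structure."""
    canonical: dict = {}
    for key, value in payload.items():
        path = FIELD_ALIASES.get(key)
        if path is None:
            # Fail loudly so a new upstream field gets mapped on purpose.
            raise KeyError(f"unmapped lender field: {key!r}")
        *parents, leaf = path.split(".")
        node = canonical
        for part in parents:
            node = node.setdefault(part, {})
        node[leaf] = value
    return canonical
```

Both `to_canonical({"LoanAmt": 250000})` and `to_canonical({"loan_amount_total": 250000})` produce the same nested shape, so downstream code never needs to know which lender sent the data.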

We use Pydantic models in our Python services to enforce this at the application layer. If incoming data from an API call doesn’t match the schema, it’s rejected immediately with a validation error. This prevents corrupted or incomplete data from ever touching the core transaction database.

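    from datetime import date
    from typing import Optional

    # `validator` is the Pydantic v1-style decorator (deprecated in v2 in favor of `field_validator`)
    from pydantic import BaseModel, Field, validator


    class Address(BaseModel):
        street_line_1: str
        city: str
        # Two-letter state abbreviation, e.g. "TX"
        state_code: str = Field(..., min_length=2, max_length=2)
        zip_code: str

        @validator('state_code')
        def state_code_must_be_uppercase(cls, v):
            # Normalizes rather than rejects: "tx" is stored as "TX"
            return v.upper()


    class Transaction(BaseModel):
        property_address: Address
        loan_id: str
        settlement_date: date
        buyer_name: str
        seller_name: str
        ...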

This isn’t just good practice. It’s a defensive measure against the inevitable upstream data changes that nobody will tell you about.

Document Generation: Beyond Mail Merge

Generating the closing disclosure, deed, and other critical documents is where the normalized data pays off. Don’t build this with a series of hardcoded PDF templates and string replacements. That path leads to an unmaintainable disaster where changing one legal phrase requires a developer to deploy new code. You need a proper templating engine that can handle complex conditional logic and sub-templates.

We use a system that combines a JSON data model with templates written in a logic-aware language like Jinja2 or Handlebars. This lets us build logic directly into the templates. For example, a Texas property requires specific homestead disclosures that a New York property does not. Instead of having two separate code paths, the template itself contains the condition: `{% if transaction.property_address.state_code == 'TX' %} … include 'texas_homestead_addendum.html' … {% endif %}`. The legal team can update the addendum snippet without ever touching the application code.

Managing Template and Clause Variants

The real challenge is version control for these templates and legal clauses. You need a system, separate from your application code’s Git repository, to manage these document assets. We treat legal clauses as independent, versioned objects in a database. A document template is then constructed by assembling a series of these versioned clauses. When a law changes, the legal team creates a new version of the affected clause. New transactions automatically pick up the latest version, while in-flight transactions remain tied to the version that was active when they were initiated. This creates a full, point-in-time auditable history of which legal language was presented to the consumer.

This is critical for compliance. If you are ever challenged on a closing from six months ago, you must be able to reproduce the exact document, with the exact legal language, that was generated at that time. Simply re-generating it with the current template is not sufficient and will get you into serious trouble.
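One way to picture version pinning, as a sketch rather than a description of any particular product: clauses live in an append-only store, and each transaction records the version numbers it was initiated with. `ClauseStore`, `pin_clauses`, and `render` are hypothetical names, and the in-memory dictionary stands in for a database table.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class ClauseVersion:
    clause_id: str
    version: int
    text: str


class ClauseStore:
    """Append-only store: publishing never overwrites an earlier version."""

    def __init__(self) -> None:
        self._versions: Dict[str, List[ClauseVersion]] = {}

    def publish(self, clause_id: str, text: str) -> ClauseVersion:
        history = self._versions.setdefault(clause_id, [])
        clause = ClauseVersion(clause_id, len(history) + 1, text)
        history.append(clause)
        return clause

    def latest(self, clause_id: str) -> ClauseVersion:
        return self._versions[clause_id][-1]

    def get(self, clause_id: str, version: int) -> ClauseVersion:
        return self._versions[clause_id][version - 1]


def pin_clauses(store: ClauseStore, clause_ids: List[str]) -> Dict[str, int]:
    """Snapshot the current version of each clause when a transaction is initiated."""
    return {cid: store.latest(cid).version for cid in clause_ids}


def render(store: ClauseStore, pinned: Dict[str, int]) -> str:
    """Reassemble the document text from the pinned versions, not the latest ones."""
    return "\n".join(store.get(cid, ver).text for cid, ver in pinned.items())
```

Storing the `pinned` mapping alongside the transaction is what makes a six-month-old document reproducible: rendering from it ignores every clause version published afterwards.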

Third-Party Integrations: Assume Failure

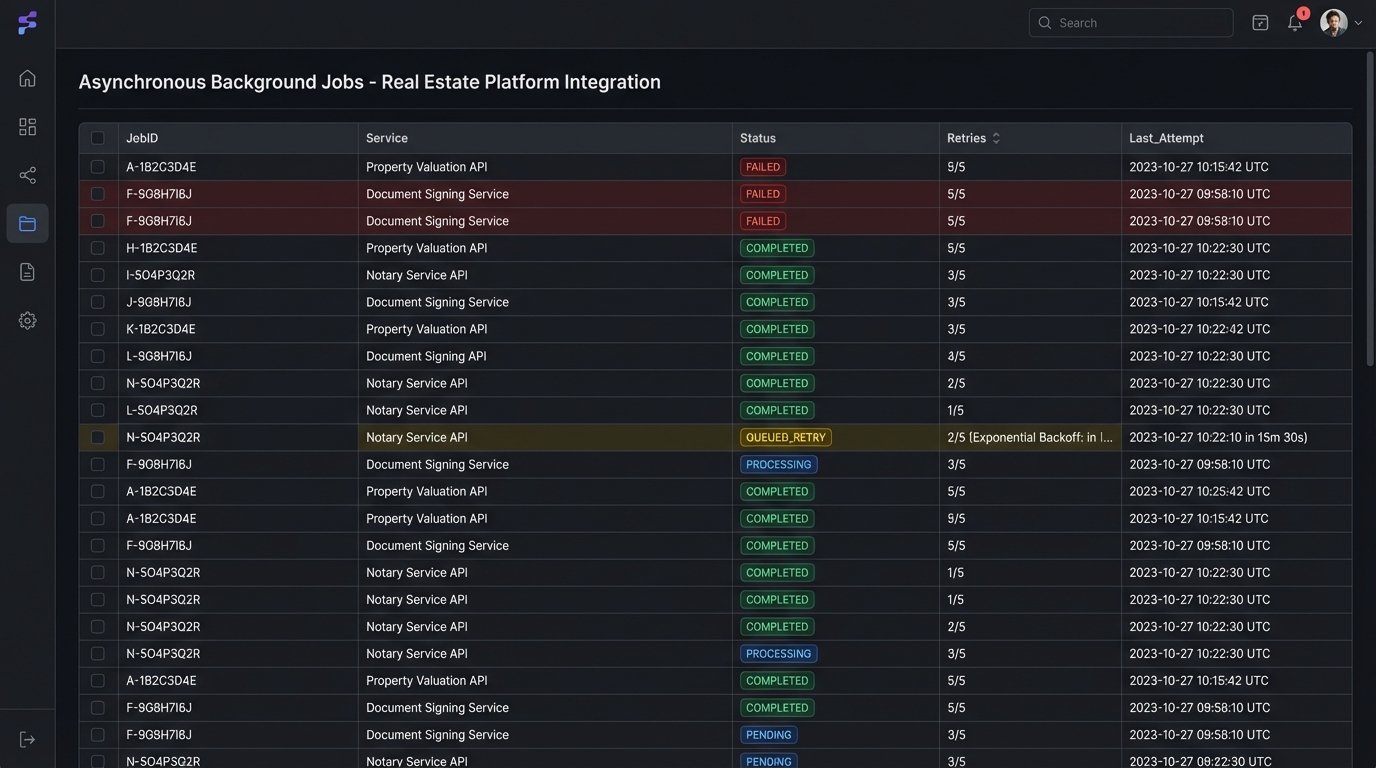

Your automation platform is a hub that must connect to a dozen external services: e-signature providers, notary services, county recording portals, and title insurance underwriters. Every one of these API endpoints is a potential point of failure. The documentation is often outdated, the schemas can change without warning, and rate limits are a constant threat.

Never make a direct, blocking API call from your main application thread. Every external call must be asynchronous and wrapped in a robust error-handling and retry mechanism. When an API call to a notary service fails, the system shouldn’t crash. It should log the error, increment a counter, and re-queue the job with an exponential backoff strategy. After a configurable number of retries, if it still fails, the system must then flag the transaction and assign it to a human for manual intervention.
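The retry policy can be sketched with `asyncio`. This is a simplified in-process version under stated assumptions: a production system would re-queue the job on a worker queue instead of sleeping inside the coroutine, and `call_with_backoff` and `NeedsManualReview` are hypothetical names. The `sleep` parameter exists only so the delay can be swapped out in tests.

```python
import asyncio
import logging
import random

logger = logging.getLogger("integrations")


class NeedsManualReview(Exception):
    """Raised when retries are exhausted and a human must take over."""


async def call_with_backoff(operation, *, max_retries=4, base_delay=0.5, sleep=asyncio.sleep):
    """Await operation(); on failure, wait base_delay * 2**attempt (plus jitter) and retry."""
    for attempt in range(max_retries + 1):
        try:
            return await operation()
        except Exception as exc:  # a real system would catch narrower error types
            logger.warning("external call failed (attempt %d): %s", attempt + 1, exc)
            if attempt == max_retries:
                # Out of retries: surface the failure so the transaction gets flagged.
                raise NeedsManualReview(f"gave up after {attempt + 1} attempts") from exc
            delay = base_delay * (2 ** attempt)
            # Small random jitter keeps many failed jobs from retrying in lockstep.
            await sleep(delay + random.uniform(0, delay * 0.1))
```

The caller catches `NeedsManualReview` and assigns the transaction to a human; everything short of that is absorbed by the retry loop.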

Idempotency is Your Best Friend

Network connections will fail. Your service will crash and restart. You must design your API interactions to be idempotent. This means that making the same request multiple times has the same effect as making it once. For example, when submitting a document for recording, your API call should include a unique transaction ID generated by your system. If the request times out and you send it again, the county’s system should see the same transaction ID and recognize it as a duplicate, preventing a double-charge or duplicate recording.

If the external API doesn’t support this, you have to build an idempotency layer on your side. Before calling a non-idempotent endpoint, you write a record to your database indicating your intent (`status: PENDING_RECORDING`). After you get a successful response, you update that record (`status: RECORDING_CONFIRMED`). If your service restarts in the middle, it can check the database: a confirmed record means the request must not be re-sent, while a record still marked pending means the request may or may not have gone through, so the outcome has to be verified with the external system before anything is sent again.
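A minimal sketch of such an intent record, using an in-memory SQLite table as a stand-in for the real transaction database. The table name, `make_db`, `submit_for_recording`, and the return values are assumptions for illustration; only the two status values come from the text. A record left in `PENDING_RECORDING` is treated as ambiguous, since the earlier request may or may not have reached the county.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    db = sqlite3.connect(":memory:")
    db.execute(
        "CREATE TABLE recording_intent (txn_id TEXT PRIMARY KEY, status TEXT NOT NULL)"
    )
    return db


def submit_for_recording(db: sqlite3.Connection, txn_id: str, send) -> str:
    """Call send(txn_id) only when no earlier attempt is on record."""
    row = db.execute(
        "SELECT status FROM recording_intent WHERE txn_id = ?", (txn_id,)
    ).fetchone()
    if row and row[0] == "RECORDING_CONFIRMED":
        return "already_confirmed"
    if row and row[0] == "PENDING_RECORDING":
        # An earlier attempt was interrupted; verify with the county before re-sending.
        return "needs_verification"
    # Record intent first, so a crash after this point leaves evidence behind.
    db.execute("INSERT INTO recording_intent VALUES (?, 'PENDING_RECORDING')", (txn_id,))
    db.commit()
    send(txn_id)  # the non-idempotent external call
    db.execute(
        "UPDATE recording_intent SET status = 'RECORDING_CONFIRMED' WHERE txn_id = ?",
        (txn_id,),
    )
    db.commit()
    return "sent"
```

Calling the function twice for the same transaction sends the request once. If `send` raises partway through, the next call reports `needs_verification` instead of blindly submitting a second recording.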

State Management and the Audit Trail

A real estate closing moves through distinct stages: `documents_generated`, `pending_buyer_signature`, `pending_seller_signature`, `funding_approved`, `recorded`, `closed`. This workflow is a state machine. You must model it as one in your system. A transaction can only move from one state to another based on specific, validated events. A transaction in `pending_buyer_signature` cannot jump to `recorded`. It must first transition through `pending_seller_signature` and `funding_approved`.

Using a formal state machine library or implementing this logic in your database with state transition tables prevents invalid or out-of-order operations. More importantly, every state transition must be logged to an immutable audit table. This log should record the timestamp, the user or system process that triggered the change, the old state, the new state, and any associated data. This isn’t just for debugging. It’s a legal record that is as important as the closing documents themselves. When a regulator asks why a document was changed on a specific date, you need to have a definitive, machine-generated answer.
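The transition table and audit log can be sketched in a few lines. The stage names are the ones listed above, assumed here to run in a strict linear order; `ClosingWorkflow` and `InvalidTransition` are hypothetical names, and the Python list stands in for an immutable audit table.

```python
from datetime import datetime, timezone

# Allowed transitions: each stage may only advance to the next one.
TRANSITIONS = {
    "documents_generated": {"pending_buyer_signature"},
    "pending_buyer_signature": {"pending_seller_signature"},
    "pending_seller_signature": {"funding_approved"},
    "funding_approved": {"recorded"},
    "recorded": {"closed"},
    "closed": set(),
}


class InvalidTransition(Exception):
    """Raised when an event tries to skip or reverse a stage."""


class ClosingWorkflow:
    def __init__(self, txn_id: str, state: str = "documents_generated") -> None:
        self.txn_id = txn_id
        self.state = state
        self.audit_log = []  # append-only here; a real system would use an immutable table

    def transition(self, new_state: str, actor: str, data=None) -> None:
        if new_state not in TRANSITIONS[self.state]:
            raise InvalidTransition(f"{self.state} -> {new_state}")
        # Log who moved the transaction, when, and between which states.
        self.audit_log.append({
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "old_state": self.state,
            "new_state": new_state,
            "data": data or {},
        })
        self.state = new_state
```

An attempt to move a transaction from `pending_buyer_signature` straight to `recorded` raises `InvalidTransition` and leaves both the state and the audit log untouched.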

Handling Exceptions and Human Handoffs

The “happy path” is easy to automate. The value of a good system is in how it handles the “unhappy path.” What happens when a title search comes back with a lien? What happens when the buyer’s e-signature session times out? The automation shouldn’t just stop. It should transition the transaction into an exception state, like `NEEDS_REVIEW_LIEN_FOUND`, and create a task in a queue for a human closing officer.

The key is to provide the human operator with all the context they need to resolve the issue. The task they receive should include a link to the transaction, a clear description of the problem, and the relevant data or documents. Once the human resolves the issue, they can manually trigger an event to place the transaction back into the automated workflow. This combination of machine execution and human exception handling is the only practical way to achieve high-volume automation.
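The handoff itself might look like the sketch below. `ReviewTask`, `escalate`, `resolve`, and the in-memory `review_queue` are hypothetical; the point is that the task carries the context the operator needs and remembers which state to resume from.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ReviewTask:
    transaction_id: str
    exception_state: str
    description: str
    resume_state: str  # where the automated workflow picks up again
    documents: List[str] = field(default_factory=list)
    resolved_by: str = ""


# Stand-in for the closing officers' task queue.
review_queue: List[ReviewTask] = []


def escalate(txn: dict, exception_state: str, description: str, documents=()) -> ReviewTask:
    """Park the transaction in an exception state and queue a task with full context."""
    task = ReviewTask(
        transaction_id=txn["id"],
        exception_state=exception_state,
        description=description,
        resume_state=txn["state"],
        documents=list(documents),
    )
    txn["state"] = exception_state
    review_queue.append(task)
    return task


def resolve(txn: dict, task: ReviewTask, operator: str) -> None:
    """Record who resolved the task and return the transaction to the automated path."""
    task.resolved_by = operator
    review_queue.remove(task)
    txn["state"] = task.resume_state
```

For the lien example, `escalate(txn, "NEEDS_REVIEW_LIEN_FOUND", "Title search returned an open lien.", ["title_commitment.pdf"])` parks the transaction, and `resolve` puts it back in the state it was in before the exception.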

Ultimately, automating real estate closings is an exercise in defensive programming. You are not building a simple web app. You are building a low-latency, high-reliability system to manage legally binding financial transactions. Assume every external dependency will fail, all input data is corrupt, and that you will be asked to prove every action the system took. Build your architecture on that foundation of paranoia.