Every lead qualification chatbot promises to filter signal from noise. In reality, most of them become sophisticated garbage collectors, dutifully piping junk data into your CRM and creating cleanup work for the sales team. The fundamental flaw isn’t the concept; it’s the execution. We build them like simple Q&A bots when they should be architected like resilient, stateful applications.

Forget the marketing gloss. Let’s talk about building a qualification engine that doesn’t buckle under real-world conditions.

Model Flows as State Machines, Not Nested Ifs

The most common failure pattern is a massive, unmaintainable script of nested conditional logic. Answering “What’s your company size?” sends the user down a brittle branch of if-this-then-that questions. A minor change to the qualification criteria requires a developer to untangle a web of logic. This approach is fragile and scales poorly.

A state machine enforces structure. Each step in the conversation is a distinct state with defined transitions. The bot’s only job is to move the user from the current state to the next valid one based on their input. This decouples the flow logic from the bot’s core processing. Modifying the flow means editing a configuration map, not rewriting code.

Consider this simplified state definition for a B2B SaaS bot. It defines the question, the data point to capture (the entity), and the conditions that select the next state. This configuration lives outside the application code, so non-engineers can manage it with proper tooling.

```json
{
  "state_name": "GET_COMPANY_SIZE",
  "prompt": "How many employees are on your team?",
  "entity_to_extract": "company_size",
  "transitions": [
    {
      "condition": "company_size >= 500",
      "next_state": "ROUTE_TO_ENTERPRISE_SALES"
    },
    {
      "condition": "company_size < 500 && company_size >= 50",
      "next_state": "SCHEDULE_DEMO"
    },
    {
      "condition": "company_size < 50",
      "next_state": "ROUTE_TO_SELF_SERVICE_DOCS"
    },
    {
      "condition": "default",
      "next_state": "HANDLE_INVALID_SIZE"
    }
  ]
}
```

This approach forces you to think about every possible path, including failures: the `default` transition catches any answer that can't be read as a number. The spaghetti-code version just hopes for the best.
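To make the configuration concrete, here is a minimal Python sketch of an engine that walks a state's transitions in order and returns the first match. It assumes conditions are limited to numeric comparisons joined by `&&` (the only forms the example uses) and parses them with a small regex rather than `eval()`, so an edited config can't inject code. The names `condition_holds` and `next_state` are illustrative, not part of any framework.

```python
import re

# The GET_COMPANY_SIZE state from the configuration above, loaded as a dict.
STATE = {
    "state_name": "GET_COMPANY_SIZE",
    "prompt": "How many employees are on your team?",
    "entity_to_extract": "company_size",
    "transitions": [
        {"condition": "company_size >= 500", "next_state": "ROUTE_TO_ENTERPRISE_SALES"},
        {"condition": "company_size < 500 && company_size >= 50", "next_state": "SCHEDULE_DEMO"},
        {"condition": "company_size < 50", "next_state": "ROUTE_TO_SELF_SERVICE_DOCS"},
        {"condition": "default", "next_state": "HANDLE_INVALID_SIZE"},
    ],
}

# Matches one comparison such as "company_size >= 500".
CLAUSE = re.compile(r"^\s*(\w+)\s*(>=|<=|==|>|<)\s*(-?\d+(?:\.\d+)?)\s*$")
OPS = {
    ">=": lambda a, b: a >= b,
    "<=": lambda a, b: a <= b,
    "==": lambda a, b: a == b,
    ">": lambda a, b: a > b,
    "<": lambda a, b: a < b,
}

def condition_holds(condition: str, entities: dict) -> bool:
    """Evaluate comparisons joined by '&&' without calling eval()."""
    for clause in condition.split("&&"):
        match = CLAUSE.match(clause)
        if match is None:
            raise ValueError(f"Unsupported condition: {clause!r}")
        name, op, literal = match.groups()
        value = entities.get(name)
        # A missing or non-numeric entity never satisfies a comparison.
        if isinstance(value, bool) or not isinstance(value, (int, float)):
            return False
        if not OPS[op](value, float(literal)):
            return False
    return True

def next_state(state: dict, entities: dict) -> str:
    """Return the first transition, in config order, whose condition holds."""
    for transition in state["transitions"]:
        if transition["condition"] == "default":
            return transition["next_state"]
        if condition_holds(transition["condition"], entities):
            return transition["next_state"]
    raise LookupError(f"No transition out of {state['state_name']}")
```

For example, `next_state(STATE, {"company_size": 120})` yields `SCHEDULE_DEMO`, while an unparseable answer stored as `"lots"` falls through to `HANDLE_INVALID_SIZE`. Because order matters, `default` belongs last in every state.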

Decouple Qualification Rules from Dialogue

Your chatbot's dialogue manager should be dumb. Its job is to manage conversation turns, extract entities, and classify intent. It should not be responsible for deciding if a lead is qualified. Hard-coding business logic like "leads from the EMEA region with over 1000 employees get high priority" directly into the conversation flow is a recipe for disaster. The sales team will change those rules quarterly.

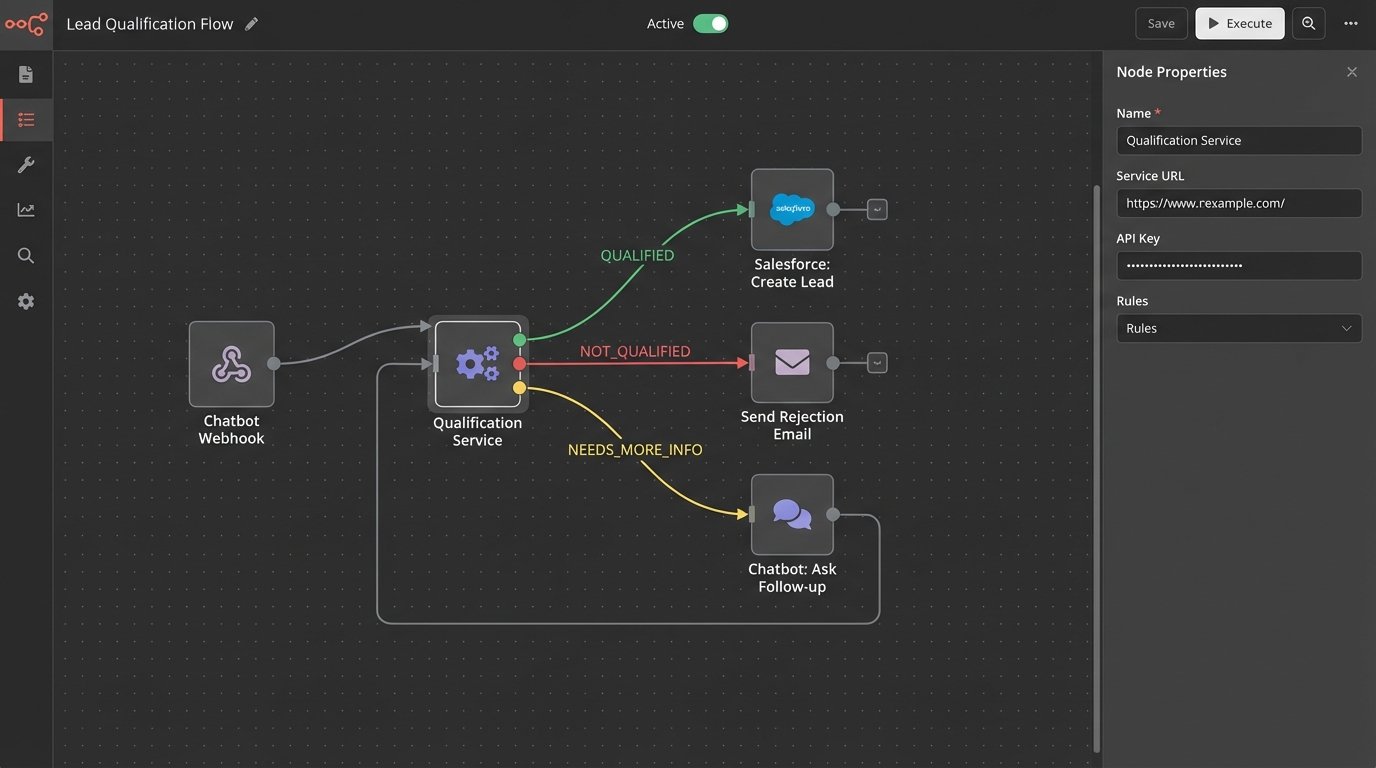

The qualification logic should be an independent service or module. The chatbot collects the necessary data points, such as email, company size, and use case. It then packages this data and passes it to a "Qualification Service." This service contains the business rules and returns one of three decisions: `QUALIFIED`, `NOT_QUALIFIED`, or `NEEDS_MORE_INFO`. This architecture allows the qualification criteria to evolve without requiring a full chatbot redeployment.

This is about creating clean boundaries. The chat interface handles the user interaction. The qualification service handles the business decision. One can change without breaking the other.
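Here is a minimal Python sketch of that boundary. The chatbot hands over a plain dict and gets back one of the three decisions; the rules live in a separate list it never inspects. The required fields and the two qualifying profiles are hypothetical placeholders for whatever your sales team defines, and in a real deployment `qualify` would sit behind an HTTP or queue interface rather than being a local function call.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Sequence

class Decision(Enum):
    QUALIFIED = "QUALIFIED"
    NOT_QUALIFIED = "NOT_QUALIFIED"
    NEEDS_MORE_INFO = "NEEDS_MORE_INFO"

# Data points the service needs before it will rule on a lead (assumed list).
REQUIRED_FIELDS = ("email", "company_size", "use_case")

@dataclass(frozen=True)
class Rule:
    name: str
    matches: Callable[[dict], bool]

# Hypothetical qualifying profiles: a lead matching any one of them qualifies.
# Sales can change these without touching the dialogue flow.
RULES = (
    Rule("mid_market_or_larger", lambda lead: lead["company_size"] >= 50),
    Rule("small_team_integration",
         lambda lead: lead["company_size"] >= 10 and lead["use_case"] == "integration"),
)

def qualify(lead: dict, rules: Sequence[Rule] = RULES) -> Decision:
    """Return a decision for a lead. The chatbot only ever sees this result."""
    # Ask the bot to collect more data rather than guessing on an incomplete lead.
    if any(lead.get(name) in (None, "") for name in REQUIRED_FIELDS):
        return Decision.NEEDS_MORE_INFO
    # Assumes company_size was already sanitized to an int upstream.
    if any(rule.matches(lead) for rule in rules):
        return Decision.QUALIFIED
    return Decision.NOT_QUALIFIED
```

On `NEEDS_MORE_INFO`, the dialogue manager simply transitions to whichever state collects the missing field; it never needs to know why a lead did or didn't qualify.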

Enforce Aggressive Input Sanitization at the Edge

User input is a firehose of garbage. It will contain typos, special characters, weird formatting, and outright nonsense. Allowing this raw data to flow into your system is negligent. Every piece of user-provided text must be sanitized and validated before any business logic is applied. This isn't just about security; it's about data integrity.

For an email field, check for the "@" symbol and a valid domain pattern. For a phone number, strip all non-numeric characters before storing it. For company names, trim whitespace and normalize casing. This cleaning should happen immediately upon receiving the input, before it's even evaluated for intent. This is your system's immune response, killing pathogens at the entry point before they can infect downstream systems like your CRM.

Failing to do this is how you end up with three different CRM entries for "Acme Corp", "acme corp.", and "Acme Corporation". It's a slow-motion data corruption disaster.
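A minimal Python sketch of those three cleaning steps follows. The email pattern is a deliberately loose shape check (local part, `@`, dotted domain ending in an alphabetic TLD), not full RFC 5322 validation, and lowercasing the whole address is a practical assumption rather than something the standard guarantees. Stripping trailing punctuation from company names goes one small step beyond the text and is labeled as such in the code.

```python
import re
import unicodedata
from typing import Optional

# Loose shape check only: something@domain.tld, no whitespace.
EMAIL_RE = re.compile(r"^[^@\s]+@[A-Za-z0-9-]+(\.[A-Za-z0-9-]+)*\.[A-Za-z]{2,}$")

def clean_email(raw: str) -> Optional[str]:
    """Trim and lowercase an email; return None if it fails the shape check."""
    email = raw.strip().lower()  # assumption: treat addresses as case-insensitive
    return email if EMAIL_RE.match(email) else None

def clean_phone(raw: str) -> str:
    """Strip every non-digit character (this also drops a leading '+')."""
    return re.sub(r"\D", "", raw)

def clean_company(raw: str) -> str:
    """Normalize Unicode, collapse whitespace, and casefold a company name."""
    name = unicodedata.normalize("NFKC", raw)
    name = " ".join(name.split())  # trims ends and collapses inner runs of spaces
    # Extra step beyond the text: drop trailing punctuation such as "Corp."
    return name.rstrip(".,;").casefold()
```

With this in place, "Acme Corp" and "acme corp." both become `acme corp`. "Acme Corporation" still won't match; collapsing legal suffixes like "Corp" and "Corporation" needs a suffix table or fuzzy matching, which is beyond this sketch.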

Use Confidence Scores to Avoid False Positives

Natural Language Understanding (NLU) models are not magic. They make predictions and assign a confidence score. A common mistake is to act on the top-predicted intent, regardless of its score. If a user types "What is your pricing?" and the NLU returns the `REQUEST_PRICING` intent with 98% confidence, that's a safe bet. If a user types "banana stand" and the NLU returns `REQUEST_PRICING` with 35% confidence, acting on that is a failure.

Establish a confidence threshold. A common baseline is around 70-75%. Any intent classified with a score below this threshold should trigger a clarification state, not a business action. The bot should respond with, "I'm not sure I understand. Can you rephrase that?" or offer a few buttons with the most likely intents. Blindly trusting a low-confidence prediction creates frustrating, nonsensical user experiences.

This threshold is a tuning knob. Set it too high, and your bot will be overly cautious. Set it too low, and it will constantly misinterpret users. You need to monitor your logs to find the right balance.
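The gating logic itself is small. This Python sketch assumes a hypothetical NLU call that returns `(intent, confidence)` pairs; it acts only when the top score clears the threshold and otherwise returns the likeliest intents as clarification buttons. The 0.70 default is simply the low end of the baseline above, not a recommendation for your data.

```python
from dataclasses import dataclass, field
from typing import List, Tuple

# Low end of the 70-75% baseline; tune it from your logs.
CONFIDENCE_THRESHOLD = 0.70

@dataclass
class Action:
    kind: str                      # "EXECUTE" or "CLARIFY"
    intent: str = ""
    options: List[str] = field(default_factory=list)

def route(ranked: List[Tuple[str, float]],
          threshold: float = CONFIDENCE_THRESHOLD,
          max_options: int = 3) -> Action:
    """Act on an NLU prediction only if it is confident enough.

    `ranked` holds (intent, confidence) pairs in any order, as a
    hypothetical NLU call might return them.
    """
    ranked = sorted(ranked, key=lambda pair: pair[1], reverse=True)
    if ranked and ranked[0][1] >= threshold:
        return Action("EXECUTE", intent=ranked[0][0])
    # Below the threshold: take no business action; offer the likeliest
    # intents as buttons instead.
    return Action("CLARIFY", options=[intent for intent, _ in ranked[:max_options]])
```

The "banana stand" case from above, scoring `REQUEST_PRICING` at 0.35, comes back as `CLARIFY` with that intent offered as one button among others, so the user confirms before anything happens.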

Build Defensive API Integrations

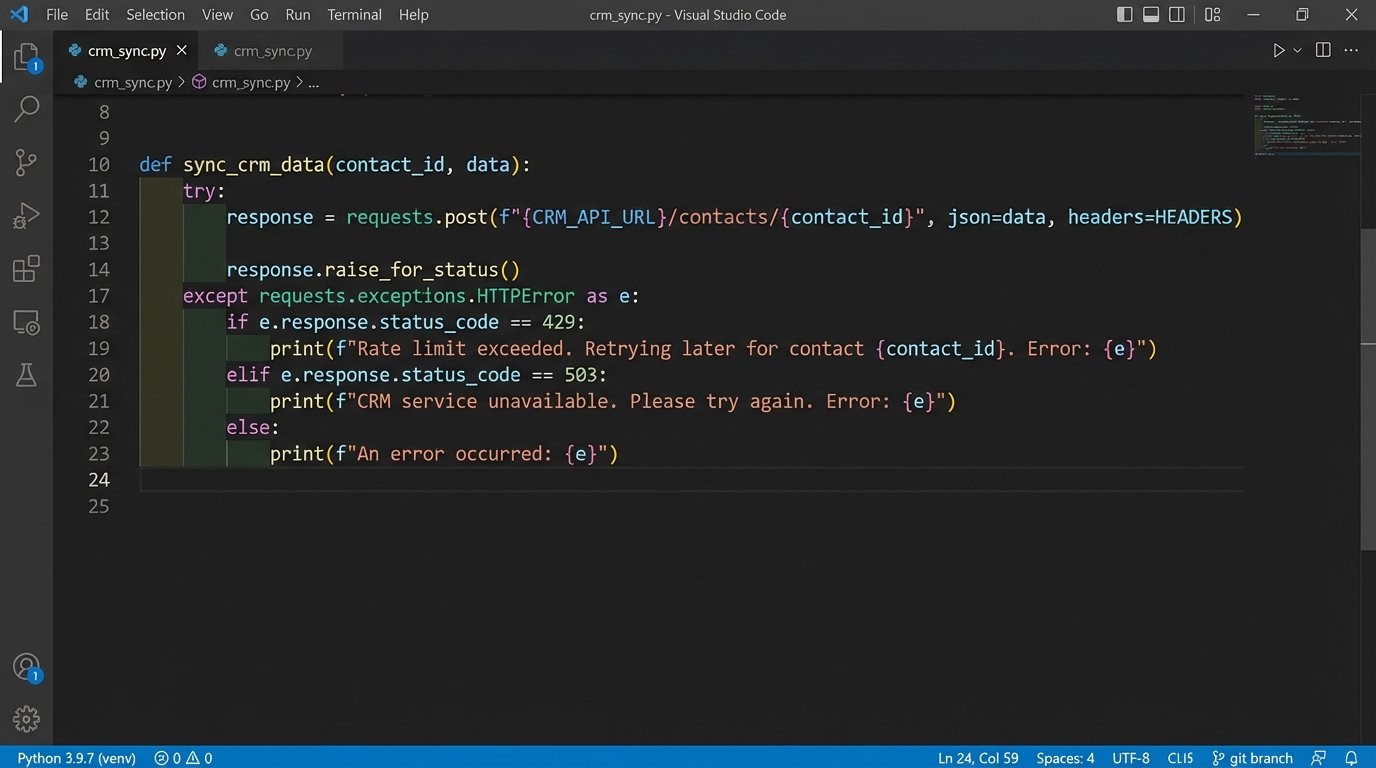

Your chatbot is a client to other services, most commonly a CRM like Salesforce or HubSpot. These APIs will fail. They will have rate limits. They will change their data schema with little warning. Your integration code must be built with this assumption of fallibility. A single failed API call should never terminate the user's conversation.

Implement retry logic with exponential backoff for transient network errors. Use a circuit breaker pattern to stop hammering a service that is clearly down. Your code should gracefully handle HTTP status codes beyond 200 OK. What happens on a 429 (Too Many Requests)? Or a 503 (Service Unavailable)? The bot should have a fallback state. It could tell the user, "Our system is a bit slow right now. I've saved your progress and a team member will reach out shortly."

Pushing the lead data to the CRM is like shoving a firehose through a needle. The data you have might not match the CRM's rigid schema, the API might be down, or you might hit a rate limit. Your code must anticipate these points of friction instead of crashing when they inevitably occur.
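The sketch below combines retry with exponential backoff and a circuit breaker in Python. It assumes a hypothetical `send` callable that performs the actual CRM request and returns `(status_code, body)`, so it isn't tied to any particular HTTP client or CRM SDK. The thresholds, delays, and set of retryable statuses are illustrative defaults.

```python
import random
import time
from typing import Callable, Optional, Tuple

# Statuses worth retrying: rate limiting and transient server-side failures.
RETRYABLE_STATUSES = {429, 502, 503, 504}

class CircuitOpenError(Exception):
    """Raised instead of calling the CRM while the breaker is open."""

class CircuitBreaker:
    def __init__(self, failure_threshold: int = 5, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def allow(self) -> bool:
        if self.opened_at is None:
            return True
        # Half-open: once the cool-down has passed, let a trial call through.
        return self.clock() - self.opened_at >= self.reset_after

    def record(self, success: bool) -> None:
        if success:
            self.failures, self.opened_at = 0, None
        else:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # (re)open the breaker

def call_with_retry(send: Callable[[], Tuple[int, dict]], breaker: CircuitBreaker,
                    max_attempts: int = 4, base_delay: float = 0.5,
                    sleep: Callable[[float], None] = time.sleep
                    ) -> Tuple[Optional[int], dict]:
    """Call `send`, retrying transient failures with exponential backoff.

    A returned status of None means the network call itself kept failing.
    """
    if not breaker.allow():
        raise CircuitOpenError("CRM circuit is open; switch to the fallback state")
    status: Optional[int] = None
    body: dict = {}
    for attempt in range(max_attempts):
        try:
            status, body = send()
        except ConnectionError:
            status, body = None, {}  # transient network failure
        if status is not None and status < 400:
            breaker.record(success=True)
            return status, body
        if status is not None and status not in RETRYABLE_STATUSES:
            # e.g. a 400 from a schema mismatch: retrying won't help, and it says
            # nothing about the CRM's availability, so the breaker ignores it.
            return status, body
        if attempt < max_attempts - 1:
            # Exponential backoff with full jitter: up to 0.5s, 1s, 2s, ...
            sleep(random.uniform(0, base_delay * 2 ** attempt))
    breaker.record(success=False)
    return status, body
```

When `call_with_retry` raises `CircuitOpenError` or returns a non-2xx status, the bot moves to its fallback state and queues the lead for later delivery instead of ending the conversation. A production version would also honor the `Retry-After` header that often accompanies a 429.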

Isolate External Calls from the Main Thread

Never make a blocking API call for data enrichment while the user is waiting. When a user provides an email, it's tempting to immediately call an enrichment service like Clearbit to fetch company data. This introduces latency. If the enrichment API is slow, the user is stuck waiting for the bot to respond. This kills the conversational flow.

The solution is to perform these operations asynchronously. Capture the basic lead information first to secure the lead. End the conversation cleanly. Then, trigger a background job to handle the enrichment. This job can call multiple APIs, merge the data, and then update the lead record in the CRM. The user gets a fast experience, and you get enriched data without the conversational lag.

The trade-off is that the sales rep sees the lead appear in the CRM moments before the fully enriched data arrives. That is a far better problem to have than a conversation abandoned because your bot was too slow.

Engineer a Clear Human Handoff Path

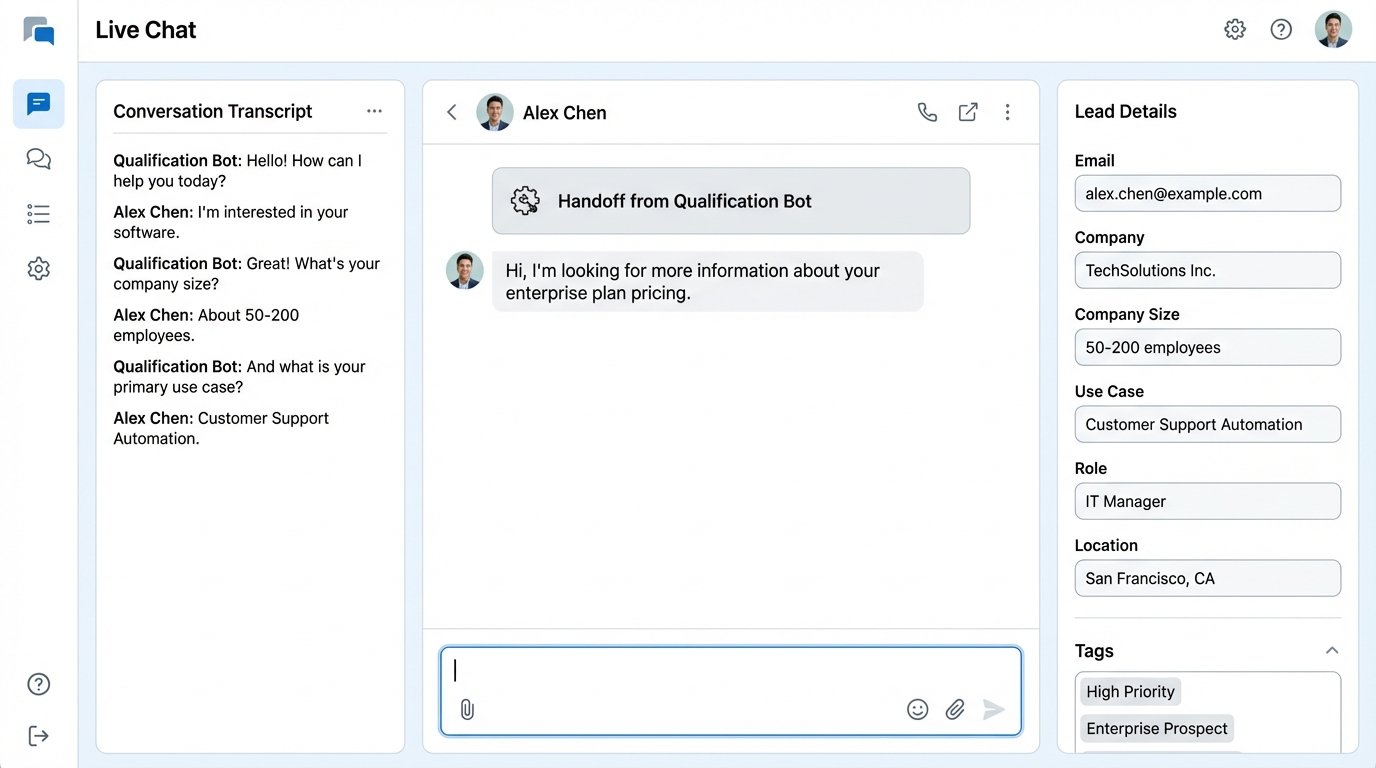

No chatbot can handle 100% of queries. You must design an "eject button" for when the automation reaches its limit. This isn't just a fallback; it's a critical feature. The triggers for handoff should be explicit and multi-faceted.

- User Request: The most obvious one. If a user types "talk to a human" or "speak with an agent," the bot must immediately initiate the handoff process.

- Repeated Failure: If the bot fails to understand the user's input two or three times in a row, it should stop trying and offer to connect them to a person. This prevents "I'm sorry, I don't understand" loops that infuriate users.

- High-Value Intent: Certain keywords or intents should trigger an immediate escalation. If a user mentions a competitor by name or uses terms like "legal notice" or "security vulnerability," bypass the rest of the qualification flow and get a human involved immediately.

When the handoff occurs, the bot must pass the entire conversation transcript and any extracted data to the human agent. Forcing the user to repeat themselves is the cardinal sin of chatbot design. The agent needs the full context to take over seamlessly.
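The three triggers and the handoff payload can be sketched in Python as follows. The phrase pattern, escalation terms, competitor name, and failure limit are all hypothetical placeholders that would normally come from configuration, and plain substring matching is a crude stand-in for a proper intent or entity model. The sketch assumes the dialogue manager increments `consecutive_failures` on each clarification and resets it after an understood turn.

```python
import re
from dataclasses import dataclass, field
from typing import List, Optional

# Illustrative triggers; real lists would live in configuration.
HUMAN_REQUEST = re.compile(
    r"\b(?:talk|speak|chat)\s+(?:to|with)\s+(?:an?\s+|the\s+)?(?:human|person|agent|someone)\b",
    re.IGNORECASE,
)
ESCALATION_TERMS = ("legal notice", "security vulnerability")
COMPETITORS = ("competitorco",)         # hypothetical competitor name
MAX_CONSECUTIVE_FAILURES = 3            # the text suggests two or three

@dataclass
class Conversation:
    transcript: List[str] = field(default_factory=list)
    entities: dict = field(default_factory=dict)
    consecutive_failures: int = 0       # maintained by the dialogue manager

def handoff_reason(convo: Conversation, text: str) -> Optional[str]:
    """Return why this turn should go to a human, or None to continue."""
    if HUMAN_REQUEST.search(text):
        return "user_request"
    if convo.consecutive_failures >= MAX_CONSECUTIVE_FAILURES:
        return "repeated_failure"
    lowered = text.lower()
    if any(term in lowered for term in ESCALATION_TERMS + COMPETITORS):
        return "high_value_intent"
    return None

def handoff_payload(convo: Conversation, reason: str) -> dict:
    """Everything the agent needs so the user never has to repeat themselves."""
    return {
        "reason": reason,
        "transcript": list(convo.transcript),
        "entities": dict(convo.entities),
    }
```

Checking the explicit user request first means the eject button always works, even in the middle of a failure loop.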

Log for Debugging, Not for Vanity Metrics

Standard chatbot analytics dashboards are filled with useless metrics like "total conversations" or "engagement rate." These are vanity metrics for marketing decks. For an engineer, the logs are the ground truth. Your logging strategy should be built around answering one question: "Why did it break?"

Your logs must be granular. For every single turn in a conversation, you should log the raw user input, the sanitized input, the detected intent, the confidence score, all extracted entities, the current state of the state machine, and the bot's response. When you make an API call, log the full request and the full response, masking any PII. This level of detail feels like overkill until you're trying to debug a production issue at 2 AM.

These logs are the flight data recorder for your chatbot. Without them, you're just guessing what went wrong.
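Here is a minimal Python sketch of a per-turn log record using the standard `logging` and `json` modules: one JSON line per turn with every field listed above, passed through a PII mask first. The two masking patterns are crude assumptions (email-shaped tokens, and runs of 8+ characters of digits and phone punctuation); a real deployment would use a vetted PII detector.

```python
import json
import logging
import re

logger = logging.getLogger("chatbot.turns")

# Crude PII patterns (assumptions): email-shaped tokens, and phone-like runs
# of 8+ characters of digits and punctuation that start and end with a digit.
EMAIL_RE = re.compile(r"[^@\s]+@[^@\s]+")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{6,}\d")

def mask_pii(text: str) -> str:
    text = EMAIL_RE.sub("<email>", text)
    return PHONE_RE.sub("<phone>", text)

def log_turn(*, session_id: str, state: str, raw_input: str, sanitized_input: str,
             intent: str, confidence: float, entities: dict, response: str) -> dict:
    """Emit one structured JSON line per conversation turn and return the record."""
    record = {
        "event": "turn",
        "session_id": session_id,
        "state": state,
        "raw_input": mask_pii(raw_input),
        "sanitized_input": mask_pii(sanitized_input),
        "intent": intent,
        "confidence": confidence,
        "entities": {name: mask_pii(str(value)) for name, value in entities.items()},
        "response": mask_pii(response),
    }
    logger.info(json.dumps(record))
    return record
```

Configure a handler at INFO level (for example `logging.basicConfig(level=logging.INFO)`) so these records are actually emitted. The same `mask_pii` pass would apply to the request and response bodies of each API call.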