Stop Syncing. Start Streaming.

Most real estate brokerages think their core technical problem is getting their CRM and transaction management systems to talk to each other. They are wrong. The actual problem is that they are forcing two fundamentally different data models into a brittle, point-to-point synchronization that is guaranteed to fail. The result is data corruption, broken automations, and agents screaming that their commission data is wrong.

The industry is addicted to the easy fix: a no-code connector that promises a two-way sync. This approach is a technical debt factory. You are not building an integration. You are patching a leaky boat with chewing gum, waiting for the pressure of a schema change or an API rate limit to blow it wide open.

The Brittle Reality of Point-to-Point Connectors

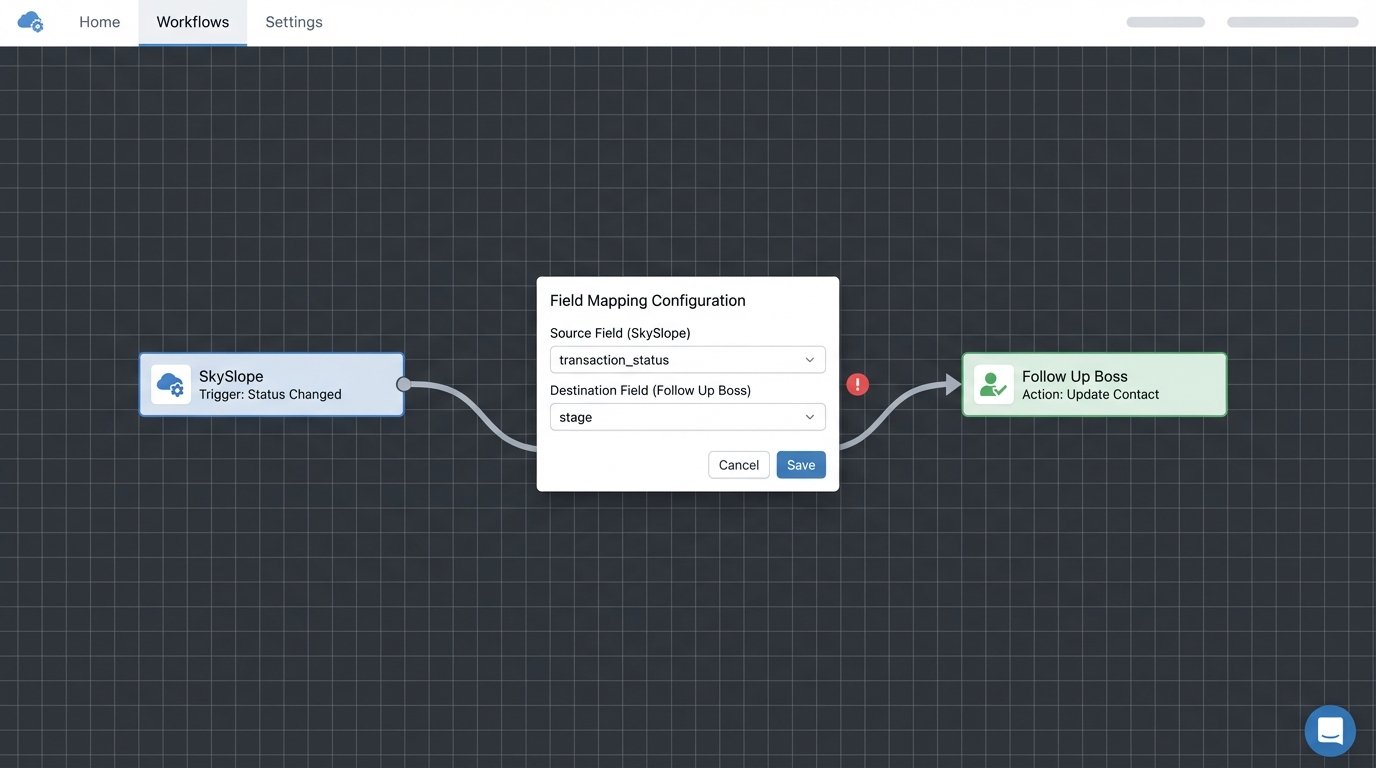

Consider the typical setup. A brokerage uses a CRM like Follow Up Boss and a transaction system like SkySlope. The goal is simple: when a deal moves to “Under Contract” in SkySlope, update the contact’s stage in Follow Up Boss. An off-the-shelf connector maps the `status` field from one system to the `stage` field in the other. This works fine for about a week.

Then the transaction system’s API developer decides to rename `status` to `transaction_status` in their v2.1 update. The connector, which was configured via a GUI with no version control or logging, breaks silently. No one notices until quarter-end reporting is a complete disaster. This is not a hypothetical. This is a Monday.
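To see why the rename fails silently, here is a minimal sketch of what a field-mapping connector effectively does. The function names `mapStatusNaive` and `mapStatusStrict` and the payload shapes are hypothetical illustrations, not any vendor's actual API.

```javascript
// Hypothetical sketch: how a field mapping behaves when the source renames a field.

// Naive mapping: copies `status` to `stage` and never checks that it exists.
function mapStatusNaive(transaction) {
  return { stage: transaction.status };
}

// Strict mapping: fails loudly when the expected field is missing,
// so a schema change surfaces immediately instead of at quarter-end.
function mapStatusStrict(transaction) {
  if (typeof transaction.status !== 'string') {
    throw new Error('Expected string field "status" on transaction payload');
  }
  return { stage: transaction.status };
}

// The upstream API renamed the field; the old name is simply absent.
const v2Payload = { transaction_status: 'Under Contract' };

console.log(mapStatusNaive(v2Payload)); // { stage: undefined }

try {
  mapStatusStrict(v2Payload);
} catch (err) {
  console.log(err.message);
}
```

The naive version raises no error at all. It writes an empty stage and moves on, which is the silent breakage described above.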

The core issue is coupling. The CRM integration is now tightly coupled to the transaction system’s specific API implementation. Any change on one side requires a manual, reactive fix on the other. This is not scalable, and it is certainly not resilient. It’s a series of single points of failure held together by a subscription fee.

Webhooks Are Not a Silver Bullet

The next level of sophistication is often a webhook-based integration. Instead of polling for changes, the transaction system sends a POST request to a defined endpoint when an event occurs. This seems more efficient, but it introduces its own set of problems. A webhook payload is often minimal, designed to be lightweight rather than comprehensive.

For example, a `document_signed` event might only contain the `transaction_id` and the `document_id`. To get the context, like which contact signed it and what their role is, your integration logic must then make one or more subsequent GET requests back to the source API. You just traded a polling delay for a chain of network calls that can, and will, fail.

A failed follow-up call means your system has to be smart enough to retry with exponential backoff. A slow response from the API means your webhook handler might time out, leaving the data in an inconsistent state. You have solved one problem by creating three more complex ones.

```javascript
// A typical webhook handler that introduces chained dependencies.
// skySlopeApi and followUpBossApi are assumed to be thin API client
// wrappers defined elsewhere.
exports.handleTransactionUpdate = async (req, res) => {
  // 1. Receive the initial lightweight webhook payload
  const { transactionId, eventType } = req.body;

  if (eventType !== 'STATUS_CHANGED') {
    res.status(200).send('Event ignored.');
    return;
  }

  try {
    // 2. First dependent API call to get transaction details
    const transactionDetails = await skySlopeApi.getTransaction(transactionId);
    const { status, participants } = transactionDetails.data;

    // 3. Second dependent API call to get primary client details
    const primaryClient = participants.find(p => p.role === 'Buyer');
    if (!primaryClient) {
      // Without this guard, primaryClient.email throws when no buyer is listed
      res.status(200).send('No buyer on transaction.');
      return;
    }
    const contactDetails = await followUpBossApi.getContactByEmail(primaryClient.email);

    // 4. Final API call to update the CRM
    if (contactDetails && status === 'Under Contract') {
      await followUpBossApi.updateContactStage(contactDetails.id, 'Contract');
    }

    res.status(200).send('Processing complete.');
  } catch (error) {
    // What's your retry logic? What if only the last call failed?
    console.error(`Failed to process transaction ${transactionId}:`, error);
    res.status(500).send('Internal Server Error.');
  }
};
```

Look at that code. A failure at any of the three `await` calls aborts the whole update with a 500, and whether the event is ever retried depends entirely on the sender's webhook retry policy. This is the architecture of anxiety.
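The handler's catch block asks about retry logic. One common answer is a wrapper that retries each outbound call with exponential backoff. The sketch below is a hypothetical helper, not part of either vendor's SDK; the name `retryWithBackoff` and its options are assumptions made for illustration.

```javascript
// Hypothetical helper: retry an async operation with exponential backoff.
// `sleep` is injectable so the delay schedule can be tested without waiting.
async function retryWithBackoff(operation, options = {}) {
  const {
    maxAttempts = 5,
    baseDelayMs = 200,
    sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms)),
  } = options;

  let lastError;
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    try {
      return await operation(attempt);
    } catch (error) {
      lastError = error;
      if (attempt === maxAttempts) break;
      // Delay doubles on each attempt: base, 2x base, 4x base, ...
      await sleep(baseDelayMs * 2 ** (attempt - 1));
    }
  }
  // Every attempt failed; surface the last error to the caller.
  throw lastError;
}
```

In the handler above, each call would be wrapped individually, for example `await retryWithBackoff(() => skySlopeApi.getTransaction(transactionId))`. Retrying is only safe when the wrapped call can be repeated without side effects, and production versions usually add random jitter to the delay. Even then, the retries run inside the webhook request, so the timeout problem remains.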

The Schema Mismatch Nightmare

The most persistent problem is the data model impedance mismatch. A CRM thinks in terms of `Contacts`, `Leads`, and `Relationships`. A transaction system thinks in terms of `Transactions`, `Listings`, `Buyers`, `Sellers`, and `Checklists`. They are not the same objects. Forcing a transaction schema into a CRM schema is like trying to shove structured cabling through a plumbing pipe. The shapes are wrong, and you will eventually cause a blockage that halts the flow of data.

Whose data is the source of truth? If an agent updates a client’s phone number in the transaction system, should that overwrite the number in the CRM? What if the CRM number was updated more recently? Without a clear data mastering strategy and a system to handle these conflicts, you are just duplicating inconsistent data across your stack.
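One possible mastering rule, sketched below, gives certain fields a designated owning system and lets every other field fall back to "most recently updated wins". The field names, the ownership table, and the `resolveField` function are all hypothetical; the right rules depend on how a given brokerage actually works.

```javascript
// Hypothetical mastering rule: some fields have a designated owning system;
// fields without an owner fall back to "most recently updated wins".
const FIELD_OWNERS = {
  commissionRate: 'transactions', // assumption: the transaction system masters deal data
  leadSource: 'crm',              // assumption: the CRM masters marketing data
};

// Each candidate looks like { value, source, updatedAt }, where updatedAt
// is an ISO 8601 string. `candidates` is assumed to be non-empty.
function resolveField(fieldName, candidates) {
  const owner = FIELD_OWNERS[fieldName];
  const owned = candidates.find((c) => c.source === owner);
  if (owned) return owned;

  // No designated owner, or the owner supplied no value: newest timestamp wins.
  return candidates.reduce((newest, c) =>
    Date.parse(c.updatedAt) > Date.parse(newest.updatedAt) ? c : newest
  );
}

const phone = resolveField('phone', [
  { value: '555-0100', source: 'transactions', updatedAt: '2024-03-01T10:00:00Z' },
  { value: '555-0199', source: 'crm', updatedAt: '2024-03-05T09:30:00Z' },
]);
console.log(phone.value); // 555-0199
```

Here the phone number has no designated owner, so the more recent CRM value wins. A commission rate would always come from the transaction system, regardless of timestamps.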

This leads to a complete erosion of trust in the data. Agents stop using the CRM because “the data is always wrong,” and management cannot pull accurate reports to forecast revenue. The expensive tech stack becomes a liability instead of an asset.

A Better Architecture: The Centralized Event Bus

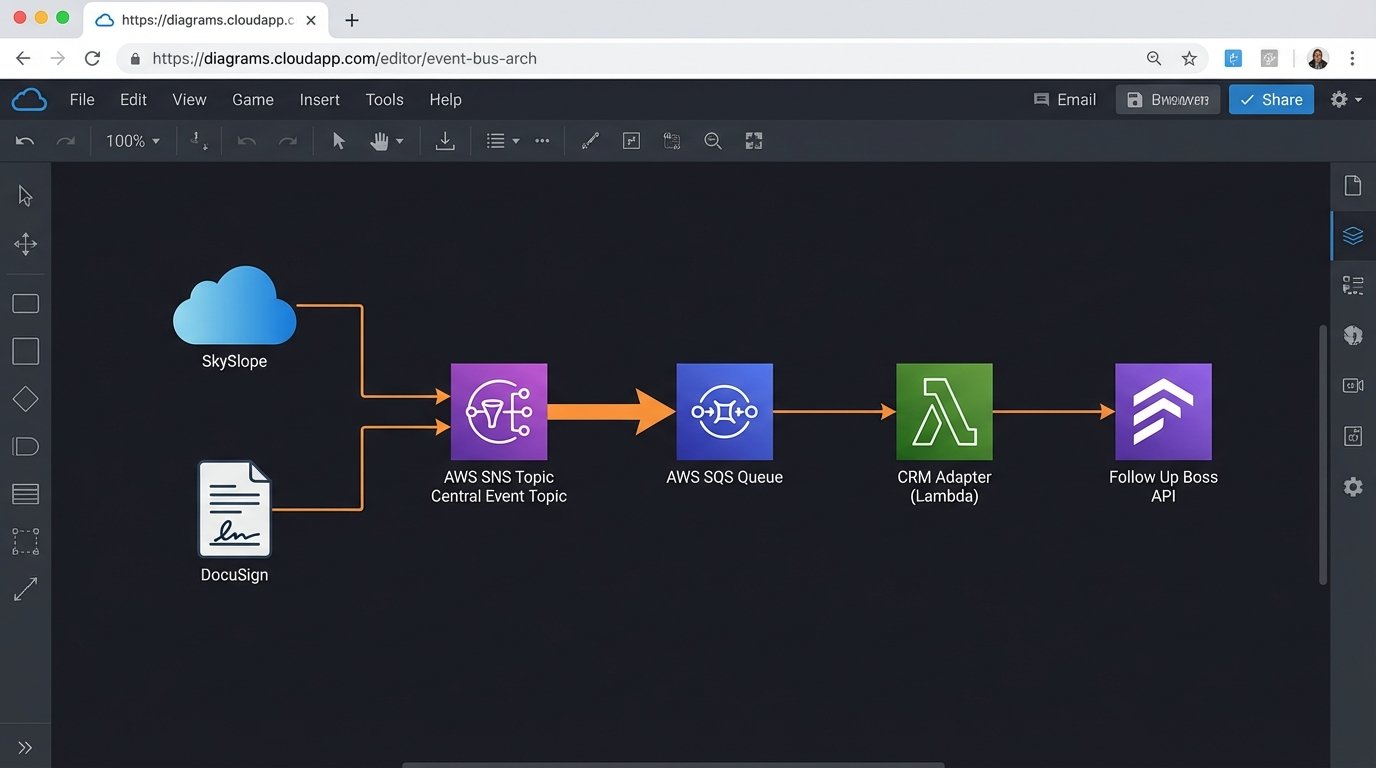

The solution is to stop thinking about point-to-point synchronization entirely. Brokerages must architect their data flow around a central, asynchronous event bus. In this model, systems do not talk directly to each other. They publish events to a central pipeline, and other systems subscribe to the events they care about. This decouples the applications entirely.

When a transaction status changes in SkySlope, nothing calls the Follow Up Boss API directly. Instead, a standardized `TransactionStatusChanged` event is published to the bus. The payload of this event is rich: it contains all the necessary context, including the transaction details, the participants, their roles, and their contact information. It is a self-contained unit of work.

A separate, dedicated service, which we can call the “CRM Adapter,” subscribes to these events. Its only job is to translate the standardized event payload into an API call that the CRM understands. If the CRM API changes, you only update this one adapter. The transaction system is completely unaffected. If you add a new accounting system, it can also subscribe to the same event stream without anyone having to modify the original integration.
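The decoupling can be sketched with an in-memory bus standing in for real infrastructure. Everything here is illustrative: the `EventBus` class, the `createCrmAdapter` factory, the `updateStageByEmail` client method, and the payload fields are assumptions, not a real vendor API.

```javascript
// Hypothetical in-memory stand-in for a real bus (SNS, Kafka, Pub/Sub).
class EventBus {
  constructor() {
    this.subscribers = new Map(); // event type -> array of handlers
  }

  subscribe(eventType, handler) {
    const handlers = this.subscribers.get(eventType) ?? [];
    handlers.push(handler);
    this.subscribers.set(eventType, handlers);
  }

  // Delivers the event to every subscriber of its type.
  // One failing consumer does not block delivery to the others.
  async publish(event) {
    const handlers = this.subscribers.get(event.type) ?? [];
    return Promise.allSettled(handlers.map(async (handler) => handler(event)));
  }
}

// CRM adapter: the only code that knows how the CRM names its stages.
const STAGE_BY_STATUS = { 'Under Contract': 'Contract' };

function createCrmAdapter(crmClient) {
  return async (event) => {
    const stage = STAGE_BY_STATUS[event.payload.status];
    const buyer = event.payload.participants.find((p) => p.role === 'Buyer');
    if (!stage || !buyer) return; // nothing to translate for this event
    await crmClient.updateStageByEmail(buyer.email, stage);
  };
}

// Wiring: two independent subscribers on the same event stream.
const bus = new EventBus();
const crmCalls = [];
const ledger = [];

bus.subscribe('TransactionStatusChanged', createCrmAdapter({
  // Fake CRM client that records calls instead of hitting a network
  updateStageByEmail: async (email, stage) => { crmCalls.push({ email, stage }); },
}));
bus.subscribe('TransactionStatusChanged', async (event) => {
  ledger.push(event.payload.transactionId); // e.g. a later accounting consumer
});

const delivered = bus.publish({
  type: 'TransactionStatusChanged',
  payload: {
    transactionId: 'txn-42',
    status: 'Under Contract',
    participants: [{ role: 'Buyer', email: 'buyer@example.com' }],
  },
});
```

The publisher never references the CRM, and the accounting subscriber was added without touching the adapter. That is the property the architecture is meant to buy.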

Building the Bus: Technology Choices

This is not a hypothetical architecture. The tools to build it are readily available and not cost-prohibitive. For a mid-sized brokerage, AWS SNS (Simple Notification Service) topics for fan-out combined with SQS (Simple Queue Service) queues for durable subscriptions is a battle-tested and cheap solution. For high-volume national franchises, Apache Kafka or Google Cloud Pub/Sub offers higher throughput and the ability to replay past events.

The key is standardizing the event schema. Before writing a single line of code, define the canonical structure for your core business events. Use a format like JSON Schema to enforce this structure. An event for a document signature should always look the same, regardless of whether it came from DocuSign or dotloop.
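As a rough illustration, a canonical document-signature event and a structural check might look like the sketch below. The field names are hypothetical, and `validateEvent` is a deliberately tiny hand-rolled check of required fields and primitive types, standing in for a real JSON Schema validator rather than implementing the specification.

```javascript
// Hypothetical canonical shape for a document-signature event, written in
// JSON Schema vocabulary. Only `required` and primitive `type` are checked below.
const documentSignedSchema = {
  type: 'object',
  required: ['eventId', 'type', 'occurredAt', 'transactionId', 'documentId', 'signer'],
  properties: {
    eventId: { type: 'string' },
    type: { type: 'string' },
    occurredAt: { type: 'string' },
    transactionId: { type: 'string' },
    documentId: { type: 'string' },
    signer: { type: 'object' },
  },
};

// Minimal stand-in for a real validator: returns a list of problems.
// Note: typeof treats null and arrays as 'object', which a real validator would not.
function validateEvent(schema, event) {
  const errors = [];
  for (const field of schema.required) {
    if (!(field in event)) errors.push(`missing required field: ${field}`);
  }
  for (const [field, rule] of Object.entries(schema.properties)) {
    if (field in event && typeof event[field] !== rule.type) {
      errors.push(`field ${field} should be of type ${rule.type}`);
    }
  }
  return errors;
}

// The same shape, whichever e-signature vendor produced the original signal.
const signedEvent = {
  eventId: 'evt-1',
  type: 'DocumentSigned',
  occurredAt: '2024-03-05T09:30:00Z',
  transactionId: 'txn-42',
  documentId: 'doc-7',
  signer: { email: 'buyer@example.com', role: 'Buyer' },
};

console.log(validateEvent(documentSignedSchema, signedEvent)); // []
console.log(validateEvent(documentSignedSchema, { eventId: 'evt-2' }).length); // 5
```

Rejecting malformed events at the publisher keeps every downstream adapter from having to defend against each vendor's quirks separately.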

- Event Publisher: The source system (e.g., the transaction platform). Its only responsibility is to fire a well-formed event into the bus.

- Event Bus: The transport layer (e.g., SNS, Kafka). It routes events from publishers to subscribers.

- Event Consumer (Adapter): A small, stateless service that subscribes to events and executes logic. This is where you house the logic to call the destination system’s API.

This model converts your data flow from a tangled web of dependencies into a clean, linear, and observable pipeline.

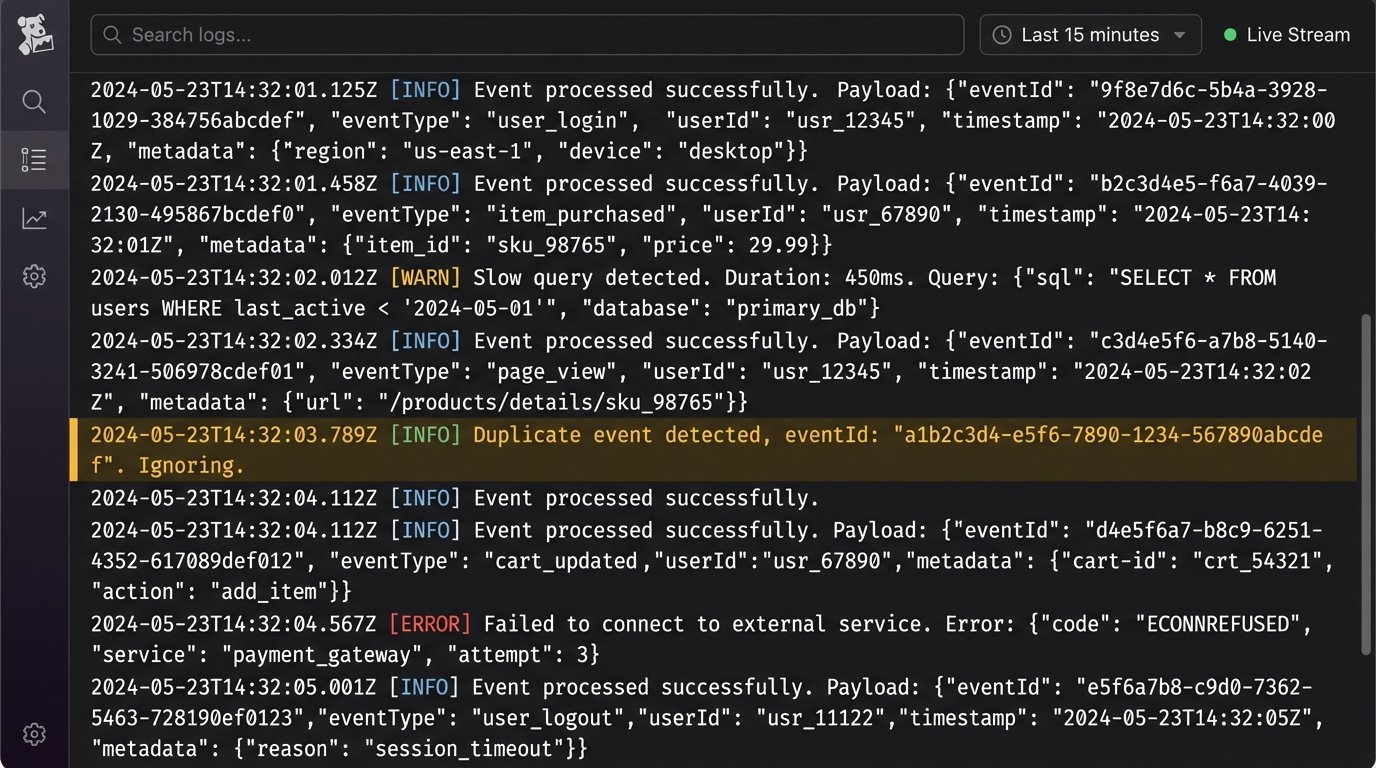

The Non-Negotiable: Idempotency

In a distributed system, you must assume that events can be delivered more than once. Your consuming services must be idempotent. This means that processing the same event multiple times has the same effect as processing it just once. If you receive a `ContactCreated` event twice, you should not create two contacts in your CRM.

The standard way to handle this is with an idempotency key. The publishing system generates a unique identifier for every event (e.g., a UUID). The consumer service maintains a short-term cache of the keys it has already processed. Before processing a new event, it checks if the key is in the cache. If it is, the event is discarded. If not, it is processed, and the key is added to the cache.

```javascript
// Simplified idempotent consumer logic

// In a real system, this Set would be a Redis cache or similar
// with a TTL to prevent it from growing indefinitely.
const processedEventIds = new Set();

function processEvent(event) {
  const { eventId, payload } = event;

  if (processedEventIds.has(eventId)) {
    console.log(`Duplicate event ${eventId} detected. Ignoring.`);
    return;
  }

  // Actual business logic here
  updateCrmRecord(payload);

  // Record the key only after processing succeeds, so a failed event can be retried
  processedEventIds.add(eventId);
}
```

Building for idempotency from day one prevents a whole class of data duplication bugs that are maddening to debug in a production environment.
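The TTL-backed cache can be sketched in memory as follows. `ProcessedEventStore` and `createIdempotentConsumer` are hypothetical names, and the Map-based store is a single-process stand-in for a shared cache such as Redis.

```javascript
// Hypothetical in-memory dedupe store with expiry, standing in for Redis or similar.
class ProcessedEventStore {
  constructor(ttlMs, now = () => Date.now()) {
    this.ttlMs = ttlMs;
    this.now = now;              // injectable clock, useful for tests
    this.expiryById = new Map(); // eventId -> expiry timestamp in ms
  }

  // True if the event id was recorded and has not yet expired.
  has(eventId) {
    this.evictExpired();
    return this.expiryById.has(eventId);
  }

  // Records the event id with an expiry of now + ttlMs.
  add(eventId) {
    this.expiryById.set(eventId, this.now() + this.ttlMs);
  }

  // Drops every key whose expiry has passed, so the store cannot grow forever.
  evictExpired() {
    const currentTime = this.now();
    for (const [eventId, expiresAt] of this.expiryById) {
      if (expiresAt <= currentTime) this.expiryById.delete(eventId);
    }
  }
}

// Wraps a handler so that a repeated eventId is skipped.
// Returns true when the event was processed, false when it was a duplicate.
function createIdempotentConsumer(store, handler) {
  return (event) => {
    if (store.has(event.eventId)) return false;
    handler(event.payload);
    store.add(event.eventId); // recorded only after the handler succeeds
    return true;
  };
}
```

The TTL should comfortably exceed the longest window in which the bus might redeliver an event. With several consumer instances, the check and the write also need to be a single atomic operation in the shared store, which this single-process sketch does not address.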

Ownership Comes at a Price

This architecture is not a free lunch. It requires engineering talent to build and maintain. It is more complex upfront than signing up for a no-code connector. You are taking ownership of your data pipeline. That means you are also responsible for its monitoring, logging, and uptime.

The trade-off is control and resilience versus simplicity and fragility. The point-to-point connector is cheap and easy until it breaks at 2 AM on a Saturday. The event bus architecture is a capital investment in infrastructure that pays dividends in reliability and scalability. It allows you to swap out components of your tech stack without a full-scale rewrite of every integration.

Brokerages need to decide what business they are in. If you are a technology-forward company that views data as a core asset, you must own the pipeline. Relying on third-party connectors for mission-critical data flow is an abdication of that responsibility.

Your data pipeline is either a strategic asset or a ticking time bomb. There is no middle ground.