Lead Rot Is The Default State

Our lead funnel was bleeding money. We were paying a premium, about $75 per lead, for homeowner inquiries from sources like Zillow and Realtor.com. These leads were piped directly into a general Salesforce queue. The sales team, already overloaded, would cherry-pick what they perceived as the hottest prospects. The rest sat there.

We ran an audit on the previous quarter’s data. The numbers were grim. Over 80% of incoming leads received no follow-up at all once the first 24 hours had passed. The cost of that operational failure was north of $100,000 in discarded ad spend, which at $75 apiece is more than 1,300 paid leads left to rot. The problem wasn’t lead quality. It was a failure of speed and process. A lead that costs $75 is worthless if it isn’t touched for three days.

The manual process was the bottleneck. A human had to claim the lead, research the property, gauge the buyer’s intent from a sparse notes field, and then decide on the right email or call script. This sequence of manual decisions created enough friction to bring the entire funnel to a crawl. We weren’t nurturing leads; we were presiding over a lead graveyard.

The old system was like a backed-up sewer drain. New leads poured in from the top, but the blockage of manual follow-up meant most of them just stagnated and went toxic.

Forcing A Systemic Fix

A mandate came down to fix the lead-to-contact rate. The initial suggestion was to hire more sales assistants. This is a classic management solution: throw more bodies at a broken process. We argued against it. Adding more people would only increase the operational overhead and introduce more points of failure. The correct fix was to gut the manual process entirely and replace it with a deterministic, automated system.

The goal was to engineer a system that could ingest a raw lead, enrich it, segment it, and trigger a tailored communication sequence in under five seconds. Human intervention should be reserved for leads that demonstrated explicit intent, not for initial contact. This approach removes the bottleneck and forces a consistent, immediate response for every single lead we pay for.

Architecture of the Ingestion Engine

We decided to build a lightweight, serverless architecture to handle the logic. The core components were an API Gateway endpoint, an AWS Lambda function written in Python, and API connections to our data sources and marketing platform. Relying on a serverless function means we only pay for compute time when a lead actually arrives, which is far cheaper than maintaining an idle EC2 instance 24/7.

The process starts when a lead source POSTs a JSON payload to our API Gateway webhook. This is the trigger. The webhook is configured to invoke our primary Lambda function, passing the entire payload as the event object.

```json
{
  "lead_source": "Zillow Premier",
  "timestamp": "2023-10-26T10:00:00Z",
  "contact": {
    "full_name": "Jane Doe",
    "email": "jane.doe@example.com",
    "phone": "555-123-4567"
  },
  "property_inquiry": {
    "mls_id": "12345ABC",
    "address": "123 Main St, Anytown, USA",
    "price": 450000
  },
  "notes": "Interested in a showing this weekend. Has pre-approval."
}
```

This initial payload is often thin. It gives us the who and the what, but not the context needed for intelligent segmentation.
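A minimal sketch of what the entry point could look like. The function names and `REQUIRED_KEYS` are illustrative assumptions, not the production code. It accepts the payload either as the event itself (as described above) or as a JSON string under `body`, which is how API Gateway delivers it when a Lambda proxy integration is used.

```python
import json

# Top-level keys we expect from a lead source (taken from the sample payload).
REQUIRED_KEYS = ("lead_source", "contact", "property_inquiry")


def parse_lead(event):
    """Return the lead payload as a dict, whichever way API Gateway passed it."""
    # With a Lambda proxy integration the payload arrives as a JSON string
    # under "body"; with a custom integration the event is the payload itself.
    if isinstance(event, dict) and isinstance(event.get("body"), str):
        payload = json.loads(event["body"])
    else:
        payload = event
    if not isinstance(payload, dict):
        raise ValueError("lead payload must be a JSON object")
    missing = [key for key in REQUIRED_KEYS if key not in payload]
    if missing:
        raise ValueError("lead payload missing keys: " + ", ".join(missing))
    return payload


def lambda_handler(event, context):
    """Entry point: validate the payload, then hand off to the pipeline."""
    try:
        lead = parse_lead(event)
    except ValueError as exc:  # json.JSONDecodeError is a ValueError subclass
        return {"statusCode": 400, "body": json.dumps({"error": str(exc)})}
    # Normalization, enrichment, and segmentation would run here.
    return {"statusCode": 200, "body": json.dumps({"accepted": lead["lead_source"]})}
```

Rejecting malformed payloads with a 400 at the door keeps bad data from reaching the enrichment and marketing APIs, where failures are harder to trace.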

Data Enrichment and Segmentation Logic

The Lambda function’s first job is to strip and normalize the incoming data. Phone numbers are standardized to E.164 format. Names are split into first and last. The core task, however, is enrichment. The function makes a blocking API call to a property data service using the MLS ID or address. This call pulls back critical data points not present in the original lead, like property tax history, school district ratings, and days on market.
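The normalization step can be sketched roughly as follows. This is an illustration under simplifying assumptions: it only handles US-style numbers (country code `1`) and splits names on the first space. The helper names `to_e164` and `split_name` are hypothetical; a dedicated library such as `phonenumbers` is likely a better fit for international or messy input.

```python
import re


def to_e164(raw_phone, default_country_code="1"):
    """Convert a US-style phone string to E.164, or return None if unusable."""
    digits = re.sub(r"\D", "", raw_phone or "")
    if len(digits) == 10:  # national number without a country code
        return "+" + default_country_code + digits
    if len(digits) == 11 and digits.startswith(default_country_code):
        return "+" + digits
    return None  # caller decides how to treat an invalid number


def split_name(full_name):
    """Split on the first space: first token is the first name, rest is the last."""
    parts = (full_name or "").strip().split(None, 1)
    first = parts[0] if parts else ""
    last = parts[1] if len(parts) > 1 else ""
    return first, last
```

For the sample lead, `to_e164("555-123-4567")` gives `"+15551234567"` and `split_name("Jane Doe")` gives `("Jane", "Doe")`. Returning `None` for an unusable number lets the caller flag the lead instead of passing a bad value downstream.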

With an enriched data object, the segmentation logic kicks in. This is a block of conditional statements that categorizes the lead into one of several buckets. We kept the logic straightforward to start. We parse the `notes` field for keywords like “pre-approved” or “investor”. We check the `price` against local market averages. The result is a simple rule-based classifier that assigns each lead exactly one primary status.

- Status: HOT_BUYER – Criteria: Notes contain “pre-approved” or “cash offer”; inquiry on a property listed for fewer than 14 days.

- Status: WARM_BUYER – Criteria: Inquiry on a property priced within 15% of the ZIP code’s median; no explicit financial keywords.

- Status: NURTURE_LONGTERM – Criteria: Inquiry on a property priced significantly above market rate; notes contain phrases like “just looking” or “thinking of moving next year”.

- Status: LOW_QUALITY – Criteria: Invalid contact information detected during normalization; inquiry on an off-market property.

This segmentation isn’t perfect, but it’s deterministic and immediate. It provides the targeting vector for the drip campaigns.
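The rules above can be sketched as a single function. Several details here are assumptions made for illustration: the enriched field names (`days_on_market`, `zip_median_price`, `contact_valid`, `off_market`), the order in which rules are checked, reading the HOT_BUYER criteria as "keyword and fresh listing", treating "significantly above market" as more than 15% over the ZIP median, and the fallback status for leads that match no rule.

```python
HOT_KEYWORDS = ("pre-approv", "cash offer")  # stem also matches "pre-approval"
NURTURE_KEYWORDS = ("just looking", "thinking of moving next year")


def segment_lead(lead):
    """Assign one status to an enriched lead dict. First matching rule wins."""
    notes = (lead.get("notes") or "").lower()
    price = lead.get("price")
    median = lead.get("zip_median_price")
    has_financial_keyword = any(k in notes for k in HOT_KEYWORDS)

    # LOW_QUALITY: unusable contact data or an off-market property.
    if not lead.get("contact_valid", True) or lead.get("off_market", False):
        return "LOW_QUALITY"

    # HOT_BUYER: financial keyword AND a listing under 14 days old.
    days = lead.get("days_on_market")
    if has_financial_keyword and days is not None and days < 14:
        return "HOT_BUYER"

    # NURTURE_LONGTERM: low-intent phrasing, or priced well above the median.
    ratio = price / median if price and median else None
    if any(k in notes for k in NURTURE_KEYWORDS) or (ratio is not None and ratio > 1.15):
        return "NURTURE_LONGTERM"

    # WARM_BUYER: within 15% of the median and no financial keywords.
    if ratio is not None and abs(ratio - 1.0) <= 0.15 and not has_financial_keyword:
        return "WARM_BUYER"

    return "NURTURE_LONGTERM"  # assumed fallback when no rule matches
```

Matching on the stem `pre-approv` rather than the exact word matters in practice: the sample payload says "Has pre-approval", which a literal search for "pre-approved" would miss.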

Bridging to the Marketing Platform

The final step for the Lambda is to push the enriched, segmented contact into our marketing automation platform, in this case, ActiveCampaign. We bypassed the platform’s clunky internal automation builder. The Lambda function holds all the logic. ActiveCampaign is treated as a “dumb” message delivery system. Our function makes a direct API call to add the contact, apply specific tags based on the segmentation status, and inject them into a pre-defined automation sequence.

```python
import requests

# Example of pushing a contact to the marketing API.
# The account URL, API key, and custom field IDs are placeholders.
api_url = "https://your-account.api-us1.com/api/3/contacts"
api_key = "YOUR_API_KEY"  # load from an environment variable or secret store in production

headers = {"Api-Token": api_key}

contact_data = {
    "contact": {
        "email": "jane.doe@example.com",
        "firstName": "Jane",
        "lastName": "Doe",
        "phone": "+15551234567",
        "fieldValues": [
            {"field": "1", "value": "HOT_BUYER"},  # Custom Field ID for 'Lead Status'
            {"field": "2", "value": "12345ABC"},  # Custom Field ID for 'Property of Interest MLS ID'
        ],
    }
}

# json= serializes the body and sets the Content-Type header for us;
# the timeout keeps a slow API from stalling the Lambda.
response = requests.post(api_url, headers=headers, json=contact_data, timeout=10)
response.raise_for_status()  # surface 4xx/5xx errors instead of ignoring them

# Logic to add contact to a specific automation would follow
```

This API-driven approach keeps our core business logic in our own codebase, written in Python. It limits vendor lock-in: the only platform-specific code is the thin layer of API calls, so we could swap out the marketing platform with minimal refactoring. The platform just sends the emails; our code decides who gets what, and when.
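The tagging and automation step could be sketched along these lines. The code only builds the request bodies so the mapping logic is easy to test; each pair would then be sent with the same `Api-Token` header used for contact creation. The tag and automation IDs are hypothetical, and the `contactTags` and `contactAutomations` endpoint shapes reflect our reading of the ActiveCampaign v3 API, so check them against the current reference before relying on them.

```python
# Hypothetical ID mappings; real IDs come from your ActiveCampaign account.
STATUS_TO_TAG_ID = {"HOT_BUYER": "10", "WARM_BUYER": "11", "NURTURE_LONGTERM": "12"}
STATUS_TO_AUTOMATION_ID = {"HOT_BUYER": "101", "WARM_BUYER": "102", "NURTURE_LONGTERM": "103"}


def build_enrollment_requests(base_url, contact_id, status):
    """Return (url, json_body) pairs that tag a contact and enroll it.

    Statuses without a mapping (e.g. LOW_QUALITY) produce no requests.
    """
    requests_to_send = []
    tag_id = STATUS_TO_TAG_ID.get(status)
    if tag_id is not None:
        requests_to_send.append((
            base_url + "/api/3/contactTags",
            {"contactTag": {"contact": str(contact_id), "tag": tag_id}},
        ))
    automation_id = STATUS_TO_AUTOMATION_ID.get(status)
    if automation_id is not None:
        requests_to_send.append((
            base_url + "/api/3/contactAutomations",
            {"contactAutomation": {"contact": str(contact_id), "automation": automation_id}},
        ))
    return requests_to_send
```

Keeping the status-to-ID mapping in one place is what makes a platform swap cheap: only this table and the URL paths would change.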

Bridging the lead gen platform with the marketing automation tool via our Lambda function was like building a custom gearbox. We could finally control the speed and torque of our lead delivery, instead of just letting the vendor’s default settings grind our gears.

Campaign Structure and Execution

We designed three primary drip campaigns, one for each lead status we actively market to; LOW_QUALITY leads are not enrolled. The two higher-intent sequences are outlined below. The content was built around providing value, not just asking for a meeting. Dynamic tags were used heavily to personalize the messages with the specific property address, price, and local market data we acquired during enrichment.

Campaign A: HOT_BUYER Sequence

This sequence is built for speed and aggression. The goal is to get a human-to-human conversation started within the first 24 hours.

- Time 0 (Immediate): SMS message sent. “Hi Jane, got your inquiry for 123 Main St. I’m checking on showing availability now. Are you free for a quick call in 15 mins? – John, XYZ Realty”

- Time + 1 Hour: Email 1 sent. Subject: “Regarding your inquiry for 123 Main St”. Body includes property photos, a link to the virtual tour, and similar listings in the area.

- Time + 24 Hours: Email 2 sent. Subject: “Market update for the Anytown area”. Body contains data on recent sales and average days on market for the specific neighborhood.

- Time + 48 Hours: Final SMS. “Following up on 123 Main St. It’s getting a lot of interest. Do you have a window to see it tomorrow?”
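To make the timing and the dynamic tags concrete, the HOT_BUYER steps above can be written down as data. In the setup described here the schedule lives in the marketing platform's automation, so this is only an illustrative sketch; `HOT_BUYER_SEQUENCE`, `schedule_sequence`, and the merge-field names are assumptions.

```python
from datetime import datetime, timedelta, timezone
from string import Template

# HOT_BUYER steps from the list above: (delay, channel, message or subject).
HOT_BUYER_SEQUENCE = [
    (timedelta(0), "sms", Template(
        "Hi $first_name, got your inquiry for $address. I'm checking on showing "
        "availability now. Are you free for a quick call in 15 mins? – $agent, XYZ Realty")),
    (timedelta(hours=1), "email", Template("Regarding your inquiry for $address")),
    (timedelta(hours=24), "email", Template("Market update for the $area area")),
    (timedelta(hours=48), "sms", Template(
        "Following up on $address. It's getting a lot of interest. "
        "Do you have a window to see it tomorrow?")),
]


def schedule_sequence(sequence, received_at, fields):
    """Return (send_time, channel, rendered_text) for every step."""
    return [
        (received_at + delay, channel, template.substitute(fields))
        for delay, channel, template in sequence
    ]


received = datetime(2023, 10, 26, 10, 0, tzinfo=timezone.utc)
fields = {"first_name": "Jane", "address": "123 Main St", "area": "Anytown", "agent": "John"}
for send_time, channel, text in schedule_sequence(HOT_BUYER_SEQUENCE, received, fields):
    print(send_time.isoformat(), channel, text)
```

`Template.substitute` raises `KeyError` when a merge field is missing, which is a useful failure mode here: a message with a blank address is worse than no message.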

Campaign B: WARM_BUYER Sequence

This sequence is slower and more educational. The goal is to build trust and establish our agent as a local market expert.

- Day 1: Welcome email with an overview of the home buying process and a link to a neighborhood guide for the area they inquired about.

- Day 7: Email with a guide to understanding mortgage options and a list of recommended local lenders.

- Day 14: Email showcasing a “hidden gem” property in a similar price range.

- Day 30: Email with a local market report and a soft call-to-action to discuss their search criteria in more detail.

Results: The Impact of Forced Automation

We let the system run for one full quarter and then compared the metrics against the previous quarter’s manual process. The results validated the entire engineering effort.

Lead response time dropped from an average of 28 hours to just 4 seconds. This single metric had a massive downstream effect. The overall lead-to-contact rate, defined as getting a meaningful reply via email or text, jumped from a pathetic 12% to a respectable 58%. We were finally engaging the leads we were paying for.

The most important KPI, lead-to-appointment-set, rose from 1.5% to 7.5%, a fivefold jump (a 400% increase). This translated directly to revenue. The automation system, which costs less than $50 a month in AWS fees, was directly responsible for salvaging deals that would have previously died in the CRM queue. The initial development cost was recouped within the first two months of operation.

Friction Points and Hard Lessons

The implementation was not without its problems. The first hurdle was data normalization. The various lead sources sent phone numbers and addresses in wildly inconsistent formats. We spent a non-trivial amount of time building a library of regex patterns to sanitize this data before it could be used. A bad phone number format would cause the SMS API to fail silently.

We also ran into API rate limits with our property enrichment service during a weekend spike in lead volume. The first version of the Lambda would simply fail and drop the lead. We were forced to implement a proper error handling block with an exponential backoff retry mechanism and a dead-letter queue to catch and re-process any leads that failed ingestion after multiple attempts.
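A minimal sketch of that retry pattern, under stated assumptions: `RateLimitError` stands in for whatever the enrichment client raises on an HTTP 429, and `dead_letter` is any callable that parks the lead (on AWS that would typically be a send to an SQS queue). The delays and attempt count are illustrative, not the values used in production.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical error raised when the enrichment API returns HTTP 429."""


def enrich_with_retry(lead, enrich, dead_letter, max_attempts=4,
                      base_delay=0.5, sleep=time.sleep):
    """Call enrich(lead); back off exponentially on rate limits.

    After max_attempts failures the lead goes to dead_letter(lead) and None
    is returned, so the lead is parked for re-processing instead of dropped.
    """
    for attempt in range(max_attempts):
        try:
            return enrich(lead)
        except RateLimitError:
            if attempt == max_attempts - 1:
                break
            # Delay doubles each attempt (base, 2*base, 4*base), plus jitter
            # so that concurrent Lambdas do not retry in lockstep.
            delay = base_delay * (2 ** attempt)
            sleep(delay + random.uniform(0, delay / 2))
    dead_letter(lead)
    return None
```

Passing `sleep` as a parameter keeps the function testable without real waiting. Total backoff also has to fit inside the Lambda timeout, which is one reason to keep the attempt count small and lean on the dead-letter queue.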

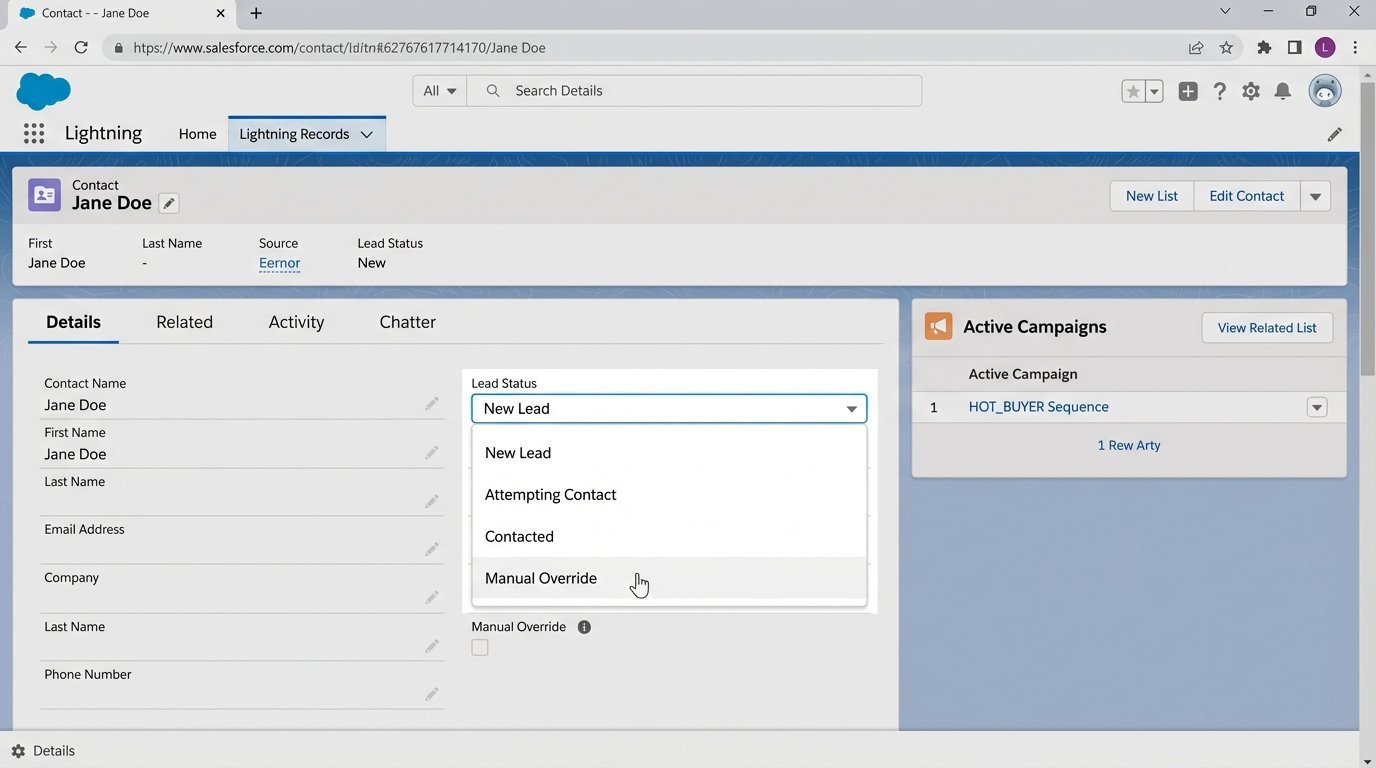

The biggest challenge was human, not technical. The sales team was initially resistant, fearing the automation was impersonal. We countered this by building a feedback loop. When an agent manually updated a lead’s status in Salesforce, a webhook fired back to our system, which immediately pulled that contact from all automated campaigns. This manual override acted as a circuit breaker, giving agents the final say and preventing the system from sending messages to a lead they were already talking to. It was a critical step for adoption.

Next Steps: Closing the Loop

The current system is effective, but it’s still a one-way street. The next phase of development is to implement lead scoring based on engagement. A contact who clicks on a mortgage calculator link in an email should have their score incremented. If a score passes a certain threshold, it should trigger a new automation that alerts an agent to make a manual phone call.
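Since this phase is not built yet, the following is only a sketch of how engagement scoring might work. The event names, point values, and threshold are all hypothetical, and a real version would persist scores in a datastore instead of in-memory structures.

```python
# Hypothetical point values per engagement event; tune against real data.
EVENT_POINTS = {
    "email_open": 1,
    "link_click": 5,
    "mortgage_calculator_click": 15,
    "sms_reply": 20,
}
ALERT_THRESHOLD = 30  # assumed cutoff for alerting an agent


def apply_event(scores, alerted, contact_id, event_type):
    """Add points for one event; return True if the agent alert should fire.

    The alert fires only once, the first time the score crosses the threshold.
    """
    scores[contact_id] = scores.get(contact_id, 0) + EVENT_POINTS.get(event_type, 0)
    if scores[contact_id] >= ALERT_THRESHOLD and contact_id not in alerted:
        alerted.add(contact_id)
        return True
    return False
```

Tracking which contacts have already triggered an alert keeps a highly engaged lead from paging the same agent on every subsequent click.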

The final goal is to feed closed-deal data back to the source. When a lead that originated from a Zillow ad eventually closes, we need to push that conversion data back to the ad platform’s API. This closes the attribution loop, allowing the marketing team to optimize ad spend based on what actually generates revenue, not just raw lead volume.

The takeaway is direct. Stop paying for leads only to have them expire in a queue. A single, well-architected serverless function can force process discipline and convert a leaky marketing funnel into a high-pressure sales pipeline.