Let’s get one thing straight. This title is a business school platitude. Choosing mission-critical software has nothing to do with romance and everything to do with irreversible architectural dependencies. The comparison isn’t just flimsy; it’s dangerous. It implies you can fix a bad choice with counseling. You can’t. You fix it with a six-figure data migration project and a year of lost productivity.

The real commitment isn’t emotional; it’s structural. It’s about data gravity, API handcuffs, and vendor lock-in that makes a prenuptial agreement look like a handshake deal. Forget the courtship. We need to talk about the technical divorce.

The Monolithic Lie: Deconstructing the ‘All-in-One’ Chimera

Every major real estate tech conference pushes the same dream: a single platform that elegantly handles your CRM, transaction management, marketing automation, and agent onboarding. This is a fantasy constructed by marketing departments. The reality is a Frankenstein’s monster of bolted-together acquisitions, each with its own legacy database schema and contradictory logic.

The result is a system at war with itself. A “Contact” entity in the CRM module has a different set of fields and validation rules than the “Client” entity in the transaction module. They might sync, but they rarely reconcile. We’re forced to write brittle, intermediate logic to bridge these gaps, creating a maintenance nightmare that breaks every time the vendor pushes a silent update. This isn’t a platform; it’s a collection of squabbling tenants in the same building.

You end up with data integrity issues that are nearly impossible to trace. Is the incorrect phone number coming from the lead import parser, the transaction coordinator’s manual entry, or the broken sync job that runs at 2 AM? Good luck finding out from the support desk.
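Tracing a bad value starts with knowing which writer produced it. The following is a minimal sketch, assuming every record carries a `source` label naming the job or module that wrote it. The record shape, field names, and source labels are all hypothetical, not any vendor's actual schema.

```python
from collections import defaultdict


def find_conflicts(records, key="email", fields=("phone",)):
    """Group records by a shared key and report fields whose values disagree.

    Each record is a dict with a hypothetical 'source' label naming the
    module or job that wrote it.
    """
    grouped = defaultdict(list)
    for rec in records:
        grouped[rec[key]].append(rec)

    conflicts = {}
    for k, recs in grouped.items():
        for field in fields:
            # Map each distinct value to the sources that produced it.
            by_value = defaultdict(list)
            for rec in recs:
                by_value[rec.get(field)].append(rec["source"])
            if len(by_value) > 1:
                conflicts[(k, field)] = dict(by_value)
    return conflicts


# Illustrative data: three writers, one of which disagrees on the phone number.
records = [
    {"source": "lead_import", "email": "pat@example.com", "phone": "555-0100"},
    {"source": "tc_manual_entry", "email": "pat@example.com", "phone": "555-0199"},
    {"source": "nightly_sync", "email": "pat@example.com", "phone": "555-0100"},
]
conflicts = find_conflicts(records)
```

Here the manual entry is the odd one out. None of this works unless the platform exposes who wrote what, which is precisely the information an all-in-one system tends to hide.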

Data Schizophrenia in Practice

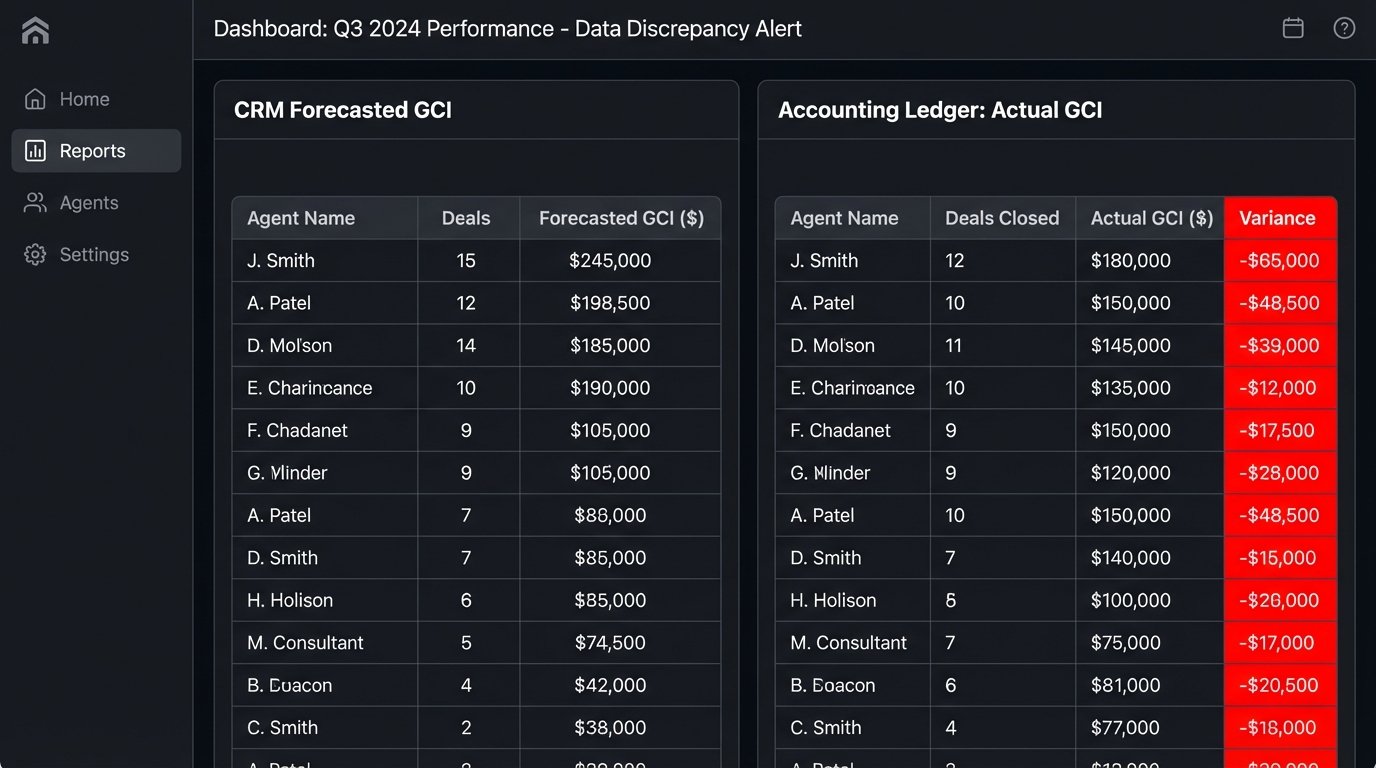

Consider the simple task of tracking an agent’s gross commission income (GCI). The CRM side calculates potential commission based on sales price and a standard percentage. The transaction side calculates actual commission based on the final closing statement, which includes splits, referrals, and concessions. These two numbers will never match without a purpose-built reconciliation layer.

An all-in-one system will often just show you both numbers in different reports, forcing your accounting team to spend days manually untangling the mess. The platform’s inability to maintain a single source of truth for its most critical data points is a fundamental design failure. It’s a wallet-drainer disguised as a convenience.
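To see how far apart the two figures can drift, here is a worked example. It is a sketch with invented numbers, and the order of deductions (concession first, then referral fee, then agent split) is an assumption; real closing statements and split agreements vary.

```python
from decimal import Decimal


def potential_gci(sale_price, commission_rate):
    """CRM-style estimate: sale price times a standard percentage."""
    return sale_price * commission_rate


def actual_gci(gross_commission, concession, referral_rate, agent_split):
    """Closing-statement figure after concession, referral fee, and split.

    The deduction order here is an illustrative assumption.
    """
    after_concession = gross_commission - concession
    after_referral = after_concession * (1 - referral_rate)
    return after_referral * agent_split


estimate = potential_gci(Decimal("500000"), Decimal("0.03"))
actual = actual_gci(
    gross_commission=estimate,
    concession=Decimal("1000"),
    referral_rate=Decimal("0.25"),
    agent_split=Decimal("0.70"),
)
gap = estimate - actual
```

With these inputs the CRM reports 15,000 while the closing statement supports 7,350. Both numbers are "correct" for their module, which is why a reconciliation layer has to state explicitly which one the business treats as GCI.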

Data Gravity: The Hostage Situation No One Talks About

Once you pour five years of transaction history, client data, and agent records into a single platform, you’ve created a center of data gravity. All your other tools, processes, and business logic are now caught in its orbit. Leaving is no longer a simple matter of exporting a CSV file. That’s a myth perpetuated by the sales team.

A true data extraction requires a deep understanding of the platform’s object model. To export a single “Transaction,” you must recursively pull related objects: properties, clients, cooperating agents, documents, commission disbursements, and activity logs. This often requires hundreds of API calls per transaction, slamming you right into the vendor’s undocumented rate limits. Trying to pull a decade of data this way is like trying to drain a lake with a teaspoon.
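The teaspoon is easy to quantify. This back-of-the-envelope sketch assumes a strictly sequential export; the transaction count, calls per transaction, and permitted request rate are illustrative guesses, not any vendor's published limits.

```python
def estimate_export(transactions, calls_per_transaction, requests_per_second):
    """Return (total_calls, days) for a strictly sequential export."""
    total_calls = transactions * calls_per_transaction
    seconds = total_calls / requests_per_second
    return total_calls, seconds / 86_400  # 86,400 seconds per day


# Hypothetical inputs: 50,000 deals, 200 calls each, 2 requests per second.
total_calls, days = estimate_export(
    transactions=50_000,
    calls_per_transaction=200,
    requests_per_second=2,
)
```

Under those assumptions the export needs ten million calls and roughly 58 days of uninterrupted running, before a single retry or failure is counted.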

The vendor knows this. They make it easy to inject data but punishingly difficult to extract it at scale. They are not incentivized to give you back your own data in a clean, portable format. Your data is their leverage.

The Technical Reality of an Export

Let’s say you want to pull all communications related to a transaction. An API might give you an endpoint like GET /api/v1/transactions/{id}/communications. But what does that return? A list of comment strings? Or a list of IDs that you then have to use to query separate /api/v1/emails/{id} and /api/v1/text_messages/{id} endpoints? Each of those subsequent calls counts against your rate limit.

Here’s a trivial Python snippet to illustrate the chain reaction. This isn’t a migration script; it’s the first five minutes of realizing how deep the rabbit hole goes.

    import time

    import requests

    API_KEY = 'YOUR_SECRET_KEY'
    HEADERS = {'Authorization': f'Bearer {API_KEY}'}
    BASE_URL = 'https://api.megacorp.re'

    transaction_ids = [101, 102, 103]  # Imagine 10,000 of these
    all_transaction_data = []

    for trans_id in transaction_ids:
        # First call: get basic transaction data
        trans_resp = requests.get(f'{BASE_URL}/transactions/{trans_id}', headers=HEADERS)
        trans_resp.raise_for_status()  # Fail loudly instead of parsing an error body
        transaction = trans_resp.json()

        # Second call: get associated client IDs
        clients_resp = requests.get(f'{BASE_URL}/transactions/{trans_id}/clients', headers=HEADERS)
        clients_resp.raise_for_status()
        client_ids = [c['id'] for c in clients_resp.json()]

        # N more calls: get details for each client
        transaction['clients_full'] = []
        for client_id in client_ids:
            client_resp = requests.get(f'{BASE_URL}/clients/{client_id}', headers=HEADERS)
            client_resp.raise_for_status()
            transaction['clients_full'].append(client_resp.json())
            time.sleep(0.5)  # Pray this is enough to avoid the rate limiter

        all_transaction_data.append(transaction)

This code is simplistic and already makes at least two API calls per transaction, plus one more for every client attached to it. Now scale that to 50,000 closed deals. The project shifts from data export to a complex, multi-threaded orchestration job that will run for days and fail constantly.
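A fixed sleep is a prayer, not a strategy. A slightly less fragile approach is to back off when the server answers HTTP 429 and to honor a `Retry-After` header when one is sent. The sketch below assumes the vendor uses 429 and sends `Retry-After` in seconds (the header may also carry an HTTP date, which this does not handle). `get_with_backoff` and `RateLimited` are hypothetical names, and `fetch` is any callable you wrap around your real HTTP client.

```python
import time


class RateLimited(Exception):
    """Raised when every attempt was rejected with HTTP 429."""


def get_with_backoff(fetch, max_attempts=5, base_delay=1.0, sleep=time.sleep):
    """Call fetch() until it stops returning 429, backing off between tries.

    fetch is any zero-argument callable returning (status_code, headers, body).
    """
    for attempt in range(max_attempts):
        status, headers, body = fetch()
        if status != 429:
            return status, body
        # Prefer the server's Retry-After hint (in seconds) when present;
        # otherwise fall back to exponential backoff: 1s, 2s, 4s, ...
        retry_after = headers.get("Retry-After")
        delay = float(retry_after) if retry_after else base_delay * 2 ** attempt
        sleep(delay)
    raise RateLimited(f"still rate limited after {max_attempts} attempts")


# Simulated vendor: two 429 responses, then success. No network involved.
responses = iter([
    (429, {"Retry-After": "3"}, None),
    (429, {}, None),
    (200, {}, {"id": 101}),
])
waits = []  # Record the delays instead of actually sleeping.
result = get_with_backoff(lambda: next(responses), sleep=waits.append)
```

Injecting `sleep` keeps the logic testable without waiting on a clock. It does nothing about the underlying problem: the export still takes as long as the rate limit says it takes.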

The API Integration Charade

Every modern platform claims to have a “rich API” and a “vibrant partner ecosystem.” This is usually code for a handful of poorly maintained REST endpoints and a Zapier connector that handles three basic triggers. The promise is interoperability. The reality is a minefield of authentication issues, breaking changes, and critical business functions that are mysteriously absent from the API documentation.

True interoperability isn’t just about having an API. It’s about having a *predictable* and *stable* contract between systems. I have seen APIs where updating a contact’s email address via a PUT request silently wiped out their mailing address, because PUT replaced the whole record with the payload and the vendor never offered a PATCH method for partial updates. This isn’t an integration; it’s data vandalism with an API key.
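The difference between the two verbs fits in a few lines. This is a toy model of the semantics, not any vendor's implementation: `put` treats the payload as the complete new record, while `patch` merges only the supplied fields. The contact fields are made up.

```python
def put(resource, payload):
    """Full replacement: the stored record becomes exactly the payload."""
    return dict(payload)


def patch(resource, changes):
    """Partial update: only the supplied fields change."""
    return {**resource, **changes}


contact = {
    "name": "Pat Doe",
    "email": "old@example.com",
    "mailing_address": "1 Main St",
}

after_put = put(contact, {"email": "new@example.com"})
after_patch = patch(contact, {"email": "new@example.com"})
```

After the PUT, the name and mailing address are gone. After the PATCH, they survive. If an API only offers the first behavior, every integration has to read the full record, modify it, and write it all back, and hope nobody else changed it in between.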

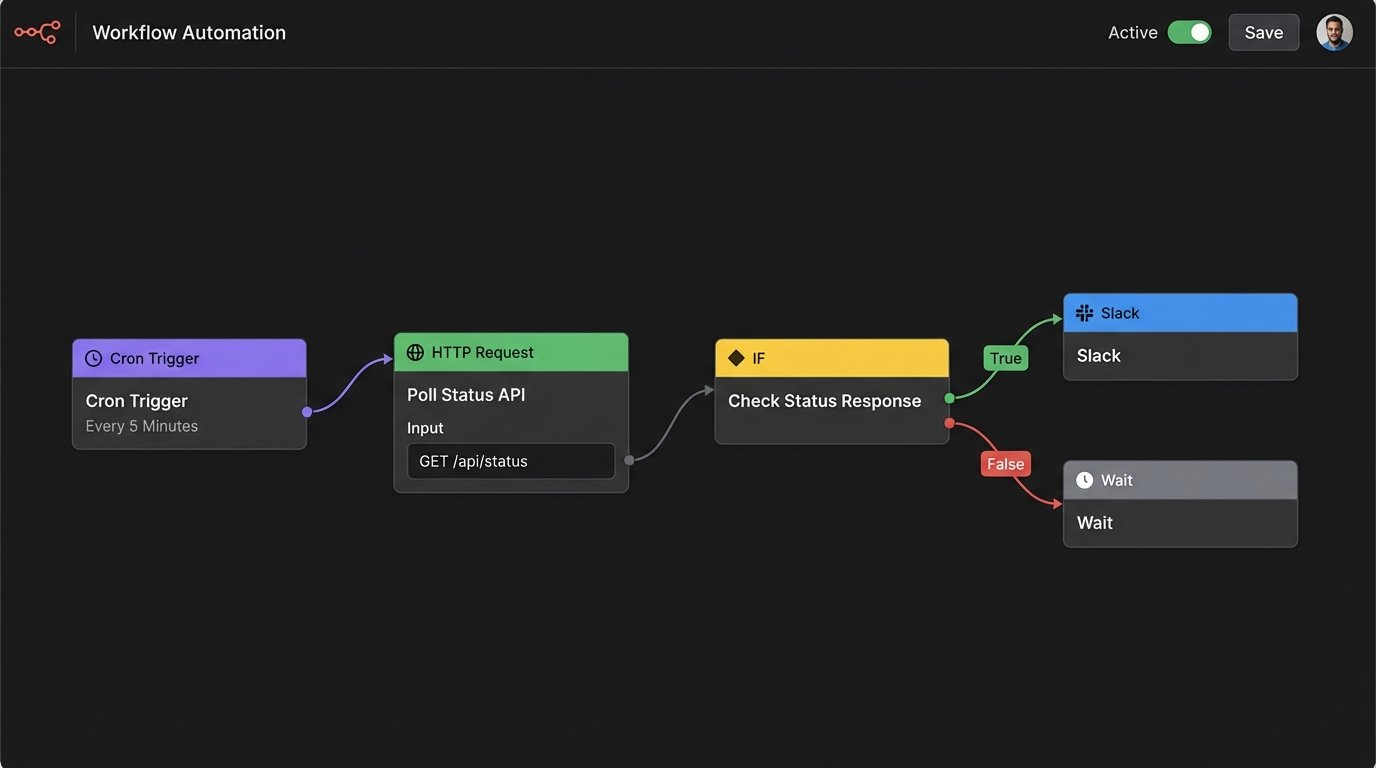

The most common failure is the lack of webhooks for critical events. You want to trigger a workflow the moment a document is signed? Too bad. The platform has no webhook for that event. Your only option is to build a polling mechanism that hammers their API every five minutes, begging for an update. This architecture is inefficient, slow, and fragile. It’s the technical equivalent of repeatedly asking “Are we there yet?” on a road trip.
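Without a webhook, the integration has to manufacture its own events by comparing snapshots. A minimal sketch of that diffing step, assuming the poll returns a mapping of document ID to status; the IDs and the `signed` status value are hypothetical.

```python
def newly_signed(previous, current):
    """Return IDs whose status changed to 'signed' since the last poll."""
    return sorted(
        doc_id
        for doc_id, status in current.items()
        if status == "signed" and previous.get(doc_id) != "signed"
    )


POLL_INTERVAL_MINUTES = 5
polls_per_day = 24 * 60 // POLL_INTERVAL_MINUTES  # requests spent just asking

# Two consecutive snapshots from a hypothetical status endpoint.
last_seen = {"doc-1": "sent", "doc-2": "signed"}
this_poll = {"doc-1": "signed", "doc-2": "signed", "doc-3": "sent"}
events = newly_signed(last_seen, this_poll)
```

At a five-minute interval that is 288 polls a day per watched resource, almost all of which return nothing new, and a signature can still sit unnoticed for up to five minutes.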

A Better Way: The Composable Brokerage Architecture

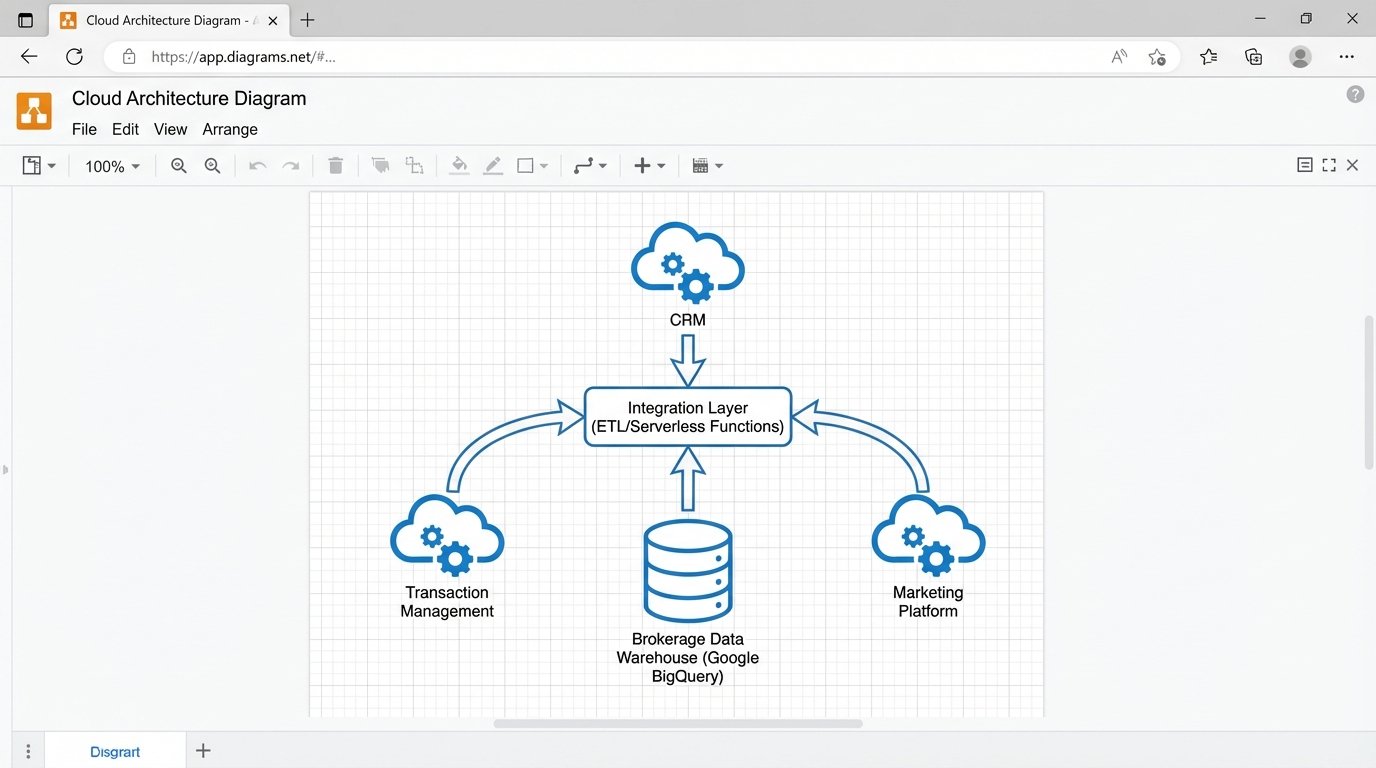

Stop looking for a software spouse. Start architecting a business. The goal is not to find a single monolithic system to rule them all. The goal is to assemble a stack of best-in-class tools that you control, rather than tools that control you. This means treating your own data warehouse as the sun and the vendor platforms as orbiting planets.

The architecture is straightforward. You select the best CRM for your lead generation process. You pick the best transaction management system for compliance. You choose the best marketing tool for your campaigns. The key is that none of these systems are the “source of truth” for your combined business intelligence. They are spokes on a wheel.

The hub of this wheel is your own data store, something as simple as a Google BigQuery dataset or an Amazon Redshift cluster. Each vendor platform pushes data to this central hub via an integration platform like Workato or a set of custom serverless functions. Your reports, commissions, and analytics are all run from *your* database. You own the schema. You own the data. This is the only way to build a future-proof tech stack. You’re not just buying software; you’re building an asset.
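What a spoke actually does on arrival can be sketched in a few lines. SQLite stands in for the warehouse here purely so the example runs anywhere; the table layout, `open_hub`, and `ingest` are hypothetical, and a real BigQuery or Redshift load path would look different. The point is the idempotent key: replaying the same vendor record must not create a duplicate.

```python
import json
import sqlite3


def open_hub(path=":memory:"):
    """Open the stand-in hub and make sure the landing table exists."""
    conn = sqlite3.connect(path)
    conn.execute("""
        CREATE TABLE IF NOT EXISTS raw_events (
            source_system TEXT NOT NULL,
            source_id     TEXT NOT NULL,
            entity        TEXT NOT NULL,
            payload       TEXT NOT NULL,
            PRIMARY KEY (source_system, entity, source_id)
        )
    """)
    return conn


def ingest(conn, source_system, entity, source_id, payload):
    """Upsert one vendor record so replayed deliveries stay idempotent."""
    conn.execute(
        "INSERT OR REPLACE INTO raw_events VALUES (?, ?, ?, ?)",
        (source_system, str(source_id), entity, json.dumps(payload)),
    )
    conn.commit()


hub = open_hub()
ingest(hub, "crm_vendor", "contact", 42, {"email": "old@example.com"})
ingest(hub, "crm_vendor", "contact", 42, {"email": "new@example.com"})  # replay
ingest(hub, "tx_vendor", "transaction", 101, {"status": "closed"})

row_count = hub.execute("SELECT COUNT(*) FROM raw_events").fetchone()[0]
```

Three deliveries land as two rows, with the contact holding its latest payload. Keeping the raw vendor payload alongside the source identifiers also means you can re-derive your canonical tables later without going back to the vendor's API.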

Implementing the Hub-and-Spoke Model

The first step is to establish your own canonical data model. Define what a “Contact,” “Agent,” and “Transaction” look like for your business, independent of any vendor’s definition. This becomes your internal schema. When you onboard a new tool, the task is to map its data model to yours. The integration layer is responsible for this transformation.
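In code, a canonical model is just a type you own plus one small mapping function per vendor. The sketch below assumes two made-up vendor payload shapes; every field name on both sides is hypothetical, and a real model would carry far more than four fields.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Contact:
    """The brokerage's own definition of a contact, independent of vendors."""
    contact_id: str
    full_name: str
    email: str
    phone: Optional[str] = None


def normalize_phone(raw):
    """Keep digits only so differently formatted numbers compare equal."""
    digits = "".join(ch for ch in (raw or "") if ch.isdigit())
    return digits or None


def contact_from_crm(record):
    """Map a hypothetical CRM payload onto the canonical Contact."""
    return Contact(
        contact_id=f"crm:{record['Id']}",
        full_name=f"{record['FirstName']} {record['LastName']}".strip(),
        email=record["EmailAddress"].strip().lower(),
        phone=normalize_phone(record.get("MobilePhone")),
    )


def contact_from_transactions(record):
    """Map a hypothetical transaction-system payload onto the same model."""
    return Contact(
        contact_id=f"tx:{record['client_id']}",
        full_name=record["name"].strip(),
        email=record["email"].strip().lower(),
        phone=normalize_phone(record.get("phone")),
    )


crm_contact = contact_from_crm({
    "Id": 7,
    "FirstName": "Pat",
    "LastName": "Doe",
    "EmailAddress": " Pat@Example.com ",
    "MobilePhone": "(555) 010-0100",
})
tx_contact = contact_from_transactions({
    "client_id": "c-19",
    "name": "Pat Doe",
    "email": "pat@example.com",
    "phone": "555.010.0100",
})
```

Once both records are in the canonical shape, the email and phone match exactly, so linking the CRM "Contact" to the transaction "Client" becomes a comparison rather than a forensic exercise.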

This model forces you to evaluate software on more important criteria. Instead of asking “Does it do everything?” you ask “How good is its API for exporting the specific data I need?” You prioritize vendors that provide robust webhooks, clear documentation, and fair rate limits. You are selecting a component, not a dictator.

Swapping out a component becomes trivial. If a better CRM comes along, you just build a new “spoke” that maps its data to your central hub. The core of your business operations, your reporting, and your historical data remain untouched. You can fire a vendor without burning down your business. That’s real operational freedom.
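"Trivial" is a strong word, but the shape of the swap is simple. In this sketch a spoke is nothing more than a function from vendor records to canonical rows, and the report only ever sees canonical rows. Both vendor payload formats and all names are invented for illustration.

```python
from typing import Callable, Dict, Iterable, List

# A spoke is any callable that turns vendor records into canonical dicts.
Spoke = Callable[[Iterable[dict]], List[dict]]


def old_crm_spoke(records):
    """Adapter for the outgoing CRM's (hypothetical) flat payload."""
    return [
        {"email": r["EmailAddress"].lower(), "stage": r["Status"]}
        for r in records
    ]


def new_crm_spoke(records):
    """Adapter for the replacement CRM's (hypothetical) nested payload."""
    return [
        {"email": r["contact"]["email"].lower(), "stage": r["pipeline_stage"]}
        for r in records
    ]


SPOKES: Dict[str, Spoke] = {"old_crm": old_crm_spoke, "new_crm": new_crm_spoke}


def leads_by_stage(canonical_rows):
    """A report that depends only on the canonical shape, never on a vendor."""
    counts: Dict[str, int] = {}
    for row in canonical_rows:
        counts[row["stage"]] = counts.get(row["stage"], 0) + 1
    return counts


old_export = [
    {"EmailAddress": "Pat@Example.com", "Status": "nurture"},
    {"EmailAddress": "lee@example.com", "Status": "active"},
]
new_export = [
    {"contact": {"email": "pat@example.com"}, "pipeline_stage": "nurture"},
    {"contact": {"email": "lee@example.com"}, "pipeline_stage": "active"},
]

report_before = leads_by_stage(SPOKES["old_crm"](old_export))
report_after = leads_by_stage(SPOKES["new_crm"](new_export))
```

The report function never changes when the vendor does. The real work of a migration moves into writing and validating the new adapter, which is a far smaller blast radius than rewriting every report.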