The real estate industry operates on a mountain of data but processes it with a teaspoon. Agents are sold software that promises efficiency but delivers vendor lock-in and bloated UIs. The fundamental operational model is broken because it treats a data-processing job as a sales job. It is not. The job is to manage an event-driven pipeline, and the agents who fail to grasp this will be automated into irrelevance.

A developer does not see a new client lead as a person to call. They see an incoming JSON payload. It has predictable fields, data types, and required values. The entire client lifecycle, from first contact to closing, is a state machine. Every step is a function that takes an input, processes it, and produces an output. Thinking this way is not dehumanizing. It is the only logical way to scale an operation that is fundamentally about data management.
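The state-machine framing can be made concrete with a minimal Python sketch. The stage names and allowed transitions below are illustrative assumptions, not an industry standard. The point is that an illegal jump, such as going from lead straight to closed, raises an error instead of silently corrupting the record.

```python
from enum import Enum

class Stage(Enum):
    # Hypothetical stage names for a buyer's lifecycle
    LEAD = "lead"
    QUALIFIED = "qualified"
    SHOWING = "showing"
    UNDER_CONTRACT = "under_contract"
    CLOSED = "closed"
    LOST = "lost"

# Each stage maps to the set of stages it may legally move to next
TRANSITIONS = {
    Stage.LEAD: {Stage.QUALIFIED, Stage.LOST},
    Stage.QUALIFIED: {Stage.SHOWING, Stage.LOST},
    Stage.SHOWING: {Stage.UNDER_CONTRACT, Stage.LOST},
    # A deal that falls through goes back to showings
    Stage.UNDER_CONTRACT: {Stage.CLOSED, Stage.SHOWING, Stage.LOST},
    Stage.CLOSED: set(),
    Stage.LOST: set(),
}

def advance(current: Stage, target: Stage) -> Stage:
    """Return the new stage, or raise ValueError if the transition is not allowed."""
    if target not in TRANSITIONS[current]:
        raise ValueError(f"Illegal transition: {current.value} -> {target.value}")
    return target
```

Every status change then goes through `advance`, so the transition table doubles as documentation of how a client is allowed to move through the pipeline.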

Deconstructing the Job into System Calls

An agent’s daily workflow, when stripped of its industry jargon, is just a series of system calls against various databases and APIs. The problem is that agents execute these calls manually, one at a time. This is the equivalent of a human toggling bits in memory instead of running a compiled program. It is slow, error-prone, and does not scale.

Lead Ingestion as an API Endpoint

A lead from Zillow or a website form is not a “hot prospect.” It is a POST request to your personal API. This payload contains structured data: `name`, `email`, `phone`, `property_of_interest`, and `timestamp`. A developer’s first instinct is to validate this data. Is the email format correct? Is the phone number valid? Does the property ID exist in the MLS database? An agent does this validation manually through a phone call. An automated script can do it in milliseconds.

The manual process introduces latency, and it makes the agent a single point of failure.
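The validation checks listed above fit in a few lines of Python. This is a minimal sketch under stated assumptions: the field names come from the payload described earlier, the phone rule assumes North American numbers, and `known_listing_ids` is a hypothetical stand-in for a lookup against your copy of the MLS data.

```python
import re
from datetime import datetime

REQUIRED_FIELDS = ("name", "email", "phone", "property_of_interest", "timestamp")
# Deliberately loose format check; only a confirmation message proves an address works
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

def validate_lead(payload, known_listing_ids=None):
    """Return a list of validation errors; an empty list means the lead passed."""
    errors = [f"missing field: {f}" for f in REQUIRED_FIELDS if not payload.get(f)]

    email = payload.get("email")
    if email and not EMAIL_RE.match(email):
        errors.append("invalid email format")

    # Assumption: North American numbers, 10 digits or 11 with a leading country code 1
    phone = payload.get("phone")
    digits = re.sub(r"\D", "", str(phone or ""))
    if phone and not (len(digits) == 10 or (len(digits) == 11 and digits[0] == "1")):
        errors.append("invalid phone number")

    ts = payload.get("timestamp")
    if ts:
        try:
            datetime.fromisoformat(ts)
        except (TypeError, ValueError):
            errors.append("timestamp is not ISO 8601")

    # Hypothetical MLS check: is the property ID one we actually know about?
    prop = payload.get("property_of_interest")
    if prop and known_listing_ids is not None and prop not in known_listing_ids:
        errors.append(f"unknown property: {prop}")

    return errors
```

Returning a list of errors rather than a single boolean means a rejected lead can be logged with every reason at once, which makes it easier to see which lead source sends the dirtiest data.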

Client Qualification as a Validation Script

The initial client conversation is not about building rapport. It is a synchronous information-gathering process to populate required fields in a database record. The agent asks: What is your budget? Are you pre-approved? What is your timeline? These are just variables in a qualification script. A developer would represent this as a simple conditional logic check.

```python
def is_client_qualified(client_data):
    # Each check is a logic gate; the first failure returns immediately with a reason.
    # The thresholds are example values for a specific target market.
    if not client_data['pre_approved']:
        return {'qualified': False, 'reason': 'No pre-approval'}

    if client_data['budget'] < 500000:
        return {'qualified': False, 'reason': 'Budget too low for target market'}

    if client_data['timeline_months'] > 6:
        return {'qualified': False, 'reason': 'Timeline too long'}

    return {'qualified': True, 'reason': None}
```

Executing this logic through a form or a chatbot before a human ever gets involved filters out noise. It lets the agent focus on pre-validated entities instead of wasting cycles on data that would fail the first logic gate. Your time is a finite resource; stop allocating it to unqualified leads.

Property Search as a Database Query

The MLS is a database. It is often a poorly designed, sluggish one with an archaic interface, but it is a database nonetheless. An agent searching for properties is executing a database query through a clunky web UI. They click filters for bedrooms, bathrooms, and square footage. A developer sees this for what it is: a `SELECT` statement with a `WHERE` clause.

Why click the same twenty filters every morning for five different clients? A developer would script this. They would write a query that runs automatically, compares the results to a list of previously sent properties, and emails only the new listings to the client. This is a trivial task for a script, but a mind-numbing manual chore for a human.

Stop being the UI for a database.
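The scripted morning search described above boils down to one SQL query plus a log of what was already sent. The sketch below uses Python's built-in `sqlite3` as a stand-in for whatever database holds your MLS data; the table names, columns, and criteria are hypothetical, and the email step is left out.

```python
import sqlite3

def create_schema(conn):
    # Hypothetical minimal schema: MLS listings plus a log of what each client was sent
    conn.executescript("""
        CREATE TABLE IF NOT EXISTS listings (
            listing_id TEXT PRIMARY KEY, beds INTEGER, baths REAL, price INTEGER
        );
        CREATE TABLE IF NOT EXISTS sent_listings (
            client_id TEXT, listing_id TEXT, PRIMARY KEY (client_id, listing_id)
        );
    """)

def new_matches(conn, client_id, min_beds, min_baths, max_price):
    """The saved search: a SELECT with a WHERE clause, minus anything already sent."""
    rows = conn.execute(
        """
        SELECT l.listing_id FROM listings AS l
        WHERE l.beds >= ? AND l.baths >= ? AND l.price <= ?
          AND NOT EXISTS (
              SELECT 1 FROM sent_listings AS s
              WHERE s.client_id = ? AND s.listing_id = l.listing_id
          )
        ORDER BY l.listing_id
        """,
        (min_beds, min_baths, max_price, client_id),
    ).fetchall()
    return [row[0] for row in rows]

def mark_sent(conn, client_id, listing_ids):
    """Log the listings that were emailed so the next run skips them."""
    conn.executemany(
        "INSERT OR IGNORE INTO sent_listings (client_id, listing_id) VALUES (?, ?)",
        [(client_id, lid) for lid in listing_ids],
    )
    conn.commit()
```

A scheduler such as cron could call `new_matches` once per client each morning, pass the results to your email step, and then call `mark_sent`. Five clients become five function calls instead of a hundred filter clicks.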

The Current Tech Stack is a Liability

Agents are convinced they need a specific “real estate CRM.” They pay hundreds of dollars a month for systems that are functionally identical to more generic, flexible platforms. These systems are often closed ecosystems designed to trap your data and your process. They are a liability, not an asset, because they prevent true automation and integration.

CRMs as Wallet-Draining Monoliths

Systems like Follow Up Boss or LionDesk are monoliths. They try to do everything and end up doing most of it poorly. Their APIs, if they exist at all, are often rate-limited, poorly documented, and locked behind a higher subscription tier. The business model is to hold your data hostage so you keep paying the monthly fee. A developer would never willingly architect a system that gives a third party that much control over their core business data.

You do not own your business if you do not own your data pipeline.

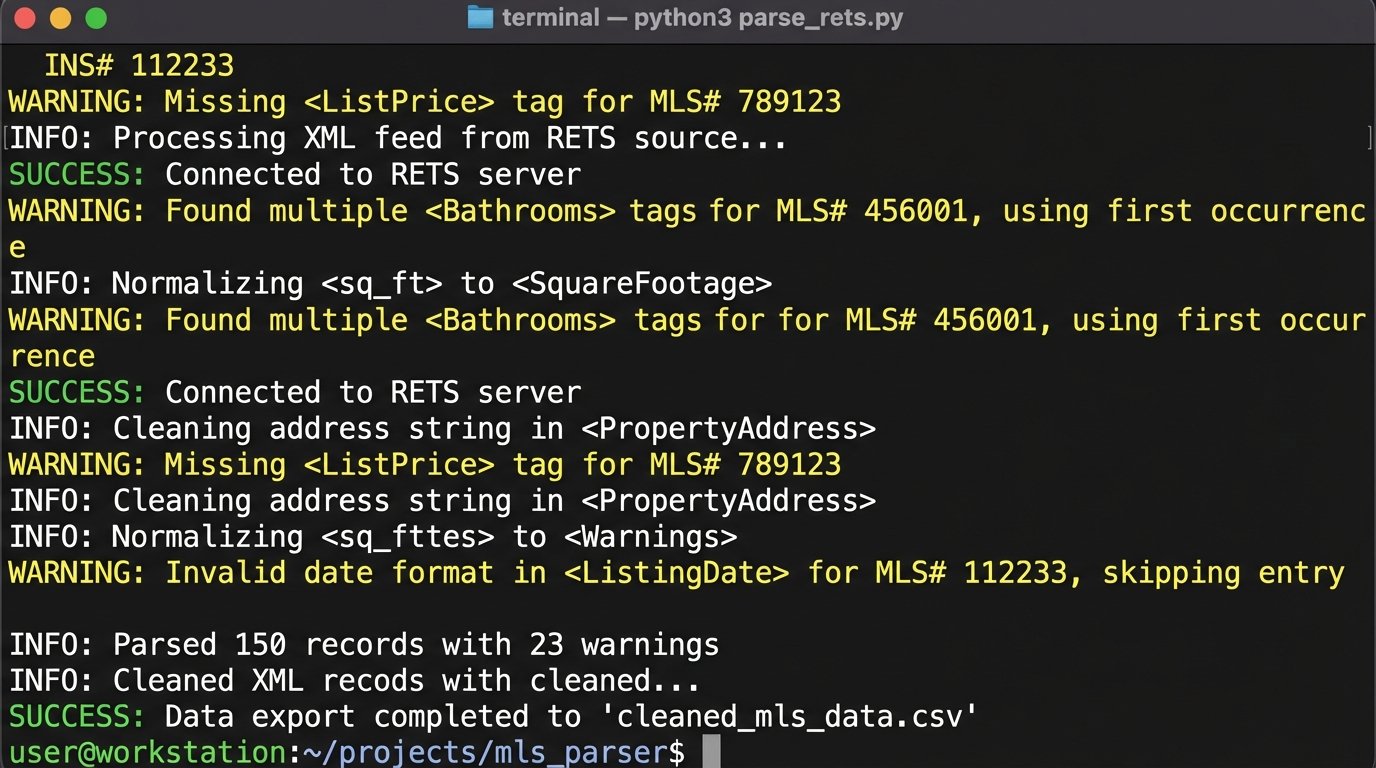

MLS Data Feeds: The Legacy Bottleneck

Getting data out of the MLS is the primary bottleneck for any serious automation. Many MLS boards still rely on the Real Estate Transaction Standard (RETS), even though the Real Estate Standards Organization (RESO) has designated the RESTful RESO Web API as its successor. RETS is an old, XML-based protocol that is painful to work with. It requires special libraries to parse, and the data is often inconsistent. It's a relic from an era before REST APIs became standard.

Pulling and normalizing this data is the technical equivalent of forcing a firehose of loosely structured records through the needle's eye of a well-defined database schema. It's a messy, constant battle to clean and map fields that have no enforced consistency. A developer accepts this as the cost of getting the raw material, while most agents simply accept the crippled, delayed data their CRM vendor feeds them.
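Much of that battle is field mapping and type coercion. Here is a minimal sketch, assuming the raw feed has already been parsed into Python dictionaries; the alias lists are hypothetical examples of the naming drift you might see, not an authoritative mapping for any particular MLS.

```python
# Hypothetical aliases: different feeds (or one feed over time) name the same field differently
FIELD_ALIASES = {
    "listing_id": ("ListingID", "ListingKey", "MLSNumber", "MLS#"),
    "list_price": ("ListPrice", "LP", "List_Price"),
    "beds": ("BedroomsTotal", "Beds", "BR"),
    "sqft": ("LivingArea", "SqFt", "SQFT"),
}

def _to_number(value):
    """Coerce strings like '$450,000' or '2,100' to float; return None if that fails."""
    if value is None:
        return None
    cleaned = str(value).replace("$", "").replace(",", "").strip()
    try:
        return float(cleaned)
    except ValueError:
        return None

def normalize(raw):
    """Map one raw listing record onto a fixed schema; unrecognized fields are dropped."""
    record = {}
    for field, aliases in FIELD_ALIASES.items():
        # Take the first alias that is present and non-empty
        value = next((raw[a] for a in aliases if raw.get(a) not in (None, "")), None)
        if field == "listing_id":
            record[field] = str(value).strip() if value is not None else None
        else:
            record[field] = _to_number(value)
    return record
```

Keeping the aliases in one table means a feed that renames a field costs you one line of configuration rather than a hunt through every script that reads listings.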

Adopting the Developer Mindset: A Practical Architecture

Thinking like a developer does not mean every agent needs to learn to code. It means they need to think in terms of systems, data flow, and automation logic. It means choosing tools based on their interoperability, not their marketing copy. The goal is to build a modular, owned system that works for you, not for a SaaS vendor.

Build a Central Data Warehouse

The first principle is to own your data. This means all data from all sources (leads, MLS listings, client communications, transaction documents) should be piped into a central database that you control. This could be a simple PostgreSQL or MySQL database running on a cheap cloud instance. It is your single source of truth.

Connect your lead sources (web forms, Zillow API) to this database. Use a script to pull from the MLS RETS feed and dump the raw data here. Export your contacts from your phone and your email. Once the data is in one place, you can build anything on top of it. You are no longer limited by your CRM’s reporting features. You can run complex SQL queries to find correlations no off-the-shelf software would ever look for.
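As an illustration of the kind of question a central database can answer, the sketch below defines two hypothetical tables and a query for closed volume by lead source. It uses Python's built-in `sqlite3` purely so the example is self-contained; the same SQL would run with minor changes on PostgreSQL or MySQL.

```python
import sqlite3

# Hypothetical minimal schema: one table of contacts from every source, one of closed deals
SCHEMA = """
CREATE TABLE IF NOT EXISTS contacts (
    contact_id INTEGER PRIMARY KEY,
    name TEXT,
    email TEXT UNIQUE,
    source TEXT  -- e.g. 'web_form', 'zillow', 'phone_export'
);
CREATE TABLE IF NOT EXISTS transactions (
    transaction_id INTEGER PRIMARY KEY,
    contact_id INTEGER REFERENCES contacts(contact_id),
    close_price INTEGER,
    closed_on TEXT
);
"""

# Closed deals and volume per lead source; LEFT JOIN keeps sources with zero deals
VOLUME_BY_SOURCE = """
SELECT c.source,
       COUNT(t.transaction_id) AS deals,
       COALESCE(SUM(t.close_price), 0) AS volume
FROM contacts AS c
LEFT JOIN transactions AS t ON t.contact_id = c.contact_id
GROUP BY c.source
ORDER BY volume DESC
"""

def volume_by_source(conn):
    """Return (source, deals, volume) rows, highest volume first."""
    return conn.execute(VOLUME_BY_SOURCE).fetchall()
```

Because every source lands in the same `contacts` table, answering "which lead source actually closes?" is one query instead of a spreadsheet exercise across three vendor dashboards.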

Automate Lead Enrichment and Routing

When a new lead hits your database, it should trigger a workflow. A script can take the lead’s name and address and perform automated enrichment.

- Public Records API: Pull property tax history and last sale date.

- Google Maps API: Get commute times to the lead’s stated place of work.

- Internal Data: Check if the contact or address already exists in your database from a past interaction.

This enriched data is then written back to your database. Another script can then perform routing logic. If the lead’s budget is over a certain threshold, send a high-priority alert. If the property of interest is in a specific neighborhood, assign a task to follow up with a neighborhood-specific market report. All of this happens automatically, within seconds of the lead arriving.

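```python
import requests

def enrich_lead(lead_data):
    # Hypothetical county-records API; params= URL-encodes the address for us
    tax_resp = requests.get("https://api.countyrecords.com/v1/property",
                            params={"address": lead_data["address"]},
                            headers={"X-API-KEY": "YOUR_API_KEY"}, timeout=10)
    tax_resp.raise_for_status()
    tax_info = tax_resp.json()

    # Google Distance Matrix API; duration.value is reported in seconds
    maps_resp = requests.get("https://maps.googleapis.com/maps/api/distancematrix/json",
                             params={"origins": lead_data["address"],
                                     "destinations": lead_data["work_address"],
                                     "key": "YOUR_GOOGLE_API_KEY"}, timeout=10)
    maps_resp.raise_for_status()
    element = maps_resp.json()["rows"][0]["elements"][0]

    enriched_data = {
        "last_sale_price": tax_info.get("last_sale_price"),
        # Elements without a route have a non-OK status and no duration field
        "commute_minutes": element["duration"]["value"] / 60 if element["status"] == "OK" else None,
    }

    # Update the lead record in the central database (helper assumed to be defined elsewhere)
    update_database_record(lead_data["id"], enriched_data)
    return True
```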

This is not complex code. It is a set of simple, logical steps that provide immense value and context before you even make the first phone call.
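The routing half of the workflow can be sketched the same way. The thresholds, neighborhood names, and the `neighborhood` and `existing_contact_id` fields are hypothetical; they assume the enrichment step has already written those values onto the lead record.

```python
from dataclasses import dataclass, field

# Hypothetical routing parameters; tune these to your own market
HIGH_PRIORITY_BUDGET = 1_000_000
REPORT_NEIGHBORHOODS = {"Riverside", "Old Town"}

@dataclass
class RoutingDecision:
    priority: str = "normal"
    tasks: list = field(default_factory=list)

def route_lead(lead: dict) -> RoutingDecision:
    """Apply simple routing rules to an already-enriched lead record."""
    decision = RoutingDecision()
    # Budget over the threshold: flag as high priority and alert immediately
    if (lead.get("budget") or 0) > HIGH_PRIORITY_BUDGET:
        decision.priority = "high"
        decision.tasks.append("send high-priority alert")
    # Property in a tracked neighborhood: queue the matching market report
    neighborhood = lead.get("neighborhood")
    if neighborhood in REPORT_NEIGHBORHOODS:
        decision.tasks.append(f"send {neighborhood} market report")
    # Internal-data match from enrichment: review the past interaction first
    if lead.get("existing_contact_id") is not None:
        decision.tasks.append("review past interaction history")
    return decision
```

Keeping routing as a pure function of the lead record, separate from the API calls, makes the rules easy to test and change without touching the enrichment code.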

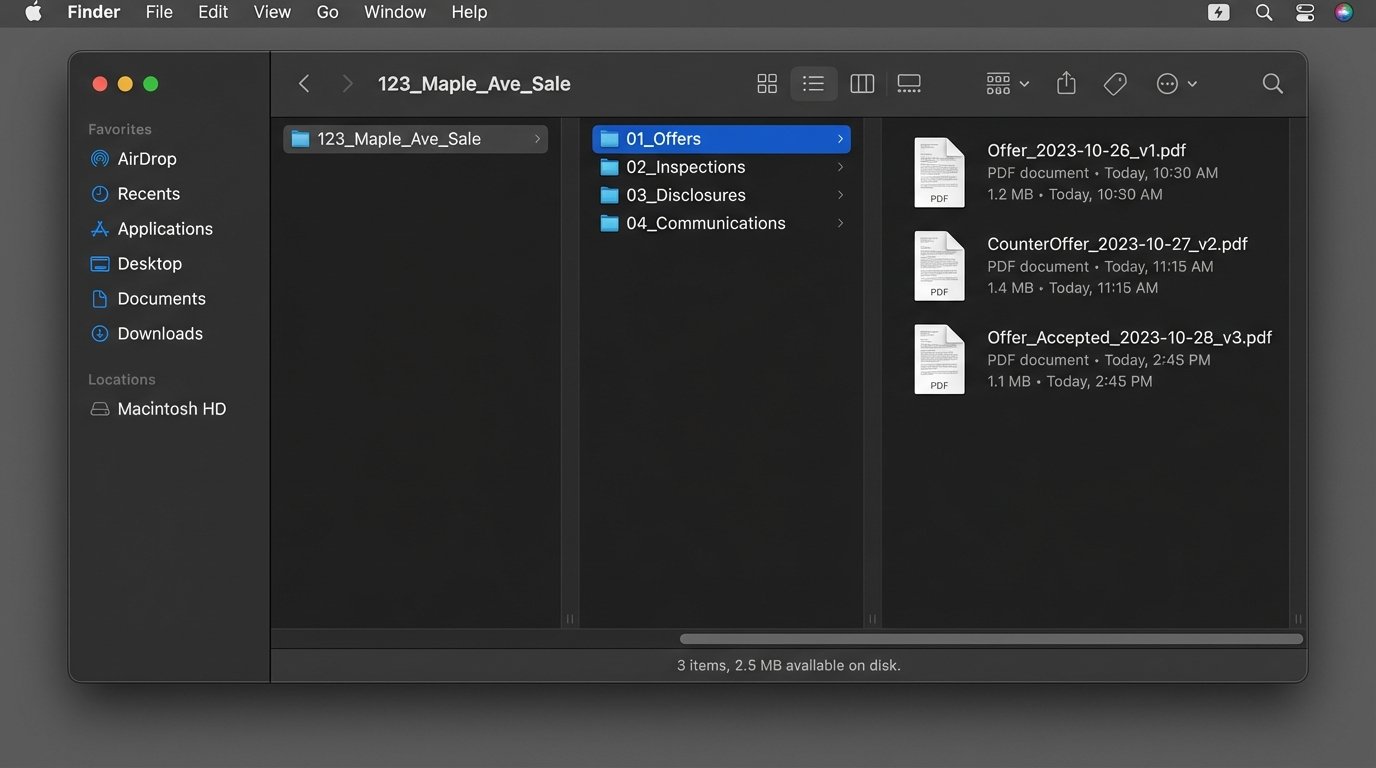

Version Control Your Transactions

Developers use version control systems like Git to track every change to a codebase. It provides a complete, auditable history. The same principle applies to a real estate transaction. Every document, every counter-offer, every client communication is a “commit” in the transaction’s history. Instead of digging through chaotic email threads, you have a structured timeline of events.

This does not mean using actual Git. It means structuring your data storage to reflect this logic. Create a master folder for each transaction, with subfolders for documents, communications, and inspections. Use a consistent file naming convention that includes dates and version numbers (`Offer_2023-10-28_v3.pdf`). This creates a self-documenting audit trail.
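The folder layout and naming convention above are easy to enforce with a small helper, so nobody has to remember which version number comes next. A minimal sketch, assuming names follow the `DocType_YYYY-MM-DD_vN.ext` pattern from the example; the function names are hypothetical.

```python
import re
from datetime import date
from pathlib import Path
from typing import Optional

# Matches the convention DocType_YYYY-MM-DD_vN.ext, e.g. Offer_2023-10-28_v3.pdf
NAME_RE = re.compile(r"^(?P<doc>[A-Za-z]+)_(?P<day>\d{4}-\d{2}-\d{2})_v(?P<ver>\d+)\.(?P<ext>\w+)$")
SUBFOLDERS = ("documents", "communications", "inspections")

def create_transaction_folder(root: Path, transaction_id: str) -> Path:
    """Create root/transaction_id with the standard subfolders; safe to call twice."""
    master = root / transaction_id
    for name in SUBFOLDERS:
        (master / name).mkdir(parents=True, exist_ok=True)
    return master

def next_version_name(folder: Path, doc_type: str, ext: str = "pdf",
                      on: Optional[date] = None) -> str:
    """Return the next file name for doc_type in folder, one version above the highest found."""
    versions = [
        int(m["ver"])
        for p in folder.iterdir()
        if (m := NAME_RE.match(p.name)) and m["doc"] == doc_type
    ]
    day = (on or date.today()).isoformat()
    return f"{doc_type}_{day}_v{max(versions, default=0) + 1}.{ext}"
```

If a folder already holds `Offer_2023-10-20_v1.pdf` and `Offer_2023-10-25_v2.pdf`, asking for the next offer on October 28 yields `Offer_2023-10-28_v3.pdf`, matching the convention in the text.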

The Inevitable Trade-Offs

This approach is not a silver bullet. It introduces its own set of complexities and requires a different kind of work. Developers understand that every architectural decision is a trade-off. There is no perfect system.

Upfront Time Investment vs. Long-Term Efficiency

Building these systems takes time. Setting up a database, writing the first scripts, and debugging API connections is a significant upfront investment. The payoff is long-term, compounding efficiency. A script you spend 10 hours writing might save you 30 minutes every day, indefinitely. At half an hour a day, you break even after 20 days. After a year, you have saved more than 180 hours of manual, soul-crushing work, or over 170 hours even after subtracting the 10 you spent building it.
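The break-even arithmetic generalizes to any automation you are weighing. This small helper assumes, as the example does, that the time is saved every calendar day.

```python
def break_even(build_hours: float, minutes_saved_per_day: float, days: int = 365):
    """Return (break-even day, gross hours saved, net hours saved) over `days`."""
    hours_per_day = minutes_saved_per_day / 60
    breakeven_day = build_hours / hours_per_day
    gross_hours = hours_per_day * days
    return breakeven_day, gross_hours, gross_hours - build_hours

print(break_even(10, 30))  # → (20.0, 182.5, 172.5)
```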

Most people are not willing to make that initial investment.

Control vs. Convenience

Using an off-the-shelf CRM is convenient. You sign up, and it works. But you sacrifice control. When you build your own system, you have complete control, but you also have complete responsibility. If your server goes down, it is your problem to fix. If an API you rely on changes, it is your job to update your code. This is the core trade-off: you are trading the convenience of a managed service for the power and flexibility of an owned platform.

The agent of the future is a systems integrator. They will not be replaced by technology. They will be the ones who architect and manage the technology that replaces their competitors. The job is shifting from manually executing tasks to designing the automated workflows that execute those tasks. Those who continue to operate as manual processors in an automated world are writing their own pink slips.