The manual process of A/B testing social ad campaigns is fundamentally broken. It’s a low-leverage activity that forces engineers and marketers into a cycle of exporting CSVs, eyeballing performance metrics in a spreadsheet, and then manually pausing underperforming ad sets. This approach does not scale. It introduces human bias and catastrophic delays between data collection and tactical action.

You cannot outpace a platform’s delivery algorithm by clicking buttons in an ads manager. The feedback loop is too slow, and the volume of variables (creative, copy, audience, placement) creates a combinatorial explosion that no team can manage manually. The result is wasted budget and inconclusive tests that get abandoned after a week.

The Core Failure: Data Latency and Human Bottlenecks

The central problem is latency. By the time a human analyst downloads a report, identifies a poor-performing ad variant, and pauses it, hundreds or thousands of dollars have been spent serving impressions to the wrong audience. The platform’s algorithm has already gathered significant data, but your reaction time is measured in hours or days, not seconds.

This manual workflow also lacks statistical rigor. Decisions are often made on gut feelings or incomplete data sets, leading to the premature termination of potentially successful ads. The process mistakes random variance for a definitive trend, a classic rookie error that automation is built to prevent.
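One way to keep random variance from masquerading as a trend is to run a significance test before declaring a loser. The sketch below uses a two-proportion z-test on CTR. That test is a standard technique picked here for illustration, not something the workflow above prescribes, and the function name and sample numbers are hypothetical.

```python
import math


def ctr_z_test(clicks_a, impressions_a, clicks_b, impressions_b):
    """Two-sided two-proportion z-test on CTR. Returns (z, p_value)."""
    p_a = clicks_a / impressions_a
    p_b = clicks_b / impressions_b
    # Pooled CTR under the null hypothesis that both ads perform the same.
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    if se == 0:
        return 0.0, 1.0  # No clicks (or all clicks) on both sides: nothing to compare.
    z = (p_a - p_b) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Same CTRs (1.2% vs 0.8%) at two different sample sizes.
_, p_small = ctr_z_test(12, 1_000, 8, 1_000)
_, p_large = ctr_z_test(120, 10_000, 80, 10_000)
print(f"p-value at 1k impressions each:  {p_small:.3f}")
print(f"p-value at 10k impressions each: {p_large:.3f}")
```

With identical CTRs, the smaller sample does not clear a conventional 0.05 threshold while the larger one does. That is exactly the gap an eyeballed spreadsheet tends to miss.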

Diagnosing the Architectural Flaw

The failure is not in the strategy, but in the execution architecture. Relying on a human to be the control system for a high-velocity, high-spend machine is a design flaw. You have a system generating a continuous, high-volume stream of performance data, and you are trying to analyze it with tools built for quarterly financial reports. It’s an impedance mismatch on a colossal scale.

A proper solution removes the human from the tactical decision loop. The human role shifts to setting the strategic boundaries, defining the rules of engagement, and providing the creative inputs. The machine handles the high-frequency execution, analysis, and optimization.

The Fix: Architecting a Closed-Loop Automation System

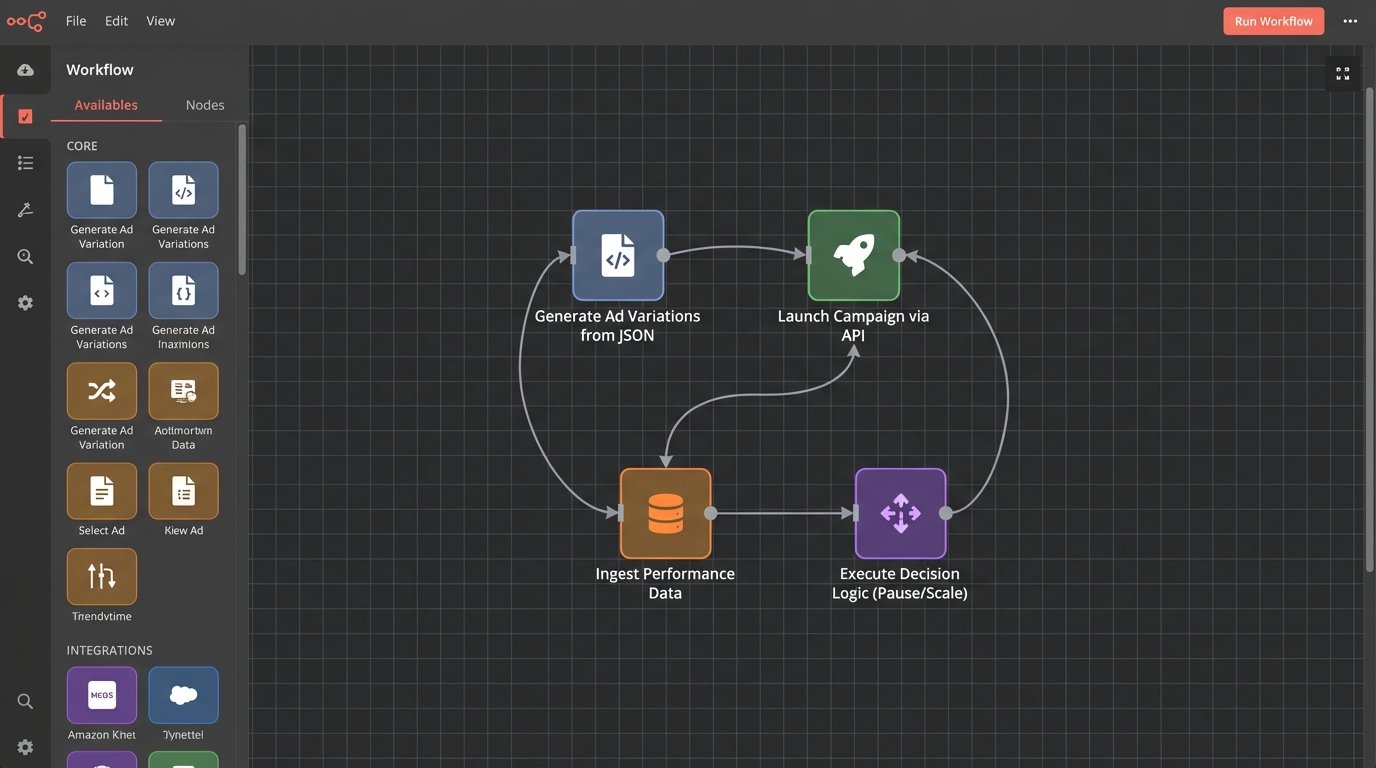

The fix is a closed-loop system that programmatically creates, launches, monitors, and optimizes ad campaigns. This system directly interfaces with the ad platform APIs, bypassing the UI entirely. It operates on a simple, relentless cycle: deploy, measure, analyze, and cull. No spreadsheets, no manual pauses.

This architecture is composed of four primary components: a variation generator, an API campaign launcher, a performance data ingestion engine, and a decision logic core. Each component must be built to expect failure, with robust logging and error handling. If one part fails silently, the rest of the system can keep burning budget without oversight.
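As a rough sketch of how the pieces connect, one pass of the measure, analyze, and cull steps can be written as a function that receives the components as plain callables. The names `run_cycle`, `fetch_stats`, `decide`, and `execute` are placeholders invented for this example, and the broad exception handler is a simplification.

```python
def run_cycle(fetch_stats, decide, execute, log=print):
    """Run one measure -> analyze -> cull pass and return the executed actions.

    fetch_stats: callable returning an iterable of per-ad stats (ingestion engine)
    decide:      callable mapping one stats record to an action or None (decision core)
    execute:     callable that applies one action through the API (launcher bridge)
    """
    executed = []
    for stats in fetch_stats():
        action = decide(stats)
        if action is None:
            continue
        try:
            execute(action)
            executed.append(action)
        except Exception as exc:
            # A failed action is logged and skipped so one bad call
            # does not stop the rest of the pass.
            log(f"action failed: {action!r}: {exc}")
    return executed
```

Passing the components in as arguments keeps each one replaceable and makes the loop easy to exercise with fakes before it touches a live account.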

Component 1: The Ad Matrix Generator

The first step is to stop thinking about ads as individual units. Instead, treat them as a matrix of components: headlines, body copy, images, videos, and calls-to-action. These components are stored in a database or even a structured file format like JSON or YAML. A script then generates every possible combination (the Cartesian product of the component lists) based on a predefined campaign structure.

This programmatic approach allows you to generate hundreds of ad variations in seconds. The output isn’t a spreadsheet for a human to upload. It’s a structured object ready to be posted directly to the ad platform’s campaign creation endpoint. This removes the copy-paste errors that creep into manual setup.
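A minimal sketch of the generator, assuming the components live in a dictionary of lists. The component values and the `generate_variations` name are made up for illustration; in practice the dictionary would be loaded from your JSON, YAML, or database.

```python
from itertools import product

# Hypothetical component pools for one campaign.
COMPONENTS = {
    "headline": ["Free shipping today", "Save 20% this week"],
    "body": ["Short copy.", "Longer copy with details."],
    "image": ["hero_a.png", "hero_b.png"],
    "cta": ["SHOP_NOW", "LEARN_MORE"],
}


def generate_variations(components):
    """Return one dict per combination of the component lists."""
    keys = list(components)
    pools = (components[key] for key in keys)
    return [dict(zip(keys, combo)) for combo in product(*pools)]


variations = generate_variations(COMPONENTS)
print(len(variations))  # 2 * 2 * 2 * 2 = 16 combinations
print(variations[0])
```

The count grows multiplicatively with every option you add, so it is worth checking `len(variations)` against your budget before anything gets launched.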

Component 2: The Campaign Launcher API Bridge

With a set of ad variations generated, the launcher component takes over. This is a service that speaks the language of the target platform’s API, be it Meta’s Marketing API, Google’s Ads API, or another platform’s equivalent. Its job is to translate your internal ad object into a series of authenticated POST requests that create the campaigns, ad sets, and ads.

This is where things get ugly. You will fight with opaque error messages, undocumented field requirements, and strict rate limits. You must code defensively, implement exponential backoff for failed requests, and log every single API call and response. Assume the documentation is out of date and that any call can fail for arbitrary reasons.
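Exponential backoff can be sketched as a small wrapper around any API call. `TransientAPIError` is a hypothetical exception standing in for whatever your client raises on rate limits or 5xx responses, and the delay values are assumptions to tune against the platform's documented limits.

```python
import random
import time


class TransientAPIError(Exception):
    """Hypothetical error type for retryable failures (rate limits, 5xx)."""


def call_with_backoff(func, max_attempts=5, base_delay=1.0, max_delay=60.0,
                      sleep=time.sleep):
    """Call func(), retrying transient failures with exponentially growing delays."""
    for attempt in range(1, max_attempts + 1):
        try:
            return func()
        except TransientAPIError:
            if attempt == max_attempts:
                raise  # Out of retries: surface the failure to the caller.
            # 1s, 2s, 4s, ... capped at max_delay.
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))
            # Up to 10% jitter so parallel workers do not retry in lockstep.
            sleep(delay + random.uniform(0, delay * 0.1))
```

The `sleep` parameter exists so the retry logic can be tested without actually waiting. In production the default `time.sleep` applies.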

The launcher’s success depends on meticulous management of API tokens and permissions. A single expired OAuth token can bring the entire launch process to a halt, which is always fun to discover at the start of a major sales event.

Component 3: The Performance Data Ingestion Engine

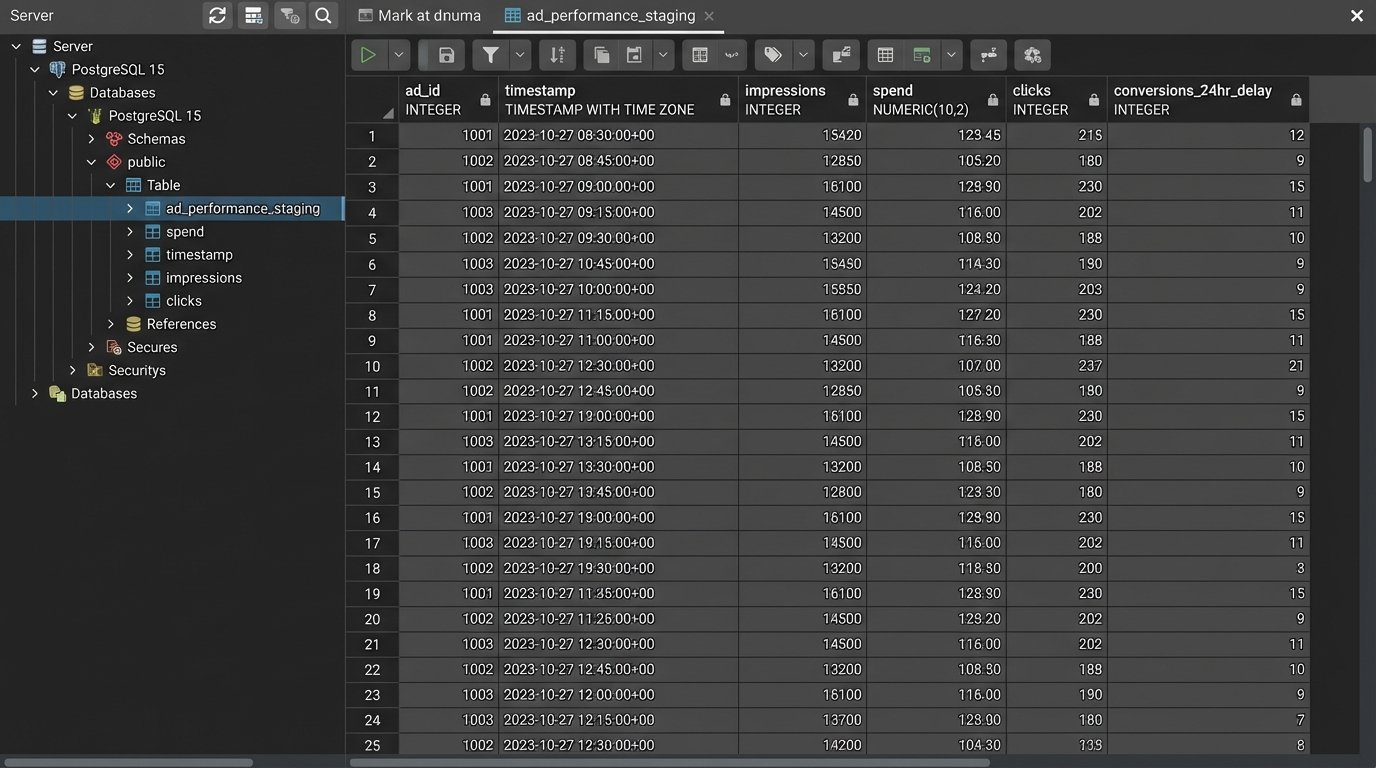

Once campaigns are live, the system needs to pull performance data back. This is not a one-time export. The ingestion engine must poll the API at a regular interval, requesting key metrics like impressions, clicks, spend, and conversions for each ad ID. The frequency of these pulls is a balancing act between needing fresh data and staying under the API’s rate limits.

Raw data from the API is often messy and needs to be cleaned, normalized, and stored in a staging database (e.g., PostgreSQL, BigQuery). Attempting to process this data purely in memory is a fool’s errand. You need to persist it to track performance over time and handle the inevitable delays in attribution reporting. Trying to analyze a live stream of API data is like trying to catalog individual droplets while a fire hydrant is pointed at your face.
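The normalize-then-persist step might look like the following sketch. It uses `sqlite3` from the standard library as a stand-in for PostgreSQL or BigQuery so the example runs anywhere, and the field names (`ad_id`, `date`, `impressions`, and so on) are assumptions rather than any platform's real schema.

```python
import sqlite3


def normalize_row(raw):
    """Coerce one raw stats row (strings, missing values) into typed fields."""
    return (
        str(raw["ad_id"]),
        raw["date"],
        int(raw.get("impressions") or 0),
        int(raw.get("clicks") or 0),
        float(raw.get("spend") or 0.0),
        int(raw.get("conversions") or 0),
    )


def store_snapshot(conn, raw_rows):
    """Upsert rows keyed by (ad_id, date) so later pulls overwrite earlier ones."""
    conn.execute(
        """CREATE TABLE IF NOT EXISTS ad_stats (
               ad_id TEXT, date TEXT, impressions INTEGER, clicks INTEGER,
               spend REAL, conversions INTEGER,
               PRIMARY KEY (ad_id, date))"""
    )
    conn.executemany(
        "INSERT OR REPLACE INTO ad_stats VALUES (?, ?, ?, ?, ?, ?)",
        [normalize_row(row) for row in raw_rows],
    )
    conn.commit()


# In-memory database only to keep the example self-contained;
# point this at a file or a real database server in practice.
conn = sqlite3.connect(":memory:")
first_pull = {"ad_id": 123, "date": "2024-05-01", "impressions": "5400",
              "clicks": "61", "spend": "42.10", "conversions": None}
store_snapshot(conn, [first_pull])
# A later pull for the same day replaces the row once conversions are attributed.
store_snapshot(conn, [{**first_pull, "conversions": "3"}])
print(conn.execute("SELECT * FROM ad_stats").fetchall())
```

Keying on `(ad_id, date)` is one design choice among several. If you need to see how a day's numbers changed between pulls, add a pull timestamp to the key and keep every snapshot instead.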

Webhooks can be an alternative to constant polling if the platform supports them, but they introduce their own set of problems. You need a publicly accessible endpoint, and you must handle retries and potential data loss if your endpoint goes down.
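Retries mean the same event can arrive more than once, so a webhook receiver should be idempotent. The sketch below deduplicates on an event ID. The `id` field and the `WebhookInbox` class are hypothetical, and a real deployment would keep the seen-ID set in durable storage rather than in process memory.

```python
class WebhookInbox:
    """Accept each webhook event at most once, keyed by its event ID."""

    def __init__(self):
        self._seen = set()
        self.queue = []  # Events waiting to be written to the staging database.

    def receive(self, event):
        """Return True if the event was queued, False if it was a duplicate."""
        event_id = event["id"]
        if event_id in self._seen:
            return False
        self._seen.add(event_id)
        self.queue.append(event)
        return True


inbox = WebhookInbox()
print(inbox.receive({"id": "evt_1", "ad_id": "123"}))  # First delivery
print(inbox.receive({"id": "evt_1", "ad_id": "123"}))  # Platform retry
```

Deduplication covers retries but not events lost while the endpoint was down. A periodic polling pass to backfill gaps is one way to cover that case.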

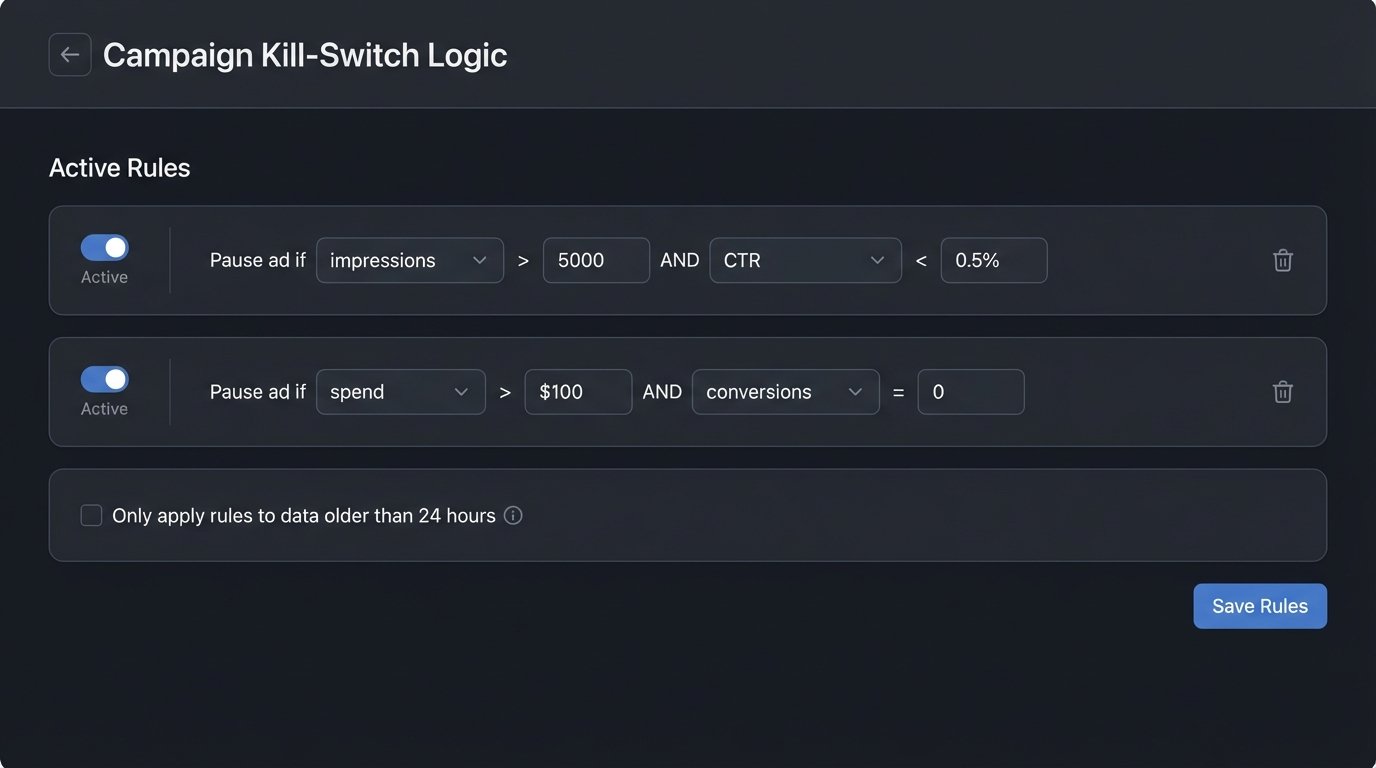

Component 4: The Decision Logic Core

This is the brain. The decision engine runs on a schedule, querying the normalized data from your staging database. It executes a set of predefined rules to determine the fate of each ad. The logic can start simple and grow in complexity.

A basic ruleset might look like this:

- Rule 1 (Probation): If an ad has fewer than 5,000 impressions, take no action. It needs more data.

- Rule 2 (Underperformer): If an ad has at least 5,000 impressions and a Click-Through Rate (CTR) below 0.5%, pause it.

- Rule 3 (Budget Burner): If an ad has spent more than $100 without a single conversion, pause it.

- Rule 4 (Winner): If an ad has a Cost Per Acquisition (CPA) below the campaign target and a Return on Ad Spend (ROAS) above break-even (greater than 1.0), consider increasing its budget (within safe limits).

These rules are executed by the engine, which then generates a list of actions (e.g., `PAUSE ad_id_123`, `UPDATE ad_set_456 set budget=50`). These actions are then fed back to the API bridge component to be executed. This completes the closed loop.
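The four rules translate into code fairly directly. This sketch makes two assumptions the text leaves open: rules are evaluated in the order listed with the first match winning, and a "winner" needs a ROAS above 1.0. The `AdStats` fields, the `SCALE` action name, and the sample numbers are illustrative only.

```python
from dataclasses import dataclass

MIN_IMPRESSIONS = 5_000      # Rule 1 threshold
MIN_CTR = 0.005              # Rule 2 threshold (0.5%)
MAX_SPEND_NO_CONV = 100.0    # Rule 3 threshold in dollars
MIN_ROAS = 1.0               # Assumed break-even for Rule 4


@dataclass
class AdStats:
    ad_id: str
    impressions: int
    clicks: int
    spend: float
    conversions: int
    revenue: float


def decide(ad, target_cpa):
    """Return 'PAUSE', 'SCALE', or None for one ad. First matching rule wins."""
    if ad.impressions < MIN_IMPRESSIONS:
        return None  # Rule 1: probation, not enough data yet.
    if ad.clicks / ad.impressions < MIN_CTR:
        return "PAUSE"  # Rule 2: underperformer.
    if ad.spend > MAX_SPEND_NO_CONV and ad.conversions == 0:
        return "PAUSE"  # Rule 3: budget burner.
    if ad.conversions > 0 and ad.spend > 0:
        cpa = ad.spend / ad.conversions
        roas = ad.revenue / ad.spend
        if cpa < target_cpa and roas > MIN_ROAS:
            return "SCALE"  # Rule 4: winner, candidate for a budget increase.
    return None


def plan_actions(ads, target_cpa):
    """Build the action list that gets handed back to the API bridge."""
    return [f"{action} {ad.ad_id}" for ad in ads if (action := decide(ad, target_cpa))]


ads = [
    AdStats("ad_1", 1_200, 30, 20.0, 1, 50.0),     # Still on probation
    AdStats("ad_2", 8_000, 20, 60.0, 0, 0.0),      # CTR 0.25%
    AdStats("ad_3", 9_000, 90, 150.0, 0, 0.0),     # $150 spent, no conversions
    AdStats("ad_4", 10_000, 200, 80.0, 8, 400.0),  # CPA $10, ROAS 5.0
]
print(plan_actions(ads, target_cpa=25.0))
```

Because Rule 1 runs first, an ad that burns $100 before reaching 5,000 impressions is left alone under this ordering. If that is not what you want, move the budget-burner check to the top.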

Practical Hurdles and Unspoken Truths

Building this system is not a simple project. The conceptual architecture is straightforward, but the implementation is littered with traps that can cost real money.

Your Budget Is the Ultimate Kill Switch

An automated system can spend money with terrifying efficiency. A bug in your decision logic or a misconfigured budget rule can exhaust a monthly budget in a few hours. You must build multiple layers of protection. Set hard-coded budget caps in the code itself, configure campaign-level lifetime budget limits via the API, and set up billing alerts on the ad platform.

Never trust a single source of control. Your code, the platform settings, and your billing alerts are the three legs of a stool. If one fails, the other two should prevent a total collapse.
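The code-level leg of that stool can be as small as a function every budget change must pass through. The cap values and the `clamp_budget` name below are assumptions for illustration; pick limits that match your own account.

```python
HARD_DAILY_CAP = 500.0      # Assumed account-wide daily ceiling, in dollars
MAX_INCREASE_FACTOR = 1.2   # Assumed limit: no single update raises a budget by more than 20%


def clamp_budget(current, proposed, committed_elsewhere):
    """Return a budget that respects both the step limit and the account-wide cap.

    current:             the ad set's existing daily budget
    proposed:            what the decision engine asked for
    committed_elsewhere: sum of daily budgets on all other active ad sets
    """
    if proposed < 0:
        raise ValueError("budget cannot be negative")
    step_limited = min(proposed, current * MAX_INCREASE_FACTOR)
    headroom = max(0.0, HARD_DAILY_CAP - committed_elsewhere)
    return round(min(step_limited, headroom), 2)


print(clamp_budget(50.0, 500.0, 100.0))  # A runaway request is limited to a 20% step
print(clamp_budget(50.0, 55.0, 470.0))   # Little headroom is left under the cap
```

The function never raises a budget past either limit, whatever the decision logic asks for. A buggy rule can then only do bounded damage before the platform limits and billing alerts come into play.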

API Authentication Is a Recurring Nightmare

OAuth 2.0 is the standard, and it is a persistent source of pain. User-level access tokens expire. You need to build a robust process for handling token refreshes and securely storing the new tokens. This process has to be automated, because tokens always expire at 3 AM on a Saturday.

A simple Python script using a library like `requests-oauthlib` can handle the refresh flow, but it needs a secure place to store client secrets and refresh tokens, like a dedicated secrets manager (e.g., AWS Secrets Manager, HashiCorp Vault). Storing them in a config file is asking for trouble. The example below uses plain `requests` to show the underlying refresh call and reads credentials from environment variables for brevity.

```python
import os

import requests

# WARNING: For demonstration only. Do not hardcode credentials.
# Use a secrets manager in a real application.
CLIENT_ID = os.environ.get("AD_PLATFORM_CLIENT_ID")
CLIENT_SECRET = os.environ.get("AD_PLATFORM_CLIENT_SECRET")
REFRESH_TOKEN = os.environ.get("AD_PLATFORM_REFRESH_TOKEN")
TOKEN_URL = "https://api.adplatform.com/oauth/token"


def get_new_access_token():
    """Refreshes an OAuth 2.0 access token. Returns None on failure."""
    payload = {
        "grant_type": "refresh_token",
        "client_id": CLIENT_ID,
        "client_secret": CLIENT_SECRET,
        "refresh_token": REFRESH_TOKEN,
    }
    try:
        # A timeout keeps a hung token endpoint from stalling the scheduler.
        response = requests.post(TOKEN_URL, data=payload, timeout=10)
        response.raise_for_status()  # Raises an HTTPError for bad responses (4xx or 5xx)
        new_access_token = response.json().get("access_token")
        # Here you would persist the new access token securely
        print("Successfully refreshed access token.")
        return new_access_token
    except requests.exceptions.RequestException as e:
        # Log the error immediately. This is a critical failure.
        print(f"Error refreshing token: {e}")
        # Trigger an alert for manual intervention.
        return None


if __name__ == "__main__":
    # This function would be called by a scheduler before making API calls.
    new_token = get_new_access_token()
```

Data Integrity and Platform Lag

Ad platforms do not report conversions in real time. There is an attribution window, and data can continue to be updated for hours or even days after the click. Your decision engine must account for this lag. Pausing an ad based on its first-hour performance is premature. It might have generated conversions that simply have not been reported yet.

A common solution is to build a “data maturity” check into your decision logic. The system should only make decisions on data that is at least 24 to 48 hours old to allow for attribution lag. This slows down the optimization cycle, but it prevents the system from making terrible decisions based on incomplete information.
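A data maturity check can be a simple filter in front of the decision rules. This sketch assumes each stored row carries a timezone-aware `period_end` timestamp marking the end of the reporting period it covers. That field name and the 48-hour window are assumptions.

```python
from datetime import datetime, timedelta, timezone

MATURITY_WINDOW = timedelta(hours=48)  # Assumed attribution lag allowance


def mature_rows(rows, now=None):
    """Keep only rows whose reporting period ended at least MATURITY_WINDOW ago."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - MATURITY_WINDOW
    return [row for row in rows if row["period_end"] <= cutoff]


now = datetime(2024, 5, 10, 12, 0, tzinfo=timezone.utc)
rows = [
    {"ad_id": "a", "period_end": now - timedelta(hours=72)},
    {"ad_id": "b", "period_end": now - timedelta(hours=6)},
]
print([row["ad_id"] for row in mature_rows(rows, now=now)])
```

Accepting `now` as a parameter keeps the filter deterministic in tests and makes it easy to replay past decisions against historical data.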

Beyond A/B Testing: Autonomous Campaign Management

This architecture is more than just an A/B testing tool. It is the foundation for autonomous campaign management. Once the core loop is stable, you can add more sophisticated logic. Instead of just pausing ads, the system can reallocate budget from underperforming ad sets to winning ones. It can even trigger workflows to alert a creative team that a specific image or headline is fatiguing across multiple campaigns.
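Budget reallocation can start as simple arithmetic. The sketch below moves a fixed fraction of each losing ad set's budget into an equal split across the winners. The function name, the 20% shift, and the equal split are all assumptions, and a real system would route the result through its budget caps before calling the API.

```python
def reallocate(budgets, winners, losers, shift_fraction=0.2):
    """Move shift_fraction of each loser's budget into an equal split across winners.

    budgets: dict mapping ad set ID to its current daily budget.
    Returns a new dict; total budget is unchanged.
    """
    if not winners or not losers:
        return dict(budgets)  # Nothing to move from, or nowhere to move it.
    updated = dict(budgets)
    pool = 0.0
    for ad_set in losers:
        cut = budgets[ad_set] * shift_fraction
        updated[ad_set] -= cut
        pool += cut
    share = pool / len(winners)
    for ad_set in winners:
        updated[ad_set] += share
    return updated


budgets = {"set_a": 100.0, "set_b": 50.0, "set_c": 50.0}
print(reallocate(budgets, winners=["set_a"], losers=["set_b", "set_c"]))
```

Keeping total spend constant means this step can only shift money around, not increase it, which makes it a relatively safe first extension of the core loop.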

The goal is to elevate human operators from tactical button-pushers to strategic supervisors of an automated system. You define the rules, you provide the creative, and you let the machine handle the relentless, high-frequency churn of ad optimization.