Your brokerage spends six figures on a new CRM. The marketing deck promised a single pane of glass for everything from lead generation to closing. Six months post-launch, adoption rates are abysmal. Agents are still managing their leads in personal spreadsheets and Google Contacts. Management’s diagnosis is always the same: the agents need more training. This is a fundamental misreading of the problem.

The issue is not a lack of training. It’s friction. The new platform, for all its advertised features, forces agents to manually bridge data gaps that shouldn’t exist. They have to copy-paste lead information from Zillow emails, manually look up MLS data for a new contact, and then re-type client details into a transaction management system. Each manual step is a point of failure and a reason to abandon the tool.

Diagnosing the Core Failure: Disconnected Workflows

The root cause of low adoption is a disconnect between the tool’s architecture and the agent’s actual workflow. Agents operate on speed and opportunity. A system that requires ten minutes of data entry for every new lead is a system that will be bypassed. The promise of a centralized database means nothing when the cost of populating it is too high for the end-user.

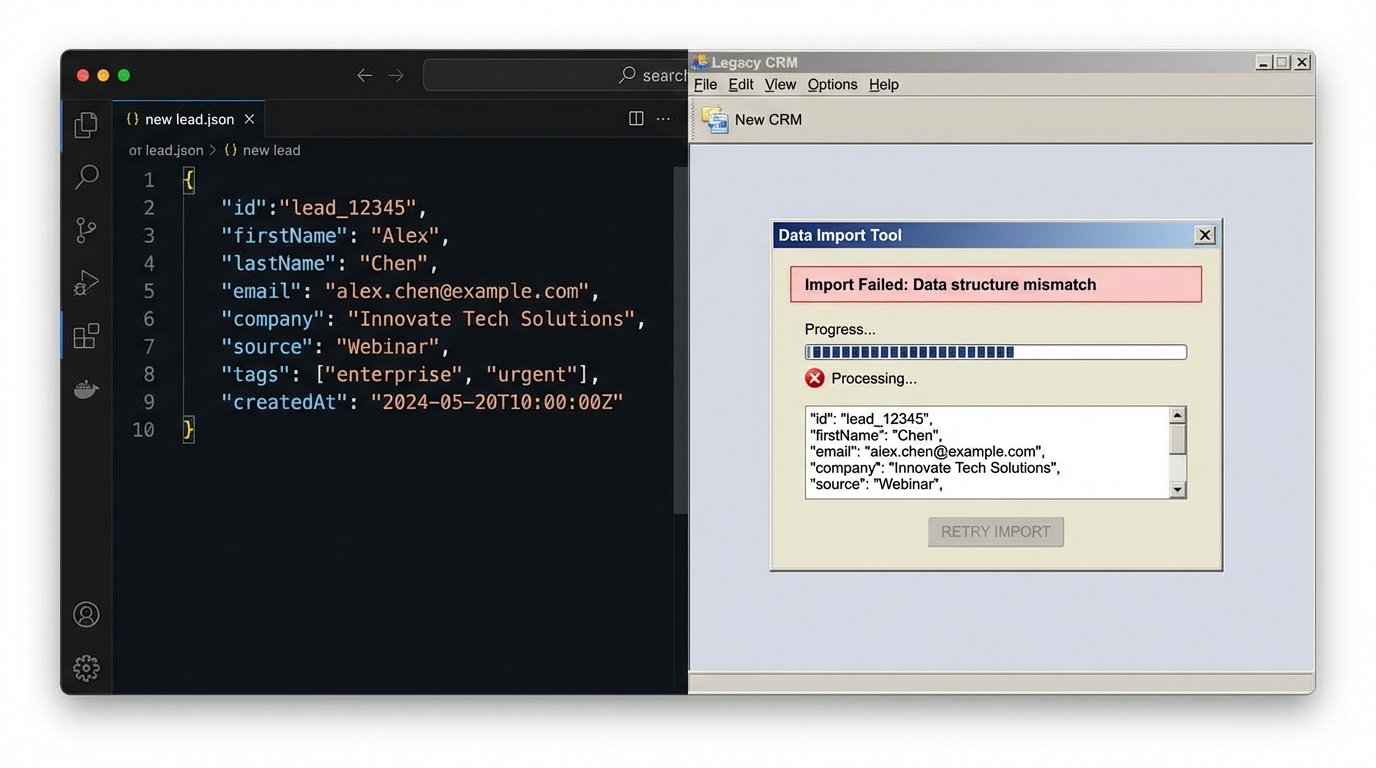

We see this pattern constantly. A platform is chosen for its reporting capabilities, which appeal to management, but it offers a hostile user experience to the agents who must feed it data. Forcing a modern JSON payload from a lead source through a legacy system’s clunky import module is like pushing a firehose through a needle: the pressure is all wrong, and data integrity is the first casualty.

The Myth of the “All-in-One” Solution

Many brokerages fall for the allure of the monolithic “all-in-one” real estate platform. These systems are marketed as the single source of truth, capable of handling CRM, transaction management, marketing automation, and more. In reality, they are often a collection of poorly integrated modules acquired over years of mergers. They do many things, but none of them particularly well.

The APIs are often an afterthought, poorly documented and rate-limited into uselessness. Exporting data is intentionally difficult, creating a vendor lock-in that stifles any attempt to build efficient, external automations. The result is a wallet-drainer of a platform that actively resists integration and forces the exact manual work it was supposed to eliminate.

The Fix: An Automation Layer That Respects the Workflow

The solution isn’t another round of training or threatening memos from leadership. The fix is to build a lightweight, intelligent automation layer that works behind the scenes. This layer’s only job is to bridge the data gaps and eliminate manual entry. It respects the agent’s need for speed by making the “correct” workflow the path of least resistance. Instead of forcing the user to adapt to the software, we force the software to adapt to the user.

This means abandoning the search for a single perfect tool. Instead, we select best-in-class tools for each job, with one non-negotiable requirement: a well-documented, usable API. We pick the best lead-gen platform, the best transaction management system, and the best CRM, and then we write the connective tissue that makes them operate as a single, cohesive system.

Architecture Component 1: Inbound Lead Processing

The first point of friction to eliminate is lead entry. Agents receive leads from dozens of sources: Zillow, Realtor.com, their personal website, social media. Manually transcribing this data is slow and error-prone. A proper automation architecture bypasses this entirely.

We configure a service that ingests leads from every source the brokerage uses. Most lead sources can at least send a structured notification email that can be parsed automatically, and many offer direct webhook integration, which is better. A webhook delivers a JSON payload in real time, the moment a lead comes in. Our automation endpoint catches this payload, cleans and standardizes the data, and then injects it directly into the CRM via its API. The agent doesn’t lift a finger. They just get a notification that a new, fully populated lead record is ready for them.
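The standardization step is where most of the data-quality work happens. Below is a minimal sketch of that normalization, assuming the webhook uses the same hypothetical field names as the handler in this section (`first_name`, `last_name`, `email_address`, `phone_number`, `property_address`) and that phone numbers are North American. Real lead sources name their fields differently, so treat the mapping as an assumption.

```python
import re


def normalize_lead(raw: dict) -> dict:
    """Trim, lowercase, and standardize lead fields (a sketch, not a full validator)."""

    def clean(value) -> str:
        # Treat None as empty and collapse internal runs of whitespace
        return " ".join(str(value or "").split())

    email = clean(raw.get("email_address")).lower()
    digits = re.sub(r"\D", "", clean(raw.get("phone_number")))
    # Assumption: North American numbers; drop a leading country code of 1
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]
    phone = f"+1{digits}" if len(digits) == 10 else ""

    return {
        "first_name": clean(raw.get("first_name")).title(),
        "last_name": clean(raw.get("last_name")).title(),
        "email": email if "@" in email else "",  # crude sanity check only
        "phone": phone,
        "property_address": clean(raw.get("property_address")),
    }
```

For example, a payload with `"  jane "`, `"DOE"`, `" Jane.Doe@Example.com "` and `"(555) 123-4567"` comes out as `Jane`, `Doe`, `jane.doe@example.com` and `+15551234567`. Note that `str.title()` is a blunt instrument (it turns "McDonald" into "Mcdonald"), so some teams only trim names and leave capitalization alone.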

A simple serverless function, such as an AWS Lambda function invoked through Amazon API Gateway, is well suited to this. It costs fractions of a penny per execution and scales automatically. Here is a conceptual skeleton in Python that processes a webhook payload and pushes it to a hypothetical CRM. It assumes the third-party `requests` library is bundled with the function.

```python
import json
import os

import requests

# Hypothetical CRM endpoint; the API key comes from an environment variable, not the source code
CRM_API_URL = "https://api.somecrm.com/v1/contacts"
API_KEY = os.environ.get("CRM_API_KEY", "")


def lambda_handler(event, context):
    # Extract lead data from the incoming webhook payload (API Gateway proxy events carry it in "body")
    try:
        lead_data = json.loads(event.get("body") or "{}")
    except json.JSONDecodeError:
        lead_data = None
    if not isinstance(lead_data, dict):
        return {"statusCode": 400, "body": json.dumps("Malformed JSON payload.")}

    # Strip and transform data to match the CRM schema
    crm_payload = {
        "firstName": (lead_data.get("first_name") or "").strip(),
        "lastName": (lead_data.get("last_name") or "").strip(),
        "email": (lead_data.get("email_address") or "").strip().lower(),
        "phone": (lead_data.get("phone_number") or "").strip(),
        "source": "Zillow Webhook",
        "notes": f"Inquiry on property: {lead_data.get('property_address', 'N/A')}",
    }

    # Validate required fields before injection (a duplicate check would also belong here)
    if not crm_payload["email"]:
        return {"statusCode": 400, "body": json.dumps("Missing email, cannot create contact.")}

    headers = {
        "Authorization": f"Bearer {API_KEY}",
        "Content-Type": "application/json",
    }

    # Inject the clean data into the CRM; the timeout keeps a slow CRM from hanging the function
    try:
        response = requests.post(CRM_API_URL, headers=headers, json=crm_payload, timeout=10)
    except requests.RequestException as exc:
        return {"statusCode": 502, "body": json.dumps(f"CRM request failed: {exc}")}

    if response.status_code == 201:
        # Optionally, trigger a notification to the assigned agent
        # send_slack_notification(f"New lead created: {crm_payload['firstName']}")
        pass

    return {"statusCode": response.status_code, "body": response.text}
```

This script removes the agent from the data entry process entirely. The lead appears in their system instantly and accurately. That’s how you drive adoption.
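That accuracy depends on not creating a second record for someone who is already in the CRM. A minimal duplicate check might match on email or phone before the create call. In this sketch, `existing_contacts` stands in for the results of a CRM search request, and its field names are hypothetical; a real implementation would query the CRM's own search endpoint.

```python
from typing import Optional


def _email_key(value: Optional[str]) -> str:
    return (value or "").strip().lower()


def _phone_key(value: Optional[str]) -> str:
    digits = "".join(ch for ch in (value or "") if ch.isdigit())
    # Compare the last 10 digits so "+1 555 123 4567" matches "(555) 123-4567" (North American assumption)
    return digits[-10:] if len(digits) >= 10 else ""


def find_duplicate(new_lead: dict, existing_contacts: list[dict]) -> Optional[dict]:
    """Return the first existing contact matching on email or phone, else None."""
    email, phone = _email_key(new_lead.get("email")), _phone_key(new_lead.get("phone"))
    for contact in existing_contacts:
        if email and email == _email_key(contact.get("email")):
            return contact
        if phone and phone == _phone_key(contact.get("phone")):
            return contact
    return None
```

On a match, the automation would typically update the existing contact or append the new inquiry as a note instead of creating a new record.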

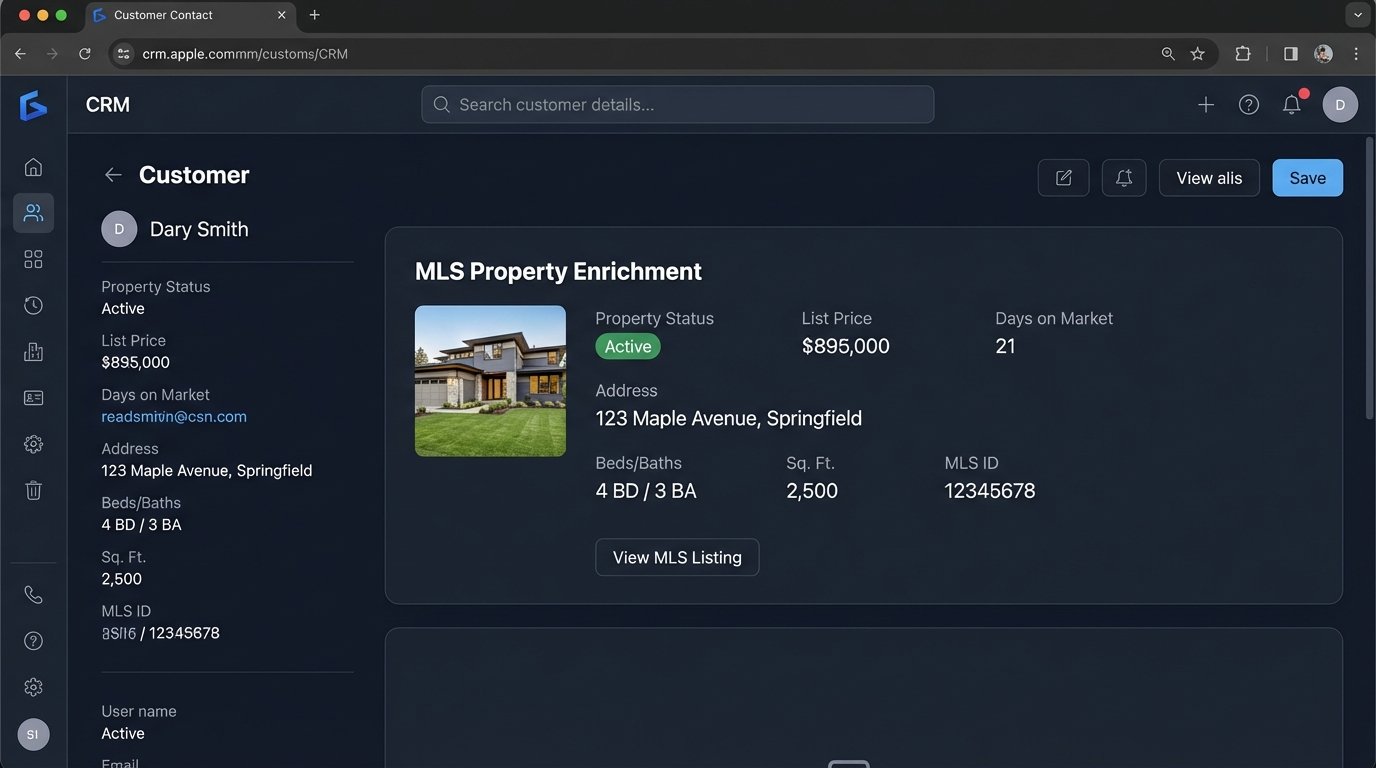

Architecture Component 2: Data Enrichment from MLS

A lead is just a name and an email. Real value comes from context. Once a contact is in the CRM, our automation layer should enrich it. If the lead inquired about a specific property, the system should automatically pull key data about that property from the MLS and attach it to the contact record.
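Many MLS data providers expose listings through the RESO Web API, an OData-based standard. The sketch below builds a lookup query for the property a lead asked about. The base URL is a placeholder, and matching on `UnparsedAddress` plus `PostalCode` is an assumption: address formats vary between MLSs, so production code would usually match on a listing ID when the lead source provides one, and authentication is omitted entirely.

```python
from urllib.parse import quote, urlencode


def odata_literal(value: str) -> str:
    """Quote a string for an OData $filter, doubling embedded single quotes."""
    return "'" + value.replace("'", "''") + "'"


def build_property_query(base_url: str, street_address: str, postal_code: str) -> str:
    """Build a RESO Web API (OData) URL that looks up one listing by address."""
    odata_filter = (
        f"UnparsedAddress eq {odata_literal(street_address)} "
        f"and PostalCode eq {odata_literal(postal_code)}"
    )
    params = {
        "$filter": odata_filter,
        # Only request the fields the enrichment note needs
        "$select": "ListingId,StandardStatus,ListPrice,OriginalListPrice,DaysOnMarket",
        "$top": "1",
    }
    # quote (not quote_plus) encodes spaces as %20 rather than '+', the safer choice for OData
    return f"{base_url.rstrip('/')}/Property?{urlencode(params, safe='$', quote_via=quote)}"
```

Escaping quotes by doubling them matters for addresses such as "123 O'Brien St", which would otherwise break the filter expression.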

Using an MLS API (via a provider like CoreLogic’s Trestle or Zillow’s Bridge API), we can fetch property status, price history, days on market, and photos. This information is appended to the contact’s record as a note or in custom fields. When the agent opens the record, they have immediate context without switching tabs to run a separate search. We’ve saved them a few minutes and given them critical information for their first call.
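Once the listing record comes back, the enrichment step only has to render it into something an agent can scan. This sketch assumes the response uses RESO Data Dictionary field names (`ListingId`, `StandardStatus`, `ListPrice`, `OriginalListPrice`, `DaysOnMarket`) and uses the gap between original and current list price as a rough stand-in for full price history, which may require a separate query depending on the provider.

```python
def format_property_note(listing: dict) -> str:
    """Render a RESO-style listing record as a plain-text CRM note."""

    def money(value) -> str:
        return f"${value:,.0f}" if isinstance(value, (int, float)) else "n/a"

    lines = [
        f"MLS #: {listing.get('ListingId', 'n/a')}",
        f"Status: {listing.get('StandardStatus', 'n/a')}",
        f"List price: {money(listing.get('ListPrice'))}",
    ]
    original, current = listing.get("OriginalListPrice"), listing.get("ListPrice")
    # Original vs. current list price is only a rough proxy for full price history
    if isinstance(original, (int, float)) and isinstance(current, (int, float)) and original != current:
        change = current - original
        sign = "+" if change > 0 else "-"
        lines.append(f"Price change since listing: {sign}{money(abs(change))}")
    if listing.get("DaysOnMarket") is not None:
        lines.append(f"Days on market: {listing['DaysOnMarket']}")
    return "\n".join(lines)
```

The resulting string can be posted as a note, or individual fields can be written to custom fields if the CRM supports them.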

Scaling the Solution: Custom Scripts vs. iPaaS Platforms

There are two primary paths to building this automation layer: using an Integration Platform as a Service (iPaaS) like Zapier or Make, or writing custom code hosted on a cloud provider. iPaaS tools are excellent for simple, linear workflows. You can connect a webhook to a CRM create action in minutes without writing a line of code.

Their limitations appear when you need complex conditional logic, error handling, and multi-step data transformations. They become a tangled mess of graphical flowcharts that are difficult to debug. Worse, their pricing models are often based on task execution, which can become prohibitively expensive as your lead volume scales. They are scaffolding, not a foundation.

The Case for Controlled Code

Custom scripts, like the Python example above, offer total control. You can build sophisticated logic to handle duplicate contacts, route leads based on price point or location, and implement robust retry mechanisms if an API call fails. You are not at the mercy of the platform’s pre-built connectors.
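A retry mechanism is a good example of logic that is awkward in a flowchart but short in code. Below is a minimal sketch of retry with exponential backoff and jitter. `TransientError` is a hypothetical exception that the wrapped call would raise for failures worth retrying, such as HTTP 429 or 5xx responses; the `sleep` parameter is injectable so the logic can be tested without actually waiting.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


class TransientError(Exception):
    """Hypothetical marker for failures worth retrying (e.g. HTTP 429 or 5xx)."""


def call_with_retry(
    fn: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call fn, retrying TransientError with exponential backoff and full jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except TransientError:
            if attempt == max_attempts:
                raise  # Out of attempts: surface the last error to the caller
            # Wait a random time between 0 and base_delay * 2^(attempt - 1) seconds
            sleep(random.uniform(0, base_delay * 2 ** (attempt - 1)))
    raise AssertionError("unreachable")
```

In the Lambda handler, the CRM `requests.post` call would be wrapped in a small function that raises `TransientError` on a 429 or 5xx status and then passed to `call_with_retry`. Keep the total retry time well inside the function's configured timeout.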

Hosting these on serverless platforms like AWS Lambda, Google Cloud Functions, or Azure Functions is operationally cheap. You pay only for the compute time you use, and the first million requests per month are often free. This approach requires development resources upfront but delivers a far more resilient and cost-effective system in the long run. It is the professional’s choice for building business-critical infrastructure.

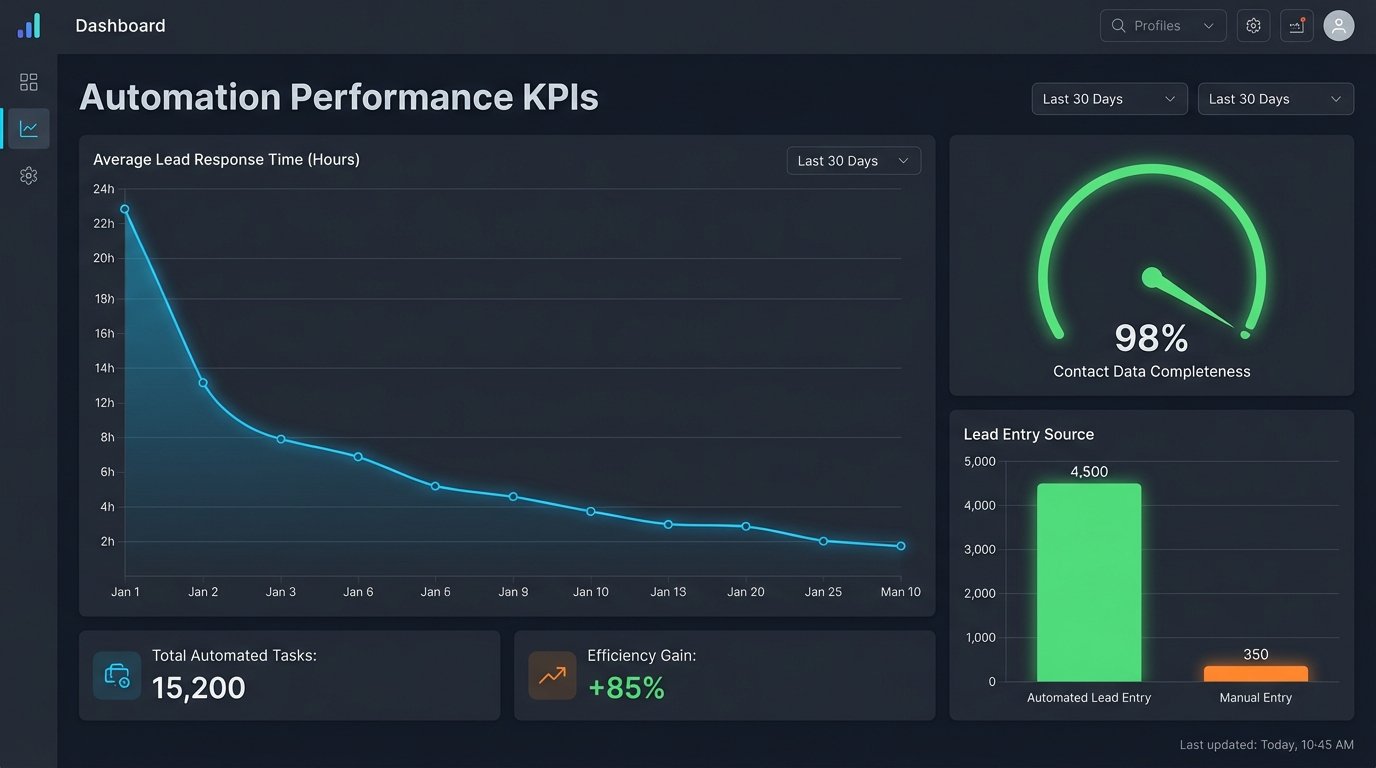

Measuring Success Beyond Login Counts

The goal of this automation is not just to get people to log into the CRM. It’s to make them more effective. We measure the success of this architecture with hard metrics that prove the friction has been removed.

- Lead Response Time: We track the time from lead creation (the webhook timestamp) to the first logged activity by an agent. This number should drop significantly when leads appear instantly and are properly enriched.

- Data Completeness: We can query the CRM database to measure the percentage of contacts that have key fields populated. Automation should drive this number toward 100% for source, property of interest, etc.

- Reduction in Manual Data Entry: A successful implementation will show a dramatic decrease in the number of new contacts created manually by users. The system should be doing the work for them.
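Each of these metrics is a short computation once the data is exported. The sketch below assumes hypothetical field names (`created_at`, `first_activity_at`, `created_by`, with `"automation"` marking records created by the integration); map them to whatever your CRM's reporting API or export actually provides.

```python
from datetime import datetime
from statistics import median
from typing import Optional


def median_response_minutes(leads: list[dict[str, Optional[datetime]]]) -> Optional[float]:
    """Median minutes from lead creation to first agent activity; leads not yet worked are skipped."""
    gaps = [
        (lead["first_activity_at"] - lead["created_at"]).total_seconds() / 60
        for lead in leads
        if lead.get("first_activity_at") is not None
    ]
    return median(gaps) if gaps else None


def completeness_pct(contacts: list[dict], fields: tuple[str, ...]) -> float:
    """Percentage of contacts in which every listed field is non-empty."""
    if not contacts:
        return 0.0
    complete = sum(all(c.get(f) for f in fields) for c in contacts)
    return 100.0 * complete / len(contacts)


def manual_entry_pct(contacts: list[dict]) -> float:
    """Percentage of contacts not created by the automation (assumes a 'created_by' field)."""
    if not contacts:
        return 0.0
    manual = sum(1 for c in contacts if c.get("created_by") != "automation")
    return 100.0 * manual / len(contacts)
```

The median is used for response time because a handful of leads worked days later would drag a mean upward and hide the typical experience.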

These KPIs provide concrete evidence that the investment in automation engineering delivered a real return, not just another piece of shelfware.

A Shift in Procurement Strategy

This approach requires a fundamental shift in how real estate companies buy technology. Stop looking for the one platform that does it all. That product does not exist. Instead, prioritize tools that do one thing exceptionally well and, most importantly, have an open, well-supported API.

The real value of your tech stack is not in the user interface of any single application. It’s in the invisible, automated workflows that connect them. Invest in the engineering talent to build these bridges. An in-house developer or a competent consultant who can write scripts to connect APIs is more valuable than any enterprise software license. They build a system that works for your agents, not against them, and that is the only way to solve the user adoption problem for good.