The Initial State: A Cascade of Technical Debt

We walked into a local brokerage, Apex Realty, that was running on inertia. Their core tech stack was a patchwork of off-the-shelf software from a decade ago, stitched together with manual data entry and a shared Gmail inbox. Their revenue was flatlining not because their agents were bad, but because their infrastructure was actively working against them. The initial audit revealed three critical failure points that were bleeding money and morale.

Problem 1: Data Integrity Was a Myth

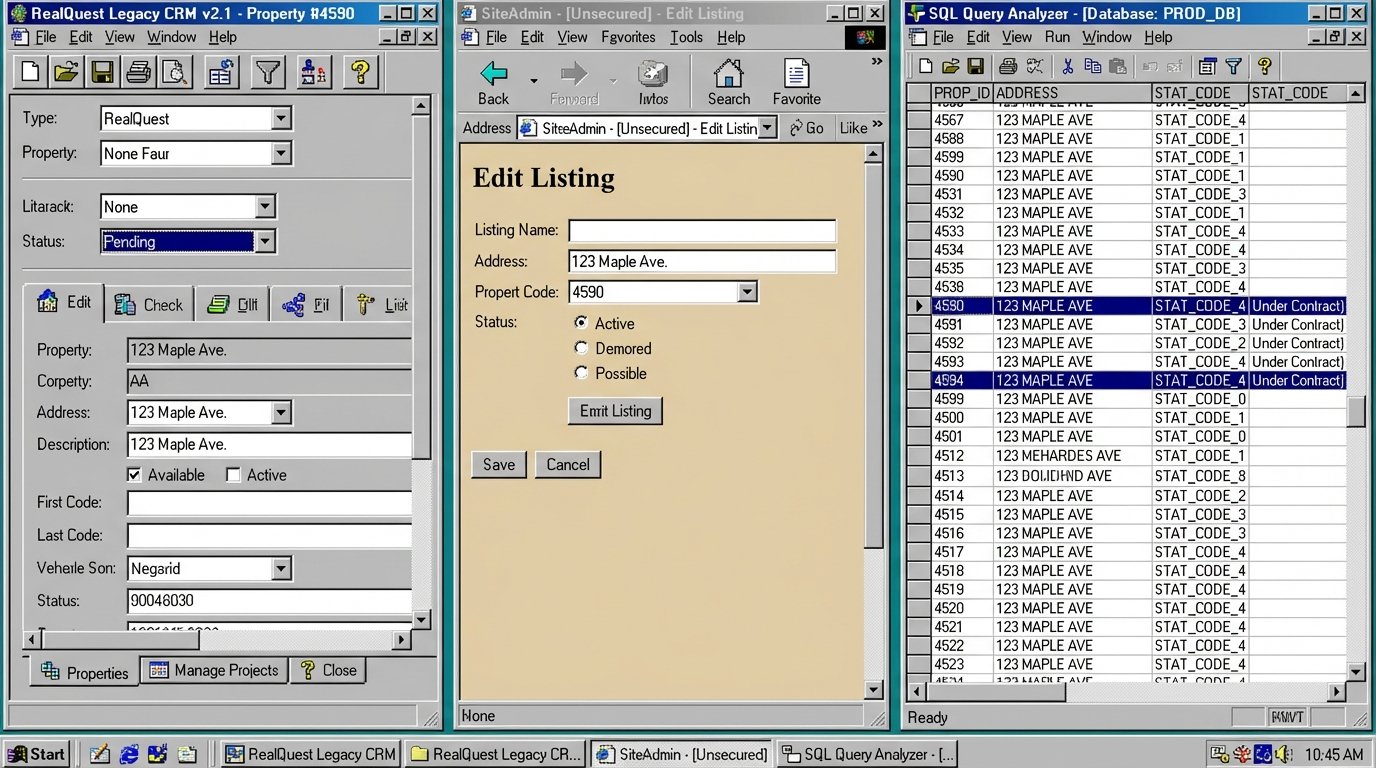

Apex’s biggest client-facing issue was stale listing data. Their connection to the local MLS feed was a nightly batch job that exported a CSV file to an FTP server. A junior admin would then manually import this into their WordPress-based website. The entire process took between 24 and 72 hours, depending on weekends and human error. Listings that were sold or pending were appearing as active for days. This didn’t just look unprofessional; it generated angry calls from buyers and crippled agent credibility before they even spoke to a lead.

The root cause was a brittle, one-way data flow with no validation logic. There was no mechanism to check for status changes, price adjustments, or withdrawn listings outside of the overnight file dump. Their internal CRM, a desktop application from 2008, had its own separate property database that was perpetually out of sync with both the MLS and the public website. Agents were working with three different versions of the truth. It was a complete mess.

Problem 2: The Black Hole of Lead Management

Lead acquisition is the lifeblood of any brokerage. Apex was spending five figures a month on portals like Zillow and Realtor.com, yet their lead conversion rate was below 1%. The reason was simple. All incoming leads, regardless of source, were forwarded to a single email address: leads@apexrealty.example.com. There was no routing, no assignment, and no tracking. Agents were expected to monitor the inbox and “claim” leads on a first-come, first-served basis. This created a toxic internal culture and an abysmal customer experience.

The average lead response time was over eight hours. By then, the prospect had already talked to three other agents. We pulled the server logs and saw that over 30% of leads received zero response. They were simply lost in the clutter of out-of-office replies and internal chatter. There was no accountability loop. Management had no visibility into which agent was working which lead or what the outcome was. They were pouring gasoline on the ground and hoping some of it would reach the engine.

Problem 3: Onboarding Was a 90-Day Ordeal

The operational chaos directly impacted agent retention. A new agent at Apex faced a three-month learning curve just to navigate the broken systems. They had to learn the clunky CRM, the manual process for updating the website, and the spreadsheet-based method for running comparative market analyses (CMAs). Training was tribal knowledge passed down from frustrated senior agents. This high cognitive load meant new agents spent their first quarter wrestling with software instead of closing deals.

This directly translated to high agent churn. The brokerage was a revolving door, constantly spending resources to recruit and train people who would leave for a competitor with a functioning tech stack within a year. The cost of a failed agent hire, including recruitment, training, and lost opportunity, was estimated at over $50,000. They were burning through talent and capital for no reason.

The Architecture of the Fix

Our solution wasn’t to throw out everything and buy a million-dollar all-in-one platform. That approach trades one set of problems for another and requires massive retraining. Instead, we decided to build a lightweight integration layer to force their existing tools to communicate and automate the manual work that was causing the primary issues. The strategy was to inject logic and data sanity where none existed.

Solution 1: Building a Real-Time Data Bridge

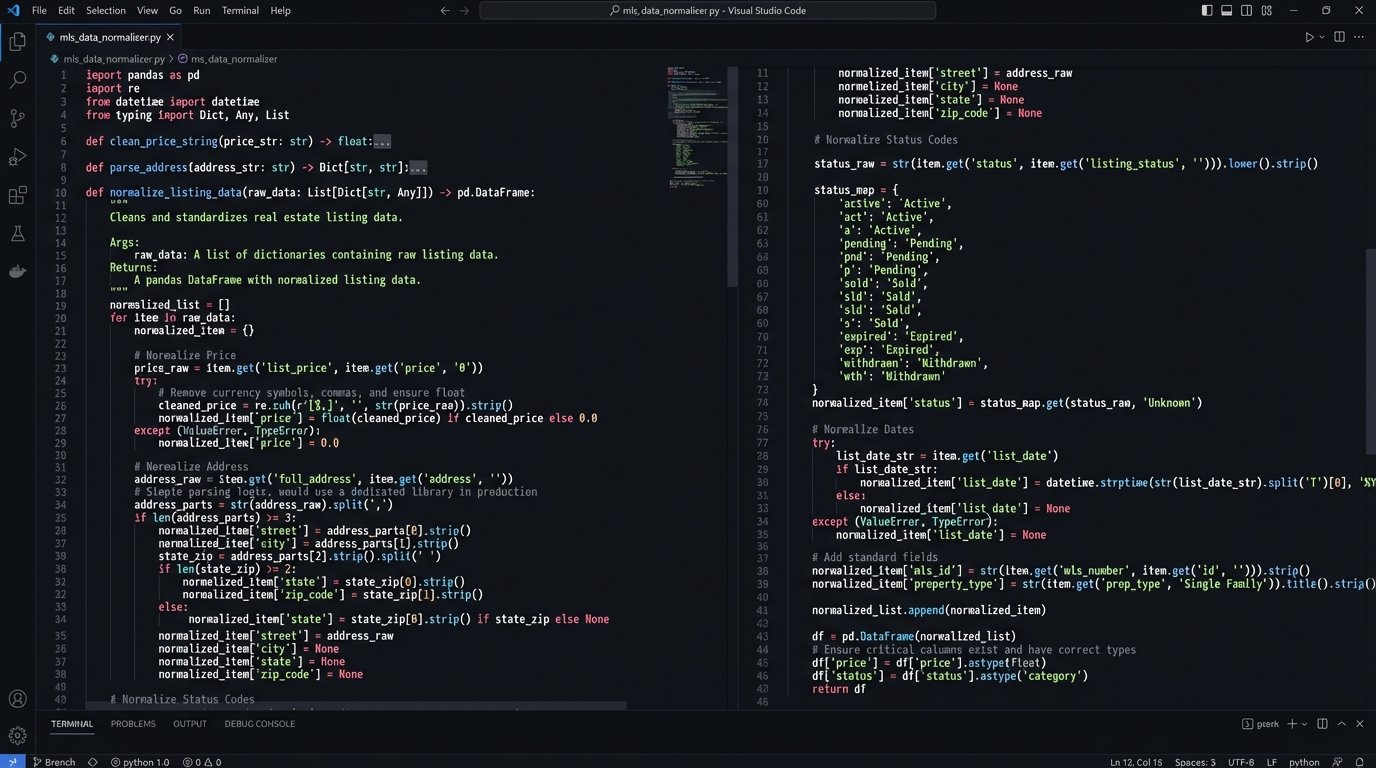

We first addressed the stale listings. The nightly CSV dump was scrapped entirely. We bypassed their old system and connected directly to the MLS board’s RETS feed, which provides near-real-time updates via a structured API. We built a small Python service using FastAPI, deployed on a simple cloud server, to act as a persistent data poller. Every five minutes, this service queries the RETS feed for any changes to listings within Apex’s service areas. The service ingests the raw data, which is usually a mess of inconsistent field names and formats.
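A minimal sketch of that polling loop, under stated assumptions, might look like the following. `fetch_changes` is a hypothetical callable standing in for whatever RETS client the service uses, and `ModificationTimestamp` is an assumed field name. The point is the high-water mark: each cycle asks only for listings modified since the last one it saw.

```python
import time
from datetime import datetime
from typing import Callable, Iterable

POLL_INTERVAL_SECONDS = 300  # five minutes, matching the schedule described above


def poll_once(fetch_changes: Callable[[datetime], Iterable[dict]],
              handle_listing: Callable[[dict], None],
              last_seen: datetime) -> datetime:
    """Process listings modified after last_seen; return the new high-water mark."""
    newest = last_seen
    for listing in fetch_changes(last_seen):
        handle_listing(listing)
        modified = listing["ModificationTimestamp"]  # assumed field name
        if modified > newest:
            newest = modified
    return newest


def run_forever(fetch_changes, handle_listing, start: datetime) -> None:
    """Poll on a fixed interval, carrying the high-water mark between cycles."""
    last_seen = start
    while True:
        try:
            last_seen = poll_once(fetch_changes, handle_listing, last_seen)
        except Exception as exc:
            # Keep the poller alive across transient feed errors; the unchanged
            # high-water mark means the next cycle re-requests the same window.
            print(f"poll failed, retrying next cycle: {exc}")
        time.sleep(POLL_INTERVAL_SECONDS)
```

Because a failed cycle leaves `last_seen` untouched, a feed outage delays updates rather than dropping them.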

The core of this service is a normalization function. It strips and standardizes addresses, formats prices as integers, and maps cryptic status codes like ‘PCHG’ or ‘BOMK’ to human-readable statuses like ‘Price Change’ or ‘Back on Market’.

```python
def normalize_listing_data(source_a_listing, source_b_listing):
    """
    A simplified example of merging and normalizing data from two sources.
    The real version handles dozens of fields and edge cases.
    """
    normalized_record = {}

    # Logic-check for the primary key (MLS Number).
    # `or` also falls back when source A has the key but an empty value.
    mls_id = source_a_listing.get('MLSId') or source_b_listing.get('mls_num')
    if not mls_id:
        return None  # Reject record if no identifier exists
    normalized_record['mls_id'] = mls_id

    # Strip currency symbols and force price to integer.
    # str() guards against feeds that send the price as a number.
    price_str = str(source_a_listing.get('ListPrice') or '0').replace('$', '').replace(',', '')
    normalized_record['price'] = int(float(price_str))

    # Standardize address components
    street_address = f"{source_a_listing.get('StreetNumber', '')} {source_a_listing.get('StreetName', '')}"
    normalized_record['full_address'] = ' '.join(street_address.strip().upper().split())
    normalized_record['zip_code'] = source_a_listing.get('ZipCode', '')

    # Map inconsistent status flags to a standard set
    status_map = {'A': 'Active', 'P': 'Pending', 'S': 'Sold', 'C': 'Contingent'}
    raw_status = source_b_listing.get('status_flag', 'U')
    normalized_record['status'] = status_map.get(raw_status, 'Unknown')

    return normalized_record
```

Once normalized, the data is pushed simultaneously to the website’s database (via a direct SQL connection) and to the old CRM’s API. The CRM’s API was barely documented and incredibly slow, so we had to build in retry logic and rate limiting. Getting data out of their old MS-SQL server was like trying to siphon gas with a wet noodle, but forcing data *in* was manageable. The result was that all three systems (MLS, website, and CRM) were now synchronized within minutes.
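The retry and rate-limiting behavior can be sketched roughly like this. It is an illustration, not the production code: `send` is a hypothetical callable wrapping the CRM call, and the attempt count, delays, and pause between calls are assumed values.

```python
import time
from typing import Callable


def push_with_retry(send: Callable[[dict], None],
                    record: dict,
                    max_attempts: int = 5,
                    base_delay: float = 1.0,
                    sleep: Callable[[float], None] = time.sleep) -> bool:
    """Try to deliver one record, backing off exponentially between failures."""
    for attempt in range(max_attempts):
        try:
            send(record)
            return True
        except Exception:
            if attempt == max_attempts - 1:
                return False  # give up; the caller can park the record for replay
            sleep(base_delay * (2 ** attempt))  # 1s, 2s, 4s, ...
    return False


def push_batch(send: Callable[[dict], None],
               records: list,
               min_interval: float = 0.5,
               sleep: Callable[[float], None] = time.sleep) -> list:
    """Push records one at a time with a pause between calls; return the failures."""
    failed = []
    for record in records:
        if not push_with_retry(send, record, sleep=sleep):
            failed.append(record)
        sleep(min_interval)  # crude rate limit so the slow API is not flooded
    return failed
```

Returning the failed records instead of raising lets the caller hold them and replay later, which matters when the target API is down for longer than the backoff window.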

Solution 2: Engineering an Automated Lead Router

To fix the lead leakage, we shut down the shared inbox. We configured every lead source (Zillow, the company website, and the rest) to send leads via webhook to a new endpoint on our FastAPI service. This immediately gave us structured data instead of unpredictable email bodies. The service parses the incoming JSON payload from the webhook, extracts the prospect’s information, and enriches it with property data from our newly centralized database.
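As a rough sketch of the parse-and-enrich step, here is framework-free Python that could sit behind such an endpoint. The payload field names (`name`, `email`, `phone`, `mls_id`) are assumptions for illustration; each real lead source defines its own schema and would need its own mapping.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass
class Lead:
    source: str
    name: str
    email: str
    phone: Optional[str]
    mls_id: Optional[str]


def parse_lead_payload(raw_body: bytes, source: str) -> Lead:
    """Turn a webhook's JSON body into a Lead, rejecting unusable payloads."""
    payload = json.loads(raw_body)
    if not isinstance(payload, dict):
        raise ValueError("lead payload must be a JSON object")
    email = (payload.get("email") or "").strip().lower()
    if not email:
        raise ValueError("lead payload has no email address")
    return Lead(
        source=source,
        name=(payload.get("name") or "").strip(),
        email=email,
        phone=payload.get("phone") or None,
        mls_id=payload.get("mls_id") or None,
    )


def enrich_lead(lead: Lead, listings_by_mls_id: dict) -> dict:
    """Attach the matching listing record, if any, from the synced listing store."""
    listing = listings_by_mls_id.get(lead.mls_id) if lead.mls_id else None
    return {"lead": lead, "listing": listing}
```

Rejecting a payload at the boundary keeps malformed leads out of the router, where they would otherwise fail later and less visibly.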

The routing logic itself is where the value is. We implemented a rules engine that assigns leads based on a cascade of criteria:

- Location: The lead is first offered to agents who specialize in that specific zip code or neighborhood.

- Price Point: The lead is matched with agents experienced in that price bracket, preventing a new agent from getting a multimillion-dollar inquiry.

- Round-Robin: Within a qualified group of agents, leads are distributed in a round-robin fashion to ensure fairness.

- Claim Timeout: An agent has 15 minutes to accept the lead via a click in their mobile app. If they don’t, the lead is automatically reassigned to the next agent in line.

This created a closed-loop system. The lead is sent, an agent is notified, they accept, and the CRM is automatically updated with the assignment. Management now has a dashboard showing every lead, who it’s assigned to, the response time, and its current status. The black hole was gone.
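The cascade above can be sketched in a few dozen lines. This is a simplified illustration under assumptions, not the production rules engine: the `Agent` fields are hypothetical, a single `max_price` ceiling stands in for price-bracket experience, and round-robin is modeled as "least recently offered first."

```python
from dataclasses import dataclass
from typing import List, Optional

CLAIM_TIMEOUT_SECONDS = 15 * 60  # the 15-minute claim window


@dataclass
class Agent:
    name: str
    zip_codes: set
    max_price: int  # highest list price this agent is cleared to handle (assumed)


class LeadRouter:
    def __init__(self, agents: List[Agent]):
        self.agents = agents
        self._tick = 0
        self._last_offered = {a.name: 0 for a in agents}

    def offer_order(self, zip_code: str, price: int) -> List[Agent]:
        """Filter by location and price, then order least recently offered first."""
        qualified = [a for a in self.agents
                     if zip_code in a.zip_codes and price <= a.max_price]
        # sorted() is stable, so agents never offered a lead keep roster order.
        return sorted(qualified, key=lambda a: self._last_offered[a.name])

    def _offer(self, agent: Agent) -> Agent:
        self._tick += 1
        self._last_offered[agent.name] = self._tick
        return agent

    def assign(self, zip_code: str, price: int) -> Optional[Agent]:
        """Offer the lead to the next qualified agent, or None if nobody qualifies."""
        order = self.offer_order(zip_code, price)
        return self._offer(order[0]) if order else None

    def reassign(self, zip_code: str, price: int, timed_out: Agent) -> Optional[Agent]:
        """After a claim timeout, offer the lead to the next agent in line."""
        order = [a for a in self.offer_order(zip_code, price)
                 if a.name != timed_out.name]
        return self._offer(order[0]) if order else None


def claim_expired(offered_at: float, now: float) -> bool:
    """True once the claim window has fully elapsed (timestamps in seconds)."""
    return now - offered_at > CLAIM_TIMEOUT_SECONDS
```

Tracking when each agent was last offered a lead, rather than keeping one rotating index, keeps the rotation fair even though the qualified group changes from lead to lead.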

Solution 3: A Unified Interface for Core Tasks

We didn’t replace the CRM or the document management system. Instead, we built a simple, web-based dashboard that acted as a facade for the legacy systems. This dashboard provided a single pane of glass for an agent’s most common tasks. It showed them their assigned leads from the new routing system, their active listings pulled from our synchronized database, and links to pending documents. We used the APIs of the existing tools to pull this data together. Most of the APIs were terrible, but they were good enough for read-only operations.
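A facade of this kind can be sketched as a thin aggregator over read-only fetchers. The three `fetch_*` callables below are hypothetical stand-ins for the legacy API clients. The error handling is an assumed design choice: it keeps one failing backend from blanking the whole page.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class AgentDashboard:
    """Facade: one read-only view over several backing systems."""
    fetch_leads: Callable[[str], List[dict]]
    fetch_listings: Callable[[str], List[dict]]
    fetch_documents: Callable[[str], List[dict]]

    def summary(self, agent_id: str) -> dict:
        sections = {
            "leads": self.fetch_leads,
            "listings": self.fetch_listings,
            "documents": self.fetch_documents,
        }
        view = {}
        errors = []
        for name, fetch in sections.items():
            try:
                view[name] = fetch(agent_id)
            except Exception as exc:
                # Degrade to an empty section and surface the failure instead.
                view[name] = []
                errors.append(f"{name}: {exc}")
        view["errors"] = errors
        return view
```

Keeping the facade read-only means the legacy systems remain the source of truth, so a bug in the dashboard cannot corrupt their data.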

For new agents, this was a massive improvement. Instead of learning three clunky interfaces, they learned one clean one. All the critical information was in one place. For complex or rare tasks, they could still log into the old systems, but 90% of their daily work could be done from the new dashboard. This drastically cut down the cognitive load and made the onboarding process about learning sales, not fighting software.

The Measured Results: From Stagnation to Scalability

The project was not without friction. Some veteran agents resisted the new lead system, preferring the old “shark tank” inbox. The CRM’s API went down twice, forcing us to build a more resilient queuing system. But the data shows the overhaul was a success. We tracked the core KPIs before and after the implementation, and the results were stark.

Quantifiable KPI Improvement

The numbers speak for themselves. We didn’t just improve workflows; we directly impacted the bottom line by plugging leaks and creating efficiencies.

- Listing Sync Latency: Dropped from an average of 48 hours to under 5 minutes. This eliminated client complaints about stale data entirely.

- Average Lead Response Time: Decreased from over 8 hours to an average of 12 minutes. The automated routing and timeout rules forced rapid engagement.

- Lead Conversion Rate: Increased from a dismal 0.9% to 3.5% within the first six months. Faster responses to the right agent simply converted more business.

- New Agent Time-to-First-Close: Reduced from an average of 95 days to 38 days. The simplified dashboard allowed them to focus on revenue-generating activities immediately.

- Agent Churn: Annual agent churn rate fell from 40% to 15% in the year following the rollout. Better tools led to happier, more productive agents.

Calculating the Return on Investment

The initial project cost was significant, involving development hours and API subscription fees. However, the ROI became clear quickly. The increased lead conversion rate alone generated an estimated $400,000 in additional gross commission income in the first year. The reduction in agent churn saved the company an estimated $250,000 in recruitment, training, and lost-opportunity costs: five avoided failed hires at roughly $50,000 each. The brokerage went from stagnating to having its best year on record.
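The arithmetic behind those figures is simple enough to write down. The sketch below uses only the estimates quoted in this article. The project cost is not stated here, so it is left as a parameter rather than guessed.

```python
ADDED_COMMISSION_INCOME = 400_000  # estimated first-year gain from higher conversion
AVOIDED_FAILED_HIRES = 5           # failed hires avoided after the rollout
COST_PER_FAILED_HIRE = 50_000      # approximate cost of one failed hire


def first_year_benefit() -> int:
    """Sum of the two estimated first-year gains."""
    return ADDED_COMMISSION_INCOME + AVOIDED_FAILED_HIRES * COST_PER_FAILED_HIRE


def roi(project_cost: float) -> float:
    """Return on investment as a fraction: (benefit - cost) / cost."""
    if project_cost <= 0:
        raise ValueError("project_cost must be positive")
    return (first_year_benefit() - project_cost) / project_cost
```

The two estimates combine to $650,000 in first-year benefit, so the project pays for itself within the year at any cost below that figure.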

This wasn’t about buying the fanciest “proptech” solution. It was about identifying the specific points of operational failure and applying targeted automation and system design to fix them. They now have a scalable, observable platform they can build on, rather than a fragile collection of parts that could break at any moment.