Success Story: Using AI Scheduling to Fill Every Listing Open House

The Problem: Manual Follow-Up Is a Data Sink

Before this project, our lead funnel was a mess. New open house inquiries from Zillow, Realtor.com, and our own site would land in a shared inbox. Agents were expected to jump on them, but “jumping on them” meant manually dialing, texting from personal phones, and tracking interactions in a spreadsheet that was perpetually out of date. The average time-to-first-contact was north of an hour, and a third of leads never received a response at all.

The core failure was a logistics bottleneck, not a lack of agent effort. Each lead required a context switch. Agents had to stop what they were doing, look up the property details, check their own calendar, and then initiate a conversation. This manual process was brittle, unscalable, and guaranteed that high-intent leads would go cold waiting for a human to finish their last meeting.

The Architecture: A State Machine Bridged to External APIs

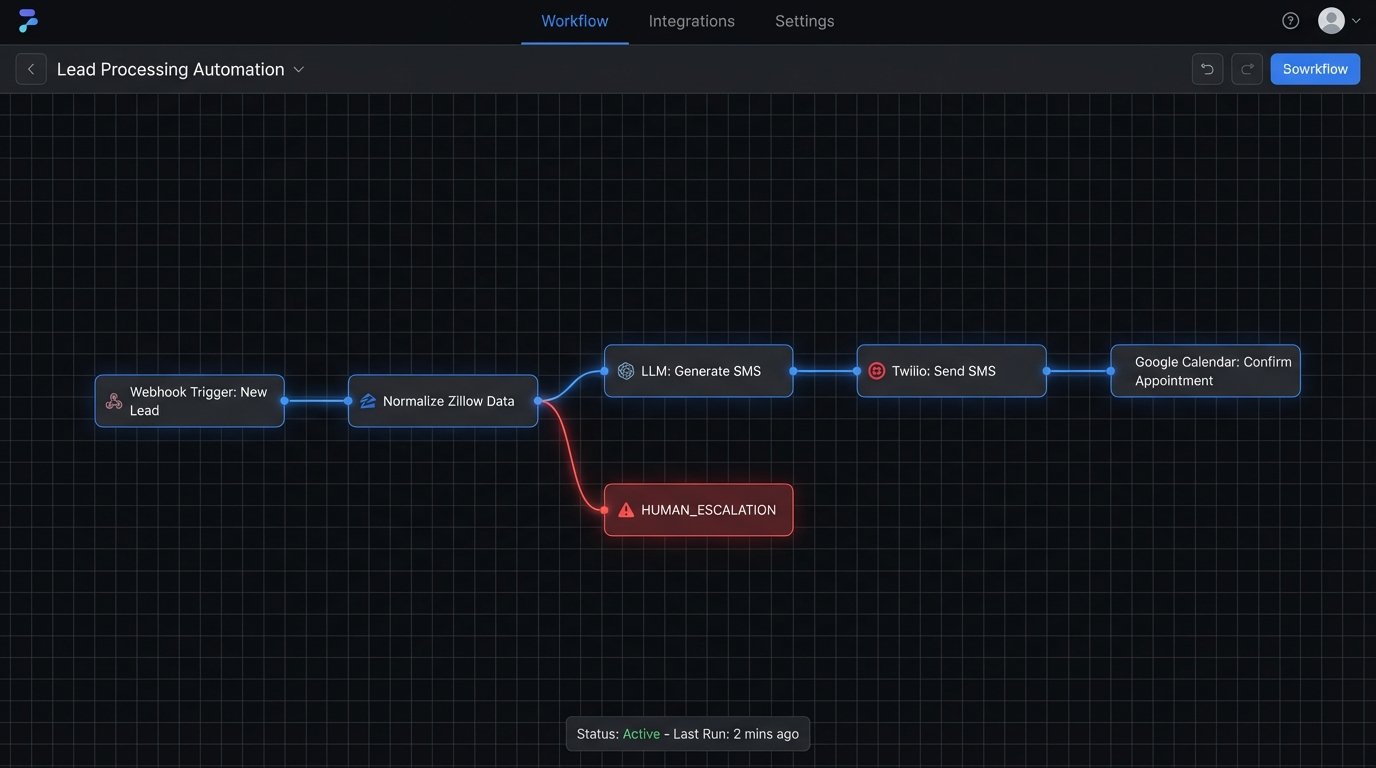

We rejected off-the-shelf booking tools. Most are glorified form-fillers that create more work by simply dumping appointments onto a calendar without any qualification. Our goal was to automate the initial qualification and scheduling conversation itself. The solution was built on a state machine that manages the lifecycle of a lead from initial inquiry to a confirmed calendar event.

The system is triggered by a webhook. When a new lead comes in, the source platform POSTs a JSON payload to our endpoint. The first job is to normalize this data. A lead from Zillow uses different field names than a lead from our own Next.js frontend, so a normalization layer discards source-specific fields and maps the rest to a standard internal format: `contact_info`, `property_id`, `source`, and `timestamp`.
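A minimal sketch of such a normalization layer, assuming one mapper function per source. The Zillow field names match the ones used in the Flask example in this article; the website field names, the `Lead` dataclass, and the shape of `contact_info` are hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Any, Callable, Dict


@dataclass(frozen=True)
class Lead:
    """Standard internal format (the shape of contact_info is an assumption)."""
    contact_info: Dict[str, Any]
    property_id: str
    source: str
    timestamp: datetime


def _from_zillow(payload: Dict[str, Any]) -> Dict[str, Any]:
    # Field names taken from the article's Flask example.
    return {
        "contact_info": {"name": payload.get("contact_name"),
                         "phone": payload.get("phone_number")},
        "property_id": payload.get("listing_id"),
    }


def _from_website(payload: Dict[str, Any]) -> Dict[str, Any]:
    # Hypothetical field names for the in-house Next.js form.
    return {
        "contact_info": {"name": payload.get("name"),
                         "phone": payload.get("phone")},
        "property_id": payload.get("propertyId"),
    }


# One mapper per source; supporting a new source means adding one entry.
NORMALIZERS: Dict[str, Callable[[Dict[str, Any]], Dict[str, Any]]] = {
    "zillow": _from_zillow,
    "website": _from_website,
}


def normalize_lead(source: str, payload: Dict[str, Any]) -> Lead:
    """Map a source-specific payload onto the internal lead format."""
    try:
        mapper = NORMALIZERS[source]
    except KeyError:
        raise ValueError(f"Unknown lead source: {source!r}") from None
    fields = mapper(payload)
    # Without a property and a phone number there is nothing to text about.
    if not fields["property_id"] or not fields["contact_info"].get("phone"):
        raise ValueError("Lead is missing a property ID or phone number")
    return Lead(source=source, timestamp=datetime.now(timezone.utc), **fields)
```

Rejecting incomplete leads at this boundary keeps malformed payloads out of the state machine, which can then assume every lead has a phone number to text.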

Once normalized, the lead enters the `NEW_LEAD` state. This triggers the first action: an immediate SMS via the Twilio API. We chose SMS over email for the initial contact because text messages are opened far more often than emails. The message isn’t a generic template. The system feeds the property data to an LLM to generate a personalized opener that confirms the property the lead inquired about and asks for their availability for the upcoming open house.

The conversation logic is governed by the state machine. Here is a simplified version of the states:

- NEW_LEAD: The initial state. The system triggers the first outbound SMS.

- AWAITING_RESPONSE: The system has sent a message and is now waiting for an inbound reply. A timer is set; if no response arrives within a set period (e.g., 2 hours), the system sends a polite follow-up.

- NEEDS_CLASSIFICATION: An inbound message is received. The LLM parses the user’s intent. Is it a confirmation? A question? A request to reschedule? A negative response?

- TIME_PROPOSED: The system has proposed available slots after checking the agent’s calendar.

- CONFIRMED: The user agreed to a time. The system creates the calendar event via the Google Calendar API, invites the lead, and sends a final confirmation text.

- HUMAN_ESCALATION: The LLM’s confidence score for intent classification is below our threshold (e.g., the user asks a complex question about property taxes). The conversation is flagged and routed to a human agent’s dashboard.
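The states above can be sketched as an enum plus a transition table. The state names come from the list; the event names and the exact set of allowed transitions are assumptions made for illustration, not the production rules.

```python
from enum import Enum, auto


class LeadState(Enum):
    NEW_LEAD = auto()
    AWAITING_RESPONSE = auto()
    NEEDS_CLASSIFICATION = auto()
    TIME_PROPOSED = auto()
    CONFIRMED = auto()
    HUMAN_ESCALATION = auto()


# (current state, event) -> next state. Event names are hypothetical.
TRANSITIONS = {
    (LeadState.NEW_LEAD, "sms_sent"): LeadState.AWAITING_RESPONSE,
    # A timeout sends the follow-up and keeps waiting.
    (LeadState.AWAITING_RESPONSE, "timeout"): LeadState.AWAITING_RESPONSE,
    (LeadState.AWAITING_RESPONSE, "reply_received"): LeadState.NEEDS_CLASSIFICATION,
    (LeadState.NEEDS_CLASSIFICATION, "slots_offered"): LeadState.TIME_PROPOSED,
    (LeadState.NEEDS_CLASSIFICATION, "time_accepted"): LeadState.CONFIRMED,
    (LeadState.NEEDS_CLASSIFICATION, "low_confidence"): LeadState.HUMAN_ESCALATION,
    # Any reply to a proposal goes back through intent classification.
    (LeadState.TIME_PROPOSED, "reply_received"): LeadState.NEEDS_CLASSIFICATION,
}


def next_state(state: LeadState, event: str) -> LeadState:
    """Return the state that follows `state` on `event`, or raise if not allowed."""
    try:
        return TRANSITIONS[(state, event)]
    except KeyError:
        raise ValueError(f"Event {event!r} is not valid in state {state.name}") from None
```

Keeping the transitions in a single table means an unexpected event fails loudly instead of silently moving a lead into the wrong state.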

Implementation Details and The Inevitable Friction

Connecting these pieces was not a clean process. The first major hurdle was calendar integration. We needed real-time visibility into each agent’s schedule to avoid double-booking. We used a service account with domain-wide delegation in Google Workspace to read calendar data. This required getting the OAuth scopes and the admin-console authorization right, a process that is always more complicated than the documentation suggests.

The system polls for free/busy information but relies on push notifications (webhooks) from the Google Calendar API for near-real-time updates when an agent manually adds or deletes an event. This shrinks the window in which our system can offer a slot that an agent filled seconds ago on their phone, although it cannot close that window entirely. It’s a constant battle for state consistency.
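Once the busy intervals are known, proposing slots is a pure computation. The sketch below assumes the busy intervals have already been fetched (for example from a free/busy query) as `(start, end)` pairs; the function name, the fixed slot length, and the window are hypothetical.

```python
from datetime import datetime, timedelta
from typing import List, Tuple

Interval = Tuple[datetime, datetime]


def free_slots(window_start: datetime, window_end: datetime,
               busy: List[Interval], slot_length: timedelta) -> List[Interval]:
    """Return back-to-back slots of `slot_length` that avoid every busy interval."""
    slots: List[Interval] = []
    cursor = window_start
    for busy_start, busy_end in sorted(busy):
        # Fill the gap before this busy block with as many slots as fit.
        while cursor + slot_length <= min(busy_start, window_end):
            slots.append((cursor, cursor + slot_length))
            cursor += slot_length
        # Skip past the busy block (max() also handles overlapping blocks).
        cursor = max(cursor, busy_end)
    # Fill whatever remains after the last busy block.
    while cursor + slot_length <= window_end:
        slots.append((cursor, cursor + slot_length))
        cursor += slot_length
    return slots
```

Because a calendar can change between the moment a slot is offered and the moment the lead accepts, it is safer to re-run this check against fresh busy data immediately before creating the event.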

Another significant challenge was parsing user responses. Humans do not communicate like APIs. A response of “Sure, that works” is easy. A response like “I can’t do Saturday morning, what about later in the day?” is harder. Prompt engineering became critical. We had to structure our prompts to the LLM to force it to return a structured JSON object indicating intent and extracted entities (like dates and times), not just a conversational string.

Trying to force unstructured human text into a rigid data model is like shoving a firehose through a needle. You will lose some data and misinterpret some intent. The escape hatch, our `HUMAN_ESCALATION` state, was the most important feature we built. It admits the machine is not perfect.
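A minimal sketch of the guard between the LLM and the state machine, assuming the prompt asks for a JSON object with `intent`, `entities`, and `confidence` keys. The intent labels, the key names, and the threshold value are assumptions. Anything that fails validation is treated as an escalation rather than guessed at.

```python
import json
from typing import Any, Dict

# Hypothetical labels mirroring the intents named in the state list.
ALLOWED_INTENTS = {"confirm", "question", "reschedule", "decline"}
CONFIDENCE_THRESHOLD = 0.75  # placeholder value; tune against real conversations


def parse_classification(raw_reply: str) -> Dict[str, Any]:
    """Validate the LLM's reply; fall back to escalation on any doubt."""
    escalate = {"intent": "escalate", "entities": {}, "confidence": 0.0}
    try:
        data = json.loads(raw_reply)
    except json.JSONDecodeError:
        return escalate  # the model answered in prose instead of JSON
    if not isinstance(data, dict):
        return escalate
    intent = data.get("intent")
    confidence = data.get("confidence")
    entities = data.get("entities", {})
    if not isinstance(intent, str) or intent not in ALLOWED_INTENTS:
        return escalate
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        return escalate
    if not isinstance(entities, dict) or confidence < CONFIDENCE_THRESHOLD:
        return escalate
    return {"intent": intent, "entities": entities, "confidence": float(confidence)}
```

The function never raises on bad model output. A result with `intent == "escalate"` is the signal to move the conversation into `HUMAN_ESCALATION`.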

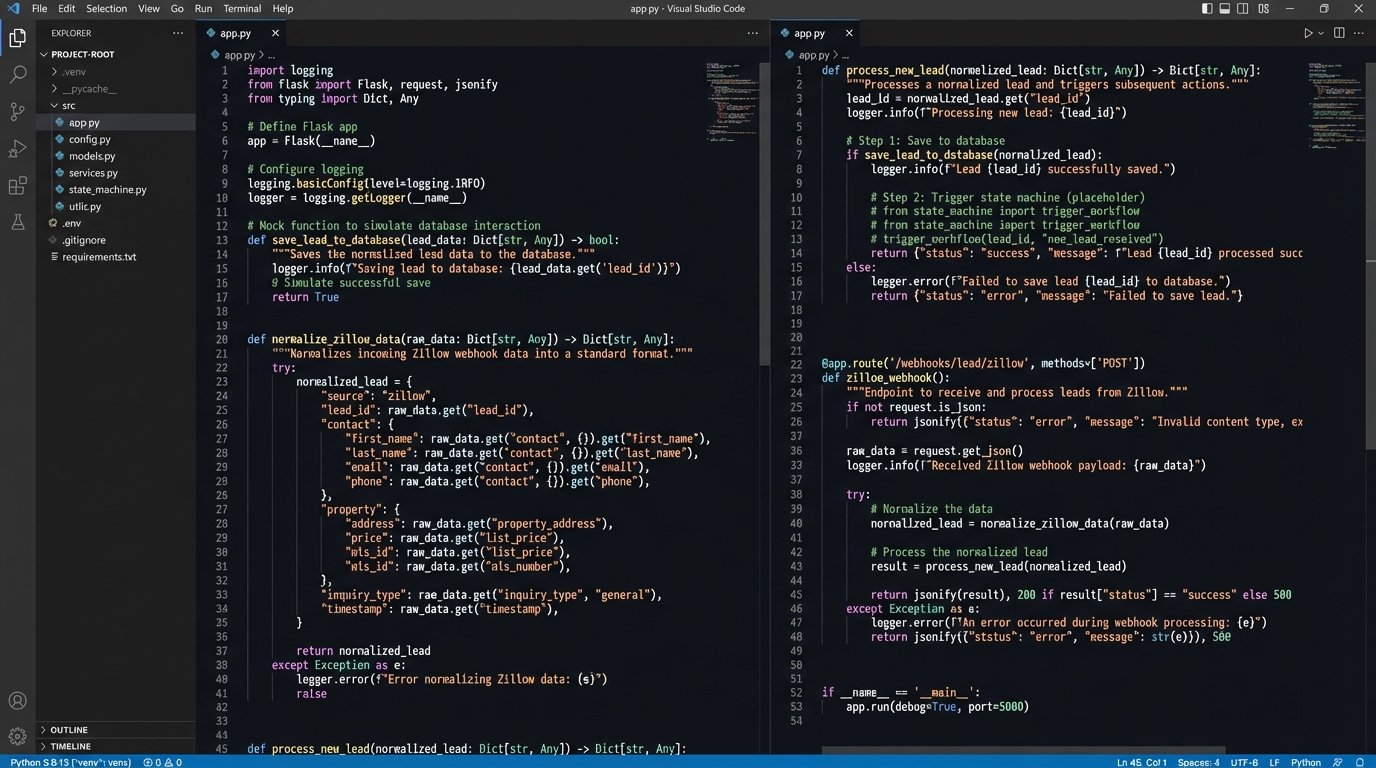

Here is a basic Python code example using Flask to show what the initial webhook ingestion point looks like. It receives the lead, normalizes it, and passes it to the state machine handler.

    from flask import Flask, request, jsonify

    app = Flask(__name__)


    def normalize_zillow_lead(data):
        """Extract and rename fields from Zillow's payload."""
        return {
            'full_name': data.get('contact_name'),
            'phone': data.get('phone_number'),
            'property_id': data.get('listing_id'),
            'source': 'Zillow',
        }


    def process_new_lead(lead_data):
        """Hand the lead to the state machine, which sends the first SMS."""
        print(f"Processing new lead for {lead_data['full_name']} for property {lead_data['property_id']}")
        # ... call to StateMachineManager.handle_event('NEW', lead_data)


    @app.route('/webhooks/lead/zillow', methods=['POST'])
    def handle_zillow_webhook():
        # silent=True returns None instead of raising on a missing or malformed JSON body
        raw_data = request.get_json(silent=True)
        if not isinstance(raw_data, dict):
            return jsonify({'status': 'error', 'message': 'Invalid payload'}), 400

        normalized_data = normalize_zillow_lead(raw_data)

        # Push to a background queue to avoid blocking the webhook response
        # For simplicity, calling directly here
        process_new_lead(normalized_data)

        return jsonify({'status': 'received'}), 200


    if __name__ == '__main__':
        app.run(debug=True)  # debug mode is for local development only

This is a simplified version. The actual implementation uses a message queue like RabbitMQ to decouple the webhook receiver from the processing logic. This makes the system more resilient to processing spikes and downstream API failures.
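The decoupling can be sketched with Python's in-process `queue.Queue` and a worker thread standing in for a real broker. This is not RabbitMQ and it does not survive a process restart. It only shows the shape: the webhook handler enqueues and returns, and a separate worker does the slow work. All names here are hypothetical.

```python
import queue
import threading

lead_queue = queue.Queue()
processed = []  # stands in for the state machine's side effects


def process_new_lead(lead):
    # Placeholder for the real work (state machine, SMS, calendar calls).
    processed.append(lead["property_id"])


def worker():
    """Consume leads until a None sentinel arrives."""
    while True:
        lead = lead_queue.get()
        try:
            if lead is None:
                return
            process_new_lead(lead)
        except Exception as exc:
            # A failing lead must not kill the worker; log it and move on.
            print(f"Failed to process lead: {exc!r}")
        finally:
            lead_queue.task_done()


def start_worker():
    thread = threading.Thread(target=worker, daemon=True)
    thread.start()
    return thread


def enqueue_lead(lead):
    """What the webhook handler would call instead of processing inline."""
    lead_queue.put(lead)
```

With a durable broker, the same split lets the receiver acknowledge the webhook quickly even while SMS, LLM, or calendar calls are slow or failing, since unprocessed leads wait in the queue instead of being lost.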

The Results: Quantified and Unambiguous

The metrics shifted almost immediately after deployment. We stopped talking about anecdotal evidence and focused on hard numbers pulled from the system’s logs and our CRM.

Time-to-First-Contact: This dropped from an average of over 60 minutes to a median of 12 seconds. The automation starts on the first SMS the moment the webhook is received.

Appointment Booking Rate: The percentage of inbound leads that resulted in a confirmed open house appointment increased from 18% to 45%. We were converting raw inquiries into actual opportunities at 2.5 times the previous rate.

Agent Efficiency: We calculated that this system saved each agent on the pilot team an average of 4 hours per week. This was time previously spent chasing down leads, checking calendars, and sending repetitive confirmation texts. It freed them up for higher-value tasks, like handling escalated conversations and preparing for the now-packed open houses.

Open House Attendance: The number of registered attendees per open house more than tripled, from an average of 6 to over 20. The system sends automated reminders 24 hours and 2 hours before the scheduled time, which drastically cut down the no-show rate.
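The reminder timing can be sketched as a small helper. The 24-hour and 2-hour offsets come from the text above; the function name and the rule of skipping reminders whose send time has already passed (for last-minute bookings) are assumptions.

```python
from datetime import datetime, timedelta
from typing import List

REMINDER_OFFSETS = (timedelta(hours=24), timedelta(hours=2))


def reminder_times(event_start: datetime, now: datetime) -> List[datetime]:
    """Return the reminder send times that are still in the future."""
    return [event_start - offset
            for offset in REMINDER_OFFSETS
            if event_start - offset > now]
```

A lead who books less than 24 hours ahead gets only the 2-hour reminder, and one who books inside the last two hours gets none beyond the confirmation text.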

Learnings and The API Tax

This system is not a “set it and forget it” solution. It requires monitoring. API keys expire, upstream data formats change without warning, and the LLM can occasionally produce bizarre output that needs to be reviewed. The cost is also a factor. Every SMS segment, every API call to the LLM, and every calendar check incurs a small fee. This “API Tax” is real and must be budgeted for. It’s a wallet-drainer if you don’t track it.
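One way to keep the cost visible is to compute it per lead from measured usage. The sketch below deliberately contains no real prices: the unit prices and per-lead usage figures are inputs you would fill in from your own invoices and logs, and every class and function name is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UnitPrices:
    """Per-unit prices in dollars, taken from your own invoices."""
    sms_segment: float
    llm_call: float
    calendar_call: float


@dataclass(frozen=True)
class LeadUsage:
    """Average usage per lead, measured from your own logs."""
    sms_segments: float
    llm_calls: float
    calendar_calls: float


def cost_per_lead(prices: UnitPrices, usage: LeadUsage) -> float:
    """Sum of each unit price multiplied by its average per-lead usage."""
    return (prices.sms_segment * usage.sms_segments
            + prices.llm_call * usage.llm_calls
            + prices.calendar_call * usage.calendar_calls)


def monthly_cost(prices: UnitPrices, usage: LeadUsage, leads_per_month: int) -> float:
    return cost_per_lead(prices, usage) * leads_per_month
```

Tracking the per-lead figure over time shows whether longer conversations or extra LLM calls are quietly pushing the bill up.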

The biggest lesson was the importance of the human-in-the-loop architecture. The goal was never to build a fully autonomous agent. The goal was to build a powerful filter that handles 80% of the repetitive conversational overhead, allowing human agents to focus on the 20% of conversations that require genuine expertise and nuance.

Future iterations will involve deeper integration with property data. The system should be able to answer simple questions like “Is there a garage?” by pulling structured data from the MLS instead of escalating to a human. We are also exploring more advanced lead scoring models to prioritize follow-up for users who ask buying-intent questions versus those who are just casually browsing.