The Lie of the Unified Real Estate Platform

The average agent’s tech stack is a liability. It is a fragile collection of expensive, siloed SaaS products, each promising to be the single source of truth. The CRM doesn’t sync properly with the marketing automation tool. The IDX feed provider has an API from 2009 that times out under load. Everything is stitched together with brittle Zapier workflows that break if a field name changes.

We’ve been sold a bill of goods. The industry’s obsession with buying pre-packaged “solutions” has created a dependency on rigid systems that cannot handle the most valuable data type in real estate: unstructured text. Client emails, showing feedback, and the public remarks field in an MLS listing contain the highest-value signals, yet almost every platform treats them as inert metadata.

Large language models are not another app to add to this teetering pile. They are a foundational layer for processing that unstructured text. Stop thinking of ChatGPT as a marketing copy generator. Start thinking of it as a universal data parser and a reasoning engine you can command with plain English.

The goal isn’t to buy another tool. It’s to build a logic layer that makes your existing data actually work.

A Practical First Step: Structuring MLS Descriptions

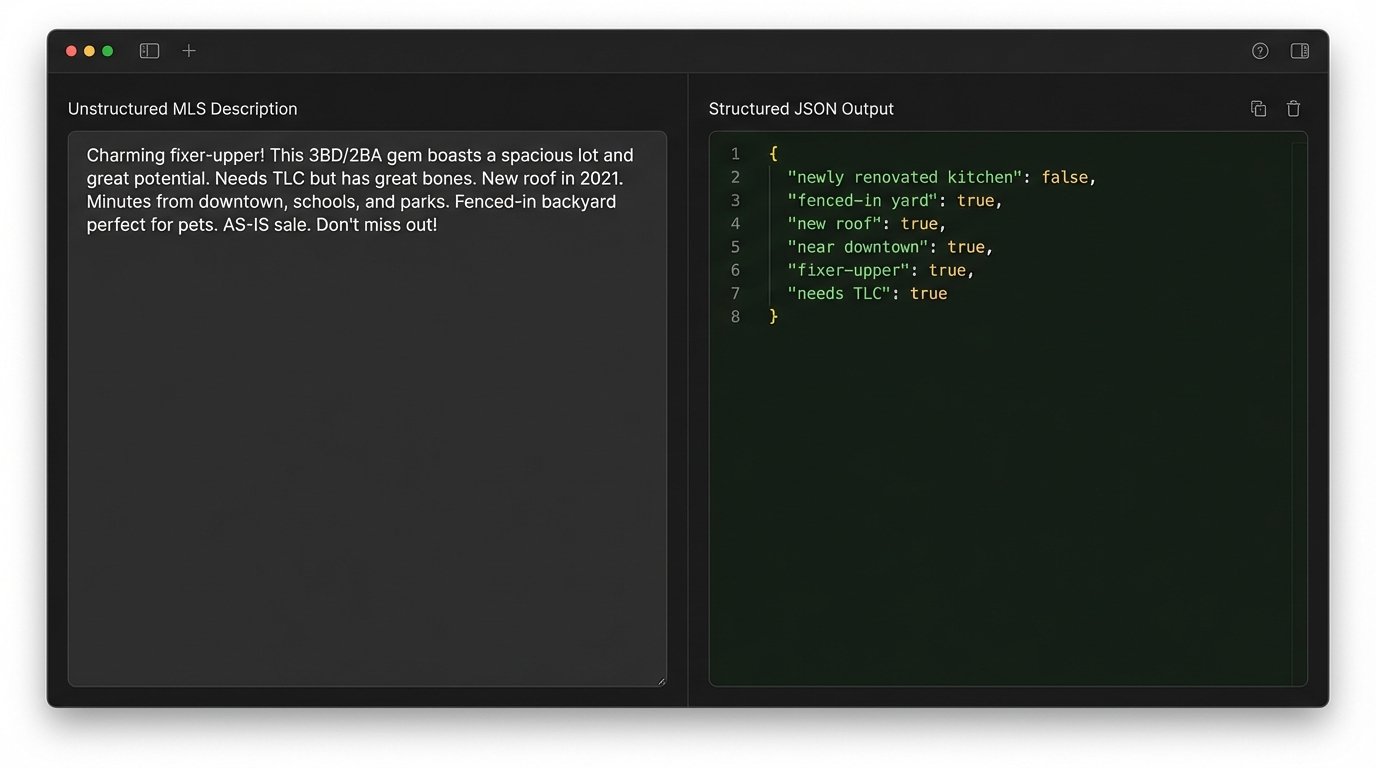

Every MLS listing has a “public remarks” field. It is a chaotic blob of text written by agents, filled with abbreviations, typos, and marketing fluff. Inside that mess are concrete, valuable features: “fenced-in yard,” “newly renovated kitchen,” “mother-in-law suite,” “impact windows.” Extracting these features programmatically has always been a regex nightmare that fails on edge cases.

An LLM can shred this problem in seconds. You can feed it the raw text blob and instruct it to return a structured JSON object containing only the specific features you care about. This transforms a useless string into a queryable dataset. Now you can run queries for clients that your IDX provider’s search filters could never handle, like “show me all houses with a Sub-Zero fridge and a screened-in lanai.”

This isn’t theory. You feed the model a description and a target schema. The model forces the text to conform to the structure.

From Text Blob to Key-Value Pairs

The operation is simple. Pull the listing data from your MLS feed, isolate the description string, and then make a single API call to a model like GPT-4 (the example below uses the cheaper gpt-3.5-turbo). The magic is in the prompt, where you define the exact output structure you need. You are not asking it a question. You are giving it an instruction to perform a data transformation.

Consider this Python function using the OpenAI library. It takes a raw description and a list of desired features, then forces the model to return a boolean flag for each. This is about control, not creativity.

    import json

    import openai

    # Placeholder only. In practice, load the key from an environment variable.
    openai.api_key = 'YOUR_API_KEY'


    def extract_property_features(description: str, features: list) -> dict:
        """
        Uses an LLM to extract a predefined list of features from a property description.

        :param description: The raw text from the MLS listing.
        :param features: A list of strings representing the features to check for.
        :return: A dictionary with feature names as keys and boolean values.
        """
        feature_list = ", ".join(features)

        prompt = f"""
        Analyze the following real estate property description.
        Based ONLY on the text provided, determine if the following features are present.
        Respond with a JSON object where the keys are the feature names and the values are boolean (true or false).
        The features to check for are: {feature_list}.

        Description: "{description}"

        JSON Response:
        """

        try:
            response = openai.chat.completions.create(
                model="gpt-3.5-turbo",
                messages=[
                    {"role": "system", "content": "You are a data extraction bot that only outputs JSON."},
                    {"role": "user", "content": prompt},
                ],
                temperature=0.0,
            )
            # The model returns a string. Parse it so the function returns
            # the dict its signature promises.
            result = json.loads(response.choices[0].message.content)
            if not isinstance(result, dict):
                print("Model output was valid JSON but not an object.")
                return {}
            return result
        except json.JSONDecodeError as e:
            # The model replied with something that is not valid JSON.
            print(f"Could not parse model output: {e}")
            return {}
        except Exception as e:
            # A real implementation would have proper logging.
            print(f"API call failed: {e}")
            return {}


    # Example Usage
    mls_description = "Stunning 3/2 home with a newly renovated kitchen featuring quartz countertops. Enjoy the Florida lifestyle in your fenced-in yard. No HOA. Roof replaced in 2021. Note: Does not have a pool."
    target_features = ["newly renovated kitchen", "fenced-in yard", "pool", "HOA"]

    extracted_data = extract_property_features(mls_description, target_features)
    print(extracted_data)

    # Expected output, if the model follows the instructions (a Python dict):
    # {'newly renovated kitchen': True, 'fenced-in yard': True, 'pool': False, 'HOA': False}

This simple script replaces what used to require either tedious manual data entry or a complex, unreliable parsing engine. It’s not about the code’s complexity, but the power of the API call it makes.
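Once every listing carries a dictionary of flags like the one above, the Sub-Zero-and-lanai query becomes a plain filter. The sketch below is a minimal illustration, not a production search layer. The listing IDs, the `listings` dictionary, and the `find_listings` helper are all hypothetical, and it assumes each dictionary came from `extract_property_features` and has already been parsed into Python booleans.

```python
from typing import Dict, Iterable, List

# Hypothetical data: listing ids mapped to the flag dicts produced by
# extract_property_features(). The ids and values are made up.
listings: Dict[str, Dict[str, bool]] = {
    "A100": {"Sub-Zero fridge": True, "screened-in lanai": True, "pool": False},
    "A101": {"Sub-Zero fridge": True, "screened-in lanai": False, "pool": True},
    "A102": {"Sub-Zero fridge": False, "screened-in lanai": True, "pool": True},
}


def find_listings(listings: Dict[str, Dict[str, bool]], required: Iterable[str]) -> List[str]:
    """Return ids of listings where every required feature is flagged True."""
    required = list(required)
    return [
        listing_id
        for listing_id, flags in listings.items()
        # A missing key is treated as "not present" rather than as an error.
        if all(flags.get(feature) is True for feature in required)
    ]


matches = find_listings(listings, ["Sub-Zero fridge", "screened-in lanai"])
print(matches)  # ['A100']
```

In a real deployment the flags would more likely live in database columns, but the principle is the same: the text has become something you can filter on.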

Automating Lead Triage and Qualification

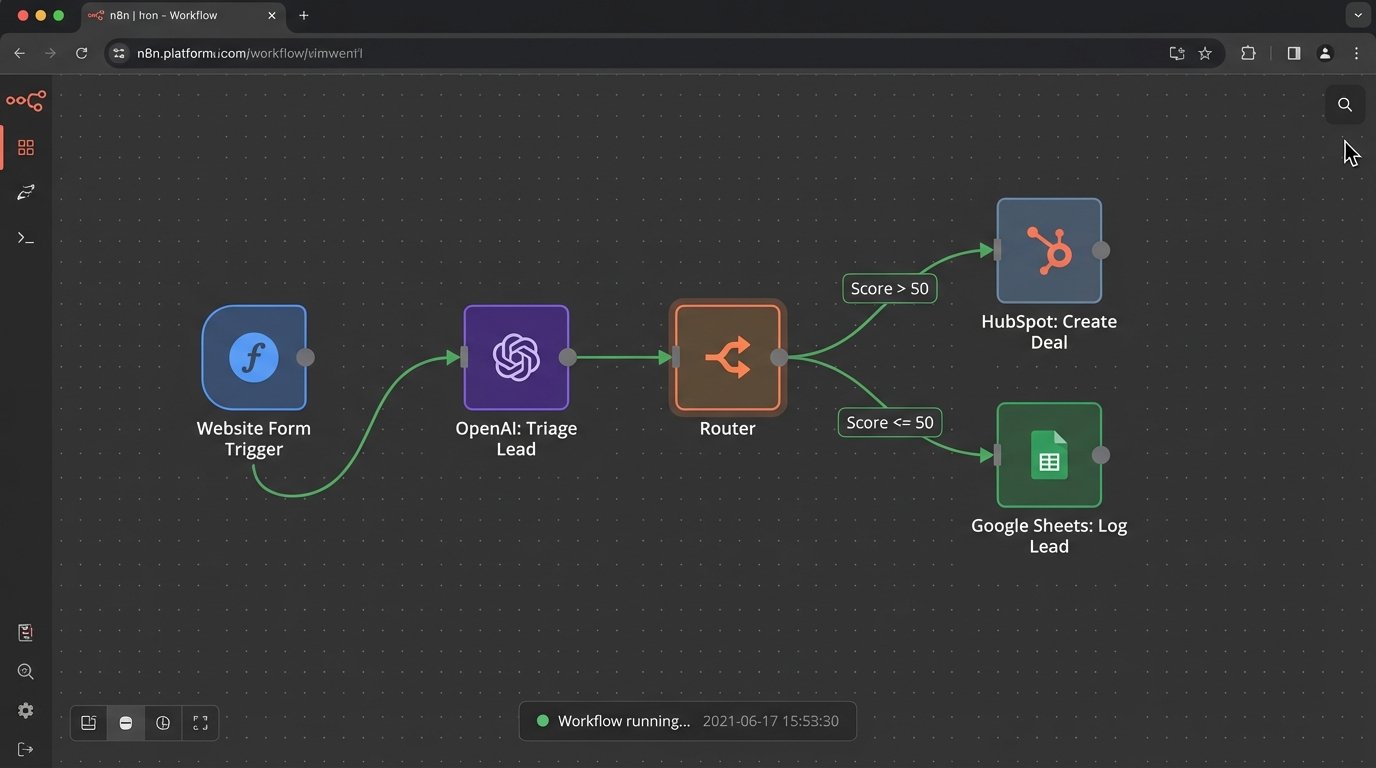

The next logical step is to point this capability at inbound leads. Every realtor’s website has a “Contact Us” form. The submissions are a mixed bag of high-intent clients, tire-kickers, spambots, and people looking for rentals. An agent’s most valuable asset is time, and sifting through this inbound traffic to find the real signals is a massive time sink.

You can pipe the raw text from every form submission, email, or Zillow inquiry directly into an LLM endpoint. The prompt’s job is to act as a triage nurse. It can classify the lead’s intent, extract key information like budget or timeline, and score the lead’s quality based on the language used. A message saying “I need to buy in the next 30 days, my budget is $800k” gets a score of 10 and triggers a webhook to the CRM for immediate follow-up. A message saying “wat homes u got” gets a score of 1 and is logged to a spreadsheet for a generic drip campaign.

This is a rule engine that you program with English. It’s more flexible than any set of dropdowns and conditional logic builders in a typical marketing automation platform. This is about shoving a firehose of messy, human-generated text through a needle of structured logic.
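A rough sketch of that triage step might look like the following. Everything named here is an assumption made for illustration: the prompt wording, the JSON keys, the cutoff of 8, and the route names are not from any particular CRM or platform. The routing function works on the model's raw reply, so it can be exercised without an API call.

```python
import json

# Hypothetical prompt template; the keys and categories are illustrative.
TRIAGE_PROMPT = """Classify the following inbound real estate lead.
Respond with a JSON object with exactly these keys:
"intent" (one of "buy", "sell", "rent", "spam", "unknown"),
"budget" (a number in dollars, or null if not stated),
"timeline_days" (a number, or null if not stated),
"score" (an integer from 1 to 10 for lead quality).

Message: {message}
"""

HOT_LEAD_THRESHOLD = 8  # assumed cutoff; tune it to your own pipeline


def route_lead(raw_response: str) -> str:
    """Decide where a lead goes based on the model's JSON reply."""
    try:
        triage = json.loads(raw_response)
    except json.JSONDecodeError:
        return "manual_review"
    if not isinstance(triage, dict):
        return "manual_review"
    score = triage.get("score")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(score, bool) or not isinstance(score, int) or not 1 <= score <= 10:
        return "manual_review"
    if triage.get("intent") == "spam":
        return "discard"
    if score >= HOT_LEAD_THRESHOLD:
        return "crm_webhook"
    return "drip_campaign"


prompt = TRIAGE_PROMPT.format(message="wat homes u got")  # what you would send

hot = '{"intent": "buy", "budget": 800000, "timeline_days": 30, "score": 10}'
cold = '{"intent": "unknown", "budget": null, "timeline_days": null, "score": 1}'
print(route_lead(hot))                          # crm_webhook
print(route_lead(cold))                         # drip_campaign
print(route_lead("Sure! Here is the JSON..."))  # manual_review
```

Anything the function cannot interpret falls through to a human. That default matters more than the threshold you pick.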

Latency and Hallucination Are Real Constraints

This process is not instantaneous. An API call to GPT-4 can take several seconds. That is unacceptable for a customer-facing chatbot but perfectly fine for backend lead processing that a human would take minutes to perform. Cost is also a factor: at high volume, API calls add up. But you must weigh that against the monthly subscription cost of a bloated marketing platform and the opportunity cost of an agent’s time spent on manual triage.
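The trade-off is simple arithmetic, and it is worth running with your own numbers. The helper names below are hypothetical, and every figure in the usage lines is a placeholder, not a real price or a measured workload. Check your provider's current pricing before drawing conclusions.

```python
def monthly_api_cost(calls_per_month: int, tokens_per_call: int, usd_per_1k_tokens: float) -> float:
    """Estimated API spend: calls x tokens per call x price per 1,000 tokens."""
    return calls_per_month * tokens_per_call / 1000 * usd_per_1k_tokens


def monthly_manual_cost(items_per_month: int, minutes_per_item: float, hourly_rate_usd: float) -> float:
    """Estimated cost of a human doing the same triage by hand."""
    return items_per_month * minutes_per_item / 60 * hourly_rate_usd


# Placeholder inputs for illustration only.
api = monthly_api_cost(calls_per_month=2000, tokens_per_call=500, usd_per_1k_tokens=0.01)
manual = monthly_manual_cost(items_per_month=2000, minutes_per_item=2, hourly_rate_usd=50)

print(f"API:    ${api:.2f}")     # API:    $10.00
print(f"Manual: ${manual:.2f}")  # Manual: $3333.33
```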

The model will also hallucinate if you ask it for information it cannot possibly know from the source text. You cannot ask it to determine a lead’s credit score from an email. The system must be designed defensively. It is a text processor, not an oracle. You use it to classify and structure the data you have, not to invent new data.
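One possible guard, offered as a sketch and not as the only approach, is to ask the model for a supporting quote with every extracted field and then discard any field whose quote does not appear in the source text. This assumes you have changed the prompt so each field comes back as an object with `value` and `evidence` keys. That shape, and the helper names, are hypothetical.

```python
import re


def _normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial differences don't matter."""
    return re.sub(r"\s+", " ", text.lower()).strip()


def is_grounded(evidence: str, source: str) -> bool:
    """True if the quoted evidence appears in the source (ignoring case and spacing)."""
    evidence = _normalize(evidence)
    return bool(evidence) and evidence in _normalize(source)


def keep_grounded(extracted: dict, source: str) -> dict:
    """Keep only fields whose 'evidence' quote is actually found in the source text."""
    return {
        field: item["value"]
        for field, item in extracted.items()
        if isinstance(item, dict)
        and "value" in item
        and is_grounded(str(item.get("evidence", "")), source)
    }


email = "I need to buy in the next 30 days, my budget is $800k"
# Assumed model output shape: {"field": {"value": ..., "evidence": "..."}}
extracted = {
    "budget": {"value": 800000, "evidence": "my budget is $800k"},
    "credit_score": {"value": 720, "evidence": "excellent credit"},  # invented
}
print(keep_grounded(extracted, email))  # {'budget': 800000}
```

The check is crude. It proves the quote exists, not that the value was read from it correctly, but it cheaply removes fields the model made up from nothing.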

You must validate its output. If it extracts a phone number, run a logic check to ensure it has the correct number of digits. Never trust the output implicitly.
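As a minimal sketch of that kind of check, the two helpers below validate a phone number and a feature-flag dictionary. Both function names are hypothetical, and the phone check assumes 10-digit US numbers with an optional leading country code of 1. Adjust it for other markets.

```python
import re
from typing import Optional


def normalize_us_phone(raw: str) -> Optional[str]:
    """Return a 10-digit US number, or None if the digit count is wrong."""
    digits = re.sub(r"\D", "", raw)
    if len(digits) == 11 and digits.startswith("1"):
        digits = digits[1:]  # drop the leading country code
    return digits if len(digits) == 10 else None


def validate_feature_flags(result: object, expected_features: list) -> dict:
    """Raise ValueError unless result maps every expected feature to a bool."""
    if not isinstance(result, dict):
        raise ValueError("model output is not a JSON object")
    missing = [f for f in expected_features if f not in result]
    if missing:
        raise ValueError(f"missing features: {missing}")
    bad = [f for f in expected_features if not isinstance(result[f], bool)]
    if bad:
        raise ValueError(f"non-boolean values for: {bad}")
    # Drop any extra keys the model added on its own.
    return {f: result[f] for f in expected_features}


print(normalize_us_phone("(561) 555-0142"))  # 5615550142
print(normalize_us_phone("555-0142"))        # None
print(validate_feature_flags({"pool": False, "HOA": False, "vibe": "great"}, ["pool", "HOA"]))
# {'pool': False, 'HOA': False}
```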

The Vision: An Agent-Specific Intelligence Layer

Parsing descriptions and triaging leads are just entry points. The real objective is to build a centralized reasoning engine that operates across all of an agent’s or brokerage’s data streams. Imagine a system that has read-only access to the local MLS feed, the agent’s calendar, and the CRM’s client notes.

This system can now perform complex, multi-step reasoning. It can monitor the MLS for new listings. When a new property appears, it can parse its features and cross-reference them against the needs of every client in the CRM. If it finds a strong match for a client the agent spoke with last week, it checks the agent’s calendar for open slots and generates a personalized, context-aware outreach message.

For example: “Hi [Client Name]. That 4-bedroom colonial we discussed in the Northwood district just hit the market. It has the fenced-in yard you wanted for the dog. I’m free tomorrow at 2 PM or 4 PM if you’d like to see it.”

This single text message is the result of synthesizing three different data sources, powered by an LLM that can understand the nuanced connection between them. This is not possible with any off-the-shelf real estate software. It requires a custom logic layer, and for the first time, that layer is accessible via a simple API.
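The plumbing around the model is ordinary code. The sketch below shows the matching and drafting steps with deliberately simple stand-ins: exact feature-label matching where a real system might ask the LLM to judge fit, and a string template where the LLM would write the message. The `Client` class, the helper names, and the sample client are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Client:
    name: str
    wanted_features: List[str]  # assumed to come from CRM notes


def match_clients(listing_flags: Dict[str, bool], clients: List[Client]) -> List[Client]:
    """Return clients whose every wanted feature is flagged True on the listing."""
    return [
        c for c in clients
        if c.wanted_features
        and all(listing_flags.get(f) is True for f in c.wanted_features)
    ]


def draft_outreach(client: Client, listing_summary: str, open_slots: List[str]) -> str:
    """Template stand-in for the message an LLM would personalize."""
    features = " and ".join(client.wanted_features)
    slots = " or ".join(open_slots)
    return (
        f"Hi {client.name}. {listing_summary} just hit the market. "
        f"It has the {features} you wanted. "
        f"I'm free {slots} if you'd like to see it."
    )


# Made-up inputs standing in for the CRM, the MLS feed, and the calendar.
clients = [Client("Dana", ["fenced-in yard"]), Client("Lee", ["pool"])]
new_listing = {"fenced-in yard": True, "pool": False}
open_slots = ["tomorrow at 2 PM", "tomorrow at 4 PM"]

for client in match_clients(new_listing, clients):
    print(draft_outreach(client, "A 4-bedroom colonial in the Northwood district", open_slots))
```

Keeping the match and the calendar lookup in plain code, and reserving the model for the parts that need language, makes the system easier to test and cheaper to run.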

Security and Privacy: The Undeniable Risks

Sending client data to a third-party API should set off alarm bells for any competent engineer. You are transmitting potentially sensitive information over the wire to a black box. For initial experiments on non-sensitive data like public MLS descriptions, this risk is manageable. For processing actual client emails and CRM data, it’s a serious concern.

Any production-grade system needs a robust data sanitization step to strip all personally identifiable information (PII) before the API call. The long-term solution will be locally hosted or virtual private cloud models that are fine-tuned on an agency’s own data. But for now, the risk must be acknowledged and mitigated. Do not build a system that forwards raw client emails directly to OpenAI.
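A first pass at that sanitization step can be done with regular expressions, as sketched below. Treat it as a starting point only. The patterns are simplified assumptions that catch common email and US phone formats, and they do nothing about names, street addresses, or account numbers, which need a dedicated PII detection tool. The `redact_pii` name is hypothetical.

```python
import re

# Simplified patterns; they will miss unusual formats.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"(?:\+?1[\s.-]?)?\(?\d{3}\)?[\s.-]?\d{3}[\s.-]?\d{4}")


def redact_pii(text: str) -> str:
    """Replace email addresses and US-style phone numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    text = PHONE_RE.sub("[PHONE]", text)
    return text


message = "Call me at (561) 555-0142 or email jane.doe@example.com about the Northwood house."
print(redact_pii(message))
# Call me at [PHONE] or email [EMAIL] about the Northwood house.
```

Run the redaction before the text leaves your own infrastructure, and keep the original in your own systems so the CRM record stays complete.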

Stop Buying Tools, Start Connecting Data

The last decade of real estate tech was defined by the proliferation of specialized tools. The next decade will be defined by their consolidation. Not by one company building a monolithic platform, but by individual agents and brokerages building their own lightweight, intelligent automation on top of their existing data sources.

The barrier to entry for this kind of automation has collapsed. It no longer requires a team of data scientists and machine learning experts. It requires a developer who can make a REST API call and write a defensive script to handle the response. The power is in the prompt and the workflow you build around it.

Start small. Take your worst, most chaotic data source and see if you can force an LLM to structure it. The initial experiments will prove the value. They will also reveal the limitations. This is not a magic bullet. It is a powerful new type of computational tool that is exceptionally good at manipulating language and structure. And real estate, at its core, is a business built on language.